On May 7, 2026, Spotify launched a beta feature that lets AI agents generate private audio content - called Personal Podcasts - and save it directly to a listener's Spotify library. The announcement marks a structural shift in how audio fits into AI-assisted daily routines, extending Spotify's reach from the streaming platform itself into the agentic software layer that is reshaping how people interact with digital services.

The move is narrow but deliberate. Spotify is not launching a standalone AI product. It is opening a door for agents running in desktop tools (the announcement names OpenClaw, Claude Code, and OpenAI Codex) to deposit audio content into the Spotify ecosystem that users already occupy.

What the feature does and how it works

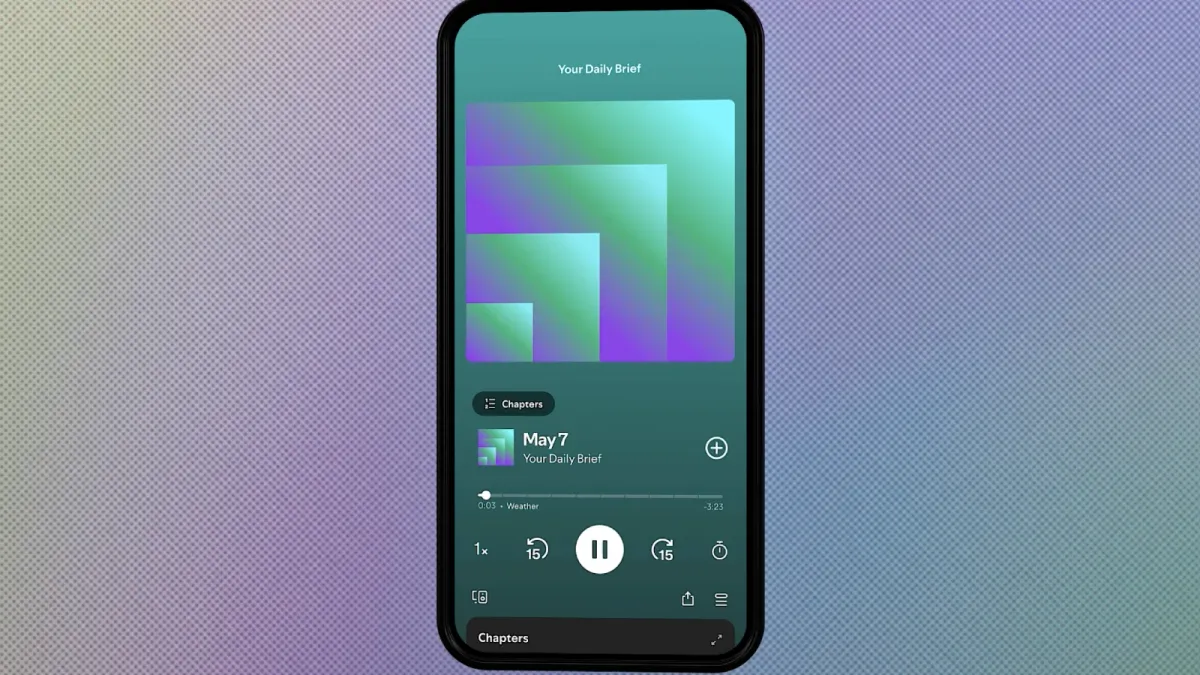

According to Spotify, the feature makes it possible to save and play Personal Podcasts inside the platform. An agent generates a briefing - a daily digest, a summary of class notes, a weekend schedule, or any other structured audio segment - and deposits it into the listener's Spotify library. The content is private, visible only to the account holder, and sits alongside existing subscriptions, playlists, and saved episodes. Because Spotify is available on more than 2,000 devices, the podcast inherits that portability without additional configuration.

The setup process runs through a command-line interface tool. According to Spotify, the steps are: open the Save to Spotify CLI GitHub page on desktop, follow the installation instructions, sign in to Spotify via a browser prompt, and then issue a natural language request to the agent describing the podcast content. The agent handles generation and upload. A link is returned, and the episode becomes available immediately in the listener's library.

The feature is available to eligible Spotify Free and Premium users globally. Spotify noted that usage limits are in place during the beta phase and that some aspects may evolve as the company learns from listener feedback.

The use cases Spotify is targeting

Spotify's announcement describes two broad categories of use. The first is a daily briefing model: a quick morning audio segment that flags key meetings, checks weather, and recommends a podcast for the commute. The second is a structured learning model - a progressive weekly audio series assembled from notes, saved articles, recent searches, and relevant files, with pauses built in at key points for reflection.

Both examples share a design logic. They take content the listener already has in other forms - calendar entries, reading lists, text files - and convert it into audio formatted for passive consumption. This suits Spotify's core distribution advantage: the platform moves with a listener across the day, across devices, and across contexts where reading or screen interaction is impractical.
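The conversion step described above can be illustrated with a minimal sketch. It covers only the text stage: turning structured inputs into a short script written for the ear. The `Meeting` type, the `build_briefing_script` function, and the sample data are hypothetical names chosen for illustration. Narration and upload are left to the agent and the Save to Spotify tool, whose interface the announcement does not detail.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Meeting:
    # Hypothetical calendar entry; start is 24-hour "HH:MM" so that
    # sorting the strings also sorts the meetings chronologically.
    start: str
    title: str


def build_briefing_script(meetings: List[Meeting], weather: str, podcast: str) -> str:
    """Turn structured inputs into short sentences suited to narration."""
    lines = ["Good morning. Here is your briefing."]
    if meetings:
        count = len(meetings)
        noun = "meeting" if count == 1 else "meetings"
        lines.append(f"You have {count} {noun} today.")
        for meeting in sorted(meetings, key=lambda m: m.start):
            lines.append(f"At {meeting.start}, {meeting.title}.")
    else:
        lines.append("You have no meetings today.")
    lines.append(f"The weather: {weather}.")
    lines.append(f"For the commute, try {podcast}.")
    return "\n".join(lines)


if __name__ == "__main__":
    # Sample data is invented for the example.
    script = build_briefing_script(
        [Meeting("14:00", "Budget review"), Meeting("09:30", "Team standup")],
        weather="light rain, 12 degrees",
        podcast="an episode from your saved list",
    )
    print(script)
```

Short, single-idea sentences are a deliberate choice here: text that will be listened to rather than read tends to work better when each line carries one fact.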

The framing is notably different from traditional podcast production. There is no recording session, no editing workflow, no RSS feed, no public distribution. The Personal Podcast exists only for the account holder and is created on demand. According to Spotify, the experience is "seamlessly integrated across the devices you use," placing agent-generated audio on equal footing with any other content in the library.

Agents as a new content creation layer

The beta positions AI agents as a content creation layer sitting above Spotify rather than inside it. Spotify is not building the agent. It is building the target - a stable, authenticated destination where agent output lands. This is architecturally similar to how Spotify has handled other integrations, including its April 23, 2026, integration with Claude, which placed Spotify's personalization engine inside Claude conversations for music and podcast recommendations. In that case, the AI interface was Claude, and Spotify was the content source. In the Personal Podcast beta, the roles partially invert: an agent on any supported desktop tool becomes the content creator, and Spotify becomes the storage and playback destination.

The distinction matters. Spotify is not dependent on a single AI partner for this feature to work. Any agent running Claude Code, OpenClaw, or OpenAI Codex on desktop can use the Save to Spotify CLI tool. That breadth makes this less a bilateral deal and more an infrastructure opening - one that invites a range of AI tools to route audio output into Spotify's ecosystem.

This is consistent with a broader pattern Spotify has been building across 2026. In January, the company lowered Partner Program thresholds and introduced Distribution API integrations with Acast, Audioboom, Libsyn, Omny, and Podigee, making it easier for content created elsewhere to land inside Spotify's monetization framework. The Personal Podcast beta applies a similar logic to AI-generated content, without a monetization layer at this stage.

Spotify's relationship with AI agents runs deeper than this announcement

The May 7 launch does not represent Spotify's first engagement with AI agents - it is the most visible consumer-facing layer of a relationship that runs into the company's core engineering operations.

Spotify disclosed during its Q4 2025 earnings call on February 10, 2026, that its most senior engineers had stopped writing code manually in December 2025. The engineering team operates through an internal system called Honk, built on Anthropic's Claude Code. Co-CEO Gustav Söderström described developers managing the full development cycle from mobile devices during morning commutes, generating and supervising code rather than writing it. That disclosure - covered in detail on PPC Land - established that the Claude integration announced on April 23, 2026, was not Spotify's first operational dependency on Anthropic technology, but the first time that dependency reached the consumer product surface.

The pattern suggests that Spotify's leadership views agentic AI not as a feature category but as infrastructure. The Personal Podcast beta extends that logic outward: if AI agents already run the development process, why not let them run personal content creation too, and route the output into Spotify where users already spend listening time?

What this means for the podcast ecosystem

For the broader podcast industry, the feature introduces a category of audio content that has no precedent in existing distribution models. Personal Podcasts are not public. They do not appear in discovery feeds, search results, or advertiser-facing inventory. They carry no metadata that would surface them in Spotify's recommendation engine or in third-party measurement systems like those operated by Triton Digital or Magellan AI.

That invisibility is intentional - the content is private by design. But it creates a structural gap in how the industry thinks about podcast consumption. Edison Research's Infinite Dial 2026 report, published March 12, 2026, found that 58 percent of Americans aged 12 and older - roughly 167 million people - now listen to podcasts monthly. That figure captures public podcast consumption. It captures nothing about AI-generated private audio that lands in a Spotify library and plays during a commute.

As noted in PPC Land's coverage of the Spotify-Claude integration, the advertising and measurement industries are already grappling with discovery experiences that begin in AI interfaces rather than on the Spotify home screen. Personal Podcasts push that challenge a step further: here, the content itself originates outside the platform and outside any publishing or distribution infrastructure that advertisers or measurement firms currently monitor.

For podcast advertisers, the immediate impact is close to zero - there is no inventory to buy inside a private, agent-generated episode. The longer-term question is whether this category of consumption grows large enough to displace listening time that would otherwise go to ad-supported public podcasts. That is speculative at this stage, but it is a structural dynamic worth watching as the beta scales.

The CLI tool and technical constraints

The Save to Spotify tool is published on GitHub and designed for desktop agent environments. According to Spotify, it is built to work with agents already operating on desktop, specifically Claude Code, OpenClaw, and OpenAI Codex. This restricts the feature to users running desktop agent setups - not casual users opening a mobile app. The CLI-based approach means technical familiarity is a prerequisite, at least during the beta phase.

Spotify has not disclosed the audio format generated by the process, the maximum episode length, or the underlying text-to-speech infrastructure powering the narration. The announcement describes the output as a Personal Podcast deposited to the listener's library, but does not detail the voice model, the audio quality specifications, or whether the listener can choose between multiple voice options. These gaps are consistent with beta-phase launches where the core mechanic is being validated before secondary features are built out.

According to Spotify, the beta will evolve based on listener feedback. That language suggests Spotify is watching for usage patterns before committing to specific technical directions - whether that means supporting mobile agent environments, enabling multi-episode series management, or eventually allowing some form of sharing or controlled access to Personal Podcast content.

Where this fits in the MCP and agent infrastructure picture

The Personal Podcast beta fits inside a wider movement toward AI agents using standardized protocols to interact with platform services. Anthropic introduced the Model Context Protocol as an open standard in November 2024, subsequently donating it to the Linux Foundation. Beehiiv launched a Podcast MCP integration on April 23, 2026, making it the first podcast hosting platform to offer native MCP connectivity - a move that allows creators to query podcast analytics through natural language inside Claude, ChatGPT, or other MCP-compatible clients.

The Save to Spotify CLI is not an MCP server in the formal protocol sense - it is a command-line tool for desktop agents rather than a remotely hosted MCP endpoint. But it reflects the same underlying logic: platforms opening programmatic interfaces that AI agents can call directly. Beehiiv's MCP lets agents read podcast data; Spotify's CLI lets agents write podcast content. Both represent a move from AI as a passive query layer to AI as an active participant in content workflows.

The Beehiiv parallel is instructive for the marketing and media community. As PPC Land noted in its coverage of the Beehiiv MCP launch, the decision to embed into AI interfaces reflects a calculation that platforms must meet operators where they already work. Spotify appears to be making a similar calculation: listeners are already using agents to organize their days, and some of those agents are capable of generating audio. Rather than let that audio exist only as a text summary or a file outside the platform, Spotify is offering a destination.

Spotify's platform context at the time of launch

Spotify reported Q1 2026 results on April 29, 2026, showing 761 million monthly active users and 293 million Premium subscribers. Biddable programmatic advertising exceeded one-third of ad-supported revenue - a milestone for a company that was still building its programmatic infrastructure when it launched the Spotify Ad Exchange in April 2025. The company's investor day is scheduled for May 21, 2026, in New York, where longer-term strategic priorities are expected to be detailed.

The Personal Podcast beta launches in that context: a platform with substantial scale, an accelerating advertising business, and a leadership team publicly committed to AI as a development philosophy rather than a feature add-on. The feature is not, by itself, a revenue mechanism. But it establishes Spotify as a viable destination for AI-generated audio in a way that no other major streaming platform has done publicly.

Timeline

- November 2024: Anthropic introduces the Model Context Protocol as an open standard for connecting AI systems to external services; later donated to the Linux Foundation

- January 2, 2025: Spotify launches the Partner Program, introducing dual revenue streams combining Premium subscriber engagement payouts and advertising income

- May 2025: Anthropic launches the Claude third-party Integrations framework with ten initial services

- November 2025: Spotify expands Partner Program to additional markets

- December 2025: Spotify engineers transition to Claude Code-based development via internal system Honk, ceasing manual coding

- January 7, 2026: Spotify lowers Partner Program thresholds and launches Distribution API with Acast, Audioboom, Libsyn, Omny, and Podigee

- January 22, 2026: Spotify extends Prompted Playlist to US and Canada Premium subscribers

- February 10, 2026: Spotify Q4 2025 earnings reveal senior engineers have not written code manually since December; 751 million MAU reported

- March 12, 2026: Edison Research Infinite Dial 2026 reports 58 percent of Americans - 167 million people - listening to podcasts monthly

- March 24, 2026: Beehiiv launches newsletter MCP integration across Claude, ChatGPT, Gemini, and Perplexity

- April 23, 2026: Spotify launches direct integration with Claude, enabling personalized music and podcast recommendations through conversation for Free and Premium users globally

- April 23, 2026: Beehiiv launches Podcast MCP, making it the first podcast host to offer native MCP connectivity

- April 29, 2026: Spotify Q1 2026 results: 761 million MAU, 293 million Premium subscribers, biddable programmatic exceeding one-third of ad-supported revenue

- May 7, 2026: Spotify announces Save to Spotify beta tool, enabling AI agents running Claude Code, OpenClaw, or OpenAI Codex to generate and deposit Personal Podcasts into Spotify libraries

Summary

Who: Spotify, the Stockholm-based audio streaming company with 761 million monthly active users and 293 million Premium subscribers as of Q1 2026, announced the feature. The intended users are people already operating desktop AI agents running Claude Code, OpenClaw, or OpenAI Codex.

What: Spotify launched a beta CLI tool called Save to Spotify, available on GitHub, that allows AI agents on desktop to generate private audio episodes - called Personal Podcasts - and save them directly to a listener's Spotify library. The content is private, not publicly distributed, and inherits Spotify's cross-device availability across more than 2,000 devices. The feature is available to eligible Free and Premium users globally, with usage limits in place during the beta.

When: The announcement was made on May 7, 2026.

Where: The tool operates on desktop environments and routes content into Spotify's existing library infrastructure, available globally to eligible users. Setup is initiated from the Save to Spotify CLI GitHub page.

Why: Listeners are already using AI agents to create structured audio content - daily briefings, study guides, schedule summaries. Spotify is providing a stable, cross-device destination for that content rather than leaving it in formats outside its ecosystem. The move extends Spotify's existing strategy of widening the range of content and creator tools that route into its platform, and fits within a broader company orientation toward agentic AI that includes Spotify's own engineering operations running on Anthropic's Claude Code since December 2025.