Google announced on April 29, 2026, a new feature for Google Photos that uses artificial intelligence to automatically scan a user's photo library, extract the clothing items that appear across those images, and assemble them into a dedicated digital wardrobe. The feature is scheduled to begin rolling out during the summer of 2026, first on Android and then on iOS, according to Tommy Meaney, Senior Product Manager at Google Photos.

The announcement comes after nearly two years in which Google has expanded AI-powered visual tools across its product line - from Google Lens shopping integrations to virtual try-on inside AI Mode in Search and, most recently, Circle to Search gaining the ability to identify every item in a complete outfit at once. The Google Photos wardrobe feature is distinct from all of those because it operates entirely on clothing the user already owns, not on items for sale elsewhere.

How the AI wardrobe cataloging works

According to the announcement, the wardrobe feature constructs its catalog by analyzing the photos already stored in a user's Google Photos library. The AI identifies clothing items visible in those images and classifies them by category. The categories listed in the announcement include jewelry, tops, and bottoms - suggesting the classification system covers a broad range of garment types rather than a narrow subset.

Once the library has been processed, the resulting collection becomes browsable by category. A user can filter to see all tops together, all bottoms together, or all jewelry, rather than only having access to images organized by date or location as the current interface provides. The intended effect, according to the announcement, is that items buried deep in a photo archive become discoverable again, even if they appear in a photo taken years earlier.
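The category browsing described above can be pictured with a minimal Python sketch. Everything in it is an assumption made for illustration: the `ClothingItem` record, its field names, and the sample file names are hypothetical, and nothing here reflects Google's actual data model, which the announcement does not describe.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class ClothingItem:
    # Hypothetical record for one detected garment; not Google's schema.
    item_id: str
    category: str      # e.g. "tops", "bottoms", "jewelry"
    source_photo: str  # photo the item was detected in
    taken_on: date


def group_by_category(items):
    """Return a dict mapping each category to its items, newest photo first."""
    grouped = defaultdict(list)
    for item in items:
        grouped[item.category].append(item)
    for bucket in grouped.values():
        bucket.sort(key=lambda i: i.taken_on, reverse=True)
    return dict(grouped)


# Made-up sample data standing in for the output of the library scan.
catalog = [
    ClothingItem("a1", "tops", "IMG_0001.jpg", date(2019, 6, 2)),
    ClothingItem("b7", "bottoms", "IMG_0412.jpg", date(2022, 3, 14)),
    ClothingItem("c3", "tops", "IMG_0977.jpg", date(2024, 8, 30)),
    ClothingItem("d9", "jewelry", "IMG_0412.jpg", date(2022, 3, 14)),
]

wardrobe = group_by_category(catalog)
tops = [item.item_id for item in wardrobe["tops"]]
```

Filtering to `wardrobe["tops"]` returns every top regardless of when its photo was taken, which is the shift from date-based to category-based browsing that the announcement describes.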

The depth of that library scan is notable. Google Photos is one of the most widely used photo storage services globally and commonly holds libraries spanning many years. For users who have stored thousands or tens of thousands of photos, the AI will be processing a substantial corpus of images to identify clothing. The announcement did not specify how the system handles cases where the same garment appears across many different photos, nor whether it attempts to deduplicate or consolidate identical items into a single entry in the wardrobe collection.
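To make the open deduplication question concrete, here is a hedged sketch of what consolidation could look like if it were implemented. The `Detection` record and its `garment_key` field are hypothetical, and the sketch simply assumes a stable key for "the same garment" already exists. Producing such a key from images is the hard part, and the announcement says nothing about whether or how Google does it.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Detection:
    # Hypothetical: one sighting of a garment in one photo.
    garment_key: str  # assumed stable identifier for "the same garment"
    category: str
    photo: str


def consolidate(detections):
    """Collapse sightings that share a garment_key into one wardrobe entry."""
    entries = {}
    for d in detections:
        entry = entries.setdefault(
            d.garment_key, {"category": d.category, "photos": []}
        )
        entry["photos"].append(d.photo)
    return entries


# Made-up sample data: the same jacket appears in two different photos.
sightings = [
    Detection("blue-denim-jacket", "tops", "IMG_0101.jpg"),
    Detection("blue-denim-jacket", "tops", "IMG_0555.jpg"),
    Detection("gold-hoop-earrings", "jewelry", "IMG_0555.jpg"),
]

entries = consolidate(sightings)
```

Without a step of this kind, a jacket photographed on fifty occasions would show up as fifty separate wardrobe entries, which is why the unanswered question matters for large libraries.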

Outfit creation and moodboards

Beyond cataloging, the feature introduces a tool for mixing and matching items to create outfits. According to the announcement, users can select individual pieces from their wardrobe collection and combine them into proposed outfits. Those outfits can then be saved on a digital moodboard or shared with other people.

The moodboard functionality supports multiple separate boards for different occasions. The announcement gives three concrete examples of the use case: summer weddings, a trip to Italy, and work outfits. Each board can hold a different configuration of items drawn from the same underlying wardrobe collection. The ability to maintain separate boards by occasion suggests the system is designed to handle wardrobe planning across different contexts simultaneously, rather than producing a single list of outfit combinations.
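One way to picture several boards drawing on a single wardrobe is for each board to hold references to items rather than copies of them. The sketch below is illustrative only: the item IDs, garment descriptions, and helper functions are invented, and the announcement does not say how boards are stored.

```python
# Hypothetical wardrobe: item ID -> description.
wardrobe = {
    "a1": "linen shirt",
    "b7": "navy trousers",
    "c3": "silk dress",
    "d9": "gold earrings",
}

# Each board stores outfits as tuples of item IDs, not copies of the items,
# so the same garment can appear on any number of boards.
boards = {
    "summer weddings": [("c3", "d9")],
    "work outfits": [("a1", "b7"), ("a1", "b7", "d9")],
}


def boards_using(item_id, boards):
    """Return the sorted names of boards with an outfit containing item_id."""
    return sorted(
        name
        for name, outfits in boards.items()
        if any(item_id in outfit for outfit in outfits)
    )


def describe(outfit, wardrobe):
    """Resolve an outfit's item IDs to their wardrobe descriptions."""
    return [wardrobe[item_id] for item_id in outfit]


earring_boards = boards_using("d9", boards)
wedding_outfit = describe(boards["summer weddings"][0], wardrobe)
```

Because boards only reference item IDs, the earrings can sit on both the wedding board and the work board without being duplicated in the underlying collection.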

Sharing outfits directly from the moodboard feature implies the results can be exported or sent to others, though the announcement did not say which channels or applications would support that sharing.

Virtual try-on directly from the wardrobe

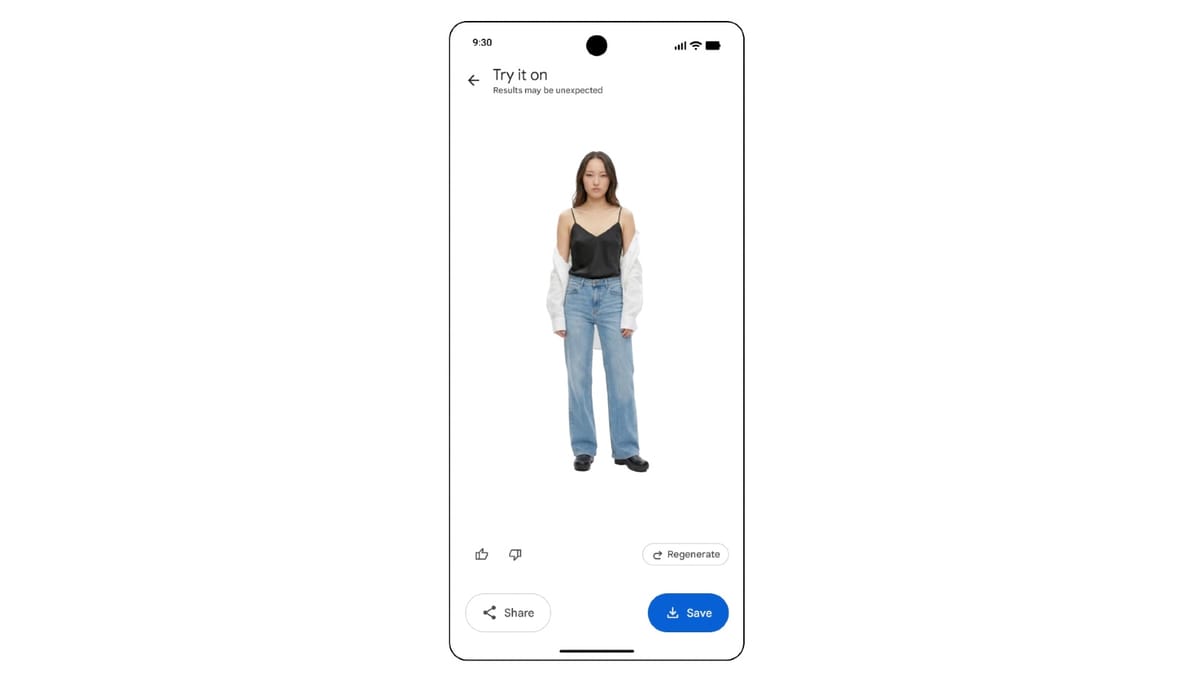

The third capability described in the announcement is virtual try-on. Once a user has selected individual pieces to form an outfit within the feature, they can tap "Try it on" to generate a preview of how the combination would look when worn. The preview lets the user see the proposed outfit before physically putting it on.

The announcement does not specify the technical architecture behind this rendering. It is not stated whether the try-on preview uses the user's own image as a base, a generated representation, or another method. The announcement also does not describe which photo or image the system uses as the reference body for the preview, nor whether the try-on result is a static image or an interactive visualization.

What distinguishes this from the virtual try-on Google has already deployed in other contexts is the source of the clothing. The virtual try-on feature inside AI Mode in Search, which launched in May 2025, was designed for apparel pulled from the Shopping Graph - a database of more than 50 billion product listings from retailers worldwide. That feature allowed users to see how clothes available for purchase would look on them. The new Google Photos wardrobe feature, by contrast, operates on clothing the user already possesses, sourcing garment data from their own photo history rather than from any external retail inventory.

The broader context: Google's visual AI push since 2024

Google has been systematically building out visual AI capabilities across its product suite for roughly two years. By October 2024, Google Lens was handling nearly 20 billion visual searches per month, 20 percent of them shopping-related. That same month, Google introduced Shopping ads directly into the Lens interface. In May 2025, virtual try-on became available in Search Labs, allowing users to upload personal photographs and see retailer apparel rendered onto their own image within moments. Even at that stage, the system extended across the entire Shopping Graph inventory.

The April 27, 2026, update to Circle to Search on Pixel 10 marked another step. That update introduced multi-object recognition - a capability called Find the Look - that allows the feature to identify every item within a photographed outfit simultaneously rather than one at a time. Virtual try-on is integrated at the end of that workflow, meaning a user can go from spotting a complete outfit in an image to evaluating an individual garment on their own body in fewer steps than before.

PPC Land's 2026 analysis of Google retail advertising noted that Merchant Center product data now supplies information to a much broader set of Google surfaces than it was originally designed for - including free listings, the Gemini shopping experience, AI Mode, virtual try-on in Google Lens, Business Agent, and brand profiles. The Google Photos wardrobe feature is positioned differently from those surfaces because it does not connect directly to retail inventory. However, the underlying image analysis and garment classification technology draws from the same general capability set that Google has been developing and deploying across its shopping and search interfaces.

What the feature does not address

The announcement, published on The Keyword blog, is notably brief, and several technical questions remain unanswered. It does not describe how the system handles clothing that only partially appears in a photograph - for instance, a shirt visible only from the shoulders up, or trousers photographed from the knee down. Nor does it say whether items from formal occasions are handled differently from those worn in casual settings.

The announcement is also silent on data handling. Google Photos already processes user images with AI for features including automatic scene detection, face grouping, and object recognition. The wardrobe feature extends that processing specifically to clothing classification. The announcement references Google's privacy policy for the newsletter subscription section of the page, but provides no specific language about how wardrobe data is stored, whether it is used for any purpose beyond the feature itself, or how users can delete wardrobe catalog data they no longer want retained.

No information was provided about whether the feature will be available across all Google Photos subscription tiers or whether it will be limited to users on paid Google One plans. Google Photos offers free storage up to 15 gigabytes shared across Google services, with paid tiers starting at 100 gigabytes. The announcement does not address this distinction.

Rollout schedule and platform availability

According to the announcement, the feature will begin rolling out in Google Photos during the summer of 2026. Android users will receive access first, with iOS following at a later stage. No specific months were given for either platform. No information was provided about geographic availability or whether the feature will launch globally or in specific markets first.

The Android-first sequencing is consistent with Google's typical approach for new Google Photos features. Google develops Android, and new features generally reach that platform before iOS, though the gap between the two rollouts varies by feature. The announcement does not indicate how long after the Android launch the iOS version is expected to follow.

Why this matters for the marketing community

From a marketing perspective, a Google Photos feature that catalogs owned clothing and enables virtual try-on operates on a different layer of consumer behavior than shopping discovery tools. It places Google's AI at a point before any purchase decision is in play: the process of deciding what to wear from clothing already in the wardrobe. Historically, Google had no presence at that moment.

If the feature is widely adopted, Google gains a new category of behavioral signal: which garments users actively consider wearing, how frequently, and in what combinations. Whether or how that data connects to advertising infrastructure is not addressed in the announcement. But the pattern is consistent with Google's broader strategy of extending AI capabilities into daily user behaviors that generate useful product and preference signals.

The Circle to Search update on Pixel 10 already illustrated the commercial relevance of connecting outfit discovery to shoppable inventory. The Google Photos wardrobe feature covers the complementary scenario: once users have a clear view of what they already own, the path to purchasing missing items or replacements becomes shorter. Whether Google connects those two surfaces - owned wardrobe and shoppable inventory - is a question the announcement does not answer but that the design logic raises.

Fashion and apparel retailers who rely on Google's visual shopping surfaces should note that a personal wardrobe tool within Google Photos strengthens the platform's relevance to consumers across the full clothing decision cycle, not only at the discovery or purchase stage.

Timeline

- October 3, 2024: Google introduces Shopping ads in Google Lens as visual searches on the platform reach nearly 20 billion per month, with 20 percent being shopping-related.

- May 20, 2025: Google launches virtual try-on in AI Mode via Search Labs, allowing users to upload personal photographs to preview retailer apparel across the Shopping Graph's more than 50 billion product listings.

- April 27, 2026: Circle to Search on Pixel 10 gains Find the Look, a multi-object recognition capability allowing users to identify and search for every item in an outfit simultaneously, with virtual try-on integrated at the end of the workflow.

- April 29, 2026: Google announces the Google Photos wardrobe feature, which uses AI to scan a user's photo library, classify clothing by category, enable outfit creation and moodboard saving, and offer virtual try-on of owned garments. Rollout is scheduled for summer 2026, Android first.

Summary

Who: Google, announced by Tommy Meaney, Senior Product Manager at Google Photos.

What: A new AI-powered wardrobe feature for Google Photos that automatically scans a user's existing photo library to catalog their clothing by category, allows outfit combinations to be saved on digital moodboards for different occasions, and enables virtual try-on of selected outfits before getting dressed.

When: Announced on April 29, 2026. The feature is scheduled to roll out during the summer of 2026, first on Android and then on iOS.

Where: The feature will be available within the Google Photos application on Android and iOS.

Why: Google is extending AI-powered visual analysis into a new use case - the personal wardrobe - drawing on the same image recognition and garment classification capabilities that underpin its shopping and search tools. The feature is designed to make it easier for users to see what clothing they own, plan outfits for specific occasions, and evaluate how combinations will look without physically trying them on. For Google, the wardrobe sits at a stage in consumer behavior - deciding what to wear from owned clothing - where the company previously had no presence.