An updated working paper by researchers at Rutgers Business School and The Wharton School of the University of Pennsylvania today finds that news publishers who blocked large language model crawlers via the robots.txt standard lost roughly 7% of weekly website traffic within six weeks of blocking - and that the decline appears in human browsing data, not just automated bot metrics.

The paper, titled "Strategic Response of News Publishers to Generative AI," was written by Hangcheng Zhao of Rutgers Business School and Ron Berman of The Wharton School. The latest version is dated April 15, 2026, and was last revised on April 21, 2026, on SSRN. It draws on data from SimilarWeb, Semrush, Comscore, the HTTP Archive, the Internet Archive's Wayback Machine, and Revelio Labs job posting records. The core empirical window covers November 2022 - the month OpenAI launched ChatGPT - through May 2024, stopping just before Google introduced AI Overviews in search results, which the authors treat as a separate event that would otherwise contaminate the analysis.

What the study measured

The dataset underpinning the study is unusual in its breadth. The authors constructed a publisher panel covering 30 newspaper domains - selected from 6,315 URLs that appear in both the SimilarWeb dataset and Revelio's employer-linked job postings - and then expanded certain analyses to the top 500 news-publisher domains by Comscore traffic. Across the 30 core publishers, aggregate SimilarWeb traffic ranged from 448,320 daily visits at the median to over 20 million daily visits at the upper bound. CNN and The New York Times each recorded roughly 6.7 to 6.8 billion visits in 2023 in the SimilarWeb data. The BBC registered 5.83 billion. The Wall Street Journal recorded 940 million.

The study covers three independently constructed traffic measures. SimilarWeb provides daily domain-level estimates of total worldwide visits from January 2019 through February 2026. Semrush supplies channel-level breakdowns - direct, organic search, referral, social - for both desktop and mobile devices, worldwide. The Comscore Web-Behavior Panel is a household-level browsing panel tracking U.S. desktop activity from 2022 through 2024, filtered to active panelists with at least four monthly sessions per calendar year. Using three sources rather than one is a methodological strength: if the same negative effect appears across all three, the risk of a spurious result is substantially lower.

How publishers responded to generative AI

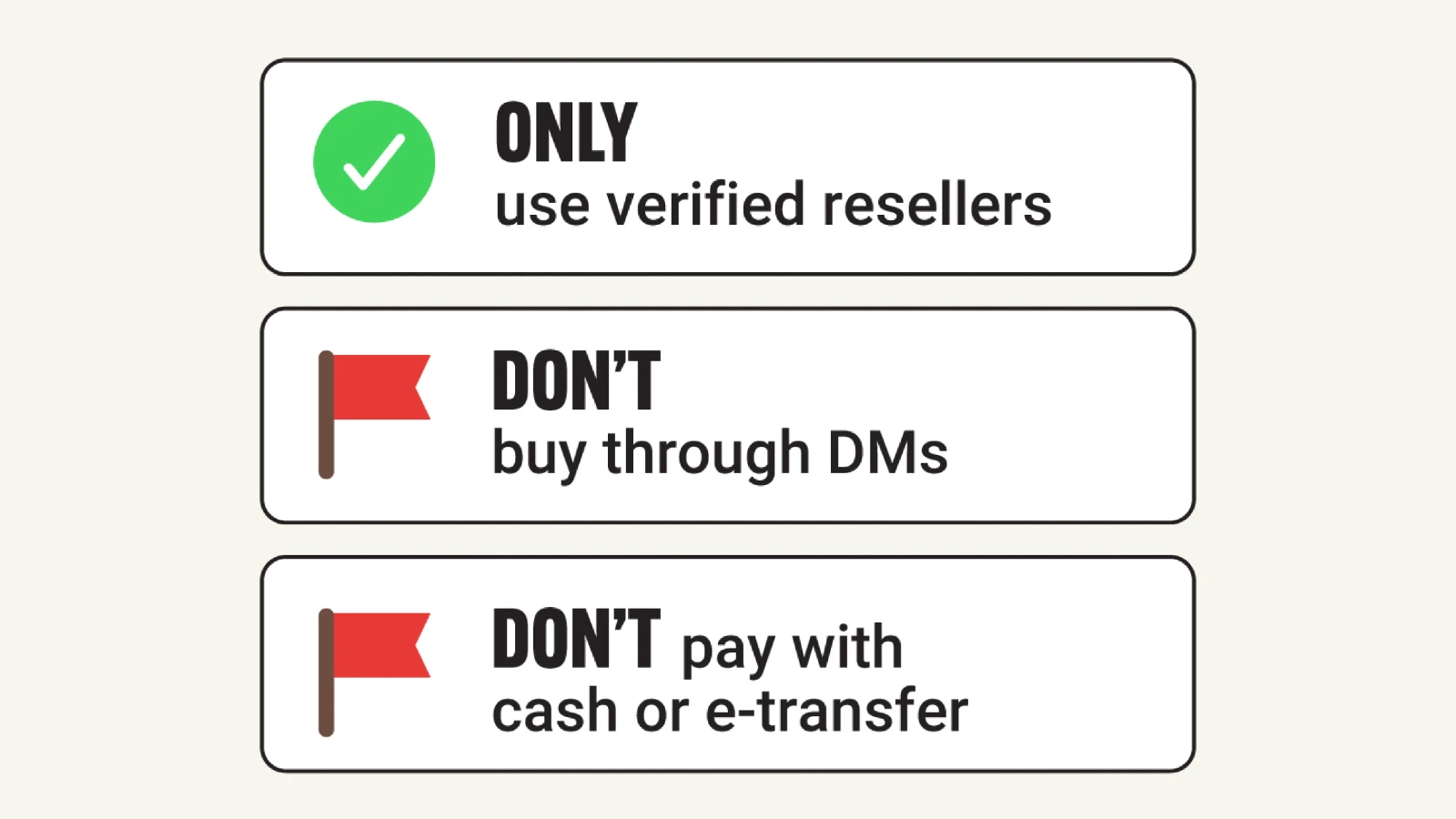

According to the paper, the most common publisher reaction to the growth of LLMs was to issue robots.txt directives blocking AI crawlers. This protocol, originally designed to communicate crawling preferences to search engines, provides a Disallow rule that tells a bot not to access certain paths or the entire site. Roughly 75% of the 30 top publishers in the study's sample blocked at least one major generative AI crawler at some point. News publishers adopted these blocks at substantially higher rates than non-news domains in the information sector and far higher than the retail sector, where the blocking ratio among top retailers remained below 10%.

Blocking adoption began in mid-2023 and followed a staggered pattern. OpenAI-related crawlers were targeted first. Crawlers from Anthropic, Perplexity, and others followed. The study identifies each publisher's first blocking event using historical robots.txt snapshots from the HTTP Archive, which tracks website structure changes over time. By the time the blocking wave peaked, the blocking ratio among the top 500 news publishing websites had climbed above 60%, compared to a rate below 10% among top retail domains.

Among the specific crawlers targeted, the study identifies GPTBot and ChatGPT-User from OpenAI, ClaudeBot and Claude-User from Anthropic, PerplexityBot from Perplexity, Google-Extended from Google, and ByteSpider from ByteDance, among others. Each has a distinct function: some gather training data, others handle user-triggered retrieval, and others serve search or browsing features.
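As a rough illustration of how a first blocking event could be located in dated robots.txt snapshots, the sketch below uses Python's standard-library `urllib.robotparser`. The snapshot contents, the dates, and the helper names `blocked_agents` and `first_block_date` are hypothetical and are not taken from the paper or the HTTP Archive. Treating a crawler as blocked only when it cannot fetch the site root is also a simplifying assumption.

```python
from datetime import date
from typing import Optional
from urllib.robotparser import RobotFileParser

# User-agent tokens named in the article; the study's own list is longer.
AI_CRAWLERS = ["GPTBot", "ChatGPT-User", "ClaudeBot", "Claude-User",
               "PerplexityBot", "Google-Extended", "ByteSpider"]


def blocked_agents(robots_txt: str) -> list:
    """Return the AI crawlers that may not fetch the site root."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [agent for agent in AI_CRAWLERS if not parser.can_fetch(agent, "/")]


def first_block_date(snapshots: dict) -> Optional[date]:
    """Return the earliest snapshot date on which any AI crawler is blocked."""
    for day in sorted(snapshots):
        if blocked_agents(snapshots[day]):
            return day
    return None


# Hypothetical snapshots for one publisher (illustrative only).
snapshots = {
    date(2023, 6, 1): "User-agent: *\nDisallow: /private/\n",
    date(2023, 8, 15): (
        "User-agent: *\nDisallow: /private/\n\n"
        "User-agent: GPTBot\nDisallow: /\n"
    ),
}

first = first_block_date(snapshots)
print(first, blocked_agents(snapshots[first]))
```

A production pipeline would also need to handle partial-path blocks, wildcard rules, and missing snapshots, none of which this sketch attempts.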

The traffic cost of blocking

The central empirical result is expressed as an Average Treatment Effect on the Treated (ATT), estimated with a staggered difference-in-differences method following Callaway and Sant'Anna (2021). The procedure compares publishers that block in a given week against publishers not yet blocking and publishers that never block, tracking the evolution of log weekly visits in the 12 weeks before and 6 weeks after the blocking event. Pre-blocking coefficients are statistically indistinguishable from zero across all three datasets, which is consistent with the parallel trends assumption required for causal identification.
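The core difference-in-differences logic can be sketched in its simplest two-group, two-period form. This is not the staggered Callaway and Sant'Anna estimator the authors use, which aggregates many such comparisons across blocking cohorts. The function name `did_att` and all visit counts below are made up for illustration.

```python
import math
from statistics import mean


def did_att(treated_pre, treated_post, control_pre, control_post):
    """2x2 difference-in-differences on mean log weekly visits."""
    def log_mean(visits):
        return mean([math.log(v) for v in visits])

    treated_change = log_mean(treated_post) - log_mean(treated_pre)
    control_change = log_mean(control_post) - log_mean(control_pre)
    # The control group's change stands in for what the treated group
    # would have experienced without blocking (parallel trends).
    return treated_change - control_change


# Hypothetical weekly visits: 12 weeks before and 6 weeks after blocking.
treated_pre = [1_000_000] * 12
treated_post = [950_000] * 6      # blocker falls 5%
control_pre = [800_000] * 12
control_post = [816_000] * 6      # non-blocker grows 2%

att = did_att(treated_pre, treated_post, control_pre, control_post)
print(f"ATT in log points: {att:.3f}")
```

The estimate is expressed in log points, the same unit as the ATT figures reported in the paper.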

After blocking, traffic falls. The SimilarWeb ATT estimate is -0.074 (statistically significant at the 1% level, 95% confidence interval of -0.141 to -0.007). The Semrush ATT is -0.069 (significant at the 10% level, confidence interval of -0.145 to 0.007). The Comscore ATT is -0.065 (a 95% confidence interval of -0.150 to 0.021, not statistically significant, likely reflecting the smaller panel). Translated from logarithms, these figures represent an approximate 7% decline in weekly visits within six weeks of blocking across all three data sources.
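The translation from log points to percentages follows the standard identity: a coefficient b on log visits corresponds to a change of (exp(b) - 1) x 100 percent. A small sketch applying it to the three point estimates quoted above, where the function name `log_points_to_percent` is an illustrative choice:

```python
import math


def log_points_to_percent(att: float) -> float:
    """Convert a log-point effect into a percentage change."""
    return (math.exp(att) - 1) * 100


# Point estimates quoted in the article.
estimates = {"SimilarWeb": -0.074, "Semrush": -0.069, "Comscore": -0.065}

for source, att in estimates.items():
    print(f"{source}: {log_points_to_percent(att):.1f}%")
```

On these inputs the conversion gives declines of roughly 6.3% to 7.1%, in line with the approximate 7% figure.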

The Comscore result is particularly meaningful because that dataset tracks actual human household browsing behavior, not aggregate server-side traffic estimates. A traffic decline measured in human panel data cannot be explained away as the mechanical removal of bot traffic from the overall count. The authors also run the analysis using Synthetic DiD and Two-Way Fixed Effects as robustness checks, and report negative estimates across both methods and all three traffic sources.

Three placebo exercises test whether the observed pattern could be a coincidental artifact. The first re-randomizes treatment timing for actual blockers. The second assigns fake blocking dates to publishers that never blocked. The third uses pre-blocking observations from eventual blockers alongside the never-blockers as controls. In all three designs, the placebo distributions center near zero, and none of the 50 random draws per exercise produces an ATT more negative than the baseline estimate. The study also verifies that the robots.txt changes in question were specific to AI crawler directives: of 24 publishers that blocked, 19 made no other simultaneous changes, and the remaining five made only minor modifications unrelated to search-engine crawlers.
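The second placebo design, which assigns fake blocking dates to publishers that never blocked, can be sketched roughly as follows. The panel here is simulated noise around a constant log-traffic level rather than real data. The sketch also simplifies by giving every fake-treated publisher the same date within a draw and by using a plain two-group comparison instead of the staggered estimator. All names and parameters are assumptions.

```python
import random
from statistics import mean

PRE_WEEKS, POST_WEEKS = 12, 6
BASELINE_ATT = -0.074  # SimilarWeb estimate quoted in the article


def window_change(series, event_week):
    """Mean log visits after the event minus mean log visits before it."""
    pre = series[event_week - PRE_WEEKS:event_week]
    post = series[event_week:event_week + POST_WEEKS]
    return mean(post) - mean(pre)


def placebo_att(panel, rng):
    """One placebo draw: random fake date, random fake-treated half."""
    names = sorted(panel)
    n_weeks = len(panel[names[0]])
    event_week = rng.randrange(PRE_WEEKS, n_weeks - POST_WEEKS + 1)
    fake_treated = set(rng.sample(names, len(names) // 2))
    treated = mean([window_change(panel[n], event_week)
                    for n in names if n in fake_treated])
    control = mean([window_change(panel[n], event_week)
                    for n in names if n not in fake_treated])
    return treated - control


# Simulated never-blockers: 10 publishers, 60 weeks of log visits (made up).
rng = random.Random(0)
panel = {f"publisher_{i}": [13.0 + rng.gauss(0, 0.02) for _ in range(60)]
         for i in range(10)}

draws = [placebo_att(panel, rng) for _ in range(50)]
more_negative = sum(d < BASELINE_ATT for d in draws)
print(f"mean placebo ATT: {mean(draws):+.4f}; "
      f"draws below baseline: {more_negative}/50")
```

Because no publisher in the simulated panel is actually treated, the placebo estimates should scatter around zero, which is the pattern the authors report for their real placebo runs.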

Larger publishers bear the effect most clearly. For the top 50 publishers by Semrush traffic rank, the ATT is -0.069 (significant at 10%). For publishers ranked 51 to 100, the estimate is -0.034 but no longer significant. For publishers ranked 101 to 500, the point estimate turns positive. A similar gradient appears in the Comscore data, stratified by average daily visits.

Why blocking appears to reduce human traffic

The paper proposes two channels. The more likely one is reduced brand exposure: when a publisher blocks a generative AI crawler, the LLM stops including that publisher as a source in query responses and AI-powered summaries. Consumers who would have encountered the brand through an AI-generated answer instead encounter a competitor or no reference at all. Direct visits then decline because brand recall weakens. The secondary channel is the loss of actual referral clicks from LLM citations and links. The authors consider this less likely to be dominant given that AI-driven referral traffic was still small before May 2024.

Consistent with the brand-exposure hypothesis, the study documents that the pre-May 2024 traffic decline is concentrated in direct visits, not organic search referrals. After Google introduced AI Overviews in May 2024 and rolled out a core algorithm update in August 2024, both channels decline simultaneously. PPC Land has reported extensively on the AI Overviews impact, including Ahrefs data showing a 58% reduction in click-through rates for position-one content, and NewzDash analysis showing Google Web Search traffic to news publishers fell from 51% of referrals in 2023 to 27% by the fourth quarter of 2025.

The study deliberately excludes the post-May 2024 period from its main causal analysis, because the blocking adoption was concentrated in mid-to-late 2023 and the AI Overviews rollout would conflate two separate effects.

Publishers shifted format, not volume

Beyond access control, the study examines two other dimensions of publisher response: content format and hiring.

On content format, the authors use webpage composition data from the HTTP Archive, counting HTML elements by functional category and benchmarking against the top 100 retail domains, which have broadly similar total traffic levels. The retail comparison helps separate publisher-specific strategic shifts from general web development trends.

The results are striking. Interactive elements - buttons, input fields, forms, textareas - increased 68.1% relative to retail websites in the post-November 2022 period. Advertising and targeting technologies, including third-party JavaScript and embedded iframes, increased 50.1%. Site framework and layout components rose 70.2%. Article volume, measured by the count of article and section HTML tags, decreased 31.2%. The number of unique text and HTML URLs, tracked via the Internet Archive's Wayback Machine, shows a moderate decline, while image-based URLs grew substantially.
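A minimal sketch of counting HTML elements by functional category, using Python's standard-library `html.parser`. The category definitions below are a simplified guess based on the tags named in this article, not the paper's actual taxonomy, and the sample page and hostnames are invented.

```python
from collections import Counter
from html.parser import HTMLParser
from urllib.parse import urlparse

INTERACTIVE = {"button", "input", "form", "textarea"}
ARTICLE = {"article", "section"}


class ElementCounter(HTMLParser):
    """Tally start tags into rough functional categories."""

    def __init__(self, page_host):
        super().__init__()
        self.page_host = page_host
        self.counts = Counter()

    def handle_starttag(self, tag, attrs):
        if tag in INTERACTIVE:
            self.counts["interactive"] += 1
        elif tag in ARTICLE:
            self.counts["article"] += 1
        elif tag == "iframe":
            self.counts["ads_targeting"] += 1
        elif tag == "script":
            # Count only scripts loaded from a different host (third party).
            host = urlparse(dict(attrs).get("src") or "").netloc
            if host and host != self.page_host:
                self.counts["ads_targeting"] += 1


# Invented sample page for a hypothetical publisher at news.example.com.
SAMPLE_HTML = """
<html><body>
<article><section><p>Story</p></section></article>
<form><input type="text"><button>Go</button></form>
<script src="https://ads.example.net/tag.js"></script>
<script src="/static/app.js"></script>
<iframe src="https://video.example.org/embed"></iframe>
</body></html>
"""

counter = ElementCounter(page_host="news.example.com")
counter.feed(SAMPLE_HTML)
print(dict(counter.counts))
```

Running the same counter over publisher and retail pages, before and after November 2022, would give the raw counts behind a relative comparison like the one the authors describe.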

According to the paper, publishers that increased their use of responsive image elements showed modestly better traffic trajectories, with a correlation coefficient of r = 0.37 (p = 0.045) between per-publisher trends in log traffic and responsive image element counts. The association for video or aggregate multimedia elements was positive but noisier (r = 0.27, p = 0.14).
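To see why r = 0.37 clears the conventional 5% threshold while r = 0.27 does not, a correlation can be converted to a t-statistic with n - 2 degrees of freedom. The sketch below assumes n = 30, matching the core publisher panel. The article does not state the sample size behind these correlations, so that value is an assumption.

```python
import math

N_PUBLISHERS = 30        # assumption: the 30-publisher core panel
T_CRITICAL_5PCT = 2.048  # two-sided 5% critical value for 28 degrees of freedom


def correlation_t_stat(r: float, n: int) -> float:
    """t-statistic for testing a Pearson correlation against zero."""
    return r * math.sqrt(n - 2) / math.sqrt(1 - r * r)


for label, r in [("responsive images", 0.37), ("video/multimedia", 0.27)]:
    t = correlation_t_stat(r, N_PUBLISHERS)
    verdict = "significant" if abs(t) > T_CRITICAL_5PCT else "not significant"
    print(f"{label}: t = {t:.2f} ({verdict} at 5%)")
```

Under that assumption the first correlation gives a t-statistic just above the critical value and the second falls below it, consistent with the reported p-values.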

The interpretation the authors advance is one of differentiation: investing in formats that large language models cannot easily replicate. Visual content, interactive features, and embedded video require manipulation before an LLM can reproduce them, and such manipulation tends to produce outputs that draw negative consumer response. By emphasizing these formats, publishers can maintain audience appeal even if AI systems draw on their text.

Newsroom hiring did not contract

A third hypothesis the study examines is whether publishers reduced editorial headcount as generative AI reduced the cost of content production. The authors use Revelio Labs employer-linked job postings, classified monthly by occupation category (editorial and content-production roles vs. all other roles).

The finding is clear: editorial postings did not fall after November 2022. The share of editorial and content-production roles relative to total postings did not decline in the post-generative AI period and in several months increased. A Two-Way Fixed Effects regression comparing editorial postings to non-editorial postings after November 2022 yields an ATT of 7.578, statistically significant at the 1% level. This evidence aligns with the broader academic literature finding limited near-term labor displacement from generative AI despite broad task exposure.

What this means for the advertising and marketing industry

The results carry several implications for publishers, content strategists, and digital advertisers. Publishers who blocked AI crawlers did not gain protection proportional to the traffic they lost. The robots.txt mechanism is a voluntary protocol: crawlers can ignore it without technical consequence, and several studies and legal complaints have documented non-compliance. PPC Land covered Anthropic's February 2026 crawler documentation update, which came in part because Cloudflare data had shown Anthropic crawling 38,000 pages for every single page visit referred back to publishers.

Infrastructure responses have developed alongside the strategic debate. Cloudflare's December 2024 Robotcop tool moves enforcement from voluntary compliance to network-level Web Application Firewall rules. BuzzStream published research in March 2026 showing that among the top 50 news sites blocking ChatGPT's live retrieval bot, 70.6% still appeared in AI citation datasets - a finding that PPC Land reported on and that complicates simple decisions to opt out of AI access entirely.

PPC Land covered the original version of this Wharton-Rutgers working paper in January 2026, when it reported the traffic decline at 23% in monthly visits - a figure that differs from the 7% weekly estimate in the current version, reflecting different measurement windows (monthly versus weekly) and updated methodology. The April 2026 revision also adds Semrush as a third traffic dataset, and the LinkedIn announcement by Hangcheng Zhao notes that "Semrush is new in this version of the paper, and its estimates line up closely with the others."

For digital advertisers, the longer-term question is how publisher content strategies - shifting toward interactive and multimedia formats, maintaining editorial headcount, and potentially accepting short-run traffic losses to strengthen negotiating positions with AI platforms - affect the inventory and audience quality on which programmatic campaigns depend. As PPC Land reported in August 2025, over 80 media executives gathered at IAB Tech Lab to establish technical standards requiring AI platforms to respect publisher consent and compensation, with the four principles of control, consent, credit, and compensation framing what has become an industrywide negotiation.

The paper ends with a note on strategic optionality. Some publishers may view short-term traffic losses from blocking as acceptable costs if they strengthen future bargaining positions over licensing, attribution, or platform access agreements with generative AI companies. That calculation depends partly on how quickly AI-driven referral traffic grows as a share of total publisher visits - a dynamic the current dataset, which stops before May 2024, cannot fully resolve.

Timeline

- November 2022 - OpenAI launches ChatGPT; the authors' study period begins; editorial job postings treated as post-generative AI from this point

- Early 2023 - Change-point detection on SimilarWeb daily traffic identifies a structural break; direct traffic declines while organic search referrals remain stable

- Mid-2023 - Publishers begin blocking generative AI crawlers in robots.txt files; OpenAI-related crawlers targeted first; Anthropic, Perplexity and others follow

- August 2023 - OpenAI's GPTBot blocked by 5% of top 1,000 websites when first introduced (baseline figure cited in the paper)

- May 2024 - Google introduces AI Overviews; the paper's main causal analysis stops here to avoid contamination from the AI Overviews effect

- June 2024 - Cloudflare introduces feature to block AI scrapers and crawlers

- August 2024 - Over 35% of the world's top 1,000 websites block OpenAI's GPTBot; news publishers among the most active blockers

- August 2024 - Google rolls out a core algorithm update; both direct and organic search traffic to publishers decline simultaneously

- December 2024 - Cloudflare launches Robotcop, enforcing robots.txt at the network level

- August 2025 - Over 80 media executives at IAB Tech Lab summit address AI scraping

- December 9, 2025 - OpenAI revises ChatGPT crawler documentation

- December 23, 2025 - News publishers lose half their Google search traffic in two years

- December 31, 2025 - Zhao and Berman post the initial version of the paper to SSRN; date written listed as December 31, 2025

- January 1, 2026 - PPC Land covers the earlier version of the paper

- February 25, 2026 - Anthropic clarifies its three web crawlers and blocking options

- March 19, 2026 - BuzzStream study of 4 million AI citations shows blocking does not reliably reduce citation rates

- April 15, 2026 - Current version of the paper dated; last revised April 21, 2026 on SSRN; Hangcheng Zhao shares the update on LinkedIn today

Summary

Who: Hangcheng Zhao, Assistant Professor of Marketing at Rutgers Business School, and Ron Berman, professor at The Wharton School of the University of Pennsylvania.

What: An updated working paper using difference-in-differences analysis across SimilarWeb, Semrush, and Comscore data finds that news publishers who blocked LLM crawlers via robots.txt lost approximately 7% of weekly traffic within six weeks of blocking, with the decline visible in human browsing panel data. Publishers also shifted toward richer, more interactive content formats rather than increasing text volume, and did not reduce editorial hiring relative to other roles.

When: The working paper covers November 2022 through May 2024 as its core empirical window. The paper was first posted to SSRN on January 14, 2026, and last revised on April 21, 2026. Zhao shared the update on LinkedIn today, April 26, 2026.

Where: The analysis covers global publisher traffic patterns using SimilarWeb and Semrush data, and U.S. desktop browsing behavior through the Comscore Web-Behavior Panel. The sample centers on 30 top newspaper publisher domains, with certain analyses extending to the top 500 news publishers.

Why: The findings matter because robots.txt blocking was the most widespread defensive response by news publishers to generative AI, yet the data shows it was followed by lower, not higher, traffic. The study documents an overlooked tradeoff: technical access control is straightforward to implement but can reduce a publisher's visibility in AI-mediated discovery channels, weakening brand exposure and audience reach. For the advertising and marketing industry, the results raise questions about the content strategies, inventory characteristics, and negotiating postures of publishers who supply the open web environments on which digital campaigns depend.
