Google this week expanded A/B testing capabilities in the Google Ads API with the release of version 24.1, introducing direct experiment statistics retrieval and support for several new experiment types - changes that affect how developers and large advertiser teams build and automate experimentation workflows.

The update was announced on May 14, 2026, through the Google Ads Developer Blog by Laura Chevalier of the Google Ads API Team. The underlying v24.1 release itself launched on May 13, 2026. According to Google, the impetus for the update is that "API functionality is critical for advertisers who manage large sophisticated accounts," and the team's stated goal was to give those advertisers the ability to "better create and manage their A/B experiments at scale."

Background: three workflows, eight experiment types

The Google Ads API structures experiments around three distinct workflows, each corresponding to a different testing methodology. Understanding which workflow applies determines how traffic is divided, how campaigns are created, and what reporting approach is available.

The system-managed workflow creates one or more treatment campaigns that run alongside an original control campaign. Traffic is split between control and treatment at a configurable percentage. This applies to SEARCH_CUSTOM, DISPLAY_CUSTOM, HOTEL_CUSTOM, YOUTUBE_CUSTOM, and PMAX_REPLACEMENT_SHOPPING experiment types. The intra-campaign workflow does not create any additional campaign - instead, traffic is split within a single existing campaign, with one portion exposed to the feature under test and the other not. This applies to ADOPT_AI_MAX and ADOPT_BROAD_MATCH_KEYWORDS. The asset optimization workflow, used for OPTIMIZE_ASSETS, tests different asset sets against each other within Performance Max asset groups.

According to Google's documentation, updated May 13, 2026, all three workflows share the same four-stage lifecycle: setup, schedule, run and report, and complete. How each stage unfolds differs significantly depending on the workflow.

System-managed experiments: setup in detail

For system-managed experiments, the setup process follows three steps in sequence. The first is creating an Experiment resource with fields that define the experiment's parameters. According to the documentation, key fields include name (which must be unique), description, suffix (appended to treatment campaign names to distinguish them from the control), type, status (set to SETUP at creation), start_date and end_date, and sync_enabled.

The sync_enabled field - disabled by default - is worth noting. When set to true, changes made to the original control campaign while the experiment is running are automatically copied to the treatment campaign. This prevents the two campaigns from drifting apart during the test period, a common source of confounding variables in longer-running experiments.

The second step is creating ExperimentArm resources. According to the documentation, all arms must be created in a single request. Each experiment must have exactly one control arm and one or more treatment arms. The traffic_split field must be defined for every arm, and the sum across all arms must equal 100. The control arm must reference exactly one existing campaign in its campaigns field. When a treatment arm is created with control set to false, the API automatically generates a draft campaign based on the control campaign. The resource name of this draft is populated in the in_design_campaigns field of the treatment arm.
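The arm rules above lend themselves to a pre-flight check before any request is sent. The following is a minimal sketch in plain Python, not the client library's API: the Arm dataclass and validate_arms function are hypothetical names introduced for illustration, and only the rules themselves (one control, at least one treatment, splits summing to 100, one campaign on the control arm) come from the documentation as described here.

```python
from dataclasses import dataclass, field


@dataclass
class Arm:
    """Hypothetical stand-in for an ExperimentArm; not the client library's type."""
    name: str
    control: bool
    traffic_split: int
    campaigns: list = field(default_factory=list)


def validate_arms(arms):
    """Return a list of rule violations; an empty list means the arms look valid."""
    problems = []
    controls = [a for a in arms if a.control]
    treatments = [a for a in arms if not a.control]

    if len(controls) != 1:
        problems.append(f"expected exactly 1 control arm, found {len(controls)}")
    if not treatments:
        problems.append("expected at least 1 treatment arm")
    for arm in controls:
        if len(arm.campaigns) != 1:
            problems.append("control arm must reference exactly one campaign")

    total = sum(a.traffic_split for a in arms)
    if total != 100:
        problems.append(f"traffic_split must sum to 100, got {total}")
    return problems
```

A caller could run validate_arms on the planned arms and only build the single create request when the returned list is empty.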

The third step is modifying those in-design campaigns. Draft campaigns are returned only when the include_drafts=true parameter is added to the query. At least one change must be applied to an in-design campaign before the experiment can be scheduled. Once modifications are complete, ExperimentService.ScheduleExperiment materializes the in-design campaigns into real, servable campaigns, ready to begin serving when start_date arrives. This is an asynchronous operation.

Type-specific requirements for system-managed experiments

Two of the system-managed types carry specific constraints. For PMAX_REPLACEMENT_SHOPPING, the experiment requires exactly two arms - one control, one treatment - and the control arm must reference a Shopping campaign. The treatment arm setup is flexible: leaving the campaigns field empty causes the API to automatically create an in-design Performance Max campaign based on the control Shopping campaign. Alternatively, specifying an existing Performance Max campaign in the campaigns field uses that campaign as the treatment, skipping the in-design step entirely.

The documentation recommends discarding the first seven days of statistics from evaluation "to give the campaign time to finish its ramp-up and learning phase." The PMAX_REPLACEMENT_SHOPPING type cannot be promoted - only ended or graduated. For YOUTUBE_CUSTOM, the documentation notes support for a maximum of 10 arms, and that creative_asset_testing_info can be set on the experiment arm to test assets.
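The type-specific limits can be expressed as a small guard. This is an illustrative sketch only: arm_count_ok and evaluation_start are hypothetical helpers, and the fallback rule for other types simply restates the general "one control plus one or more treatments" requirement.

```python
from datetime import date, timedelta


def arm_count_ok(experiment_type: str, arm_count: int) -> bool:
    """Check the arm-count limits stated for two system-managed types."""
    if experiment_type == "PMAX_REPLACEMENT_SHOPPING":
        return arm_count == 2  # exactly one control and one treatment
    if experiment_type == "YOUTUBE_CUSTOM":
        return 2 <= arm_count <= 10  # documented maximum of 10 arms
    return arm_count >= 2  # general rule: one control, one or more treatments


def evaluation_start(start_date: date, ramp_up_days: int = 7) -> date:
    """First day whose statistics are kept after discarding the ramp-up period."""
    return start_date + timedelta(days=ramp_up_days)
```

For an experiment starting on May 20, 2026, evaluation_start returns May 27, 2026, so the first seven days of data are excluded from evaluation.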

Intra-campaign experiments: how traffic is split internally

Intra-campaign experiments operate on a different structural logic. Rather than producing separate campaigns, the experiment splits traffic within a single campaign based on whether the feature under test is active. According to the documentation, ADOPT_AI_MAX and ADOPT_BROAD_MATCH_KEYWORDS are the two supported types. Once set up, traffic is split within the campaign so that 50% of traffic is exposed to the enabled feature (the treatment group) and 50% is not (the control group). The split is fixed at 50/50 and is not configurable for these types.

The setup process has three steps. The developer defines the Experiment with a type, a control ExperimentArm, and a treatment ExperimentArm. Both arms reference the same campaign. Then, for AI Max, a field mask is used to enable the test feature for the experiment. For ADOPT_BROAD_MATCH_KEYWORDS, this step is skipped - the broad match campaign setting is enabled automatically upon experiment creation. All of this is sent in a single GoogleAdsService.Mutate request containing mutate operations for the experiment, the arms, and - where applicable - the feature enablement.

The documentation is explicit about a reporting constraint that is structurally different from system-managed experiments: "Because control and treatment traffic are mixed within a single campaign, you must use direct experiment reporting to compare metrics between the control and treatment groups. Standard campaign-level reporting only shows aggregated metrics for the entire campaign and cannot distinguish between the two groups." This is the only way to compare control versus treatment for intra-campaign experiments. Querying the campaign resource for an intra-campaign experiment returns only aggregated totals.

When an intra-campaign experiment concludes, two outcomes are available. An end operation disables the feature and reverts the campaign to serving all traffic without it. A promote operation applies the experimental change as the new permanent state of the campaign. The graduate operation is not available for intra-campaign experiments, because there is no separate treatment campaign to convert into an independent campaign.

Why intra-campaign AI Max and broad match tests matter now

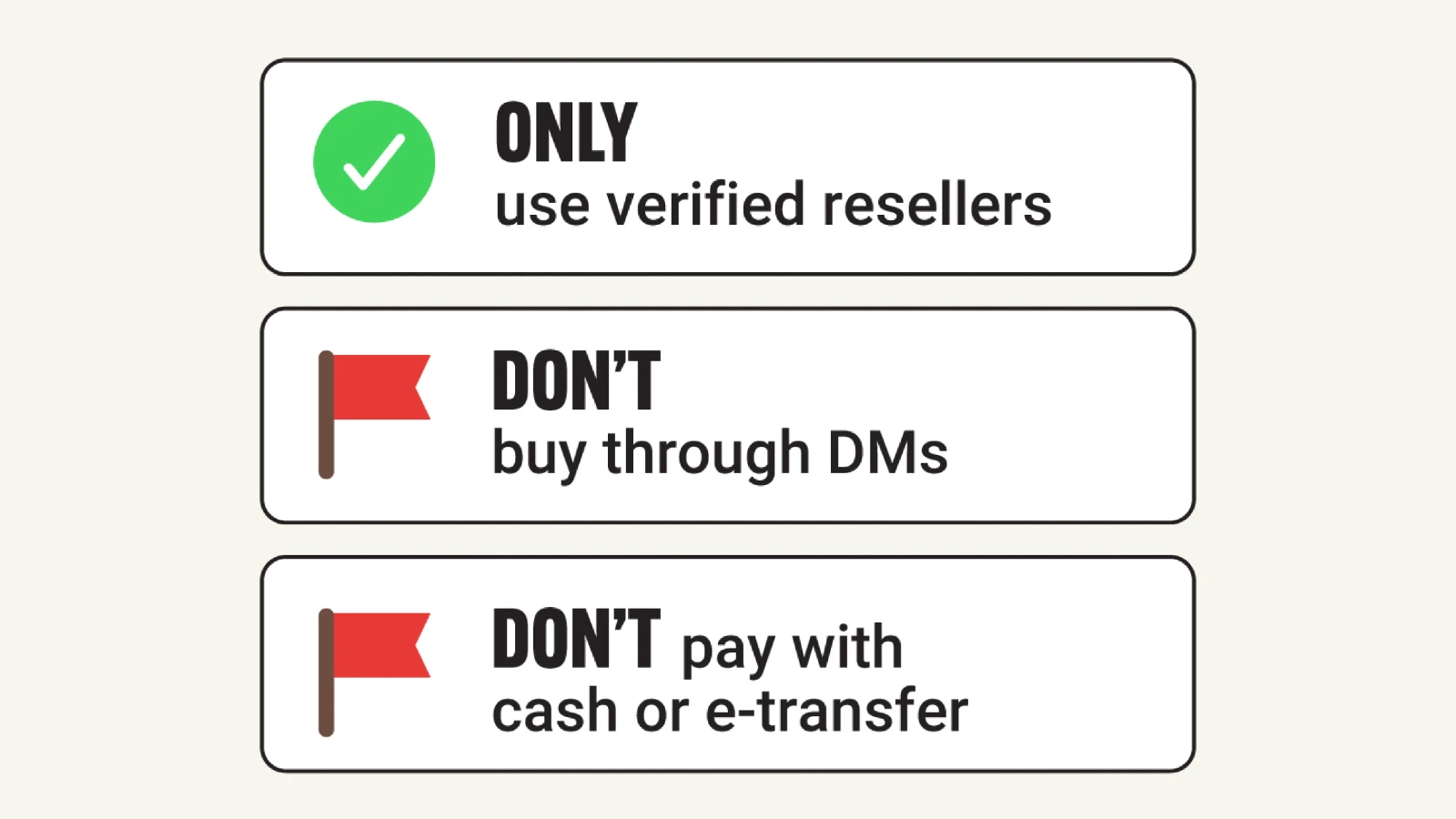

The inclusion of ADOPT_AI_MAX and ADOPT_BROAD_MATCH_KEYWORDS as formalized API experiment types arrives at a moment of significant debate in the industry. Multiple independent tests published in late 2025 found AI Max delivering conversions at substantially higher cost than traditional match types - one four-month test documented by Xavier Mantica showed AI Max at $100.37 per conversion versus $43.97 for phrase match. A separate analysis by Brad Geddes in December 2025 highlighted attribution problems where AI Max was found to treat all keywords as broad match regardless of specified match type, distorting per-match-type performance data.

Google has now announced that Dynamic Search Ads will be upgraded to AI Max by September 2026, increasing the pressure on advertisers to understand AI Max's actual incremental contribution before it becomes the default. The ADOPT_AI_MAX experiment type in v24.1 gives developers a programmatic path to run a properly controlled test - with a fixed 50/50 traffic split and built-in significance statistics - rather than relying on before/after comparisons that cannot isolate the feature's effect.

The intra-campaign broad match experiment method was introduced at the UI level in June 2025, replacing the older approach of duplicating campaigns. The v24.1 API release extends that methodology to programmatic workflows, meaning agencies and martech platforms can now automate the creation of broad match tests across large account portfolios without manual setup in the interface.

Asset optimization experiments: Performance Max creative testing

The asset optimization workflow is purpose-built for Performance Max campaigns. According to the documentation, asset optimization experiments "are used to test different asset combinations within Performance Max campaigns, allowing you to compare how different sets of assets perform against a base set." The supported experiment type is OPTIMIZE_ASSETS.

The setup requires a specific operation sequence within a single mutate request, because the entities involved are interdependent. According to the documentation, the correct order is: first, create an Asset with a temporary resource name (such as customers/CUSTOMER_ID/assets/-1); second, create an Experiment with a temporary resource name; third, create two ExperimentArm resources - a control arm linked to a base AssetGroup and a treatment arm linked to the same base AssetGroup, with the asset_groups field set using the temporary resource name of the asset; fourth, create an AssetGroupAsset linking the asset from step one to the asset group used in the experiment arms.
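The ordering can be sketched as a list of operations that share temporary resource names. This is a hedged illustration using plain dictionaries: the dictionary keys, the "kind" labels, and the function name are assumptions made for readability and are not the request types of the Google Ads client library. The treatment arm is shown in simplified form, linked to the base asset group.

```python
def build_asset_experiment_operations(customer_id: str, base_asset_group: str) -> list:
    """Return the four creation steps in the order described above."""
    # Negative IDs act as temporary resource names resolved within one request.
    asset_tmp = f"customers/{customer_id}/assets/-1"
    experiment_tmp = f"customers/{customer_id}/experiments/-2"

    return [
        # Step 1: the new asset under test.
        {"kind": "asset", "resource_name": asset_tmp},
        # Step 2: the experiment itself.
        {"kind": "experiment", "resource_name": experiment_tmp,
         "type": "OPTIMIZE_ASSETS"},
        # Step 3: control and treatment arms, both tied to the base asset group.
        {"kind": "experiment_arm", "experiment": experiment_tmp,
         "control": True, "asset_groups": [base_asset_group]},
        {"kind": "experiment_arm", "experiment": experiment_tmp,
         "control": False, "asset_groups": [base_asset_group]},
        # Step 4: link the asset from step 1 to the asset group.
        {"kind": "asset_group_asset", "asset": asset_tmp,
         "asset_group": base_asset_group},
    ]
```

Because later operations refer to the temporary names created by earlier ones, the list only makes sense when sent together and in this order.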

The interdependency requirement - all four operations in a single request using temporary resource names - differs from other experiment types where steps are performed sequentially. Attempting to execute these operations across multiple requests would fail because the AssetGroupAsset reference cannot resolve until the Asset resource exists, and both the Experiment and ExperimentArm resources must be created before the AssetGroupAsset can link them.

Reporting on asset optimization experiments uses the experiment resource to compare metrics between control and treatment asset groups, consistent with the direct experiment reporting approach described below. When the test concludes, only two operations are available: end or graduate. An end operation stops the experiment if results from the treatment arm are unsatisfactory. A graduate operation - when the treatment asset group performs better - converts the treatment arm into a new independent campaign. As part of the graduate process, the documentation notes, "the original base campaign associated with the control arm is paused, and the treatment arm is converted to a new, independent campaign." Promotion is not available for asset optimization experiments.
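The concluding operations stated in this article for each type can be collected in a lookup table. The sketch below is an assumption-labeled convenience, not part of the API: ALLOWED_CONCLUSIONS and can_conclude are hypothetical names, and types whose concluding operations are not stated here are deliberately left out rather than guessed.

```python
# Concluding operations as described in this article, keyed by experiment type.
ALLOWED_CONCLUSIONS = {
    "PMAX_REPLACEMENT_SHOPPING": {"end", "graduate"},   # cannot be promoted
    "ADOPT_AI_MAX": {"end", "promote"},                 # no separate campaign to graduate
    "ADOPT_BROAD_MATCH_KEYWORDS": {"end", "promote"},
    "OPTIMIZE_ASSETS": {"end", "graduate"},             # promotion not available
}


def can_conclude(experiment_type: str, operation: str) -> bool:
    """Return True if the operation is listed for the given experiment type."""
    if experiment_type not in ALLOWED_CONCLUSIONS:
        raise ValueError(f"no documented rule recorded for {experiment_type}")
    return operation in ALLOWED_CONCLUSIONS[experiment_type]
```

A workflow tool could call can_conclude before offering a "promote" or "graduate" action to the user.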

Asset-level A/B testing had expanded to all Performance Max campaigns in January 2026 through the UI. The v24.1 API formalization means developers can now automate this testing at scale - relevant for agencies managing many Performance Max campaigns who want to run systematic creative testing without configuring each test through the interface individually.

Direct experiment reporting: the new statistical output

Before v24.1, comparing experiment performance through the API required querying campaign resources separately and calculating significance metrics externally. The new direct experiment reporting makes this unnecessary. According to the documentation, querying the experiment resource directly retrieves both treatment metrics and control metrics in the same row. For example, metrics.clicks returns the treatment arm's click count while metrics.control_clicks returns the control arm's, both in a single query response.
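A query of this shape might be assembled as follows. This is a sketch under assumptions: the function name is hypothetical, metrics.clicks and metrics.control_clicks are the only metric fields confirmed in the text above, and applying the same control_ prefix to other metrics is an assumption to verify against the field reference.

```python
def build_experiment_query(metric: str = "clicks") -> str:
    """Build a query string selecting a treatment metric and its control twin."""
    return (
        "SELECT experiment.resource_name, experiment.name, "
        f"metrics.{metric}, metrics.control_{metric} "
        "FROM experiment"
    )


print(build_experiment_query())
```

The resulting string would be passed to whichever search method the integration already uses; each returned row then carries both arms' values side by side.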

For statistical significance, the documentation defines three fields for each supported metric. metrics.*_p_value is "the probability that the observed results would occur if the experiment had no actual effect on the metric. A lower p-value indicates higher statistical significance." metrics.*_point_estimate is "the estimated percentage lift (positive or negative) in the given metric for the treatment arm compared to the control arm." metrics.*_margin_of_error is "the radius of the confidence interval, which is centered at point_estimate." Together, the point estimate and margin of error describe a confidence interval for the quantity (treatment / control - 1): the interval runs from the point estimate minus the margin of error to the point estimate plus the margin of error.
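The arithmetic behind these fields is simple enough to show directly. The helpers below are a minimal sketch with hypothetical names; whether the API expresses the lift as a fraction or as a percentage is not stated here, so the example assumes a fraction.

```python
def relative_lift(treatment: float, control: float) -> float:
    """The estimated quantity: treatment / control - 1."""
    return treatment / control - 1


def confidence_interval(point_estimate: float, margin_of_error: float) -> tuple:
    """Interval centered at the point estimate with the margin as its radius."""
    return (point_estimate - margin_of_error, point_estimate + margin_of_error)
```

With 120 treatment clicks against 100 control clicks, the relative lift is roughly 0.2, or 20%. A point estimate of 0.2 with a margin of error of 0.05 gives an interval of roughly 0.15 to 0.25.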

The supported core metric fields for direct experiment reporting are: clicks, impressions, cost_micros, conversions, cost_per_conversion, conversion_value, and conversion_value_per_cost. For conversions specifically, statistical fields are exposed through separate absolute_change fields rather than relative values: metrics.conversions_absolute_change_p_value, metrics.conversions_absolute_change_point_estimate, and metrics.conversions_absolute_change_margin_of_error.

The documentation gives a concrete interpretation example: if conversions_absolute_change_p_value is below 0.05 (the threshold for 95% confidence) and conversions_absolute_change_point_estimate minus conversions_absolute_change_margin_of_error is greater than zero, that indicates the treatment arm is performing significantly better than the control arm in terms of conversions. The point estimate minus the margin of error establishes the lower bound of the confidence interval - if even the lower bound is above zero, the improvement is statistically real rather than noise at the 95% confidence level.
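That interpretation rule translates into a two-condition check. The function name and the alpha parameter are hypothetical; the inputs correspond to the three conversions_absolute_change fields named above.

```python
def treatment_wins_on_conversions(
    p_value: float,
    point_estimate: float,
    margin_of_error: float,
    alpha: float = 0.05,
) -> bool:
    """True when the p-value is below alpha and the interval's lower bound is above zero."""
    lower_bound = point_estimate - margin_of_error
    return p_value < alpha and lower_bound > 0
```

For example, a p-value of 0.03 with a point estimate of 12 and a margin of error of 5 passes both conditions, because the lower bound of 7 is above zero. The same point estimate with a margin of error of 15 does not, since the interval then includes zero.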

The documentation lists four advantages of direct experiment reporting over campaign-level reporting: centralized metrics (control and treatment in a single row), statistical confidence data, efficiency (no need to manually join results from multiple reports), and intra-campaign support (the only way to compare control versus treatment for intra-campaign experiments where traffic is split inside a single campaign).

Campaign-level reporting as an alternative

For system-managed experiments that create separate treatment campaigns, campaign-level reporting remains available as an alternative. Developers can query the campaign resource and use campaign.experiment_type to distinguish between BASE (control) and EXPERIMENT (treatment) campaigns. The documentation notes this approach is useful "if you need to segment metrics at a more granular level (for example, by ad group or keyword) or view campaign metadata not available on the experiment resource." The tradeoff is that performance comparisons and statistical calculations must be performed manually. Campaign-level reporting cannot be used for intra-campaign experiments at all.
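The manual comparison this approach requires might look like the sketch below. The query string uses campaign.experiment_type as described above; the row dictionaries and the function name are hypothetical stand-ins for whatever shape an integration gives its query results, and a real query would also need to filter to the campaigns belonging to one experiment.

```python
from collections import defaultdict

# Illustrative query; it does not restrict results to a single experiment.
CAMPAIGN_QUERY = (
    "SELECT campaign.id, campaign.experiment_type, "
    "metrics.clicks, metrics.impressions "
    "FROM campaign "
    "WHERE campaign.experiment_type IN ('BASE', 'EXPERIMENT')"
)


def totals_by_experiment_type(rows):
    """Sum clicks and impressions separately for BASE and EXPERIMENT campaigns."""
    totals = defaultdict(lambda: {"clicks": 0, "impressions": 0})
    for row in rows:
        bucket = totals[row["experiment_type"]]
        bucket["clicks"] += row["clicks"]
        bucket["impressions"] += row["impressions"]
    return dict(totals)
```

Any significance calculation would still have to be done separately on these totals, which is the tradeoff the documentation describes.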

Best practices from the documentation

The reporting documentation published May 14, 2026, includes operational guidance. According to Google, selecting an appropriate confidence level is the first decision: "Setting a lower p-value threshold can provide directional guidance faster, especially with lower budgets or conversion volumes. A 95% confidence (p-value <= 0.05) is considered the academic standard and may be better for more accurate results over a longer timeframe."

On duration, Google recommends running experiments for at least four weeks "to account for weekly performance cycles, conversion delays, and learning periods." For campaigns using automated bidding or testing new features, the documentation advises disregarding the first one to two weeks of data "to give time for bidding models and traffic levels to recalibrate to the split." A 50/50 traffic split is described as "generally the fastest way to achieve statistically significant results." Setting experiment start dates three to seven days in the future is recommended to allow time for ad review and approval. And only one experiment per campaign can run at any given time.
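Two of these recommendations are easy to check before an experiment is scheduled. The helper below is a hypothetical sketch: the name, the warning messages, and the interpretation of "four weeks" as 28 days are assumptions, while the thresholds themselves come from the guidance quoted above.

```python
from datetime import date


def schedule_warnings(today: date, start_date: date, end_date: date) -> list:
    """Return warnings where a planned schedule departs from the guidance."""
    warnings = []

    lead_days = (start_date - today).days
    if lead_days < 3:
        warnings.append(
            f"start date is {lead_days} day(s) away; 3 to 7 days is recommended"
        )

    duration_days = (end_date - start_date).days
    if duration_days < 28:
        warnings.append(
            f"experiment runs {duration_days} day(s); at least 28 is recommended"
        )
    return warnings
```

A schedule planned on May 14, 2026, to start on May 18 and end on June 15 produces no warnings, since it leaves four days of lead time and runs for 28 days.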

Context in the broader API release cadence

The v24.1 release is a minor version update under the monthly release schedule Google announced in September 2025 and began implementing in January 2026. Minor versions are additive and non-breaking, meaning existing integrations do not need modification to consume the new experiment functionality. The parent version, Google Ads API v24, launched April 22, 2026, and introduced CartDataSalesView, nine lead generation conversion type enumerations, and view-through conversion optimization for Demand Gen and App campaigns.

A product reporting expansion announced April 30, 2026, scheduled to take effect June 15, 2026, will bring cost and conversion metrics to Video, Demand Gen, and App campaigns at the shopping_product level. Together, the v24 reporting expansion and the v24.1 experiment additions move the API toward a more complete measurement and testing infrastructure within the same monthly cycle. Google Ads API v23, released January 2026, had addressed a parallel gap by introducing channel-level reporting for Performance Max - data that now becomes more actionable when combined with the experiment significance outputs from v24.1.

According to Google, updated documentation and code examples are available on the Google Ads API Developer site. Additional experiment types are planned for future releases, though no specific types or timelines were named in the announcement.

Timeline

- January 2023 - Google rolls out Performance Max Experiments for Shopping comparisons

- February 2024 - Google Ads API v16 adds Experiment.sync_enabled for live campaign synchronization

- May 6, 2025 - Google launches AI Max for Search campaigns

- June 4, 2025 - Google Ads API v20 launches with Performance Max campaign-level negative keywords

- June 30, 2025 - Google introduces intra-campaign broad match experiment workflow in the UI

- August 6, 2025 - Google Ads API v21 introduces AI Max programmatic support

- August 27, 2025 - Industry analysis flags AI Max Search Partner Network expansion concerns

- September 4, 2025 - Google announces monthly API release schedule beginning January 2026

- November 8, 2025 - Independent tests across 250+ campaigns show AI Max underperforming traditional match types

- December 13, 2025 - Google clarifies AI Max attribution discrepancies

- January 9, 2026 - Asset-level A/B testing expands to all Performance Max campaigns via UI

- January 27-28, 2026 - Google Ads API v23 launches with channel-level Performance Max reporting

- April 15, 2026 - Google announces DSA deprecation; upgrade to AI Max scheduled for September 2026

- April 22, 2026 - Google Ads API v24 launches with CartDataSalesView and lead gen conversions

- April 30, 2026 - Google announces product reporting expansion for Video, Demand Gen, and App campaigns, effective June 15

- May 13, 2026 - Google Ads API v24.1 releases with expanded experiment functionality and updated documentation

- May 14, 2026 - Google Ads Developer Blog publishes announcement by Laura Chevalier

Summary

Who: Google's Ads API Team, with the announcement authored by Laura Chevalier, targeting developers, marketing technology vendors, and large-scale advertisers building on the Google Ads API.

What: Google Ads API v24.1 adds direct querying of experiment arm-level and significance statistics - including control arm stats, point estimates, margin of error, and p-values - across eight experiment types spanning three workflows: system-managed (Search, Display, Hotel, Video, and Performance Max vs Shopping), intra-campaign (AI Max and broad match), and asset optimization (Performance Max creative testing).

When: Version 24.1 launched on May 13, 2026. The announcement was published on May 14, 2026, on the Google Ads Developer Blog. Supporting documentation across all experiment types was updated May 13-14, 2026.

Where: The changes are available through the Google Ads API. Documentation and code examples are published on the Google Ads API Developer site. Technical discussion takes place on the Google Advertising and Measurement Community Discord server.

Why: Before v24.1, experiment statistics required manual calculation by querying campaign resources separately. Direct experiment reporting removes that burden, enables automated significance testing within API workflows, and extends programmatic experiment support to campaign types - including Video, AI Max, broad match, and Performance Max asset optimization - that previously lacked dedicated API experiment coverage.
