Researchers at the University of California, Davis have published the first systematic measurement of web tracking on AI chatbot platforms, finding that 17 of 20 popular services share information with at least one third party during a single normal chat session - and that three transmit the readable text of user conversations to Microsoft Clarity, a session replay tool.

The paper, titled "Tracking Conversations: Measuring Content and Identity Exposure on AI Chatbots," was submitted to arXiv on April 30, 2026 and revised on May 13, 2026. The authors - Muhammad Jazlan, Ethan Wang, Yash Vekaria, and Zubair Shafiq, all affiliated with the University of California, Davis - used a controlled test prompt to capture and compare network traffic across 20 services in both normal and private chat modes.

The prompt and the methodology

The researchers chose a single, consistent prompt for all tests: "pregnancy test near me." The choice was deliberate. According to the paper, this query combines health-related information with an implicit location request, making it representative of the kind of sensitive, personal content that users regularly submit to AI chatbots. The test was conducted under Chrome, which lacks the tracking protections offered by Safari's Intelligent Tracking Prevention or Firefox's Enhanced Tracking Protection. Chrome was selected specifically because it provides a baseline for tracking caused by chatbot website design and third-party integrations, without browser-level interference.

For each of the 20 chatbots, the researchers created a fresh user account using either email registration or Google single sign-on. They recorded all network traffic generated while loading the chatbot interface, submitting the prompt, and waiting for the response. For services that offer a private or temporary chat mode - ChatGPT, Gemini, Claude, Grok, Perplexity, Qwen Chat, and Mistral among them - they ran a second session using the same prompt to evaluate whether private modes reduce third-party exposure.

The traffic analysis used a four-stage process: preprocessing to decompress and decode encoded payloads, defining search targets across content and identity categories, matching those targets against captured traffic, and attributing matches to the receiving parties. For matching, the researchers searched not only for exact strings but also for common encoded forms: base64, URL encoding, hex, and cryptographic hashes (MD5, SHA-1, and the SHA-2 and SHA-3 families). This is technically significant: hashing or encoding a user identifier before transmission does not hide it from this methodology.
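The matching stage can be illustrated with a minimal sketch. This is not the authors' tooling: the function names, the specific hash algorithms chosen from each family, and the plain substring search are all assumptions made for illustration.

```python
import base64
import hashlib
from urllib.parse import quote, quote_plus


def encoding_variants(target: str) -> dict[str, str]:
    """Return the target string under several common encodings and hashes."""
    raw = target.encode("utf-8")
    variants = {
        "plain": target,
        "url": quote(target),            # spaces become %20
        "url_plus": quote_plus(target),  # spaces become +
        "base64": base64.b64encode(raw).decode("ascii"),
        "hex": raw.hex(),
    }
    # MD5, SHA-1, and illustrative members of the SHA-2 and SHA-3 families.
    for name in ("md5", "sha1", "sha256", "sha512", "sha3_256", "sha3_512"):
        variants[name] = hashlib.new(name, raw).hexdigest()
    return variants


def find_matches(target: str, payload: str) -> list[str]:
    """List the variant names whose encoded form appears in the payload."""
    lowered = payload.lower()
    matches = []
    for name, value in encoding_variants(target).items():
        # Base64 is case-sensitive; every other variant is compared case-insensitively.
        if name == "base64":
            found = value in payload
        else:
            found = value.lower() in lowered
        if found:
            matches.append(name)
    return matches
```

Run against a query string such as `q=pregnancy+test+near+me`, the sketch would report a `url_plus` match. Run against a payload that carries only the SHA-256 digest of an email address, it would report `sha256`, which is why hashing alone does not hide an identifier from this kind of search.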

Third-party presence across the landscape

Across 20 chatbots, the study identified 47 unique third-party owners and 178 distinct chatbot-to-third-party domain pairs during normal chat sessions. Analytics services appeared on 17 of the 20 chatbots. Advertising services appeared on 12 of the 20. Despite appearing on fewer chatbots than analytics, advertising accounted for 73 of the 178 observed pairs - the largest single category - because chatbots that load advertising services often contact many different advertising domains within a single session.

Three chatbots contacted no external third parties at all: Gemini, Meta AI, and Duck.ai. This does not mean those services send no data externally. Gemini contacts several Google-owned domains including Google Analytics, Google Tag Manager, and Google static content - but because those domains are owned by Google, the study classifies them as platform-party rather than third-party. Meta AI loads content from Facebook's CDN. Duck.ai sends requests to duckduckgo.com. The study notes that Duck.ai goes further than most: according to the paper, the service strips user IP addresses before forwarding requests to AI providers.

SeaArt stood out for the breadth of its advertising connections. In a single session, the service contacted 13 distinct advertisers: A8, Amazon, Google, Meta, Microsoft, Outbrain, Pinterest, Quora, Reddit, TikTok, Twitter/X, Yahoo Japan, and Yandex. Genspark contacted a comparable number in the same test.

Session replay and plaintext conversation exposure

The most technically significant finding concerns session replay. Four chatbots - Genspark, SeaArt, ChatOn, and Microsoft Copilot - embed Microsoft Clarity, a free behavioral analytics tool that records user interactions including cursor movement, clicks, and page content. Because the rendered DOM of a chatbot page contains the conversation itself, session replay can capture both the user's prompt and the model's response.

Three of the four - Genspark, SeaArt, and ChatOn - transmitted conversation text in plaintext to Clarity. According to the paper, Clarity received the exact prompt string "pregnancy test near me" on all three chatbots. On Genspark, the response text captured included "Here are a few pregnancy test options near you." On ChatOn, Clarity received "Most pharmacies like CVS, Walgreens, or Rite Aid carry home pregnancy tests." On SeaArt, the captured response was extended roleplay text spanning multiple sentences.

Microsoft Copilot also embeds Clarity but routes it through the first-party endpoint https://copilot.microsoft.com/cl/eus2-g/collect. The study found no plaintext conversation text in those payloads. Instead, the Copilot payloads carried message-specific identifiers and the order in which messages appeared in the DOM - a meaningfully different level of exposure from the three chatbots sending full conversation text.

The researchers note that ChatOn, SeaArt, and Genspark each name specific third parties in their privacy policies but omit Microsoft Clarity - despite Clarity receiving plaintext conversation text in the measurements. Claude and OpenRouter were identified as positive examples: both list a broad set of third parties present on their websites and describe the types of data shared with them.

How prompts reach Google Maps

One of the more specific content exposure paths documented in the study involves Genspark and Google Maps. According to the paper, Genspark transmits the full prompt to Google Maps by embedding it as a URL parameter in a map widget query: www.google.com/maps/embed/v1/search?q=pregnancy+test+near+me. The raw prompt is URL-encoded and passed to Google in the q parameter as part of loading the embedded map. The map loads as a third-party iframe, which can attach Google cookies depending on whether the user is logged into a Google account - potentially linking the prompt to the user's Google identity without any deliberate action by the user.

The researchers propose a more privacy-preserving design: resolve the location query server-side and pass only the minimum information needed to render the map, such as coordinates or a sanitized place query, rather than embedding the raw prompt.
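The difference between the two designs can be sketched as URL construction. This is an illustration rather than Genspark's code or the paper's proposal in detail: the function names, the `key` parameter, the use of a `view` embed mode with `center` and `zoom`, and the rounding of coordinates to three decimals are all assumptions.

```python
from urllib.parse import urlencode

EMBED_BASE = "https://www.google.com/maps/embed/v1"


def raw_prompt_embed_url(prompt: str, api_key: str) -> str:
    """Pattern described in the study: the user's prompt goes straight into q."""
    return f"{EMBED_BASE}/search?" + urlencode({"key": api_key, "q": prompt})


def coordinate_embed_url(lat: float, lng: float, api_key: str, zoom: int = 14) -> str:
    """Alternative: the server resolves the location; the widget sees only coordinates."""
    # Rounding is an illustrative choice that coarsens the location slightly.
    params = {"key": api_key, "center": f"{lat:.3f},{lng:.3f}", "zoom": zoom}
    return f"{EMBED_BASE}/view?" + urlencode(params)
```

In the first function the words "pregnancy test" reach the map provider inside the request URL. In the second, the provider still learns an approximate location, but no prompt text appears in the request.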

Chat identifiers and page titles as tracking vectors

Chatbots typically assign a per-conversation URL that appears in the browser address bar. The study found that 15 of the 20 chatbots transmit this URL to at least one third party through standard analytics or advertising tags, reaching 29 distinct destinations in total. Meta Pixel's dl parameter, Google Analytics's collect endpoint, Bing's UET tag, and DoubleClick's conversion tags all collect the current page URL by default. Any chatbot that embeds these tags automatically transmits the chat identifier to the corresponding destination.

SeaArt transmitted chat identifiers to 12 destinations including Facebook, Google, TikTok, Yandex, Outbrain, and Quora. Genspark transmitted to 7, including Facebook, Google, Bing, TikTok, and Microsoft Clarity. The remaining chatbots transmitted to between 1 and 4 destinations.

The chat identifier does not itself reveal conversation text, but it does identify a specific conversation. On platforms where the chat URL is shareable or resolvable, exposing the URL exposes a pointer to the conversation. Even where the URL requires authentication, the identifier still allows the receiving party to associate information with the same chat over time.

Page titles create a parallel exposure path. Kimi, Claude, Manus, Gemini, and Genspark place the user's prompt or an extracted keyword into the page title, from where it is collected by analytics and advertising tags. Kimi's title reached Google's DoubleClick via the standard dt parameter and was forwarded to Google Ads via a tiba parameter. Claude and Manus both embed Intercom's customer support widget, which posts page metadata including the title to api-iam.intercom.io/messenger/web/metrics. Gemini sends the page title to its platform-party Google Analytics in the standard payload.

Identity exposure: email addresses, names, and cookies

The study separates identity exposure from content exposure. Identity includes names, email addresses, internal user identifiers, and first-party cookies transmitted to third-party destinations.

On Claude and Mistral, the Intercom widget fires a ping request when the chat page loads, carrying the user's email address, name, internal user ID, and user hash. According to the paper, this ping fires without any user interaction with the support widget - it triggers automatically on page load. Intercom also transmits first-party cookies including intercom-device-id-* and intercom-session-* to intercom.io. Genspark transmits two Stripe-related first-party cookies - __stripe_mid and __stripe_sid - to stripe.com.

On Character.ai, the Sentry error-monitoring tag transmits session data to sentry.io. The did field carries the user's email address, the user_agent field carries the User-Agent string directly, and the ip_address field is set to the sentinel value "{{auto}}", which instructs Sentry to record the client IP address from the connection. The paper distinguishes this from passive network-layer IP exposure because the tag explicitly configures IP collection as part of its payload - it is an intentional configuration choice, not an incidental side effect.

Character.ai's Statsig tag also transmits experimentation and feature-flag event payloads to prodregistryv2.org, each carrying the user's email, internal user ID, IP address, and User-Agent alongside the experiment or feature-flag being logged.

Advertising tags introduced a separate identity mechanism. Perplexity transmits a hashed email to Singular. SeaArt transmits a hashed email in the eb_email field to the TikTok pixel at analytics.tiktok.com. The paper cites a US Federal Trade Commission analysis noting that hashed emails can function as persistent cross-site identifiers because the same email produces the same hash across platforms, allowing Singular and TikTok to link activity on these chatbot sites to activity on any other site reporting the same hashed value.
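Why a hashed email still works as an identifier can be shown in a few lines. The sketch assumes SHA-256 and a trim-and-lowercase normalization step; the article does not state which hash or normalization the observed tags use, and the function name is hypothetical.

```python
import hashlib


def hashed_email(email: str) -> str:
    """SHA-256 of a trimmed, lowercased email address (an assumed normalization)."""
    normalized = email.strip().lower()
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()


# Two unrelated sites hash the same address independently...
chatbot_site_value = hashed_email("Alice@Example.com ")
other_site_value = hashed_email("alice@example.com")

# ...and a recipient that sees both values can join the two records.
same_person = chatbot_site_value == other_site_value
```

Because the hash is deterministic, `same_person` is true here. The recipient never needs to reverse the hash; matching equal values across sites is enough to link activity to one account.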

Meta's _fbp cookie was the most widespread advertising identifier observed, transmitted on Character.ai, ChatOn, Genspark, Manus, PolyBuzz, and SeaArt to facebook.com. Microsoft's UET cookies _uetsid and _uetvid were sent to bat.bing.com on ChatOn, Copilot, Genspark, and SeaArt. SeaArt additionally transmitted TikTok's _ttp cookie and Pinterest's _pin_unauth first-party cookies to tiktok.com and pinterest.com respectively.

On ChatOn, Genspark, and SeaArt, the user's email address and name were captured by Microsoft Clarity as part of the rendered DOM and transmitted to *.clarity.ms/collect. Because these values appear in the chatbot interface - in greeting messages and in-conversation mentions - the session-replay tooling captures them alongside the rest of the page content. The researchers note this differs from the widget, analytics, and advertising examples because the identity information is not placed into a labeled field - it appears as readable text that the replay tool captures as a side effect.

Private modes reduce exposure substantially

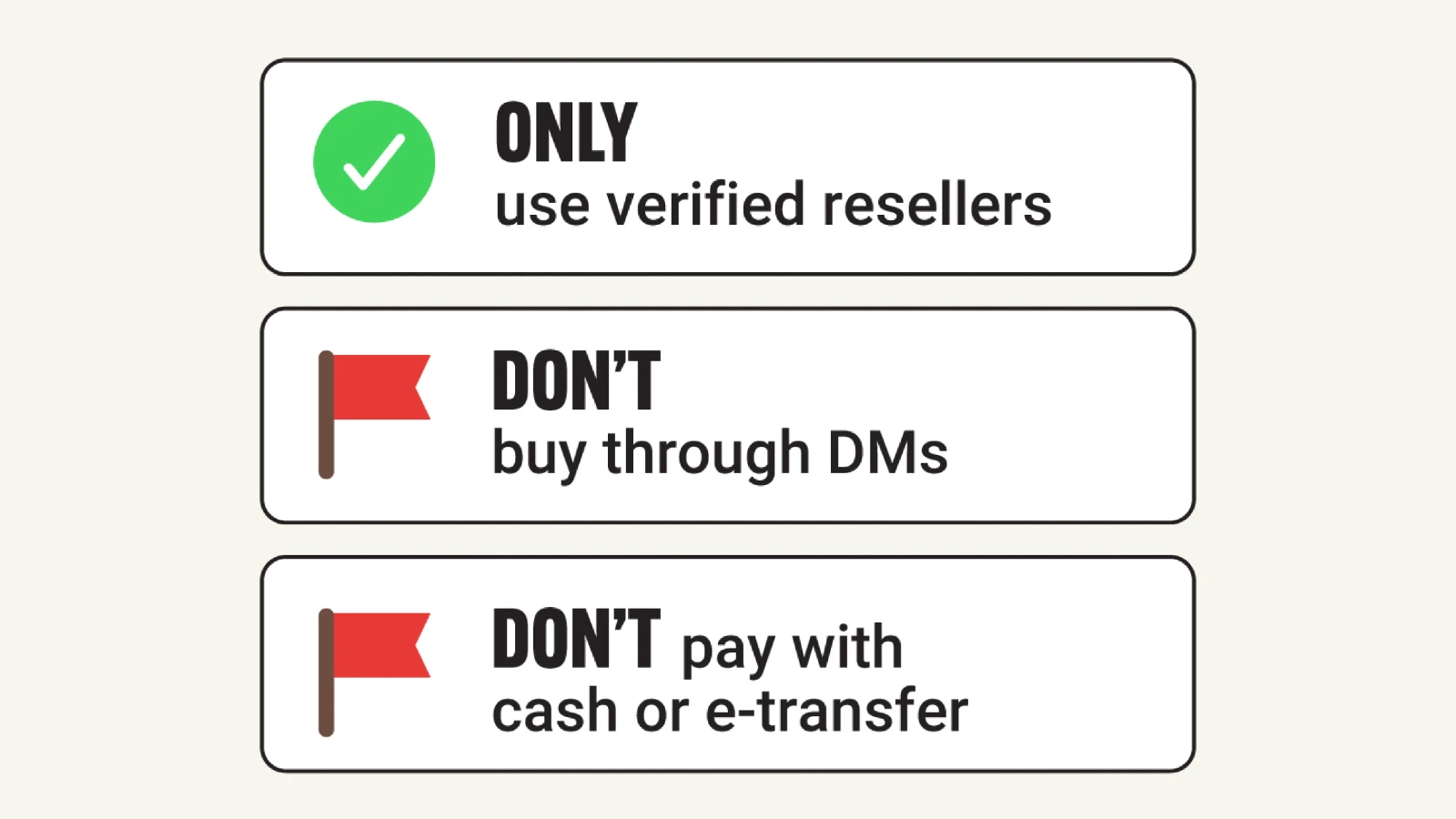

The study's private mode findings offer a partial counterweight to the broader pattern. Among chatbots offering a private or temporary chat mode, the number of distinct chatbot-to-third-party domain pairs dropped to 13, and the number of unique third-party owners fell to 3: Datadog, Mapbox, and Google. Only ChatGPT, Claude, Grok, Perplexity, and Qwen Chat sent any requests to third parties in private mode. According to the paper, no identity or content exposure to any third party was observed in any private chat session.

The disclosures shown when users open a private or temporary chat typically focus on chat retention, model training, and personalization - not tracking. The study found that private modes reduce third-party exposure even though privacy disclosures do not explicitly characterize these modes as tracking controls.

Design choices, not technical inevitabilities

The researchers conclude that most of the exposures documented result from specific design or configuration choices made by chatbot providers rather than from inherent limitations of the technology. The paper identifies several mitigations short of removing analytics or advertising entirely.

On page URLs and titles: providers can avoid placing prompt-derived text in document.title and can redact chat identifiers from URLs reported to third-party tags. On widget integrations: Genspark's exposure of the full prompt to Google Maps via the q parameter could be addressed by resolving the location query server-side and passing only coordinates or a sanitized place query to the widget. On session replay: the paper argues that session-replay tools should not be deployed on authenticated conversation pages where the rendered DOM contains both identity information and conversation text. Where they are deployed, content masking should be used to redact prompts, responses, and account fields.
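The URL mitigation could look something like the following sketch. It assumes chat identifiers appear as long opaque path segments containing at least one digit, which will not hold for every provider; the pattern, the placeholder string, and the function name are illustrative rather than taken from the paper.

```python
import re
from urllib.parse import urlsplit, urlunsplit

# Assumption: an identifier is a path segment of 16+ URL-safe characters that
# includes at least one digit. This also covers hyphenated UUIDs.
ID_SEGMENT = re.compile(r"^(?=.*\d)[A-Za-z0-9_-]{16,}$")


def redact_chat_url(url: str) -> str:
    """Replace identifier-like path segments and drop the query and fragment."""
    parts = urlsplit(url)
    segments = [
        "REDACTED" if ID_SEGMENT.match(segment) else segment
        for segment in parts.path.split("/")
    ]
    return urlunsplit((parts.scheme, parts.netloc, "/".join(segments), "", ""))
```

A provider would pass the redacted value, rather than the live address, to any tag that reports the page location. A URL such as `https://chat.example/c/3f2b8c1e-9a4d-4e7b-8f21-0c5d6e7a8b9c?ref=x` would be reported as `https://chat.example/c/REDACTED`, so the third party still sees that a chat page was viewed without receiving a pointer to a specific conversation.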

For analytics and error monitoring specifically, the paper argues providers should avoid directly attaching identifying account fields when other identifiers would suffice. The Sentry configuration on Character.ai records the client IP and transmits the user's email - neither is described as necessary for typical error monitoring.
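One way to picture this recommendation is a scrubbing step applied before a telemetry event leaves the application. The field names below echo those reported in the article, but the flat event shape, the `user_id` key, and both function names are hypothetical; this is not the Sentry or Statsig API.

```python
from typing import Any

# Assumption: these keys mirror the fields the article reports; real payloads
# may nest or name them differently.
IDENTITY_FIELDS = {"email", "name", "did", "ip_address", "user_agent"}


def _strip(value: Any) -> Any:
    """Recursively copy a value, omitting identity fields from any dict."""
    if isinstance(value, dict):
        return {k: _strip(v) for k, v in value.items() if k not in IDENTITY_FIELDS}
    if isinstance(value, list):
        return [_strip(item) for item in value]
    return value


def scrub_event(event: dict[str, Any], pseudonymous_id: str) -> dict[str, Any]:
    """Return a scrubbed copy of the event, keyed by an opaque identifier."""
    cleaned = _strip(event)
    cleaned["user_id"] = pseudonymous_id
    return cleaned
```

The opaque identifier still lets the provider group errors by account on its own side, while the monitoring vendor receives neither the email address nor an instruction to record the client IP.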

Why this matters for advertising professionals

The intersection of chatbot tracking and advertising infrastructure is directly relevant to marketing practitioners. As ChatGPT's advertising platform has expanded since its February 9, 2026 launch, questions about what data flows through AI chatbot interfaces have taken on commercial significance. OpenAI updated its US privacy policy on April 30, 2026 to formally disclose for the first time that it receives purchase data from advertisers and shares user information with marketing partners for third-party targeting - a disclosure that arrived on the same day the initial version of this study was submitted.

The study documents that advertising tags are already embedded in many AI chatbot interfaces. The 73 chatbot-to-advertising-domain pairs observed across 12 chatbots show that the tracking infrastructure enabling ad targeting is operating in these environments now. OpenAI's self-serve Ads Manager opened to all US businesses on May 5, 2026, and custom audience targeting using hashed emails and phone numbers has entered a gated rollout. The mechanism the FTC warned about - hashed email as a cross-site identifier - appears in both the study's findings and in the infrastructure being built for ChatGPT advertising.

The presence of Microsoft Clarity connects two separate threads. Microsoft Advertising required Clarity installation for all third-party publishers starting November 11, 2025, a mandate that placed session replay infrastructure across a wide range of publisher properties. The new research shows that Clarity is simultaneously embedded in multiple AI chatbot interfaces - and that on three of them, it captures and transmits readable conversation content. Microsoft Clarity has been expanding its capabilities throughout 2025 and into 2026, including the addition of AI channel groups to track traffic originating from ChatGPT, Claude, Gemini, and Perplexity.

For enterprise and agency teams conducting due diligence on AI tool deployments, the study provides concrete data on which platforms embed which third-party vendors and what categories of information reach them. The distinction the researchers draw between chatbots that list specific third parties in their privacy policies and those that omit them despite observed data flows is directly relevant to compliance assessments under GDPR, the CCPA, and other data protection frameworks that require transparency about data recipients.

Timeline

- November 11, 2025 - Microsoft Advertising requires Clarity installation for all third-party publishers, filtering non-compliant traffic as nonbillable

- January 16-17, 2026 - OpenAI confirms advertising tests for ChatGPT free and Go tiers, ending months of speculation about the company's monetization approach

- January 22, 2026 - Microsoft Clarity releases a new Bot Activity dashboard providing visibility into AI crawler traffic patterns

- February 9, 2026 - OpenAI formally launches ChatGPT advertising for Free and Go tier users in the United States

- March 2, 2026 - Criteo becomes the first formal ad tech partner to the ChatGPT advertising pilot, connecting approximately 17,000 advertiser clients

- April 30, 2026 - OpenAI updates its US privacy policy to formally disclose receipt of purchase data from advertisers and data sharing with marketing partners; on the same day, Jazlan, Wang, Vekaria, and Shafiq submit v1 of the chatbot tracking paper to arXiv

- May 5, 2026 - OpenAI opens its ChatGPT self-serve Ads Manager to all US businesses, introducing CPC bidding alongside the existing CPM model

- May 13, 2026 - Researchers publish revised version (v2) of the paper on arXiv with finalized findings

- May 14, 2026 - OpenAI's custom audience targeting feature - allowing advertisers to upload hashed emails and phone numbers - is observed in a gated rollout

Summary

Who: Muhammad Jazlan, Ethan Wang, Yash Vekaria, and Zubair Shafiq - researchers at the University of California, Davis. The chatbots studied include ChatGPT, Gemini, Claude, Grok, DeepSeek, Character.ai, Perplexity, Microsoft Copilot, PolyBuzz, Kimi, Qwen Chat, Manus, Genspark, Meta AI, Duck.ai, SeaArt, OpenRouter, Poe, Mistral, and ChatOn.

What: The first systematic measurement of web tracking on 20 popular AI chatbot platforms. The study finds that 17 of 20 chatbots share data with at least one third party during a single chat session. Three chatbots - Genspark, SeaArt, and ChatOn - transmit the plaintext content of user prompts and model responses to Microsoft Clarity through session replay. Fifteen chatbots share conversation URLs or chat identifiers with third-party advertising, analytics, or social endpoints. Several chatbots transmit user identity information including email addresses, names, and first-party cookies to third parties. Private chat modes substantially reduce third-party exposure, with no identity or content exposure to any third party observed in any private session.

When: The paper was first submitted to arXiv on April 30, 2026. A revised version (v2) was published on May 13, 2026. The study's data collection used privacy policies archived between March and April 2026.

Where: The research was conducted at the University of California, Davis. The chatbots tested are web-based services accessed through each provider's own website. The study excluded mobile applications, browser extensions, and embedded chatbot functionality. The paper is available at arXiv:2604.27438.

Why: As AI chatbots attract advertising infrastructure and replace search engines for many queries, the content users share with them has become more sensitive and more commercially valuable. Users disclose health concerns, financial situations, and personal relationships to chatbots in ways they would not to a traditional search engine. Authentication is common on these platforms, shifting tracking from browser-level identifiers toward account-level identifiers directly linked to real individuals. The paper is the first to measure what third parties actually receive during chatbot sessions - and to compare what privacy policies disclose against what network traffic reveals.
