Five of Germany's most significant media organisations published a joint position paper on 21 April 2026 calling on the federal government and European regulators to impose binding rules on how AI platforms may use journalistic content. The signatories - ARD, BDZV, MVFP, VAUNET and ZDF - represent the full breadth of the German media sector, spanning public broadcasting, newspaper publishers, magazine publishers, private commercial broadcasters and digital publishers alike. Their unusual alignment is itself the story. Public and private broadcasters rarely publish shared regulatory demands. That they have done so here signals how acute the pressure from AI platforms has become.

The paper, titled "Presse und Rundfunk fordern faire Rahmenbedingungen für eine vielfältige Informations- und Medienlandschaft im Zeitalter künstlicher Intelligenz" (Press and Broadcasting Demand Fair Framework Conditions for a Diverse Information and Media Landscape in the Age of Artificial Intelligence), sets out key points for a media regulatory framework covering AI. It identifies three areas where the signatories say regulation is urgently needed: copyright protection for editorial content, media law safeguards against AI gatekeepers, and competition law enforcement.

Big Tech as AI gatekeeper

The central argument of the paper is structural. According to the document, "due to inadequate regulation and deficits in the enforcement of applicable law, global Big Tech platforms are growing into AI gatekeepers in the already dysfunctional digital market." The signatories argue that these platforms are able to exploit editorially produced content - content created with considerable investment - and present it in their own AI information products without themselves investing in research, information gathering or journalistic work.

The consequences, according to the paper, are a shift in both attention and economic value creation that is "unacceptable from the perspectives of democracy, economic policy and media policy." The language is deliberate and pointed. It does not merely describe a commercial grievance. It frames the problem as a democratic one - a threat to the conditions that allow a pluralistic public sphere to function.

The document goes further, warning that media organisations risk becoming "pure data and input suppliers for AI systems" while journalistic content is replaced by AI-generated summaries. This is not a hypothetical concern. The structural dynamic the signatories describe, in which AI systems extract and synthesise content without compensation or attribution, is already visible in how major search and AI products operate. A Seer Interactive study covering 3,119 queries, cited in ongoing litigation in the United States, found that organic click-through rates for informational queries featuring AI Overviews have fallen 61% since mid-2024, while paid click-through rates on the same queries dropped 68%.

The three demands in detail

The first demand concerns copyright. The signatories call for full control by editorial media providers over how their content is used by AI providers and platforms. This applies specifically to the use of editorial content for training, for inference and for extended generation by generative AI systems, as well as for the development of AI-based products that compete with media providers.

According to the paper, "the decision on the use of journalistic content by AI providers and platforms must be subject to the authority of journalistic media providers." This is not merely an opt-out right. The signatories also demand enforceable rights in Germany that would compel AI platforms accessing or commercially exploiting journalistic content to provide "appropriate remuneration" to media providers. Critically, they state that this requires the disclosure of how journalistic and editorial content is being used.

The transparency demand is significant. It mirrors arguments made across multiple jurisdictions. In the United States, the bipartisan TRAIN Act introduced in July 2025 created an administrative subpoena mechanism for copyright owners to identify which of their works were used to train AI models. Germany's leading media organisations are now seeking the same kind of disclosure capability, but through European media and copyright law rather than US legislation.

The second demand concerns media law. The paper calls for "consistent protection of media diversity against the market power of digital AI gatekeepers." According to the signatories, large Big Tech platforms have for some time played an increasingly central role in the aggregation and presentation of media content. Generative AI intensifies this dynamic. The paper calls for rules on access, prominence, sender identification, non-discrimination and financing to be applied to AI information services - just as such rules apply to traditional media distribution.

The prominence requirement deserves particular attention. In broadcasting regulation, prominence rules require that certain public interest content appear in accessible positions on platforms. The signatories are arguing that equivalent obligations should apply when AI systems select and surface journalistic content - or decline to do so. Without such rules, AI systems operated by dominant platforms can effectively determine which news organisations are visible to the public, with no accountability or transparency requirement.

The third demand is for AI competition oversight to prevent AI platforms from establishing market positions that replicate or extend existing gatekeeper advantages into AI-generated information markets. The paper does not name specific companies, but the targets are unmistakable. The European Commission opened a formal antitrust investigation into Google's use of publisher and YouTube content for AI purposes on 9 December 2025, and Brussels regulators have emphasised concerns about gatekeepers establishing "unmatchable advantages" through exclusive access to training data and distribution infrastructure.

Public money, private market - two different models, one shared demand

The coalition is unusual not just in its breadth but in the economic tensions it papers over. ARD and ZDF - the two public broadcasters among the five signatories - are funded primarily through the Rundfunkbeitrag, the German broadcast licence fee. As of 2025, the fee stands at 18.36 euros per household per month. Combined, ARD and ZDF receive several billion euros annually from this mandatory public levy. Their editorial costs, including investment in digital infrastructure, are covered by public funds rather than advertising revenue or subscription income.

BDZV, MVFP and VAUNET, by contrast, represent organisations that depend on competitive private markets for their existence. Newspaper and magazine publishers in Germany sell copies, subscriptions and advertising space. Private commercial broadcasters and digital publishers - VAUNET's constituency - compete for audiences and advertising budgets in open markets. If AI platforms devalue their content and reduce their traffic, the financial consequences are direct and immediate. There is no public subsidy to absorb the shortfall.

This distinction matters for how the coalition's demands should be understood. ARD and ZDF face a different kind of problem. Their concern is not commercial survival in the ordinary sense. It is that AI platforms might substitute for public media's democratic function - that citizens might increasingly turn to AI-generated summaries for news and orientation, reducing the relevance and reach of publicly funded journalism. If AI gatekeepers control which sources are surfaced and which are not, public broadcasters face a distribution and prominence problem even where no revenue problem exists.

For the private sector signatories, the stakes are more existential. A newspaper publisher or a commercial broadcaster that loses search traffic to AI-generated summaries loses the advertising impressions and subscription conversions that fund its operations. The compensation and control demands in the paper - the call for enforceable remuneration rights and transparency over how content is used - are, for BDZV, MVFP and VAUNET, fundamentally economic demands. They are trying to prevent their business models from being hollowed out.

That public and private media have nonetheless signed the same document reflects how thoroughly the AI gatekeeper dynamic overrides the usual distinctions. Both types of organisation depend on being found, being read and being attributed. A system in which AI platforms synthesise journalism without attribution and without directing users to sources undermines both models, albeit through different mechanisms.

YouTube: Google's integrated competitor

The position paper identifies AI gatekeepers as platforms that aggregate and present media content while also developing their own AI information products. One platform that fits this description with particular precision, and which the paper conspicuously does not name, is YouTube, owned by Google since 2006.

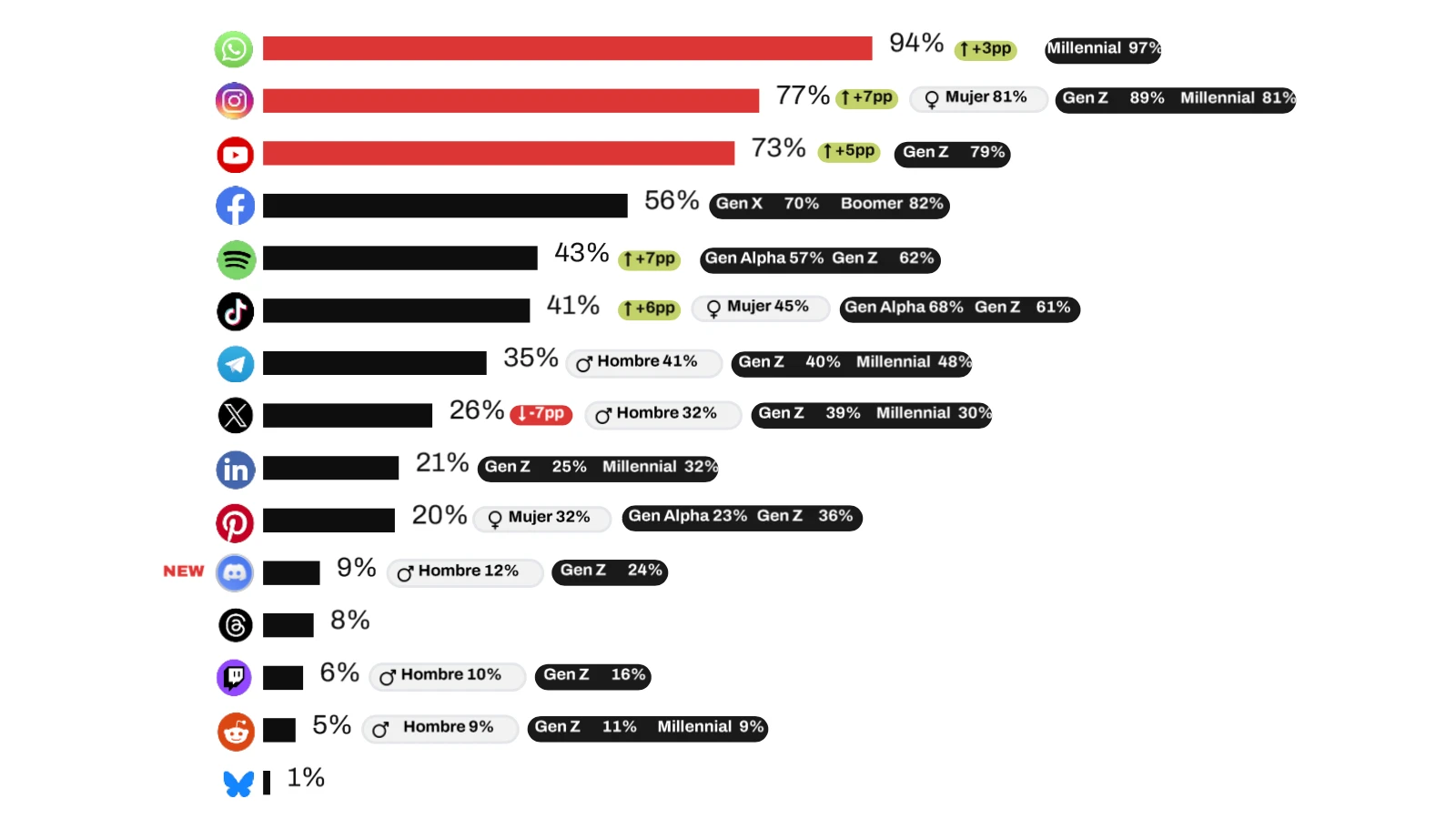

YouTube's relevance to the German media coalition's concerns runs on two tracks. First, it is a direct competitor to news video content. German public and private broadcasters invest in video journalism - live reporting, documentary content, news packages. YouTube hosts and distributes video content without the editorial cost base that broadcasters carry. As Google integrates AI into its products, YouTube content is increasingly surfaced as a substitute for traditional broadcaster content, particularly in Google Discover, the recommendation feed embedded in the Chrome browser and Android devices.

Analysis by Marfeel published in December 2025 found that 51% of the Google Discover feed in the United States, Brazil and Mexico now consists of AI Summaries, with 100% of AI Summary exits in Brazil and Mexico directing users to YouTube rather than publisher websites. Publishers receive brand visibility within AI summaries but no traffic. Vic Daniels, co-founder and executive chairman of GRV Media - which operates 35 active websites and employs over 150 content creators - described Discover as looking increasingly like "a giant ad for AI and YouTube." German broadcasters and publishers face the same structural dynamic when their journalism feeds AI summaries that close with a YouTube video.

Second, and more structurally significant, is YouTube's role in Google's AI training ecosystem. The European Commission's formal antitrust investigation opened on 9 December 2025 explicitly examines YouTube content usage alongside publisher content. According to the Commission's investigation, content creators uploading videos to YouTube must grant Google permission to use their data for various purposes, including training generative AI models. Google does not remunerate YouTube creators for this AI training use, nor does it allow uploads without granting this permission. Meanwhile, YouTube's policies explicitly prohibit rival AI developers from accessing the same content for their own model training.

This asymmetry is structurally identical to the problem the German media coalition describes with journalistic content. A broadcaster's news video uploaded to YouTube is used to train Google's AI models. Google's AI products then surface AI-generated answers and summaries that reduce traffic to the broadcaster's own platforms. The broadcaster is simultaneously a content supplier to Google's AI infrastructure and a competitor to Google's AI information products. It receives no compensation for the former and suffers commercial harm from the latter.

Research examining Google's AI Mode found that YouTube captures 1.76% of appearances in AI Mode results, while Google.com captures 7.41%. SISTRIX analysis found that AI Mode changes both the presentation and selection of results, concentrating visibility among a small number of sources. For German media organisations, the implication is that even within Google's AI systems, Google's own properties, YouTube among them, hold a share of that concentrated visibility and compete directly with external publishers and broadcasters as distribution channels.

Thomas Höppner's reaction

Competition lawyer Thomas Höppner, a partner at Geradin Partners who has been closely involved in Digital Markets Act litigation, described the publication as a rare but powerful example of alignment. According to his LinkedIn post on the day of publication, "despite divergences on other points, public and private media in Germany agree on one thing - media law must be reformed."

Höppner cited directly from the document in his post, quoting the passage on Big Tech platforms growing into AI gatekeepers, and highlighted the demand that decisions on the use of journalistic content must remain with the media providers themselves. He described the joint paper as compelling through "an accurate assessment of the situation and consistent demands." He also called for "a consistent protection of media diversity against the market power of digital AI gatekeepers" - language taken verbatim from the position paper.

His comments carry weight. Höppner is no outside observer. He has appeared before the Frankfurt Regional Court in DMA-related cases and has described German enforcement as demonstrating "both sides of private enforcement: speed and openness for abuse and DMA cases on the one hand, but little patience for underdeveloped theories on the other," as reported in PPC Land's coverage of a German court's dismissal of an AI Overview lawsuit in February 2026.

The European Parliament signal

The timing of the joint paper is not accidental. It references a specific legislative development at EU level. According to the document, the European Parliament adopted on 10 March 2026, by a large majority, a resolution titled "Report on Copyright and Generative Artificial Intelligence - Opportunities and Challenges." The signatories describe this as "a clear political signal for better protection of media against AI" and welcome it because the report "reflects the demands mentioned here and addresses them from the Parliament to the EU Commission as a call to action."

The five organisations are explicitly calling on the German federal government to actively engage in this legislative process. That the Parliament acted first, and that the German media coalition is now pushing Berlin to follow, illustrates the interplay between national and European regulatory action that characterises digital policy in this period. A joint paper by the High-Level Group on Artificial Intelligence had previously outlined how dominant platforms could leverage AI capabilities to further entrench market positions, creating barriers to entry for competitors lacking equivalent data resources.

The paper also expresses support for the plans of the Rundfunkkommission - the German broadcasting commission - to create the necessary media law provisions in the so-called Digitaler Medienstaatsvertrag (Digital Media State Treaty). This is a significant domestic legislative vehicle. State treaties governing broadcasting have historically been the main mechanism through which Germany regulates audiovisual media. Extending this framework to cover AI-generated information services would be a structural shift.

Why this matters for the marketing and advertising sector

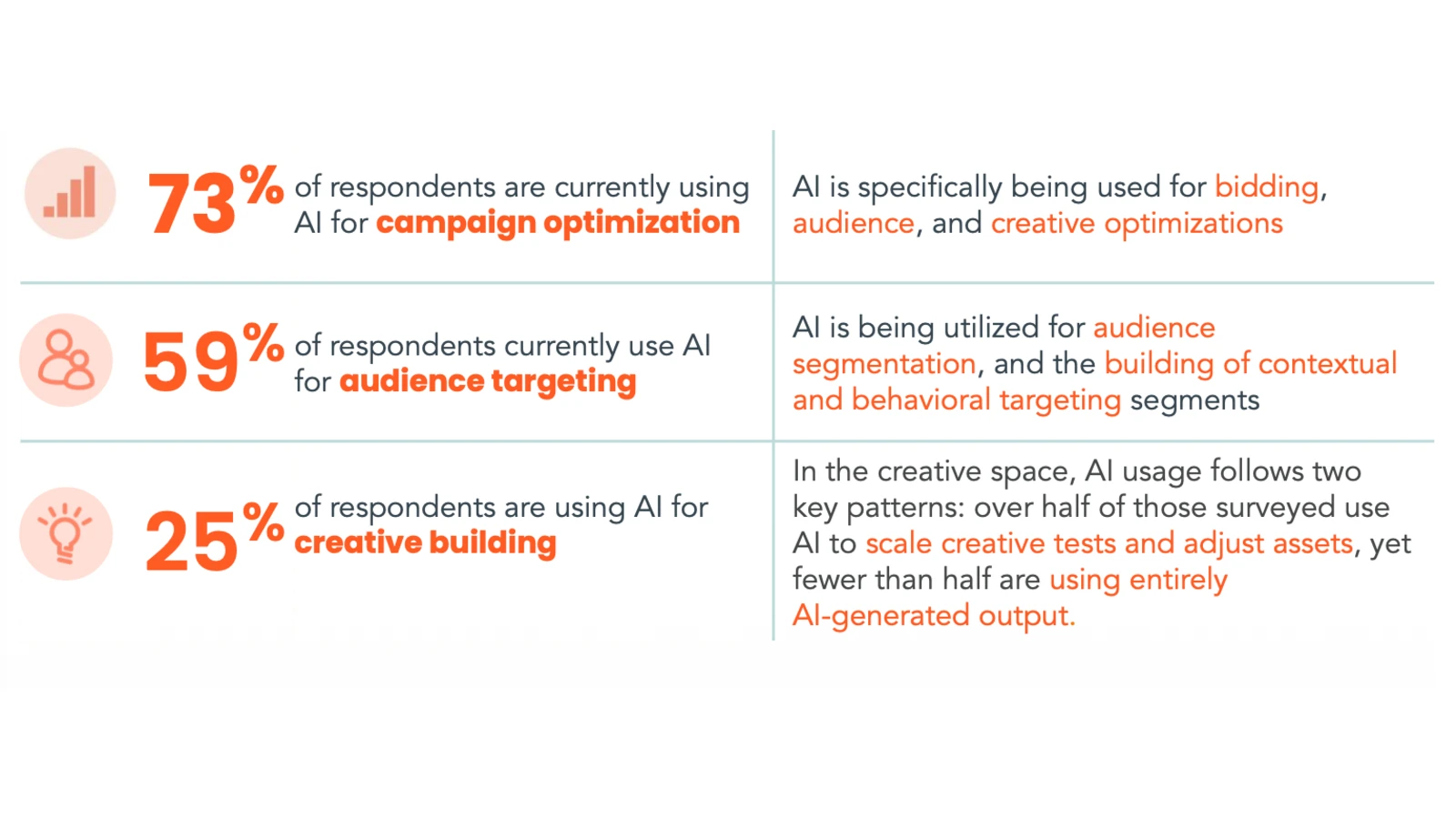

The implications for digital marketing professionals extend well beyond the media industry's immediate commercial interests. The advertising market is built on the assumption that publisher content creates audiences, and that those audiences can be monetised through advertising. If AI platforms systematically absorb the value of journalistic content without directing attention back to publishers, the supply of premium publisher inventory contracts. The consequences flow through to programmatic markets, to brand safety environments, and to the revenue base that funds editorial production in the first place.

Research shows that Google Web Search's share of traffic to news publishers fell to 27.42% in December 2025, down from 51.10% in 2023. That decline in referral traffic compresses publisher revenue at precisely the moment when AI-generated summaries are expanding. The German media coalition's demands, if translated into law, would introduce a compensation mechanism that could partially offset that structural drain, though both enforcement and the level of compensation would be contested.

For advertisers and agencies, the regulatory push matters in a second way. The three-layer framework the signatories propose - copyright, media law, competition law - closely parallels the layered regulatory landscape that marketing technology providers already navigate under the GDPR, the DSA, the DMA and the EU AI Act. The BVDW, representing over 600 German digital economy companies, flagged in October 2025 that fragmented regulatory authority structures could affect Germany's competitiveness as an AI development hub. Adding a media-specific layer, particularly one that imposes compensation and disclosure obligations on AI platforms, increases compliance complexity for every organisation operating at the intersection of AI and content.

The paper also has a direct connection to the broader EU AI Act compliance timeline. The EU AI Act's obligations for general-purpose AI models became applicable on 2 August 2025, with full enforcement powers scheduled for August 2026. The copyright compliance requirements embedded in that framework, which require AI model providers to implement policies addressing EU copyright law throughout model lifecycles, overlap directly with what the German media coalition is demanding at national level.

The EU's General-Purpose AI Code of Practice, finalised on 10 July 2025 with nearly 1,000 participants, already mandates that providers use web crawlers that follow the Robot Exclusion Protocol and implement "proportionate technical safeguards to prevent their models from generating outputs that reproduce training content protected by Union law on copyright and related rights in an infringing manner." The German paper is, in effect, demanding that these principles be strengthened with enforceable remuneration rights - not merely technical safeguards.
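To illustrate what "following the Robot Exclusion Protocol" means in practice, the sketch below uses Python's standard-library urllib.robotparser to check whether a given crawler may fetch a page under a site's robots.txt rules. The robots.txt content, the crawler names ExampleAIBot and ExampleSearchBot, and the URLs are hypothetical. It also shows the limit the German paper points to: robots.txt is a per-crawler allow/deny signal, and nothing in it expresses terms of use or remuneration.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt for a news publisher: it blocks an illustrative
# AI crawler ("ExampleAIBot") entirely, while all other agents may crawl
# everything except /private/.
ROBOTS_TXT = """\
User-agent: ExampleAIBot
Disallow: /

User-agent: *
Disallow: /private/
"""


def may_crawl(robots_txt: str, user_agent: str, url: str) -> bool:
    """Return True if robots_txt permits user_agent to fetch url."""
    parser = RobotFileParser()
    # parse() accepts the file's lines directly, so no network fetch is needed.
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


if __name__ == "__main__":
    article = "https://news.example/politik/artikel-123"  # hypothetical URL
    for agent in ("ExampleAIBot", "ExampleSearchBot"):
        print(agent, may_crawl(ROBOTS_TXT, agent, article))
```

A compliant crawler would run a check like this before each request. The outcome is binary: the publisher can refuse access, but cannot attach a price or licence condition to it, which is the gap the signatories want enforceable remuneration rights to close.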

The licensing question

Underpinning all three demands is a question that has become central to the global debate about AI and content: what constitutes fair remuneration, who determines it, and how is it enforced? The Copyright Clearance Center launched four distinct AI licensing options in March 2026, including a pay-per-use mechanism for content summarisation and internal AI re-use rights embedded in annual licences. That approach - collective licensing as a structural solution to individual rights management at scale - is one possible model for the kind of framework the German signatories are seeking.

The German paper does not specify a licensing model. What it demands is that the decision on whether and how AI platforms may use journalistic content remain with the media providers, not the platforms. This is a control argument, not just a compensation argument. It mirrors the position taken in August 2025 by Mediavine, the ad management company representing over 17,000 digital publishers, when it launched a petition arguing that AI training on copyright-protected content should not be considered fair use.

Timeline

- 10 March 2026 - The European Parliament adopts, by a large majority, a resolution on "Copyright and Generative Artificial Intelligence - Opportunities and Challenges," sending a call to action to the EU Commission on media protection

- 21 April 2026 - ARD, BDZV, MVFP, VAUNET and ZDF publish joint position paper setting out key points for a media regulatory framework governing AI, covering copyright, media law and competition rules; Thomas Höppner publishes commentary on LinkedIn welcoming the joint paper and citing its core demands

Related coverage on PPC Land:

- European Commission opens probe into Google's AI content practices (December 2025)

- EU regulators identify gatekeepers' AI advantages in data and infrastructure access (December 2025)

- Penske Media files antitrust lawsuit against Google over AI content practices (October 2025)

- German court dismisses surgeon's AI Overview lawsuit but confirms Google can be liable for false information (February 2026)

- Senate introduces bill requiring AI companies to disclose copyright use (August 2025)

- Mediavine launches petition demanding AI copyright protections (August 2025)

- CCC launches four AI licensing options, including pay-per-use for universities (March 2026)

- German digital association expresses concerns over AI Act implementation (October 2025)

- EU publishes final General-Purpose AI Code of Practice (July 2025)

- Commission releases AI Act guidelines and Meta won't sign code of practice (July 2025)

- Google Discover feeds users AI and YouTube while publishers watch traffic vanish (December 2025)

- Google breaks publishers' business models with new AI search experiment (July 2025)

Summary

Who: ARD, BDZV, MVFP, VAUNET and ZDF - five organisations representing Germany's public broadcasters, newspaper publishers, magazine and specialist publishers, private commercial broadcasters and digital publishers.

What: A joint position paper setting out key points for a media regulatory framework governing the use of journalistic content by AI platforms, covering three areas: copyright protection and fair remuneration, media law safeguards for plurality and prominence, and competition law enforcement against AI gatekeepers.

When: The paper was published on 21 April 2026. It references the European Parliament resolution of 10 March 2026 on copyright and generative AI as a legislative catalyst.

Where: The paper addresses German federal and state regulatory bodies, as well as the European Commission and European Parliament. It specifically supports the Rundfunkkommission's plans to introduce relevant provisions in the Digitaler Medienstaatsvertrag (Digital Media State Treaty).

Why: According to the signatories, inadequate regulation and enforcement failures have allowed Big Tech platforms to grow into AI gatekeepers that can exploit editorially produced content without compensation, creating shifts in attention and economic value creation that the publishers describe as unacceptable for democracy, the economy and the media sector alike.
