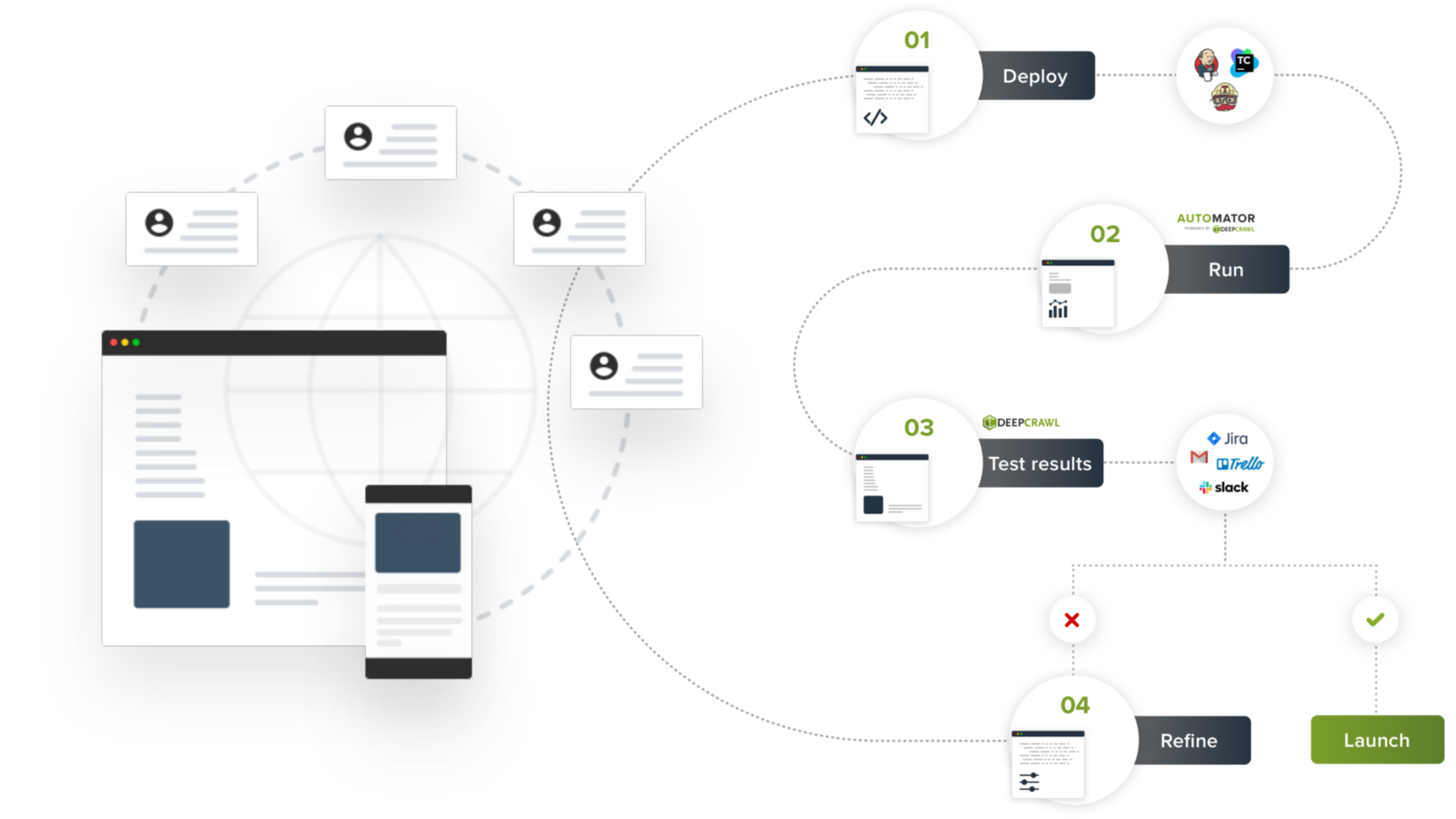

DeepCrawl this week launched a testing tool for SEO. The Automator tool enables developers to test their code for SEO impact before pushing to production.

According to DeepCrawl, Automator was designed to enable collaboration between developers and SEO/marketing teams so they can mitigate risks that may lead to a loss in site traffic. Automator runs more than 160 SEO tests across multiple pre-production and QA environments and flags any critical issues that a new release could cause.

“DeepCrawl Automator is a very reliable tool. We used Automator, for example, to check if anything is redirecting where it shouldn’t be,” said Sebastian Simon, Senior SEO Manager of Heine. “Before, we had to check everything manually, but with Automator, we can set up tests beforehand and really see what happens. It’s a great relief to know there is something that will notify us if anything has changed.”

DeepCrawl says that during four months of beta testing, Automator found SEO defects in approximately 35% of releases.

“Automator is a unique tool designed to help agile organizations protect revenue from the largest digital channel – SEO,” said Michal Magdziarz, CEO of DeepCrawl. “Especially during this time when brands’ online presence is so critical, Automator can be implemented to reduce the risks associated with a decline in rankings due to code changes which can disrupt overall site performance.”

Automator integrates with CI/CD platforms such as Jenkins, GitHub Actions, or TeamCity through native integrations, shell scripts, or a GraphQL API.
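
As an illustration only, the kind of gate a CI job might apply to SEO test results can be sketched in Python. The `SeoTestResult` type, its fields, and the `"critical"` severity label are hypothetical and do not reflect DeepCrawl's actual API or output format; the sketch only shows how a pipeline step could turn a list of results into a pass/fail exit code.

```python
from dataclasses import dataclass
from typing import Iterable, List


@dataclass(frozen=True)
class SeoTestResult:
    """Hypothetical shape of one SEO test result (not DeepCrawl's schema)."""
    name: str
    severity: str  # assumed labels, e.g. "critical" or "warning"
    passed: bool


def failing_critical(results: Iterable[SeoTestResult]) -> List[SeoTestResult]:
    """Return only the results that failed and are marked critical."""
    return [r for r in results if r.severity == "critical" and not r.passed]


def exit_code(results: Iterable[SeoTestResult]) -> int:
    """Map results to a process exit code: 1 blocks the release, 0 lets it through."""
    return 1 if failing_critical(results) else 0
```

A CI step could pass the value of `exit_code(...)` to `sys.exit`, so that a failed critical test stops the build while failed warnings are only reported.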
