Elizabeth Reid, VP and head of search at Google, sat for a candid, hour-long conversation with Bloomberg's Odd Lots podcast on April 23, 2026, hosted by Joe Weisenthal and Tracy Alloway. What emerged was one of the most detailed public accounts Reid has given of how she thinks about search, artificial intelligence, advertising, and the competitive pressure Google now faces from every direction. She has been at the company for more than 20 years. She leads the team that builds Google Search - engineering, product, design, and data science all report to her. And she arrived at the interview with answers that were, at points, more specific than what executives at her level tend to offer in public.

The conversation covered a lot of ground: why users are typing longer queries, how Google decides whether to show an AI Overview at all, why she thinks the web is not dying, how search makes money in a world of fewer clicks, what hiring software engineers looks like now that AI can write code, and whether the search box - as a concept - has a future. Along the way it touched on questions the marketing community has been asking for two years.

The person sitting in the middle of it all

Odd Lots is a Bloomberg podcast focused on finance and economics. Its hosts are journalists, not search insiders. That context shaped the interview in useful ways. They pushed on the business logic, not just the product roadmap. They asked what happens to Google's advertising revenue if people stop clicking. They asked whether query brain dumps raise privacy concerns. They asked whether the chat interface is actually more productive than traditional web browsing. Reid had to answer those questions for an audience that includes investors and economists, not just search marketers - which meant she had to be clearer about the commercial reasoning than she might have been in a product-focused setting.

Weisenthal opened with something many people in the industry feel but rarely state plainly: Google is a company trying to disrupt its own core business, which is one of the hardest things any organisation can do. The common intuition, he noted, is that incumbent companies are bad at building the thing that threatens them. Google's response - developing Gemini alongside AI Overviews and AI Mode - has been more credible than many expected. Alphabet's stock, which some analysts were shorting in late 2022 on the theory that ChatGPT would eat search's lunch, has not moved the way those bets anticipated.

A 20-year arc of AI in search

Reid pushed back, gently, against the framing that AI in search is a recent development. "AI has been in search for many years in different forms," she said. The examples she gave trace a longer arc than the current discourse usually acknowledges. BERT, the transformer-based language model Google open-sourced in 2018 and began applying to English queries in 2019 (initially about one in ten, later nearly all of them), was a significant AI deployment in search ranking. Before that, RankBrain - which arrived in 2015 and was later confirmed as the third most important ranking signal - brought machine learning to query interpretation. Before that, spell correction in the early 2000s "felt sort of revolutionary at the time" and was, in a technical sense, AI. "No one calls spellcheck AI anymore," she noted. "But like it was at the time."

The point is not trivial. It means the team building Google Search has been managing the integration of AI into a live product used by billions of people for two decades. The current period, with AI Overviews generating summaries directly in results and AI Mode offering a conversational interface for complex queries, is more visible to users than the earlier transitions - but it is not the first time the underlying search system has been rebuilt around new AI capabilities.

DemandSphere's tracker of 173 Google algorithm and AI search updates from 2000 to 2026 maps this arc precisely - from Hummingbird's semantic rewrite in 2013 through BERT in 2019, MUM in 2021, and AI Overviews launching in May 2024. Reid's account of a long, continuous AI integration into search is documented in that historical record.

Why queries are getting longer - and what that reveals

The most practically useful section of the interview for the marketing community was Reid's account of how AI Overviews and AI Mode have changed the structure of search queries. The shift is not just cosmetic. Users are typing more words, but more importantly, they are expressing different kinds of things.

Reid used the restaurant example with precision. In the keyword era, a user thinking "I need a restaurant in this location for five people, not too pricey, one vegan in the group, also bringing kids" would type "restaurants in New York." The gap between the actual question and the submitted query was enormous. It existed because users had learned, through experience, that search engines could not handle specificity. They had internalised the limitations of the system and pre-compressed their needs into keywords.

"In the old world of keywords, none of that information would be kind of spread throughout the web," Reid said. "And so you wouldn't feel confident you could just put in the question." Now, with AI Mode, they can. And they do. "They tell you the real problem. They don't take their need and translate it into what the computer understands. They try to give the computer their actual need and expect us to do the translation."

That last sentence describes a reversal of a two-decade pattern. For most of search history, the cognitive work of query formulation fell on the user. The user had to figure out what a search engine could handle and compress their actual intent into a form the engine could process. AI Mode moves that translation burden to the machine. The user asks what they actually want to know.

The consequence is that query data becomes richer. A user who types "I want a restaurant for five people including a vegan and two kids, not expensive, in the West Village on a Saturday evening" has revealed a demographic profile, a timing preference, a budget constraint, and a party composition - all without being prompted for any of it. That is a fundamentally different input signal from "restaurants New York." Reid herself confirmed in June 2025 that AI Mode queries run two to three times longer than traditional search queries. The density of commercial intent contained in those longer queries is, by extension, likely to be substantially higher.

Reid also noted an expansion in overall query volume she described as an "expansionary moment." The argument: many questions people have never get asked because the person judges the effort of searching not worth it. You have a question, you weigh how hard it would be to find a useful answer, and you let it go. AI lowers that threshold. "There's a whole bunch of curiosity that is people are not exploring, and they're not exploring because they view it as too difficult or too much time." When the expected quality of an answer rises and the effort of asking falls, those suppressed questions get asked. The result is growth in total query volume, not just redistribution of existing queries across platforms.

The falafel problem: a window into search's core tension

One of the interview's more illuminating moments came when Reid described what could be called the falafel problem. A user who types "falafel" into Google might want a definition, a recipe, a nearby restaurant, or nutritional information. All four possible intents collapse into the same single word. The search engine has to infer which applies to each individual user at that moment - and it has to do this simultaneously across millions of queries.

That is difficult with a single keyword. The system can build probabilistic models of what most people searching "falafel" want, but it cannot know what any individual person wants. The result is a ranking that serves the statistical majority while systematically failing the minority. The user who wants a definition gets a list of restaurants. The user who wants a recipe gets nutritional facts. There is no way to get it right for everyone without more information.
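The majority-versus-minority trade-off can be put in rough numbers. The sketch below is illustrative only: the intent shares are invented, not figures from Reid or Google, and the function names are hypothetical. It shows that a results page tuned to the single most common intent can still miss a large share of searchers.

```python
# Hypothetical intent shares for the one-word query "falafel".
# These numbers are invented for illustration; they are not Google data.
INTENT_SHARES = {
    "restaurant": 0.45,
    "recipe": 0.30,
    "definition": 0.15,
    "nutrition": 0.10,
}


def majority_intent(shares):
    """Return the intent held by the largest share of searchers."""
    return max(shares, key=shares.get)


def unserved_share(shares):
    """Return the share of searchers whose intent is not the majority one."""
    return 1.0 - shares[majority_intent(shares)]


top = majority_intent(INTENT_SHARES)
missed = unserved_share(INTENT_SHARES)

print(f"Results tuned for: {top}")
print(f"Searchers who wanted something else: {missed:.0%}")
```

With these made-up shares, the most common intent is still a minority of all searchers, which is the structural problem a longer, more explicit query removes.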

The same structural problem surfaced when Weisenthal described a personal frustration: searching for a person who has just appeared in the news and finding that all ten results are articles from the past 24 hours, because the recency signal dominates ranking. The user who wants background context on who this person actually is - before the news event - cannot find it because the system has correctly inferred that most people searching that name right now want the news coverage. Most do. But not all.

Reid acknowledged this is a recognised issue. "How do we figure out across all of the different intents and match them across?" She pointed to AI Mode as a partial solution: she said anecdotally that people are using AI Mode and AI Overviews for person-related queries specifically to understand who someone is independent of the surrounding news cycle. Because a user in AI Mode can ask "tell me about this person" rather than just their name, the system has a clearer signal about what kind of answer to produce. Unambiguous intent, stated directly in natural language, dissolves the ambiguity that makes keyword-based ranking so imprecise.

How Google decides when to show an AI Overview

Alloway posed a clean test case: searching for "Corgi" returns a list of links to the American Kennel Club and the Corgi subreddit. Searching for "What is a Corgi?" returns an AI Overview. Is the question mark doing the work?

No, Reid said. The question mark correlates with certain query types - those where users want a description or have a harder question a single webpage might not answer - but it is not the mechanism. The actual signal is whether the AI Overview adds value, as measured by user behavior. "We basically learn over time based on user signals, the same way we learn about when should you show the weather OneBox and when should you show local results."

The Corgi query without a question mark probably reveals something about user intent through click behavior. Most people searching "Corgi" are not trying to understand what a corgi is. They want pictures. They want to browse breed pages. They are engaging, not inquiring. An AI summary of corgi characteristics would add nothing to that experience and might displace the content the user was actually trying to reach.

By contrast, "What is a Corgi?" signals inquiry. The user wants explanation, not navigation. An AI Overview reduces the effort of getting that explanation without removing the ability to dig deeper. Reid was equally clear that AI Overviews are not shown simply because deploying AI is itself a goal. "We shouldn't give you AI for the sake of giving AI. The point is for it when we think it adds value to people." That selectivity shows up in placement data too. Research based on 10 million search engine results pages found that only 8.64% of AI Overviews appear outside position one, which suggests that when the feature does fire, it almost always leads the page rather than being slotted in lower down.

As models improve, Reid said, the system can handle more cases without degrading quality. "We don't want to put in a review if we think it's not going to be high quality. So as the models have gotten more powerful, we can cover more cases." That trajectory - cautious expansion tied to model capability - is consistent with how Google has described its AI Overviews rollout across 200 countries and 40 languages since May 2024.

The click question: what actually happens to traffic

The central anxiety in the broader search industry - the one the hosts circled back to repeatedly - is the relationship between AI answers and web traffic. If users get answers directly on the results page, they stop clicking. If they stop clicking, publishers lose traffic. If publishers lose revenue, they produce less content. Less content means a worse web, which eventually harms the search engine that depends on it.

Reid's response moved through several layers. First, she distinguished between query types. For informational queries where the user just wants a fact - a temperature, a year, a definition - the click was never particularly valuable. The user bounced immediately after confirming the information. "If all you were going to do was go to the webpage, see the fact, and immediately click back, you're going to spend like a half a second on the page." Eliminating that visit does not harm publishers meaningfully because it was not generating engagement, time-on-page, or any secondary action.

Second, she argued that deeper engagements are preserved. Users who were going to spend five minutes on an article still go there. The AI Overview helps them identify the right article faster, reducing the "bounce clicks" that happen when users click the wrong result first. "I might help you point to the right page so we see fewer bounce clicks." Fewer low-quality clicks, same number of high-quality ones - that is Google's stated position on the traffic question.

Third, she raised the point that ads appear on fewer than a quarter of all queries. Most searches - informational, navigational, curiosity-driven - never showed ads to begin with. The AI Overview answering a question about the Strait of Malacca does not displace an advertising opportunity because that query never had one. This is Reid's clearest answer to the business model question: many queries AI Overviews handle were always outside the commercial inventory.

The industry data tells a more complicated story. Ahrefs documented in February 2026 that AI Overviews now correlate with a 58% reduction in click-through rates for top-ranking pages, roughly 1.7 times the 34.5% decline documented in April 2025. Google's Network advertising revenue declined 1% year-over-year to $7.4 billion in Q2 2025, the first sign that publisher monetisation through Google's advertising network is absorbing real pressure even as Google's own search revenue grows. Those two data points together describe a system where Google's commercial position holds while the publishers that supply the content face a different trajectory.
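Because these are relative reductions rather than percentage-point drops, a quick worked example helps. The sketch below applies the two reported figures (34.5% and 58%) to a baseline click-through rate. The baseline of 40% is a hypothetical value chosen only for illustration; it does not come from Ahrefs or Google.

```python
def ctr_after_reduction(baseline_ctr, relative_reduction):
    """Apply a relative (not percentage-point) reduction to a click-through rate."""
    return baseline_ctr * (1 - relative_reduction)


# Hypothetical baseline CTR for a top-ranking page (assumption, not source data).
BASELINE_CTR = 0.40

# Relative reductions reported in the article.
REDUCTION_APRIL_2025 = 0.345
REDUCTION_FEBRUARY_2026 = 0.58

april_2025 = ctr_after_reduction(BASELINE_CTR, REDUCTION_APRIL_2025)
february_2026 = ctr_after_reduction(BASELINE_CTR, REDUCTION_FEBRUARY_2026)
ratio = REDUCTION_FEBRUARY_2026 / REDUCTION_APRIL_2025

print(f"CTR after a 34.5% reduction: {april_2025:.1%}")
print(f"CTR after a 58% reduction: {february_2026:.1%}")
print(f"Later decline relative to earlier decline: {ratio:.2f}x")
```

Whatever baseline is plugged in, the later decline works out to about 1.7 times the earlier one.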

Ads in an AI-first search engine

How does a search engine that answers questions without clicks make money? Reid addressed this directly and with more granularity than usual.

Start with the commercial intent that survives. An AI Overview can explain that multiple merchants sell the shoes a user is researching at prices ranging from $80 to $140. That is useful. But the user still has to choose a merchant and complete a transaction. "The answer doesn't buy the pair of shoes. You actually have to buy the shoes." The decision moment - selecting between competing commercial options - does not disappear because a summary appeared above it.

Next, consider the targeting opportunity created by richer query data. Short, under-specified keywords carry weak intent signals. A user searching "shoes" might want anything. A user explaining in natural language that they need waterproof trail running shoes in a wide fit for a trip next month has communicated substantially more. That specificity enables better targeting. Conversational, contextually rich queries allow the system to infer where in the purchase funnel the user sits. "If it's more of a conversation, they're going more down funnel. You can actually create better ads," Reid said.

She also pointed to the expansion of total query volume as a new source of advertising inventory. If the number of searches grows because AI lowers the threshold for asking questions, and if some fraction of those newly asked questions carry commercial intent, then new advertising opportunities appear that did not exist before. This is the "expansionary moment" framing Sundar Pichai used in Alphabet's Q3 2025 earnings call. According to that call, AI Mode had reached more than 75 million daily active users and AI Overviews were driving more than 10% additional queries globally for applicable search types.

The monetisation is already in motion. Adthena detected 13 instances of ads appearing inside Google AI Overviews in November 2025 across 25,000 monitored search engine results pages, a frequency of 0.052%. It was the first documented third-party evidence of Google monetising AI-generated answers outside controlled testing. By April 2026, Adthena's analysis of 29.1 million queries found the frequency had grown to 0.12% in the United States. Sponsored store listings have been observed inside AI Mode product panels, and Google expanded AI Overview ads to 11 countries beyond the United States on December 19, 2025, without a formal press release.
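The Adthena figures are easy to sanity-check. The short sketch below recomputes the November 2025 frequency from the reported counts and compares it with the 0.12% reported for April 2026. The helper name is hypothetical, and the two samples differ in size and scope, so the growth multiple should be read as a rough indication rather than a like-for-like measurement.

```python
def frequency_pct(hits, total):
    """Return hits as a percentage of total."""
    return hits / total * 100


# November 2025: 13 ad instances across 25,000 monitored results pages.
nov_2025_pct = frequency_pct(13, 25_000)

# April 2026: frequency reported directly as a percentage (United States).
apr_2026_pct = 0.12

# Rough growth multiple between the two reported frequencies.
growth = apr_2026_pct / nov_2025_pct

print(f"November 2025 frequency: {nov_2025_pct:.3f}%")
print(f"April 2026 frequency: {apr_2026_pct:.2f}%")
print(f"Growth multiple: {growth:.1f}x")
```

On those figures the observed frequency slightly more than doubled in about five months, from a very low base.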

Reid pointed to a historical parallel when the hosts asked how new ad formats could emerge. "Some number of years ago people said, how can you make money from a feed? Well, Instagram ads are very popular." New formats develop as new interfaces develop. The underlying commercial need - users wanting to choose between products, hear about options, compare merchants - does not disappear because the surrounding interface changes.

The habits question: coming back more often

Reid described how Google measures success in a way that differs from simple session metrics. The question is not just whether users engage more within a session. It is whether they return to Google more often. "Does it cause people to come to search more often, not just use search more often, but come more often?" Taking out your phone, unlocking it, opening a browser, and navigating to a search engine is a friction-laden sequence of decisions. If users choose to do it more frequently, that signals genuine utility - "hiring Google more often," in her phrase.

She also offered a specific observation about latent demand. People do not ask all the questions they have. The gap between the questions that occur to someone and the questions they actually submit to a search engine is large, because every search involves a cost-benefit calculation. "You make a calculation when questions go through your mind of like, is it worth spending any time to figure out the answer to this question? And if the answer is no, then you just don't ask the question." AI lowers the expected cost and raises the expected quality. Some questions that previously fell below the threshold now get asked. That is not cannibalization of existing search volume - it is growth from previously suppressed curiosity.

This framing also appeared in how Reid described multilingual access. In many countries, web content is not available in the user's primary language. AI Overviews, generated by a language model rather than assembled from indexed documents, can surface information that was previously inaccessible. "AI Overviews, because it's using an LLM, can be more multilingual than just the web corpuses by default." A Hindi speaker can now access information that was effectively invisible behind a language barrier. That expansion of access is both a product justification and, over time, a commercial one.

User behavior across Google's surfaces

Alloway asked about something that affects many users: the same person switching between google.com, AI Mode, and the Gemini app at gemini.google.com. Are these distinct user populations? Do people use them for different purposes? What does Google see?

Reid confirmed substantial overlap - many users actively use multiple surfaces, and some use AI products that are not Google's at all. The pattern she described follows a logic of purpose. Informational queries - looking something up, understanding a topic, finding a fact - tend to go to search or AI Mode. Creative and productivity queries - rewriting a paragraph, generating a draft, reformatting content - tend to go to Gemini. The divide is not just about query complexity but about what cognitive task the user is offloading.

Within the search side, she distinguished between AI Mode and the traditional results page. Users who go directly to AI Mode tend to do so for "more complex, longer questions" where they expect follow-up turns. Conversational back-and-forth, iterative refinement, multi-step research - those behaviors fit AI Mode. Users who prefer the traditional results page often know they want to navigate to a specific website. "If your goal is to just get to a particular web page, you're more likely to start with the search result page."

She also addressed the fact-checking pattern that has become increasingly common: users ask a question in an LLM, get an answer, and then go to Google to verify it. "I think people have used Google as a place to fact check information pre-LLMs for a number of things. A friend tells them something and you sort of come..." That use of search as a verification layer for AI-generated claims is, in her account, a long-standing behavior applied to a new source. It is not a disruption - it is a familiar pattern finding a new context.

Hiring engineers in a world of AI-generated code

One of the interview's more unexpected turns came when the hosts asked about software engineering hiring. Weisenthal, experimenting with tools like Claude Code, wanted to know whether Google's technical interviews look different now that AI can write code on demand.

Reid gave a careful answer that distinguished between two separate problems. The first is straightforward: if an AI tool can generate the answer to a standard interview question, the interview no longer measures what it was designed to measure. You need questions that probe reasoning, not output. That probably means in-person components, verbal explanation of thought process, and questions about how a candidate approaches ambiguity rather than whether they can produce a syntactically correct function. "You need to make sure that that's actually what your assessment is capturing."

The second problem is more subtle. AI tools are genuinely powerful, and an engineer who uses them well is more productive than one who does not. The interview process needs to assess fluency with AI tools, not just code quality in isolation. But what fluency looks like changes rapidly. "What was possible with the tools six months ago, let alone two years ago, is different than what's possible now. And in six months it will be different." Designing an interview for AI fluency is hard when the definition of fluency keeps shifting.

Reid drew an analogy to earlier transitions: when integrated development environments became standard, interview questions had to evolve. When engineers moved from assembly language to higher-level languages, the relevant skills changed. "It's just that it's happening very fast." The result is a hiring process in genuine flux - not because Google has not tried to adapt, but because the target keeps moving.

Token leaderboards and the limits of proxy metrics

The hosts raised a specific reference: an article about Meta employees competing on a token usage leaderboard, with some apparently burning compute for its own sake to demonstrate AI engagement. Weisenthal found it bewildering. Reid's response was measured but direct.

"If you use them blindly, you're going to run yourself into trouble," she said about proxy metrics. A manager who treats token consumption as a performance signal without applying judgment will get employees running background jobs to inflate the numbers. The metric stops measuring what it was supposed to measure as soon as people optimise for it directly.

But she did not dismiss the underlying impulse entirely. There is a real problem with employees who are not engaging with AI tools at all, even when those tools can improve their work. The token metric, noisy as it is, at least identifies who is and is not experimenting. "Go look at it and understand as a place of where to look. Don't use it as a final judgment." That framing - noisy signal, use with judgment, do not mechanise - is probably the most defensible position available to anyone managing technical teams through a rapid capability shift.

She also offered a more fundamental observation about the pace of change. "People have to learn what's possible. They're doing different jobs. The tech is changing." The expectation that AI tool use should be fully optimised immediately, with no experimentation or wasted effort, misunderstands how new technology gets integrated into working practice. Some inefficiency in early adoption is not a bug - it is how people learn what the tools can do.

Will there be one box or many?

The interview ended on the question that has been generating the most speculation: does the search box as a concept survive? If everything can be handled by a personal agent, if the operating system becomes conversational, if the friction of navigating browser tabs and typing queries is eliminated by AI that understands context and intent - does Google Search still exist as a distinct product?

Reid declined to make a confident prediction. But her reasoning was instructive. She pointed to the historical record of device proliferation: the laptop arrived, then the phone, then the watch, then glasses. Each new form factor added to the ecosystem without eliminating the ones before it. "We haven't so far replaced all of the old ones." The phone displaced some desktop usage but did not eliminate the desktop. The watch supplemented both without replacing either. New interfaces accumulate; they do not simply replace.

Her argument is that interfaces multiply and specialise rather than converge. Different tasks fit different input modes. Editing a specific item in a long list is faster with a visual interface than a conversational exchange. "A chat interface is a super slow way to go" for that kind of task. Requesting a quick navigation to a website is faster with a search bar than describing your intent to an agent. But planning a complex research project, or figuring out what restaurants to choose for a group with multiple dietary restrictions, might genuinely be better in conversational mode. No single interface dominates all tasks.

She made the same point about Google's own surface proliferation. Google has been running both the Google app and Chrome for years - both allow search and browsing, both serve the same basic function - and neither has absorbed the other. The user populations have different preferences that have proven resistant to consolidation. "You can't necessarily convince either population that they want to stop using one app and just switch to the other app." The same logic applies at a larger scale: YouTube search, Google Maps, traditional search, AI Mode, and the Gemini app each serve user populations whose habits are distinct enough to persist.

Whether those will eventually converge into a single product is genuinely unknown, she said. "I don't know what life will be like in five years." What she was confident about is the directional quality of the change: less friction, more personalisation, more adaptability to whatever device or form factor the user is on. "It should feel much more personal. It should feel much more dynamic. It should feel much more ambient and available to you."

A December 2025 test documented on PPC Land showed Google already building bridges between its surfaces - an "Ask Anything" button at the bottom of expanded AI Overviews directing users into AI Mode while maintaining query context. That is not consolidation, but it is friction reduction between surfaces, which is the same directional logic Reid described.

AI slop: a problem as old as search itself

The final substantial topic was content quality. The hosts raised AI-generated low-effort content flooding the web, and whether it was degrading what search could surface. Reid reframed the problem immediately. Before AI-generated slop, there was human-generated slop. The web has always contained vast quantities of low-quality content designed to rank rather than to inform.

The hosts then proved the point live on air, finding a 2011 article from Mahalo.com - Jason Calacanis's content farm startup - giving instructions on how to play the xylophone. Step one: decide if you want to play. Step two: get a xylophone. Step three: learn to read sheet music. Step four: practice. Weisenthal called it a "steaming pile of garbage." It had existed on the web for 15 years. It had nothing to do with AI.

The relevant question, Reid argued, is not how much poor-quality content exists on the web. It is whether Google can find and surface the good content that also exists. "What doesn't really matter at some level is how much slop is on the web, so much as, is there great content on the web and can you surface it?" Google crawls many more pages than it indexes and indexes many more than it surfaces. The filtering pipeline is designed for precisely this problem, and the financial incentives driving spam mean the filtering never finishes - but it has been operating continuously for more than 20 years.

The practical implication for publishers producing quality content is that the competition is not AI-generated articles in general. It is the subset that ranks well despite low quality. Google's position is that the detection systems it has always run against human-generated spam are now being applied to AI-generated spam as well. Reid did not claim the system is perfect. She claimed it is working, continuously, and that the volume question is less important than the surfacing question.

What this all means for marketers

The interview was not designed as a briefing for the marketing community. But it contained more operational signal than many explicitly marketing-focused presentations do.

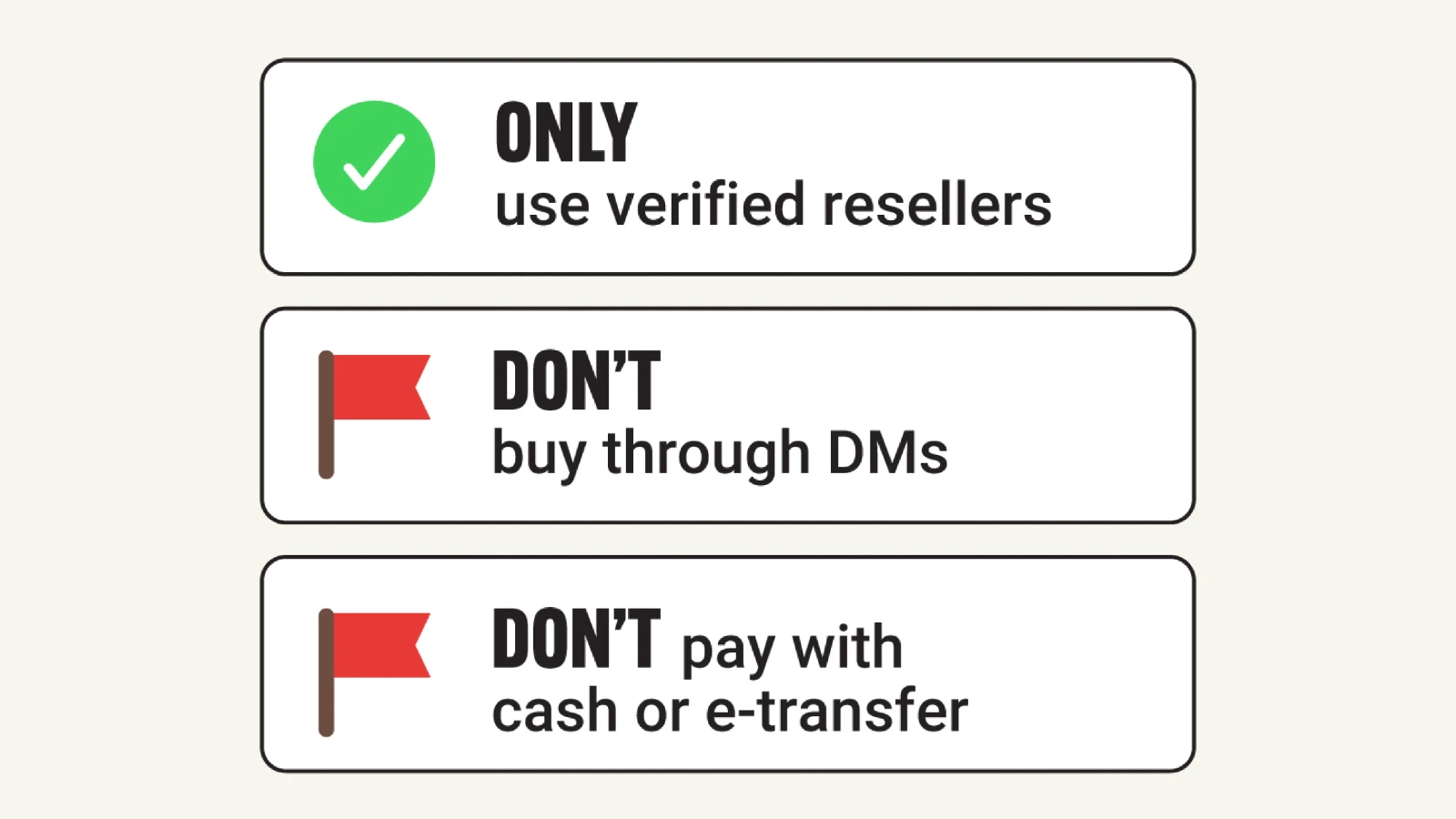

The shift to longer, natural-language queries is not a trend to monitor - it is already happening at scale, driven by product decisions Google has deliberately made and by user behavior responding to those decisions. Campaigns built around short-tail keyword matching are encountering users who are now operating in a mode where they express full intent. The keyword and the intent are no longer the same unit.

The monetisation of AI search surfaces is progressing faster than the public announcements suggest. The frequency of ads appearing inside AI Overviews grew from 0.052% in November 2025 to 0.12% by April 2026. Sponsored store listings are visible inside AI Mode product panels. The commercial architecture is being built incrementally, without formal announcements, and advertisers who are not monitoring placement data will not know where their ads are actually appearing.

The question of which Google surface a given user is on now carries strategic weight. Reid's description of distinct behavioral patterns across surfaces is a signal that audience strategy needs to account for surface-level differences rather than treating Google as a monolithic channel. The users doing conversational research in AI Mode are not the same population, in that session, as the users navigating to a specific website via the traditional results page. The commercial intent profile is different. The ad formats that reach them are different. And the optimization logic that governs ranking in each surface is different.

Finally, Reid's claim that AI is expanding the total volume of questions people ask - by lowering the cost of asking - is a commercial argument that deserves scrutiny from the marketing side. If true, it means query volume data from 2022 and 2023 understates the actual information need that existed then and was simply not being expressed. That gap - between the questions people had and the questions they asked - is now closing. The implications for demand forecasting, keyword strategy, and content planning are real, even if the exact magnitude is not yet measurable.

Timeline

- October 2015 - RankBrain brings machine learning to Google Search query interpretation

- October 2019 - BERT introduced to Google Search, initially affecting about one in 10 US English queries; Google describes it as its biggest leap forward in search in five years

- May 14, 2024 - Google launches AI Overviews in search results: Google Search AI Overviews: understanding the feature and how to disable it

- October 2024 - Google begins testing ads within AI Overviews for mobile users in the United States

- March 2025 - Google introduces AI Mode as a dedicated conversational search interface

- May 1, 2025 - AI Mode waitlist lifted; available to all US users over 18

- June 25, 2025 - Liz Reid confirms AI Mode queries run 2-3x longer than traditional search; advertising rollout begins: Google search head reveals "game system" approach as AI mode begins advertising rollout

- July 18, 2025 - Research across 10 million SERPs documents only 8.64% of AI Overviews appear outside position one: Google's AI Overviews appear outside top position only in 8.64% of searches

- July 23, 2025 - Alphabet Q2 2025 results; Google Network advertising revenue declines 1% to $7.4 billion: Google Network advertising revenue declines 1% as AI features reduce publisher traffic

- October 29, 2025 - Alphabet Q3 2025 earnings; AI Mode reaches 75 million daily active users; AI Overviews drive 10%+ additional queries; consolidated revenue $102.3 billion: Google executives hint at a unified AI search interface

- November 13, 2025 - Court filings reveal Google uses FastSearch to generate AI Overviews, with acknowledged lower quality than fully ranked results: AI Mode, FastSearch, and the antitrust case that exposed how Google works

- November 24, 2025 - Adthena becomes first platform to detect ads inside Google AI Overviews; 13 instances across 25,000 SERPs at 0.052% frequency: Adthena detects ads in Google AI Overviews across 25,000 searches

- December 1, 2025 - Google tests AI Mode access directly from AI Overviews via "Ask Anything" button: Google tests AI Mode access directly from search results page

- December 19, 2025 - Google expands AI Overview ads to 11 countries without formal announcement: Google quietly expands AI Overview ads to 11 countries without fanfare

- February 4, 2026 - Ahrefs documents AI Overviews correlating with 58% reduction in CTR, nearly double April 2025 figure: Google's AI summaries now swallow 58% of clicks that once went to websites

- March 6, 2026 - Liz Reid gives interview on Access podcast on AI Overviews growth and personalisation: Google's Liz Reid on why search won't die when AI answers everything

- March 25, 2026 - Sponsored stores spotted inside Google AI Mode product panels: Sponsored stores and quick web results spotted inside Google AI Mode

- April 18, 2026 - DemandSphere publishes tracker of 173 Google algorithm and AI search updates from 2000 to 2026: DemandSphere maps 25 years of Google algorithm and AI search changes

- April 2026 - Adthena analysis of 29.1 million queries finds AI ad auction competition up 35% year-over-year; US AI ad frequency now 0.12%: Adthena's 29M-query report reveals what's actually working in AI search ads

- April 23, 2026 - Bloomberg Odd Lots publishes interview with Liz Reid; 1,129 views on day of publication

Summary

Who: Elizabeth Reid, VP and head of search at Google, speaking with Bloomberg Odd Lots hosts Joe Weisenthal and Tracy Alloway. Reid has been at Google for more than 20 years and leads the search team responsible for engineering, product, design, and data science.

What: A wide-ranging interview covering the structural shift from keyword to natural-language queries driven by AI Overviews and AI Mode; how Google decides when to display AI-generated summaries; the commercial logic of advertising in a lower-click search environment; user behavior patterns across Google's multiple surfaces; the evolution of software engineering hiring under AI; token usage as a productivity metric; and whether the search box as an interface concept has a future.

When: The Bloomberg Odd Lots episode was published on April 23, 2026, recording 1,129 views on the day of publication.

Where: Published on the Bloomberg Podcasts YouTube channel and distributed through Bloomberg's podcast and newsletter network. The issues it addresses affect Google's global search operations across more than 200 countries and 40 languages.

Why: Reid holds direct authority over how Google Search integrates AI features. Her statements on query length growth, the logic behind AI Overview deployment, the advertising opportunity in conversational search, the distinctions between Google's multiple surfaces, and the future of search interfaces carry direct implications for digital marketers, publishers, and advertisers whose businesses depend on understanding how Google's systems work and where they are heading.
