Microsoft Clarity moved its Citations feature into general availability on May 13, 2026, giving publishers and brands a structured way to measure how content appears inside AI-generated answers. The announcement was made on the Clarity blog by Ihab Rizk, a product manager on the Clarity team, and is accompanied by documentation on Microsoft Learn that was last updated on May 12, 2026.

The release marks a concrete shift in what web analytics can measure. Rather than counting page views or tracking how users navigate a site, Citations focuses on a stage of discovery that precedes any human visit at all - the moment an AI system retrieves, evaluates, and references content before composing a response.

What the feature measures

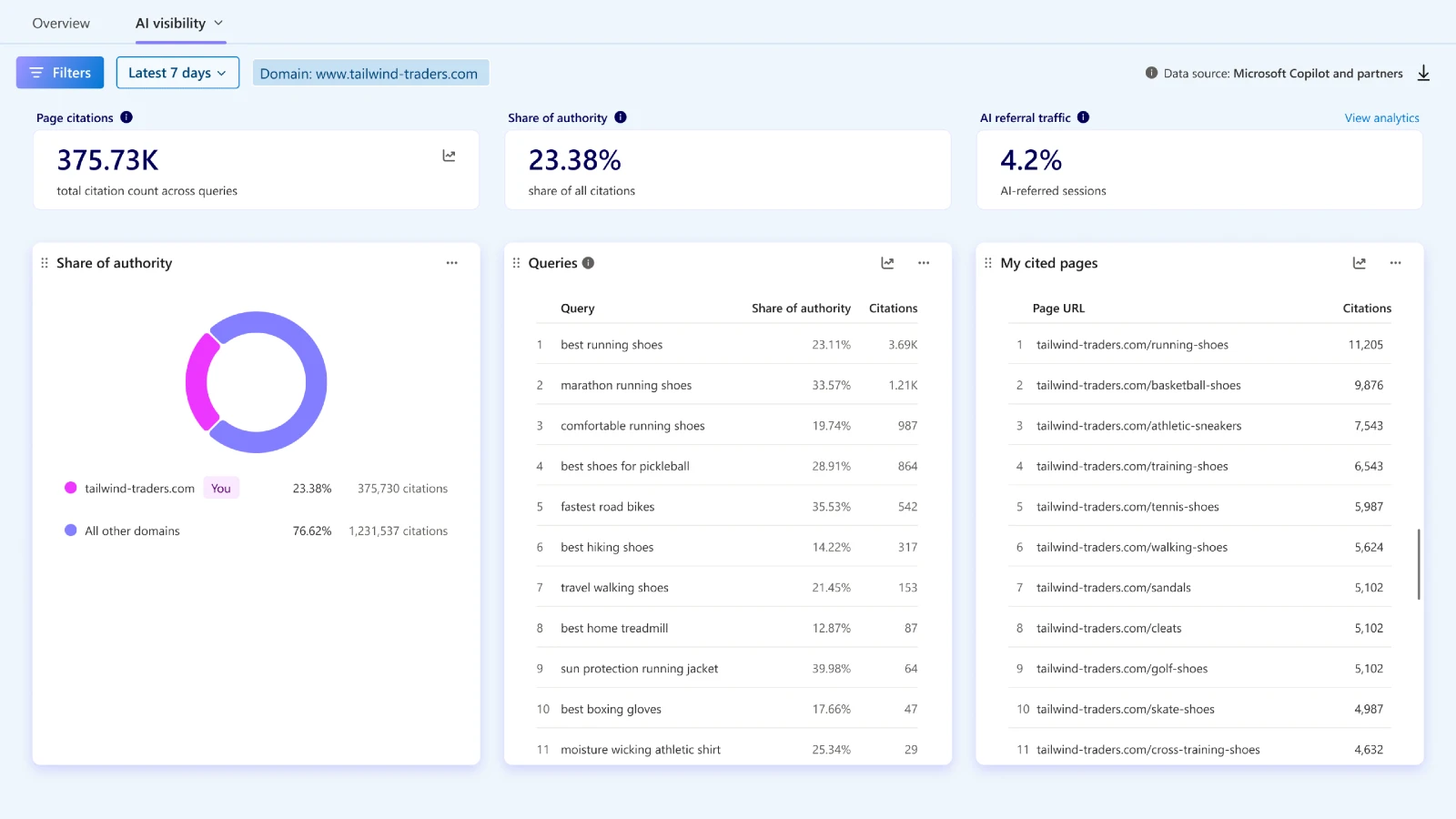

According to the Clarity documentation, the Citation dashboard sits inside the platform's AI Visibility section, accessible through the path Dashboards > AI Visibility > Citations. The dashboard aggregates citation activity across four primary metrics.

Page citations represent the total number of times pages from a domain were referenced in AI-generated answers during a selected period. The count includes multiple citations within the same answer, meaning a single AI response can contribute more than one citation to the total.
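The counting rule can be sketched in a few lines of Python. This is an illustration only: Clarity does not document a raw export in this shape, so the `answers` structure, the `page_citations` helper, and the example URLs are all assumptions made for the sketch.

```python
from collections import Counter
from urllib.parse import urlparse


def page_citations(answers, domain):
    """Count citations per URL for one domain.

    `answers` is a hypothetical list in which each item holds the URLs
    cited by a single AI-generated answer. Every cited URL counts, so one
    answer can add more than one citation to the total.
    """
    counts = Counter()
    for cited_urls in answers:
        for url in cited_urls:
            if urlparse(url).hostname == domain:
                counts[url] += 1
    return counts


# Hypothetical data: two AI answers, the first citing the domain twice.
answers = [
    ["https://example.com/a", "https://example.com/b", "https://rival.com/x"],
    ["https://example.com/a"],
]

counts = page_citations(answers, "example.com")
total_page_citations = sum(counts.values())
print(total_page_citations, dict(counts))
```

In this example the first answer contributes two citations and the second contributes one, so the total is three even though only two answers were generated.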

Share of authority is a competitive metric. According to the documentation, it is calculated as a domain's citations divided by total citations from all domains, applied at a daily level. If a domain is cited for a query on a given day, all citation instances for that query on that day are included. If the domain is not cited on a given day, that day is excluded from the calculation. The result is a figure that focuses specifically on active participation, which the documentation notes may produce higher values compared to broader calculations that do not filter for participation. A high share signals strong presence in AI-generated answers for the queries where the domain competes; a lower share points to topics where other domains are being selected more often.
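A minimal Python sketch of that participation filter follows. It rests on assumptions: the documentation describes the rule in prose only, so the record layout, the `share_of_authority` function, and the way daily buckets are summed are one plausible reading rather than Clarity's actual implementation.

```python
from collections import defaultdict


def share_of_authority(citations, domain, participation_only=True):
    """Domain citations divided by all citations, bucketed by (day, query).

    `citations` is a hypothetical iterable of (day, query, cited_domain)
    tuples, one per citation instance. With `participation_only=True`,
    buckets in which the domain was never cited are left out entirely.
    """
    buckets = defaultdict(lambda: [0, 0])  # (day, query) -> [own, all domains]
    for day, query, cited_domain in citations:
        bucket = buckets[(day, query)]
        bucket[1] += 1
        if cited_domain == domain:
            bucket[0] += 1

    own_total = all_total = 0
    for own, all_domains in buckets.values():
        if participation_only and own == 0:
            continue  # domain not cited for this query on this day
        own_total += own
        all_total += all_domains
    return own_total / all_total if all_total else 0.0


# Hypothetical data: the domain is cited on day one but not on day two.
citations = [
    ("2026-05-01", "sensitive skin routine", "example.com"),
    ("2026-05-01", "sensitive skin routine", "example.com"),
    ("2026-05-01", "sensitive skin routine", "rival.com"),
    ("2026-05-01", "sensitive skin routine", "other.com"),
    ("2026-05-02", "sensitive skin routine", "rival.com"),
    ("2026-05-02", "sensitive skin routine", "rival.com"),
]

filtered = share_of_authority(citations, "example.com")
broad = share_of_authority(citations, "example.com", participation_only=False)
print(f"filtered={filtered:.2f} broad={broad:.2f}")
```

With this data the filtered figure is 2 of 4 citations, while the unfiltered figure is 2 of 6, which illustrates why excluding non-participation days tends to produce a higher share.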

AI referral traffic tracks the percentage of site sessions that originated from an AI assistant. According to the documentation, the calculation divides AI-referred sessions by total sessions for the selected period. This metric connects citation activity to real visitor behaviour - when an AI system cites content and a user then navigates to the site, that session is captured here.
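As a worked example of that ratio, the short Python sketch below computes the percentage from two session counts. The function name `ai_referral_share` and the sample numbers are hypothetical; only the formula comes from the documentation as described here.

```python
def ai_referral_share(ai_sessions: int, total_sessions: int) -> float:
    """Return AI-referred sessions as a percentage of all sessions."""
    if total_sessions <= 0:
        return 0.0  # avoid dividing by zero for an empty period
    return 100.0 * ai_sessions / total_sessions


# Hypothetical period: 120 AI-referred sessions out of 16,000 in total.
share = ai_referral_share(120, 16_000)
print(f"{share:.2f}% of sessions came from AI assistants")
```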

Grounding queries are the queries that AI systems used to retrieve content before generating an answer. These may differ materially from the phrases users typed. According to Clarity's documentation, AI systems process user input and translate it into structured retrieval queries - a process sometimes called grounding - before selecting sources. The Grounding queries table in the dashboard surfaces those AI-generated queries, not the original user phrasing, offering a window into how AI models interpret and classify content for retrieval purposes.

My cited pages is a page-level table showing which URLs from a domain were referenced in AI-generated answers, along with citation counts and the associated grounding queries.

The documentation emphasises one important distinction: citation counts reflect how often a page was referenced, not its ranking or prominence within any individual AI-generated answer.

From preview to general availability

According to the Clarity blog post, Citations was introduced first as a preview. The preview gave early users visibility into when and where their content was being cited across supported AI experiences. Feedback from that period shaped how signals are measured, aggregated, and presented. According to the announcement, publishers and brands wanted clearer ways to interpret citation patterns across queries, pages, and overall visibility trends. Those requests drove a number of updates to the reporting experience and the underlying metrics before the feature moved to general availability.

The announcement states that the general availability release incorporates those refinements. Additional updates include changes to the reporting model, query views, filtering, and pagination, described as improvements to handle performance across larger datasets and longer time ranges. These are not cosmetic changes - they reflect the practical challenge of processing citation data at scale for domains that generate substantial content and appear across many queries.

Technical setup requirements

According to the documentation, Citations is now available for all Microsoft Clarity projects. Setup starts with creating a Clarity project and installing the Clarity tracking code on the site. Once installed, the Citations dashboard begins surfacing visibility data automatically. The metrics that appear include grounding queries, cited pages, share of authority, and AI referral traffic trends.

Domain ownership verification may be required before citation reporting becomes available. According to the documentation, this can be completed by connecting either Bing Webmaster Tools or Google Search Console to the Clarity project. Both are accepted verification methods.

For Clarity projects associated with multiple domains, a single domain must be selected during setup for Citations reporting. The documentation notes that support for changing the selected domain after setup is not yet available - a limitation worth noting for organisations that manage large multi-domain properties through a single Clarity account.

The dashboard itself requires no CDN or server-side integration. That requirement applies only to the separate Bot Activity dashboard, which surfaces AI crawler traffic patterns and became available in January 2026. Citations data is collected and processed through Clarity's standard client-side tracking code, making it accessible without infrastructure changes.

How AI grounding differs from traditional search

The distinction between grounding queries and search queries matters for anyone who has worked exclusively in search engine optimisation. Traditional SEO optimises content for queries that users type into a search engine. The relationship between the user query and the search result is relatively direct.

AI grounding introduces a more complex layer. According to the documentation, AI systems retrieve content before generating responses and do so using queries that may differ from what users originally typed. The AI model interprets the user's question, structures a retrieval query, pulls content from across the web, evaluates relevance and authority, and then generates an answer that may cite some of those sources. The Grounding queries table in the Citations dashboard surfaces the AI-generated retrieval queries, giving content teams data on how AI systems classify their material - not just how users describe it.

According to the Clarity blog post, this matters because two conditions can be true at once: a site can rank highly in search results for a given query and still be invisible in the AI-generated answer for that same topic. The announcement uses the example of a skincare query. "Imagine a customer asking an AI assistant, 'What's the best skincare routine for sensitive skin?'" Rizk wrote. "If your content isn't being cited in that answer, even if you rank #1 in search, you're effectively invisible in that moment of discovery."

What the data does not cover

The documentation includes a specific note about scope: the Citation dashboard tracks citation activity in AI-generated answers only. It does not measure traditional search rankings, impressions, or click-through rates. According to the documentation, it captures what it describes as "a new layer of influence - one that occurs before a user visits your site, inside the AI experience itself."

The Clarity FAQ section also addresses how Citations relates to Bot Activity. According to the FAQ, AI Bot Activity shows who is accessing content and how often. Citations shows whether that access results in grounding, citation, or visible attribution. Together, the documentation says, the two dashboards help organisations understand the full lifecycle from bot access to potential value - though they operate through different infrastructure and require different setup steps.

There is also a specific limitation noted in the FAQ regarding AI-enhanced search features. According to the documentation, traffic from AI-enhanced search features such as Google's AI Overviews cannot be reliably separated from standard search, because both share the same referrer. The AIPlatform and Paid AI Platform channel groups currently focus on direct AI chat platforms including ChatGPT, Claude, Gemini, and Perplexity, where discovery behaviour is clearly distinct from conventional browsing.
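The referrer limitation can be illustrated with a small Python sketch. It is a simplification under stated assumptions: the hostname lists and the `classify_referrer` function are hypothetical and are not Clarity's actual matching rules, which the documentation does not spell out.

```python
from urllib.parse import urlparse

# Assumed referrer hostnames for direct AI chat platforms (illustrative only).
AI_CHAT_HOSTS = {
    "chatgpt.com",
    "claude.ai",
    "gemini.google.com",
    "perplexity.ai",
    "www.perplexity.ai",
}

# Assumed referrer hostnames for conventional search engines (illustrative only).
SEARCH_HOSTS = {"www.google.com", "www.bing.com"}


def classify_referrer(referrer: str) -> str:
    """Map a referrer URL to a coarse channel label."""
    host = (urlparse(referrer).hostname or "").lower()
    if host in AI_CHAT_HOSTS:
        return "AIPlatform"
    if host in SEARCH_HOSTS:
        # A click from an AI-enhanced search feature and a click from a
        # standard result carry the same referrer, so both land here.
        return "Search"
    return "Other"


print(classify_referrer("https://chatgpt.com/"))
print(classify_referrer("https://www.google.com/"))
```

Because the classifier sees only the referrer, a visit that began in an AI-enhanced search result is indistinguishable from a visit that began in a standard result on the same search engine.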

Roadmap items

The Clarity blog post describes upcoming capabilities. According to the announcement, the next additions include topic insights, which will automatically group cited queries into intent-driven themes. The stated goal is to help teams understand not just what content is being surfaced in AI answers, but why, and in what context AI systems are selecting it.

According to the announcement, these topic-level groupings are intended to provide what the post calls "richer competitive and attribution analysis," helping site owners understand where content appears and how strongly it contributes to AI-generated responses relative to other sources. The announcement states these capabilities are "launching soon" without specifying a date.

Why this matters for marketers

The release fits inside a broader pattern that Microsoft Clarity has been building toward over the past nine months. The platform introduced AI channel groups in August 2025, enabling tracking of traffic from ChatGPT, Claude, Gemini, Copilot, and Perplexity as distinct sources. That feature addressed the downstream portion of the AI content lifecycle - measuring referral traffic after AI systems directed users to a site.

In January 2026, Clarity added Bot Activity tracking, which addressed the earliest observable signal in that same lifecycle: which AI crawlers access a site, how often, and which pages they prioritise. Citations sits between those two points. It covers the moment when content is evaluated and selected as a source - after a bot has accessed it but before a user has visited the site.

This sequencing is significant. A site can receive substantial AI crawler traffic, as measured through Bot Activity, and still see relatively few citations if the content does not meet the authority or relevance thresholds that AI systems apply during grounding. Conversely, a domain with limited bot traffic may still be cited frequently if its pages are consistently selected when they are accessed. Citations provides data on that conversion rate from access to selection.

Research published by Clarity in December 2025 found that AI-referred traffic grew 155% over an eight-month period across more than 1,200 publisher and news websites. The same analysis found that visitors arriving from AI platforms converted to sign-ups at 1.66%, compared to 0.15% from search. A separate study released in November 2025 placed AI traffic conversion rates at three times those of traditional channels across the same dataset.
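The relationship between those figures can be checked with simple arithmetic. The Python sketch below uses only the percentages quoted above; the variable names are illustrative.

```python
# Sign-up conversion rates quoted from the December 2025 research (percent).
ai_signup_rate = 1.66
search_signup_rate = 0.15

# How many times better AI-referred visitors converted than search visitors.
conversion_ratio = ai_signup_rate / search_signup_rate

# A 155% increase means traffic ended at 2.55 times its starting level.
growth_multiplier = 1 + 155 / 100

print(f"AI vs search conversion: about {conversion_ratio:.1f}x")
print(f"AI-referred traffic after eight months: {growth_multiplier:.2f}x the baseline")
```

The ratio works out to roughly 11, which matches the "11 times better than search" description attached to the December 2025 research.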

These figures put the Citations dashboard in context. AI-referred traffic represents a small share of total sessions for most sites but converts at rates that conventional channels rarely match. The challenge until now has been that there was no direct way to measure citation activity - the mechanism through which AI-referred traffic is generated in the first place.

In November 2025, Microsoft Advertising made Clarity mandatory for all third-party publishers on its platform, citing editorial and safety standards. That decision increased the installed base of Clarity across publisher properties significantly. The Citations feature, now available to all of those projects, extends the platform's utility from campaign measurement and user behaviour analysis into the newer question of AI content visibility.

According to Clarity's FAQ documentation, the AI Visibility suite - which includes both Citations and Bot Activity - is most relevant to marketing, SEO, growth, and analytics teams. The documentation also notes that developers and technical operations teams gain visibility into AI bot traffic and site access through the same infrastructure.

The feature operates within Clarity's existing pricing model, which the documentation describes as free, without traffic limits or a paid tier. According to the FAQ, Clarity processes more than 1 petabyte of data from over 100 million users per month with no sampling.

Timeline

- August 29, 2025 - Microsoft Clarity introduces AI channel groups for traffic analytics, enabling tracking of traffic from ChatGPT, Claude, Gemini, Copilot, and Perplexity as distinct sources.

- November 6, 2025 - AI traffic converts at 3x higher rates than traditional channels, according to Clarity research covering over 1,200 publisher and news websites.

- November 11, 2025 - Microsoft Advertising requires Clarity for all third-party publishers, with non-compliant traffic filtered as nonbillable.

- December 18, 2025 - Microsoft Clarity publishes research showing AI referrals grew 155% in eight months, converting 11 times better than search despite under 1% traffic share.

- January 6, 2026 - Microsoft Advertising publishes AEO and GEO playbook for retailers, addressing AI-powered product discovery and generative engine optimisation.

- January 21, 2026 - Microsoft Clarity launches Bot Activity tracking, revealing which AI systems crawl websites and how automated traffic affects infrastructure performance.

- May 12, 2026 - Microsoft Learn documentation for the Citation dashboard in AI Visibility receives its last recorded update.

- May 13, 2026 - Microsoft Clarity announces general availability of Citations in a Clarity blog post written by Ihab Rizk.

Summary

Who: Microsoft Clarity, through product manager Ihab Rizk, announced the release. The feature is available to all site owners, publishers, brands, and marketing teams using Clarity projects.

What: The Citations dashboard in Microsoft Clarity AI Visibility moved to general availability. It measures page citations in AI-generated answers, share of authority relative to competing domains, AI referral traffic, grounding queries, and cited pages - giving site operators a structured view of how content participates in AI-generated experiences.

When: The general availability announcement was published May 13, 2026. The associated Microsoft Learn documentation was last updated May 12, 2026.

Where: The dashboard is accessible inside Microsoft Clarity under Dashboards > AI Visibility > Citations. It requires a Clarity project with tracking code installed and, in some cases, domain ownership verification via Bing Webmaster Tools or Google Search Console.

Why: AI-generated answers increasingly mediate how users discover content, and traditional analytics tools measure only what happens after a user visits a site. Citations addresses the stage before that visit - when AI systems retrieve, evaluate, and select sources during grounding. Without this data, publishers have no way to determine whether their content is being used in AI answers or which pages are consistently selected as sources.
