Luma last week announced the launch of Luma Agents, a new class of AI collaborators capable of executing end-to-end creative projects across text, image, video, and audio from a single unified system. The Palo Alto, California-based company made the announcement on March 5, 2026, deploying the agents immediately with global enterprise partners including Publicis Groupe and Serviceplan Group.

The launch marks a substantive step beyond what has been the prevailing approach in AI-assisted creative production - chaining together separate, specialized models for language, vision, video, and reasoning, and then using orchestration layers to stitch their outputs together. According to Luma, that fragmented architecture loses context between steps and requires increasingly complex workflows to produce reliable results at scale.

A different model architecture underneath

The agents are built on what Luma calls Unified Intelligence, a new model architecture it developed to address the fragmentation problem directly. Rather than connecting specialized models after the fact, Unified Intelligence trains a single multimodal reasoning system capable of understanding and generating across formats within the same architecture.

The first model built on this architecture is Uni-1. According to the announcement, Uni-1 is a decoder-only autoregressive transformer operating over a shared token space that interleaves language and image tokens. Both modalities function as first-class inputs and outputs in the same sequence. This design, according to Luma, enables the model to reason in language while imagining and rendering in pixels within the same forward pass - rather than completing those operations across disconnected systems in sequence.
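Luma has not published Uni-1's tokenizer, vocabulary sizes, or special tokens, so everything below is a hypothetical illustration. The names `TEXT_VOCAB`, `IMAGE_CODEBOOK`, `BOI`/`EOI`, `Segment`, and the helper functions are all assumptions. The sketch shows what "a shared token space that interleaves language and image tokens" means for a decoder-only model. Text and image codes map into one ID range and are flattened into a single sequence, and one causal mask governs attention. As a result, text written after an image can attend to that image's tokens within the same forward pass.

```python
from dataclasses import dataclass

# Hypothetical vocabulary layout; Uni-1's real tokenizer is not public.
TEXT_VOCAB = 50_000                  # text token ids: 0 .. 49_999
IMAGE_CODEBOOK = 8_192               # image codes, offset by TEXT_VOCAB
BOI = TEXT_VOCAB + IMAGE_CODEBOOK    # begin-of-image marker
EOI = BOI + 1                        # end-of-image marker


@dataclass
class Segment:
    modality: str     # "text" or "image"
    ids: list[int]    # local ids within that modality's own range


def to_shared_ids(segments: list[Segment]) -> list[int]:
    """Flatten interleaved text/image segments into one shared-vocabulary sequence."""
    seq: list[int] = []
    for seg in segments:
        if seg.modality == "text":
            if not all(0 <= i < TEXT_VOCAB for i in seg.ids):
                raise ValueError("text id out of range")
            seq.extend(seg.ids)
        elif seg.modality == "image":
            if not all(0 <= i < IMAGE_CODEBOOK for i in seg.ids):
                raise ValueError("image code out of range")
            seq.append(BOI)
            seq.extend(TEXT_VOCAB + i for i in seg.ids)  # shift into shared space
            seq.append(EOI)
        else:
            raise ValueError(f"unknown modality: {seg.modality}")
    return seq


def causal_mask(n: int) -> list[list[bool]]:
    """mask[i][j] is True when position i may attend to position j (j <= i)."""
    return [[j <= i for j in range(n)] for i in range(n)]


def modality_of(token_id: int) -> str:
    """Recover which modality a shared-space id belongs to."""
    if token_id < TEXT_VOCAB:
        return "text"
    if token_id < BOI:
        return "image"
    return "marker"


# A brief, then an image, then a critique, all in one autoregressive sequence.
seq = to_shared_ids([
    Segment("text", [17, 942, 3]),
    Segment("image", [5, 6001, 77, 12]),
    Segment("text", [88, 4]),
])
mask = causal_mask(len(seq))
print([modality_of(t) for t in seq])
```

Because there is a single sequence and a single mask, no handoff between separate models is needed for later text tokens to condition on earlier image tokens.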

The distinction is technical but carries practical implications. When the same model handles both the reasoning and the rendering, it can plan, visualize, and produce creative artifacts as part of a single coherent process. Context is not lost between a brief and its execution, because there is no handoff between systems.

Luma CEO and co-founder Amit Jain framed it in operational terms. "Creative work has never lacked ambition; it's lacked execution capacity," Jain said, according to the announcement. "Creative teams shouldn't have to spend their time orchestrating tools. They should spend it creating. Agents aren't shortcuts. They're collaborators that maintain context, coordinate execution, and advance projects so teams can focus on taste, direction, and strategy."

Jain added: "Intelligence shouldn't be fragmented by modality. Unified systems reason holistically. When the same model can think, imagine, and render, you move closer to intelligence that behaves coherently across the entire creative process."

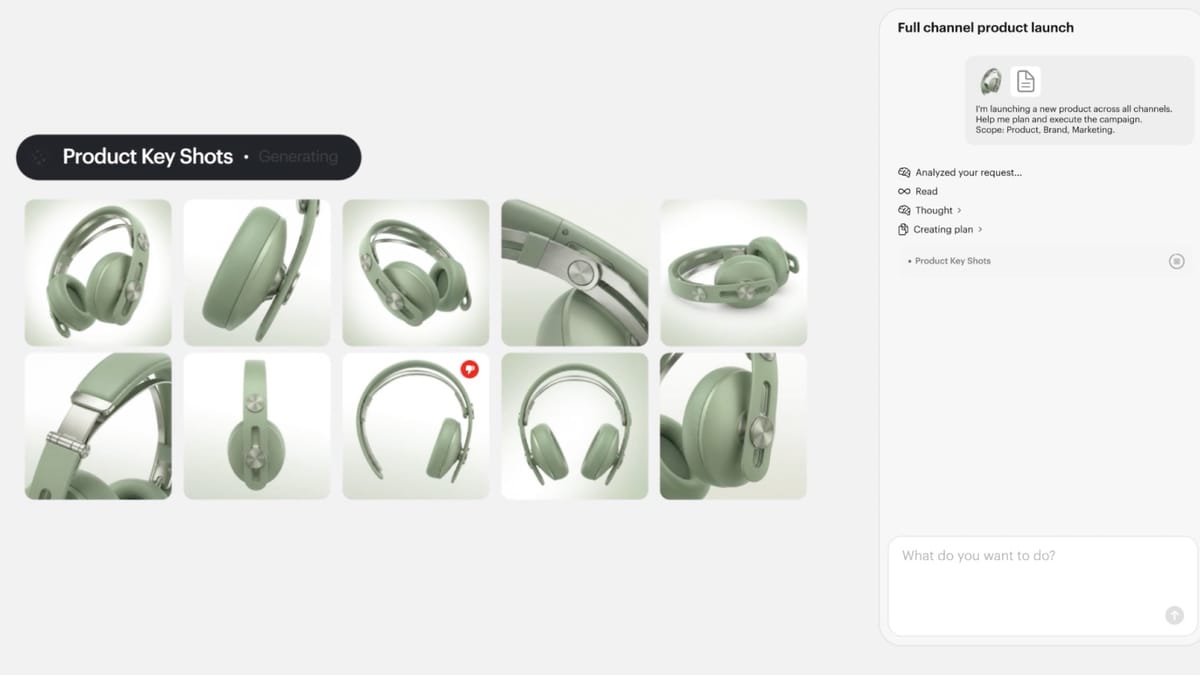

What Luma Agents actually do

The agents operate inside a collaborative, multiplayer environment. Humans direct creative intent; agents handle orchestration, routing, and execution. According to the announcement, agents can execute projects end-to-end from planning through production and delivery, maintain shared context across text, image, video, and audio assets, advance multiple creative directions in parallel, and evaluate and refine outputs through iterative self-critique rather than generating one-shot results.
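Luma has not described how its self-critique works internally. The sketch below is a hypothetical generate, critique, and revise loop that matches the behavior the announcement lists: several creative directions advance in parallel, and each one is scored and revised until it clears a quality bar or runs out of rounds. The `generate`, `critique`, and `revise` callables, the `0.8` threshold, and the round limit are all placeholder assumptions.

```python
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Draft:
    direction: str
    content: str
    score: float = 0.0
    history: list[float] = field(default_factory=list)  # score after each round


def advance(direction: str,
            generate: Callable[[str], str],
            critique: Callable[[str], float],
            revise: Callable[[str, float], str],
            threshold: float,
            max_rounds: int) -> Draft:
    """Score a first draft, then revise and re-score until it passes or rounds run out."""
    draft = Draft(direction, generate(direction))
    draft.score = critique(draft.content)
    draft.history.append(draft.score)
    rounds = 0
    while draft.score < threshold and rounds < max_rounds:
        draft.content = revise(draft.content, draft.score)
        draft.score = critique(draft.content)  # score always matches current content
        draft.history.append(draft.score)
        rounds += 1
    return draft


def refine_in_parallel(directions: list[str],
                       generate: Callable[[str], str],
                       critique: Callable[[str], float],
                       revise: Callable[[str, float], str],
                       threshold: float = 0.8,
                       max_rounds: int = 3) -> list[Draft]:
    """Advance every direction concurrently and return drafts best-first."""
    with ThreadPoolExecutor() as pool:
        drafts = list(pool.map(
            lambda d: advance(d, generate, critique, revise, threshold, max_rounds),
            directions,
        ))
    return sorted(drafts, key=lambda d: d.score, reverse=True)


# Toy stand-ins: a "critic" that rewards longer content, a "reviser" that adds detail.
results = refine_in_parallel(
    ["bold", "minimal palette"],
    generate=lambda d: d,
    critique=lambda c: min(1.0, len(c) / 20),
    revise=lambda c, s: c + " +detail",
)
for d in results:
    print(d.direction, d.history)
```

The alternative is a one-shot result, which corresponds to `max_rounds=0`, where the first draft is returned regardless of its score.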

Practically, agents integrate into enterprise tools and production systems via API. They coordinate across a roster of leading AI models including Ray3.14, Veo 3, Sora 2, Kling 2.6, Nano Banana Pro, Seedream, GPT Image 1.5, and ElevenLabs. Rather than requiring human operators to select and switch between these tools, the system automatically selects and routes tasks to the best model or capability for each step. Persistent context is maintained across assets, collaborators, and creative iterations throughout a project.
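Luma has not published its routing logic. The following is a hypothetical sketch of "select and route each step to the best model" while carrying persistent project context. The registry uses placeholder model names with invented quality and cost ratings rather than the real products named above, and `route`, `run_step`, and `ProjectContext` are illustrative names only.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Capability:
    model: str       # placeholder name, not a real product
    modality: str    # "image", "video", or "audio"
    quality: float   # hypothetical internal rating, 0..1
    cost: float      # hypothetical relative cost per call


@dataclass
class ProjectContext:
    brief: str
    assets: list[str] = field(default_factory=list)  # persists across steps


# Hypothetical registry; ratings are invented for illustration.
REGISTRY = [
    Capability("video-model-a", "video", 0.9, 5.0),
    Capability("video-model-b", "video", 0.8, 2.0),
    Capability("image-model-a", "image", 0.85, 1.0),
    Capability("audio-model-a", "audio", 0.9, 0.5),
]


def route(modality: str, budget: float) -> Capability:
    """Pick the highest-rated model for the modality whose cost fits the budget."""
    candidates = [c for c in REGISTRY if c.modality == modality and c.cost <= budget]
    if not candidates:
        raise LookupError(f"no {modality} model within budget {budget}")
    return max(candidates, key=lambda c: c.quality)


def run_step(ctx: ProjectContext, modality: str, budget: float) -> str:
    """Route one step and record its output in the shared project context."""
    model = route(modality, budget)
    # A real system would call the chosen model with ctx.brief and ctx.assets here.
    asset = f"{modality} by {model.model} (context: {len(ctx.assets)} prior assets)"
    ctx.assets.append(asset)
    return asset


ctx = ProjectContext("spring launch")
for step in ("image", "video", "audio"):
    print(run_step(ctx, step, budget=3.0))
```

Keeping `ProjectContext` outside any single model is what lets each routed step see earlier assets, regardless of which model produced them.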

The ability to coordinate across multiple models, most of them from third parties, while maintaining context is notable. The industry has attempted many multi-model workflows before, and they have generally required human operators to manage handoffs between tools or relied on orchestration layers whose coherence degraded over longer creative projects.

Enterprise partners already deployed

Luma Agents are not launching as a pilot. According to the announcement, the system is already embedded in global agency operations. Publicis Groupe and Serviceplan Group are deploying the agents across strategy, creative development, and production workflows to increase throughput while maintaining brand consistency across markets.

Serviceplan Group's Global CCO Alexander Schill described the integration in operational terms. "Luma is now part of our broader House of AI ecosystem and integrated directly into our creative workflows. It allows our teams across more than 20 countries to collaborate more smoothly and develop great work faster. For our clients, that means high-quality creative output delivered with greater speed and efficiency - without compromising craft," Schill said, according to the announcement.

Publicis Groupe's involvement is worth noting in context. The French holding company has been positioning itself aggressively at the intersection of AI and advertising services, dismissing analyst concerns that platform-led creative automation threatens agency business models. Its deployment of Luma Agents fits within a broader strategy of embedding AI tools into agency operations rather than treating automation as a competitive threat from outside.

Serviceplan Group's simultaneous adoption is significant for a different reason. The Munich-based independent network operates across more than 20 countries, making brand consistency across markets a specific operational challenge. The claim that agents can maintain that consistency while increasing throughput is exactly the kind of enterprise value proposition that would accelerate adoption among holding company networks.

Where this sits in the broader AI creative landscape

The IAB reported in July 2025 that 86% of buyers currently use or plan to implement generative AI for video advertisement creation, with the study projecting that AI-generated creative will account for 40% of all advertisements by 2026. The infrastructure investments from platforms and agencies have accelerated significantly since then.

Google launched Asset Studio in September 2025, a centralized creative platform integrating Imagen 4 and Veo for generating and editing advertising assets. The tool can process up to 100 images simultaneously and introduced video generation capabilities directly within Google Ads. Amazon expanded its Creative Agent to Streaming TV and Sponsored TV formats in November 2025, bringing agentic AI into video advertising across its streaming inventory. Multiple advertising platforms scrambled to position their infrastructure around AI agents during the final months of 2025, with the IAB Tech Lab introducing its Agentic RTB Framework in November.

What distinguishes Luma's approach from most of these is the target user and the scope of the task. Platform-level AI creative tools - whether at Google, Meta, or Amazon - are designed primarily to help advertisers produce assets optimized for their specific inventory. Luma Agents are designed for agencies and creative studios that need to produce original work across formats and channels, not simply adapt existing assets for a particular platform's ad formats.

The distinction matters for the ongoing debate about whether AI tools will eventually displace creative agencies. Mark Zuckerberg articulated a vision in May 2025 where businesses connect a budget, state an objective, and have Meta's systems handle creative development, targeting, and optimization without agency involvement. Luma's deployment model points in a different direction - positioning AI as infrastructure that makes agency creative operations faster and more scalable, rather than replacing them.

Research published on PPC Land showed that AI-assisted commercial production can compress timelines from weeks to roughly 10 days while cutting costs by up to 60%. The operational gains are real and measurable. The question is who captures the value - platforms offering automated end-to-end advertising, or agencies equipped with AI infrastructure that scales their creative output.

Agentic AI's potential to threaten traditional DSP business models has been a recurring topic in industry analysis since mid-2025. The argument, made by Ari Paparo among others, is that AI systems capable of performing active campaign management tasks could bypass the centralized role traditionally occupied by demand-side platforms. The same logic applies, with different contours, to creative production: systems capable of executing complete creative projects could shift where creative value is generated and captured across the industry.

Enterprise safeguards

Luma has built the agents with enterprise intellectual property and compliance requirements in mind. According to the announcement, the system includes full IP ownership retained by customers, automated content review to reduce copyright risk, legal trace documentation demonstrating human involvement, required human review workflows prior to public release, and cloud-based infrastructure with enterprise-grade guardrails.

The legal trace documentation and mandatory human review workflow are deliberate features for regulated industries and large enterprises where demonstrating human involvement in creative decisions carries legal and contractual weight. The copyright risk reduction capability addresses one of the more persistent concerns in enterprise AI creative deployments, where automated generation tools have raised questions about provenance and ownership of generated assets.
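The announcement does not describe how the review workflow or legal trace is implemented. As a hypothetical sketch, a release gate can refuse to publish an asset unless automated content review has passed and the audit trail records an approval by a human. The `Asset`, `approve`, and `release` names and the `"human:"` actor-prefix convention are assumptions made for illustration.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class TraceEvent:
    actor: str       # hypothetical convention: "human:<name>", "agent:<name>", "system"
    action: str
    at: datetime


@dataclass
class Asset:
    asset_id: str
    review_passed: bool = False                            # automated content review result
    trace: list[TraceEvent] = field(default_factory=list)  # audit trail of who did what

    def log(self, actor: str, action: str) -> None:
        self.trace.append(TraceEvent(actor, action, datetime.now(timezone.utc)))


def approve(asset: Asset, reviewer: str) -> None:
    """Record an approval; only human reviewers may approve."""
    if not reviewer.startswith("human:"):
        raise PermissionError("approval must come from a human reviewer")
    asset.log(reviewer, "approved")


def release(asset: Asset) -> None:
    """Publish only after automated review passes and a human approval is on record."""
    if not asset.review_passed:
        raise PermissionError("automated content review has not passed")
    if not any(e.action == "approved" and e.actor.startswith("human:") for e in asset.trace):
        raise PermissionError("no recorded human approval")
    asset.log("system", "released")


spot = Asset("spot-01", review_passed=True)
approve(spot, "human:alice")
release(spot)
print([(e.actor, e.action) for e in spot.trace])
```

The timestamped trace doubles as the kind of documentation of human involvement the announcement describes, since every approval is tied to a named human actor.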

These safeguards reflect a broader challenge that has slowed enterprise AI creative adoption. Research has documented a significant confidence-capability gap, where organizations claim AI readiness but lack the data governance foundations required for autonomous systems to operate reliably. Publicis Sapient's 2026 Guide to Next, published in November 2025, found that AI projects rarely fail because of bad models - they fail because the data feeding them is inconsistent and fragmented. Embedding compliance, human oversight, and IP documentation into the agent architecture is one way to address the governance gap rather than leaving it to clients to resolve independently.

Luma's track record with models

Luma is not a new entrant in the AI creative tools space. In 2025, the company launched Ray3, which it describes as the world's first reasoning video model, capable of creating physically accurate videos, animations, and visuals. Ray3.14 followed, delivering native 1080p outputs and production-grade stability for professional workflows. The company is backed by HUMAIN, Andreessen Horowitz, AWS, AMD Ventures, NVIDIA, Amplify Partners, Matrix Partners, and leading investors across technology and entertainment.

The company is also running a parallel initiative aimed at the creative community at large. Luma AI announced a $1 million competition tied to the 2026 Cannes Lions festival, offering the prize to any team that wins a Gold Lion using Luma AI tools. The competition opened on February 2, 2026, with submissions accepted until March 22, 2026. The jury, comprising 18 industry figures, was announced on February 24, 2026. These two initiatives - enterprise agent deployment and a creative competition - address different ends of the market simultaneously.

The Cannes competition requires that submissions be at least 10 seconds long and composed of at least 70% material generated with Luma AI's platform. Featured submissions are to be announced on April 9, 2026, and the grand prize depends on results at the Cannes Lions festival in June 2026.

What this means for marketing teams

Research published in early 2026 found that AI-generated ads perform as well as human-made ads, provided they do not appear overtly AI-generated. Across Taboola's publisher network of approximately 600 million daily active users, AI-generated creatives increased or maintained click-through rates without hurting conversion rates. The food and drink and personal finance categories showed particularly strong performance with AI-generated creative.

The performance data matters here because it changes the business case for AI creative infrastructure at the agency level. If AI-assisted creative production can match human-made performance while compressing timelines and cost, the competitive pressure on agencies to adopt tools like Luma Agents increases. Agencies that resist adoption face cost disadvantages; those that adopt too quickly without appropriate governance face quality and compliance risks.

PubMatic's launch of AgenticOS in January 2026 illustrated how quickly agentic infrastructure is moving from pilot programs to live campaign deployments. The programmatic buying side of advertising is already running live campaigns through autonomous systems. The creative production side - which Luma Agents addresses - is following a similar trajectory, just with different technical requirements and a different set of stakeholders.

The question for marketing teams is whether the creative production workflow they operate today is built for a world where a single unified system can take a brief and deliver finished creative across formats without manual orchestration between tools. If it is not, the pressure to rebuild that workflow is intensifying.

Timeline

- 2025: Luma launches Ray3, which it bills as the world's first reasoning video model, followed by Ray3.14 with native 1080p output and production-grade stability

- July 2025: McKinsey Technology Trends Outlook identifies agentic AI as the most significant emerging trend for marketing organizations

- July 17, 2025: Publicis Groupe reports accelerated 5.9% organic growth and dismisses AI platform disruption fears

- September 10, 2025: Google launches Asset Studio, integrating Imagen 4 and Veo for AI creative production directly within Google Ads

- October 15, 2025: Ad Context Protocol launches, with six companies betting on open-source standards for agentic advertising automation

- November 2025: Publicis Sapient's 2026 Guide to Next identifies data governance failures as the primary barrier to AI success in enterprises

- November 16, 2025: Amazon, Google, and IAB Tech Lab converge behind agentic AI infrastructure for advertising with live deployments

- January 5, 2026: PubMatic launches AgenticOS, the first operating system built specifically for autonomous advertising execution

- February 2, 2026: Luma AI opens $1 million Cannes Lions competition for creative teams using Luma AI tools

- February 3, 2026: Research shows AI-generated ads match human creative performance when they do not appear visibly AI-made

- February 24, 2026: Luma AI announces 18-person jury for $1 million Cannes Lions Dream Brief competition

- March 5, 2026: Luma launches Luma Agents powered by Unified Intelligence, deploying immediately with Publicis Groupe and Serviceplan Group across global operations

Summary

Who: Luma, a Palo Alto, California-based AI company backed by HUMAIN, Andreessen Horowitz, NVIDIA, AWS, and AMD Ventures, announced Luma Agents on March 5, 2026. Enterprise partners Publicis Groupe and Serviceplan Group are deploying the agents across global operations at launch. Serviceplan Group's Global CCO Alexander Schill and Luma CEO Amit Jain provided statements on the deployment.

What: Luma Agents are AI collaborators built on Unified Intelligence, a new model architecture whose first model, Uni-1, is a decoder-only autoregressive transformer that interleaves language and image tokens in a shared token space. The agents execute end-to-end creative work across text, image, video, and audio, coordinating across models including Luma's own Ray3.14 and third-party models such as Veo 3, Sora 2, Kling 2.6, and ElevenLabs. Enterprise safeguards include full IP ownership for customers, automated content review, legal trace documentation, required human review workflows, and cloud-based enterprise infrastructure.

When: The announcement was made on March 5, 2026, and the agents went straight into production environments at Publicis Groupe and Serviceplan Group.

Where: Luma is headquartered in Palo Alto, California. Serviceplan Group's deployment spans more than 20 countries. The technical infrastructure is cloud-based with enterprise-grade guardrails.

Why: Luma launched the agents to address the fragmentation problem in AI-assisted creative production, where chaining separate models for language, vision, video, and reasoning loses context between steps and requires complex manual workflows. The Unified Intelligence architecture trains a single multimodal reasoning system capable of handling all modalities within the same architecture, enabling agents to maintain context from brief to final delivery without manual orchestration.