TikTok this week announced that it is introducing a new automated moderation system in the US and Canada.

The new automated moderation on TikTok covers violations of its policies on minor safety, adult nudity and sexual activities, violent and graphic content, and illegal activities and regulated goods. TikTok says the automated system has the highest degree of accuracy in those categories.

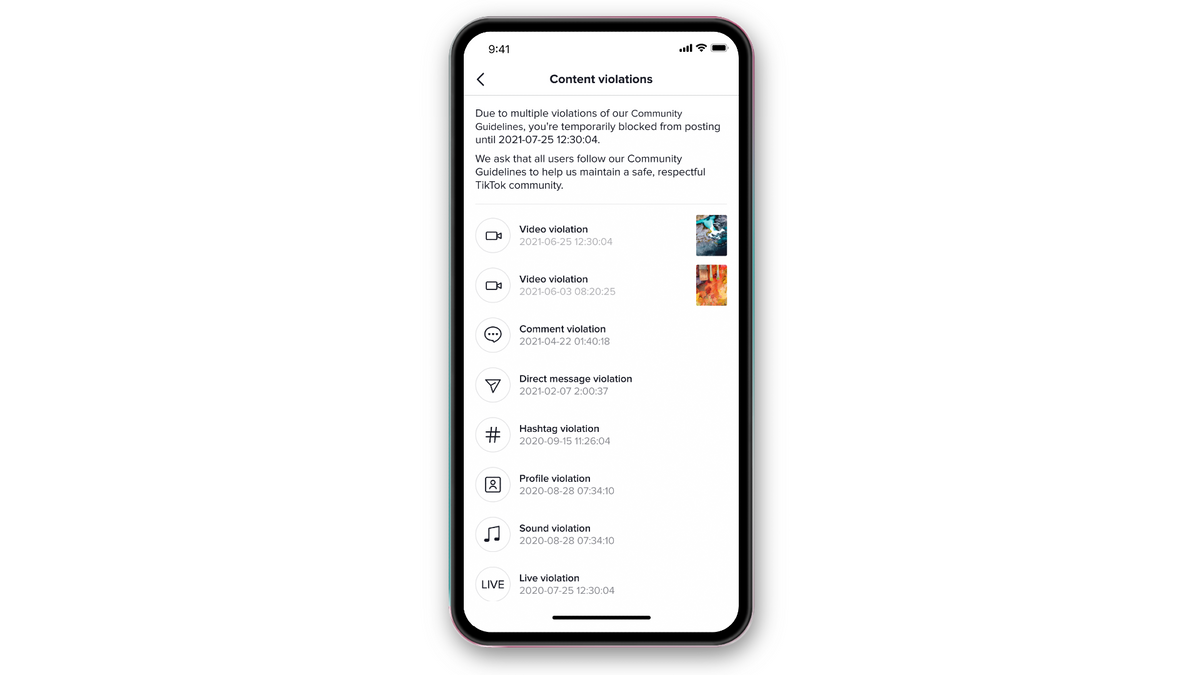

According to TikTok, when the system identifies a violation, the video is removed, and the creator is notified of the removal and the reason and given the opportunity to appeal. If no violation is identified, the video is posted and others on TikTok are able to view it.
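The flow described above can be pictured as a simple decision step. The following Python sketch is purely illustrative and is not TikTok's implementation: the `Decision` type, the `moderate` function, and the idea that a classifier hands over a category label are all assumptions made for this example. Only the four category names and the remove, notify, and appeal outcomes come from the announcement.

```python
from dataclasses import dataclass
from typing import Optional

# Policy categories the announcement says are handled automatically.
AUTOMATED_CATEGORIES = {
    "minor safety",
    "adult nudity and sexual activities",
    "violent and graphic content",
    "illegal activities and regulated goods",
}


@dataclass
class Decision:
    """Hypothetical outcome of the automated check for one video."""
    posted: bool
    reason: Optional[str] = None  # shown to the creator on removal
    can_appeal: bool = False


def moderate(detected_violation: Optional[str]) -> Decision:
    """Hypothetical decision step.

    `detected_violation` stands in for the output of a classifier that is
    not shown here: None means no violation was identified.
    """
    if detected_violation is None:
        # No violation identified: the video is posted and viewable.
        return Decision(posted=True)
    if detected_violation in AUTOMATED_CATEGORIES:
        # Violation identified: remove, tell the creator why, allow appeal.
        return Decision(posted=False, reason=detected_violation, can_appeal=True)
    # The announcement does not describe other categories, so this sketch
    # deliberately refuses to guess how they are handled.
    raise ValueError(f"category not covered by this sketch: {detected_violation}")
```

Calling `moderate(None)` in this sketch yields a posted video, while `moderate("minor safety")` yields a removal with a stated reason and an appeal option.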

TikTok clarifies that mass reporting of content or accounts does not lead to automatic removal or to a greater likelihood of removal by its Safety team. It also says that the false positive rate for automated removals is 5%.