Blocking AI bots with a robots.txt file has become a reflex for many publishers. But a new study published on March 19, 2026, by BuzzStream challenges the assumption that blocking crawlers translates into fewer AI citations. The findings, drawn from 4 million citations across 3,600 prompts, suggest the relationship between crawler access and AI citation is far weaker than most publishers believe.

The research, authored by Vince Nero, Director of Content Marketing at BuzzStream, used data from Citation Labs' AI citation-tracking tool, XOFU. The dataset covered ChatGPT, Gemini, Google AI Overviews, and Google AI Mode, spanning 10 industries. It is the third installment in a multi-part series examining how AI systems select and surface news content.

The core numbers are striking. Among the top 50 news sites blocking ChatGPT's live retrieval bot, ChatGPT-User, 70.6% still appeared in the dataset's AI citations. Sites blocking OAI-SearchBot - the bot associated with ChatGPT's search features - still appeared in 82.4% of cases. For GPTBot, the training crawler, 88.2% of blocking sites were cited anyway.

On the Google side, 92.3% of sites blocking Google-Extended, the training bot used for Gemini, still appeared in citations. None of the studied sites blocked Googlebot itself, since doing so would also remove them from standard Google Search results - a trade-off none of them has been willing to make.

What the bots actually do

To understand why these numbers matter, it helps to separate the different crawler types. According to the study, the bots serve two distinct purposes: some collect data to train AI models, while others power live retrieval and search features. The distinction is meaningful because citations - the links and sources that appear in AI responses - appear to emerge primarily from live retrieval rather than from training data.

OpenAI operates three separate crawlers: GPTBot handles model training, OAI-SearchBot covers search indexing, and ChatGPT-User handles live retrieval when a user interacts with ChatGPT in real time. Google's ecosystem similarly splits between Google-Extended for training and Googlebot for live search. This architecture means that even a publisher blocking every training crawler may still appear in citations if the retrieval system can reach their content through other means.
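The split between crawler types can be checked against a robots.txt file with Python's standard-library parser. The following is a minimal sketch: the robots.txt content, the `crawler_access` helper, and the example.com URL are illustrative assumptions, not taken from the study or from any publisher's actual file.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt: blocks the two training crawlers named above
# and leaves search, retrieval, and all other crawlers allowed.
ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /

User-agent: Google-Extended
Disallow: /

User-agent: *
Allow: /
"""

# Crawler tokens mentioned in the article.
CRAWLERS = ["GPTBot", "OAI-SearchBot", "ChatGPT-User", "Google-Extended", "Googlebot"]


def crawler_access(robots_txt: str, url: str) -> dict[str, bool]:
    """Return, per crawler token, whether robots.txt permits fetching url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, url) for agent in CRAWLERS}


if __name__ == "__main__":
    access = crawler_access(ROBOTS_TXT, "https://example.com/news/story")
    for agent, allowed in access.items():
        print(f"{agent:16} {'allowed' if allowed else 'blocked'}")
```

Under this hypothetical policy the training crawlers are refused while OAI-SearchBot, ChatGPT-User, and Googlebot remain permitted, which is the situation the paragraph above describes: blocking training does not by itself close the retrieval path.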

PPC Land has tracked this landscape closely, including when OpenAI revised its ChatGPT crawler documentation in December 2025 to remove training language from OAI-SearchBot's description entirely - a sign of increasing pressure on AI companies to clarify exactly what each bot does and does not do.

The citation share, not just presence

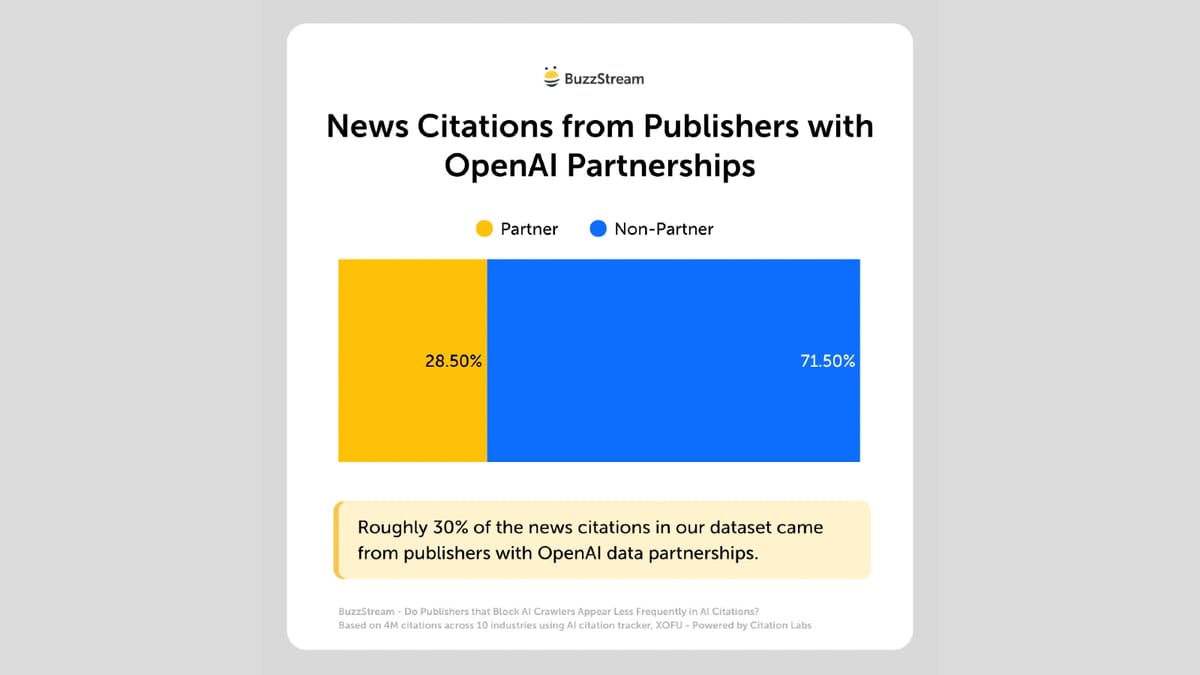

The BuzzStream data becomes sharper when looking at citation volume rather than mere presence. Roughly 70% of all ChatGPT citations in the dataset came from sites that block ChatGPT's retrieval bots. That figure rises to 95% when looking at sites that block training bots. In other words, the overwhelming majority of content that ChatGPT cites is content that has, at some level, tried to restrict AI access.
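The difference between presence and citation share is easy to blur, so a small worked sketch may help. The `Site` record, both function names, and the three toy domains below are hypothetical and chosen only to make the arithmetic visible; they are not figures from the BuzzStream dataset.

```python
from dataclasses import dataclass


@dataclass
class Site:
    domain: str
    blocks_bot: bool   # does the site's robots.txt block the bot in question?
    citations: int     # how many times the site was cited


def presence_rate(sites: list[Site]) -> float:
    """Fraction of blocking sites that were cited at least once."""
    blocking = [s for s in sites if s.blocks_bot]
    if not blocking:
        return 0.0
    return sum(1 for s in blocking if s.citations > 0) / len(blocking)


def citation_share(sites: list[Site]) -> float:
    """Fraction of all citations that went to blocking sites."""
    total = sum(s.citations for s in sites)
    if total == 0:
        return 0.0
    return sum(s.citations for s in sites if s.blocks_bot) / total


# Toy data (hypothetical): two blocking sites, one non-blocking site.
TOY_SITES = [
    Site("a.example", blocks_bot=True, citations=700),
    Site("b.example", blocks_bot=True, citations=0),
    Site("c.example", blocks_bot=False, citations=300),
]

if __name__ == "__main__":
    print(f"presence rate:  {presence_rate(TOY_SITES):.1%}")   # 1 of 2 blocking sites cited
    print(f"citation share: {citation_share(TOY_SITES):.1%}")  # 700 of 1,000 citations
```

In the toy data only half of the blocking sites are cited at all, yet blocking sites account for 70% of citations. The two measures answer different questions, which is why the study reports both.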

The Google figures follow a similar pattern. According to the study, citation percentages from sites blocking Google-Extended track closely with the ChatGPT results, reinforcing that blocking training crawlers does not remove a site from AI citation pools.

One concrete example from the data: cnbc.com blocks ChatGPT-User, GPTBot, and OAI-SearchBot simultaneously, yet the site appeared 1,298 times in the citation dataset. Yahoo.com blocks Google-Extended and still appeared in close to 30,000 citations.

Four possible explanations

The BuzzStream report offers several possible explanations, though it is careful to note that none are definitive. The underlying mechanics of how AI systems retrieve and cite content are not fully public.

Indexing before blocking began. Some publishers may have added robots.txt directives after their content was already indexed. However, the study finds limited support for this explanation. According to the BuzzStream data, only 15% of cited publications in the dataset predated ChatGPT's launch, and roughly 30% existed before Google AI Overviews launched. AI systems are clearly citing recent content, which means pre-blocking indexing alone does not explain the pattern.

The Common Crawl factor. Common Crawl, a non-profit, has been archiving the web for years and provided foundational training data to many AI systems including early versions of ChatGPT and Gemini. According to the study, however, around 70% of sites in the dataset also block CCBot, the Common Crawl crawler - so this explanation, too, is incomplete.

The scale of the Common Crawl issue was documented separately in November 2025, when an investigation found Common Crawl had supplied millions of paywalled news articles to AI developers including OpenAI, Google, Anthropic, and others, despite publisher objections.

Bots ignoring directives. The study cites a Reuters investigation finding substantial evidence of AI companies circumventing robots.txt. Harry Clarkson-Bennett of The Telegraph put it directly in a previous BuzzStream interview: "So the robots.txt file is a directive. It's like a sign that says, 'please keep out,' but doesn't stop a disobedient or maliciously wired robot. Lots of them flagrantly ignore these directives."

This is not a new concern. Cloudflare launched its Robotcop enforcement tool in December 2024 precisely because robots.txt relies on voluntary compliance. By converting robots.txt directives into Web Application Firewall rules, Cloudflare created a network-level enforcement mechanism rather than relying on crawlers to respect the directives themselves.
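The general idea of turning a voluntary directive into a server-side check can be sketched in a few lines. This is not Cloudflare's implementation, whose internals are not described here; the function names, the example robots.txt, and the sample User-Agent strings are all assumptions made for illustration.

```python
# Hypothetical robots.txt used only for this illustration.
EXAMPLE_ROBOTS = """\
User-agent: GPTBot
User-agent: CCBot
Disallow: /

User-agent: *
Disallow: /private/
"""


def fully_blocked_agents(robots_txt: str) -> set[str]:
    """Collect user-agent tokens whose group contains 'Disallow: /'."""
    blocked: set[str] = set()
    group: list[str] = []   # user-agent tokens of the group being read
    in_rules = False        # True once the current group has a rule line
    for raw in robots_txt.splitlines():
        line = raw.split("#", 1)[0].strip()   # drop comments
        if ":" not in line:
            continue
        field, value = (part.strip() for part in line.split(":", 1))
        field = field.lower()
        if field == "user-agent":
            if in_rules:                      # a new group starts here
                group, in_rules = [], False
            group.append(value.lower())
        elif field in ("allow", "disallow"):
            in_rules = True
            if field == "disallow" and value == "/":
                blocked.update(a for a in group if a != "*")
    return blocked


def should_reject(user_agent_header: str, blocked: set[str]) -> bool:
    """Decide at request time whether to refuse, based on the User-Agent header."""
    ua = user_agent_header.lower()
    return any(token in ua for token in blocked)


if __name__ == "__main__":
    blocked = fully_blocked_agents(EXAMPLE_ROBOTS)
    # Illustrative header strings, not verbatim crawler user agents.
    print(should_reject("Mozilla/5.0 (compatible; GPTBot/1.0)", blocked))
    print(should_reject("Mozilla/5.0 (Windows NT 10.0; Win64; x64)", blocked))
```

A check like this refuses the request instead of asking the crawler to stay away, but it still trusts the User-Agent header. A crawler that misreports its identity would pass, so header matching alone is only a first layer.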

SERP-level extraction. The fourth and perhaps most technically significant explanation in the BuzzStream study is that robots.txt may simply be irrelevant to one of the key ways AI models gather citation data. According to Nero, some AI retrieval systems pull data directly from search engine results pages - titles, URLs, and snippet text - without ever fetching the underlying page. If AI citation generation can occur using SERP-level data alone, then access controls on the origin server are bypassed entirely.

Some of Google's Vertex AI documentation hints at this mechanism, although, according to the study, Google does not explicitly state that its AI products work this way. This uncertainty cuts to the heart of the problem: publishers are building access control strategies against systems whose architectures are not fully disclosed.

The enforcement gap is widening

The industry's response to AI crawling has escalated steadily over the past two years. In August 2024, data showed 35.7% of the top 1,000 global websites blocked OpenAI's GPTBot - a seven-fold increase from the 5% blocking rate when the crawler first launched in August 2023. Cloudflare data published in June 2024 showed AI bots accessed approximately 39% of the top one million internet properties, with only 2.98% implementing countermeasures.

By late 2025, AI crawlers accounted for 4.2% of all HTML requests across Cloudflare's network, against a backdrop of 19% overall global internet traffic growth. GPTBot's share of AI crawling traffic grew from 4.7% to 11.7% between July 2024 and July 2025 alone.

The cost of blocking has also come into sharper focus. Research published December 31, 2025, by academics from Rutgers Business School and The Wharton School found that publishers blocking AI crawlers via robots.txt experienced a total traffic decline of 23.1% in monthly visits and a 13.9% decline in human-only browsing. Blocking appears to reduce traffic without reliably reducing AI citation rates - a combination that makes the trade-off increasingly difficult to justify on operational grounds.

The BuzzStream findings arrive in the context of a broader collapse in publisher referral traffic. Media leaders surveyed by the Reuters Institute in January 2026 reported that Google Search traffic had fallen 38% in the United States, with Web Search declining from 51% to 27% of referrals between 2023 and the fourth quarter of 2025. Publishers expected an additional 43% traffic decline over the following three years.

Czech publishers in March 2026 moved toward a more structured approach to the problem, when SPIR - the Czech Association for Internet Development - published a two-tier robots.txt framework distinguishing between AI training bots and real-time retrieval bots. The framework was designed to align with the EU copyright law's text and data mining exception, giving publishers a legally grounded opt-out mechanism. That initiative suggests that the industry is beginning to treat robots.txt not as a reliable technical barrier but as a documented statement of intent under copyright frameworks.
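A two-tier policy of this kind can be expressed as two robots.txt groups, one per bot category. The sketch below is not the SPIR framework's actual text, which is not reproduced in this article. The assignment of bots to tiers (including treating CCBot as a training crawler) and the `two_tier_robots` helper are assumptions for illustration.

```python
# Assumed tier membership, using only bot names mentioned in this article.
TRAINING_BOTS = ["GPTBot", "Google-Extended", "CCBot"]
RETRIEVAL_BOTS = ["OAI-SearchBot", "ChatGPT-User"]


def two_tier_robots(block_training: bool, block_retrieval: bool) -> str:
    """Build a robots.txt with one group per tier plus a permissive default."""
    groups = []
    tiers = ((TRAINING_BOTS, block_training), (RETRIEVAL_BOTS, block_retrieval))
    for bots, blocked in tiers:
        lines = [f"User-agent: {bot}" for bot in bots]
        lines.append("Disallow: /" if blocked else "Allow: /")
        groups.append("\n".join(lines))
    groups.append("User-agent: *\nAllow: /")   # everything else stays allowed
    return "\n\n".join(groups) + "\n"


if __name__ == "__main__":
    # Opt out of training while remaining reachable for real-time retrieval.
    print(two_tier_robots(block_training=True, block_retrieval=False))
```

Keeping the tiers in separate groups lets a publisher change one decision without touching the other, and it leaves a readable record of which category of use was refused.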

What the data does not resolve

The BuzzStream study is transparent about its limits. The mechanics of how AI retrieval actually works - which bots are active at citation time, how SERP extraction interacts with origin-server blocks, what role cached content plays - remain substantially opaque. What the data can do is measure the correlation between blocking and citation rates, and that correlation is, by the evidence, extremely weak.

The study's scale is meaningful: 4 million citations from 3,600 prompts is a significant sample. But the authors draw no causal conclusions. The study cannot determine whether AI systems are actively circumventing robots.txt, whether they have already indexed blocked content, or whether SERP-level extraction is the dominant pathway for citation data.

Citation patterns themselves have been volatile. Research from August 2025 found that ChatGPT's referral traffic dropped 52% beginning July 21, 2025, as OpenAI adjusted how its retrieval-augmented generation system weighted sources, shifting citations toward Reddit and Wikipedia and away from branded publisher content. Three domains alone came to control 22% of all ChatGPT citations. That level of volatility makes it hard to build stable publisher strategies around citation optimization of any kind.

Why this matters for marketing professionals

For marketers tracking AI search visibility, the BuzzStream data raises a practical question: are bot access logs a reliable proxy for citation exposure? If blocking a bot does not reduce citation rates, then measuring bot blocks as a signal of AI invisibility is likely to produce misleading reporting.

PPC Land has noted that marketing teams using AI-userbot traffic as a performance metric may be measuring something that correlates only loosely with actual AI citation. The BuzzStream study provides direct empirical grounding for that concern: the bots that are blocked and the citations that appear are, in a majority of cases, not connected in the way that robots.txt logic would imply.

The study's practical observation is that the decision to block or not block AI crawlers appears, in current conditions, to have little measurable effect on whether a publisher appears in AI-generated responses. What drives citation - freshness, authority, SERP performance, licensing agreements with AI companies - remains an area where the industry lacks consensus and where data is still accumulating.

Timeline

- August 2023 - OpenAI launches GPTBot; 5% of top 1,000 websites block it at launch

- June 2024 - Cloudflare introduces feature to block AI scrapers and crawlers; AI bots accessed approximately 39% of top one million internet properties

- July 2024 - Cloudflare reveals extensive AI bot activity across its network; only 2.98% of properties had implemented countermeasures

- August 3, 2024 - GPTBot blocked by 35.7% of top 1,000 websites, a seven-fold increase from the 5% blocking rate at launch

- July 21, 2025 - ChatGPT referral traffic drops 52% as OpenAI adjusts citation weighting; Reddit and Wikipedia citations surge

- July 30, 2025 - Over 80 media executives rally against AI scraping at IAB Tech Lab summit in New York

- November 4-5, 2025 - Investigation reveals Common Crawl supplied paywalled content to AI companies including OpenAI, Google, Anthropic, and others

- November 6, 2025 - Microsoft Clarity research shows AI referral traffic grew 155.6% over eight months while converting to sign-ups at 1.66% versus 0.15% from search

- December 9, 2025 - OpenAI revises ChatGPT crawler documentation, removing training language from OAI-SearchBot description

- December 20, 2025 - Cloudflare data shows AI crawlers account for 4.2% of HTML requests as global internet traffic grows 19% in 2025

- December 31, 2025 - Research from Rutgers and Wharton finds AI crawler blocking led to 23.1% total traffic decline and 13.9% human traffic decline

- January 12, 2026 - Reuters Institute survey of 280 media executives finds publishers expect additional 43% traffic decline over three years

- March 19, 2026 - Czech publishers gain updated robots.txt framework under SPIR standard covering both AI training and real-time retrieval bots

- March 19, 2026 - BuzzStream publishes study of 4 million AI citations showing blocking crawlers does not reliably reduce citation rates across ChatGPT, Gemini, AI Overviews, and AI Mode

Summary

Who: BuzzStream, a digital PR and link-building platform, published the research. Vince Nero, Director of Content Marketing, authored the study using citation data from Citation Labs' XOFU tool. The findings affect news publishers, digital marketers, SEO professionals, and anyone managing content access policies in relation to AI platforms.

What: A study of 4 million AI citations from 3,600 prompts found that news publishers blocking AI crawlers via robots.txt still appeared in the vast majority of AI-generated citations. Among sites blocking ChatGPT-User, 70.6% still appeared in citations. Among sites blocking Google-Extended, 92.3% still appeared. Roughly 95% of ChatGPT citations came from sites blocking training bots, and around 70% came from sites blocking retrieval bots.

When: The study was published on March 19, 2026, as the third part of a multi-part research series examining AI citation behaviour across major platforms.

Where: The citation data was drawn from ChatGPT, Gemini, Google AI Overviews, and Google AI Mode across 10 industries. The robots.txt blocking analysis focused on the top 50 news sites blocking specific AI crawlers.

Why: The study matters because publishers have invested significant effort in blocking AI crawlers as a content protection strategy. The data suggests this approach does not reliably prevent AI citation, raising questions about the effectiveness of robots.txt as a control mechanism and about how AI systems actually retrieve the content they cite. This has direct implications for how marketers and publishers measure AI search visibility, set access policies, and understand the relationship between technical controls and content distribution in AI-powered environments.