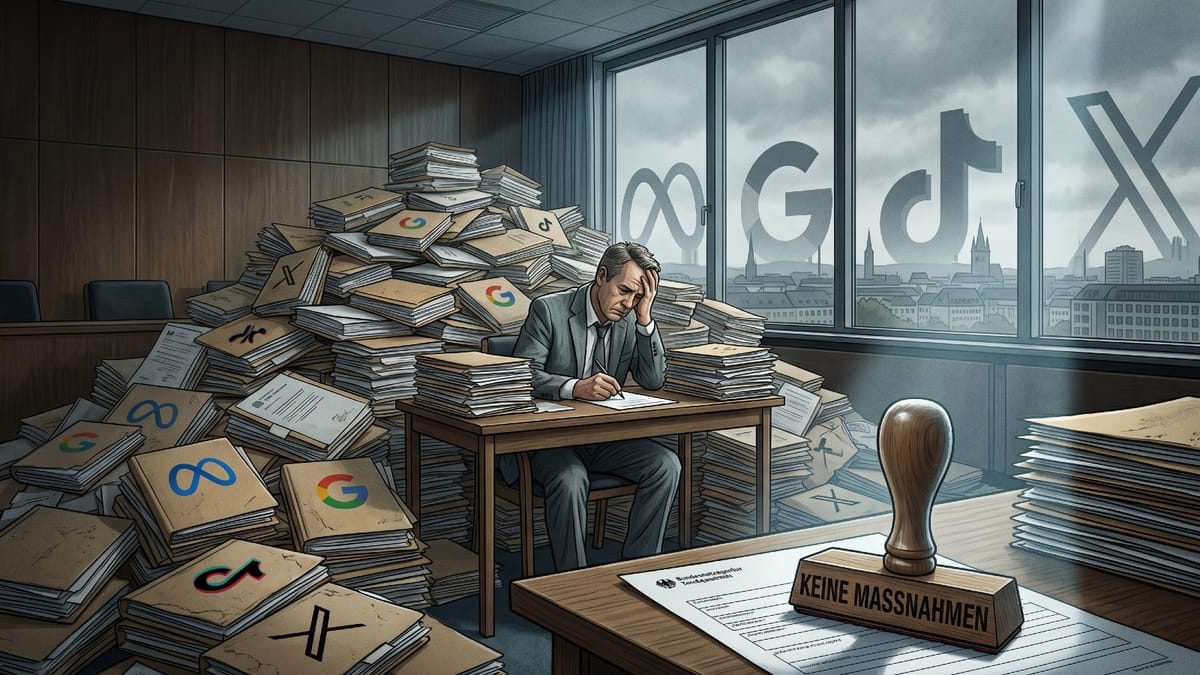

Germany's Digital Services Coordinator (DSC), housed within the Federal Network Agency (Bundesnetzagentur), this week published its 2025 activity report - the first full calendar year of Digital Services Act enforcement in the country. The document runs to 35 pages, is dated April 2026, and has been submitted to the German legislature as well as the European Commission. Its most striking numbers are not the ones on the cover. Of 2,033 formal complaints received, 26 led to proceedings. Of those 26 proceedings, none produced a fine. Of the three other German authorities sharing DSA responsibilities, none imposed a penalty either.

The agency's head, Johannes Heidelberger, addresses this directly in his foreword. According to the report, "the enforcement of the DSA is still in an early phase," and its results "require a long breath," a German idiom for staying power. Whether that framing satisfies the users who filed those 2,033 complaints is a separate question - one the report does not answer.

2,033 complaints, 26 proceedings: what the gap means

The 1.3% complaint-to-proceeding conversion rate is the central fact of the 2025 report. Understanding it requires tracing where complaints actually went.

Of the 2,033 DSA complaints received, 255 - roughly 12.5% - were forwarded to coordinators in other EU member states, because the platform being complained about was registered there rather than in Germany. Ireland alone absorbed 237 of those 255 referrals, or 92.9% of all outbound cases. This is not an administrative anomaly. It is the direct consequence of where the largest platforms - Meta, Google, TikTok, X - have placed their European legal entities. A user in Germany complaining about Facebook is, under DSA rules, a German resident complaining about an Irish-registered company. The German DSC reviews the complaint, may add a written opinion, and transfers the file to Dublin. Outcomes from that point are determined by Ireland's Coimisiún na Meán.

Three complaints were redirected to Germany's state media authorities, the Landesmedienanstalten, which hold separate jurisdiction over certain platform obligations. One went directly to the European Commission. The pool of complaints that the German DSC could potentially act on nationally shrank substantially from the 2,033 headline figure before any investigation began.
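The routing arithmetic above can be checked with a short Python sketch. It uses only figures quoted in this article; the variable names are made up for illustration, and the combined `routed_elsewhere` total assumes the three routing categories do not overlap, which the report as summarized here does not confirm.

```python
# Complaint routing figures quoted in the article (2025 report).
total_complaints = 2033
forwarded_abroad = 255        # sent to coordinators in other member states
forwarded_to_ireland = 237    # subset of forwarded_abroad
to_state_media = 3            # redirected to the Landesmedienanstalten
to_commission = 1             # sent to the European Commission
proceedings_opened = 26


def pct(part, whole):
    """Share of `part` in `whole`, as a percentage rounded to one decimal."""
    return round(100 * part / whole, 1)


# Assumption: the three routing categories are disjoint.
routed_elsewhere = forwarded_abroad + to_state_media + to_commission

print(pct(proceedings_opened, total_complaints))    # 1.3
print(pct(forwarded_abroad, total_complaints))      # 12.5
print(pct(forwarded_to_ireland, forwarded_abroad))  # 92.9
print(routed_elsewhere)                             # 259
```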

Among the complaints that remained, the agency does not - and legally cannot - open a proceeding for each one. According to the report, the DSC opens proceedings only when indicators of a violation are "sufficiently substantiated" and concern a provider within its territorial jurisdiction. German administrative law requires what the report calls ermessensfehlerfrei decision-making - discretion exercised without legal error. Consolidation of similar complaints, early-stage assessment, and prioritization decisions all filter the caseload further before a formal proceeding is recorded.

The 26 proceedings opened in 2025 focused primarily on three DSA articles: Article 16 (notice and action mechanisms, requiring platforms to maintain accessible illegal-content reporting channels), Article 17 (requiring platforms to justify moderation decisions to users), and Article 20 (internal complaint management systems). For online marketplace cases, Article 30 - trader traceability - was also involved. Twelve of the 26 proceedings concerned this cluster. The remaining 14 covered other DSA obligations within the DSC's competence.

38.5% resolved without escalation

Of the 26 proceedings opened in 2025, 10 were already closed by year-end - a 38.5% voluntary resolution rate within a single calendar year. In each of those cases, the platform corrected the specific deficiency after being contacted, without a formal order or financial penalty. The remaining 61.5% - 16 proceedings - were still active at December 31, 2025, either under investigation or moving toward further supervisory measures. Combined with four proceedings opened in 2024 that remained active, the DSC carried 20 open cases into 2026.

The report describes voluntary early compliance as a genuine enforcement outcome. This framing is consistent with how DSA enforcement is structured across the EU: the regulation gives platforms a path to remediate identified problems, and coordinators are expected to use that path before escalating. Whether the approach produces lasting behavioral change - or only surface-level corrections that erode once scrutiny moves on - is not something the 2025 data can resolve.

Zero fines from four German authorities

The DSC, the KidD (the federal office for enforcing children's rights in digital services), the BfDI (the federal data protection commissioner), and the Landesmedienanstalten collectively imposed no administrative fines and issued no formal orders under their DSA powers during 2025. The relevant legal powers existed throughout the year and were not used.

Under German law, the DSC can impose coercive daily fines of up to five percent of a provider's average global daily revenue to enforce compliance orders. In serious cases it can pursue fines of up to six percent of annual worldwide turnover - the same ceiling used by the European Commission against large platforms. Neither threshold was reached.

The procedural explanation is that a formal order requires the platform to have been heard, to have failed to remedy the problem, and for the agency to have made a discretionary decision to escalate. Since most proceedings were either recently opened or resolved voluntarily, none progressed far enough along that chain. The report notes that 2024 saw four proceedings opened; those that were not closed by voluntary compliance were still in the investigation phase at year-end 2025. By that point, nearly two years after the DSA became fully applicable in February 2024, no German authority had issued a single fine.

The contrast with European Commission-level enforcement is pointed. On December 5, 2025, the Commission imposed 120 million euros in fines against X - the first non-compliance decision under the DSA - for a deceptive verification system, an inadequate advertising repository, and insufficient researcher data access. That decision came roughly two years after the Commission opened formal proceedings against X in December 2023. Germany's proceedings are earlier-stage, but the gap between EU-level action and national-level action raises questions about whether the distributed enforcement model is producing consistent platform pressure.

Staffing at 37% of estimated need

The zero-fine outcome and the 1.3% conversion rate do not exist in a vacuum. In 2025 the DSC's allocated positions covered roughly 37% of the staffing level its own founding legislation estimated as necessary, and its filled positions roughly 33%.

The original German Digital Services Law projected a need for 70.56 full-time positions for substantive enforcement tasks, plus 20.8 for cross-functional roles - a total of 91.36. In practice, 34 positions were allocated and 30 were filled as of December 31, 2025. Ten of the 34 allocated positions lacked confirmed budget funding for 2025, though the report says this was expected to be resolved in 2026.
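The 37% figure can be reproduced from the numbers above. The Python sketch below is an illustration rather than the report's own method: it assumes the percentage is measured against allocated positions, and the variable names are hypothetical.

```python
# Staffing figures quoted in the article.
needed_enforcement = 70.56       # projected full-time positions, substantive tasks
needed_cross_functional = 20.8   # projected full-time positions, cross-functional roles
allocated = 34
filled = 30                      # as of December 31, 2025

# Round the sum to two decimals to avoid floating-point noise.
needed_total = round(needed_enforcement + needed_cross_functional, 2)

allocated_share = round(100 * allocated / needed_total, 1)
filled_share = round(100 * filled / needed_total, 1)

print(needed_total)     # 91.36
print(allocated_share)  # 37.2
print(filled_share)     # 32.8
```

Measured against filled rather than allocated positions, the share drops to about a third of the projected need.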

The operational budget for non-staff costs was set at 1.7 million euros, covering IT systems, software licenses, training, networking, and conferences. Because Germany's federal budget operated under provisional management for nine of the twelve months - a consequence of the snap Bundestag election in February 2025 - many planned activities could not be financed within the year. The full allocation for research and studies was spent. The rest was only partially drawn down. According to the report, this directly prevented a number of planned projects from being executed.

The snap election added an unplanned workload

Under the DSA, platforms are required to assess and mitigate systemic risks to democratic processes. The early election date compressed what would normally have been a multi-month preparation process into weeks. Between January 7 and February 23, 2025, the DSC held a series of external meetings with X, Google/YouTube, TikTok, Meta, and Microsoft in connection with election preparations. A round table with the European Commission and multiple VLOPs took place on January 24. A formal stress test involving the Commission, platforms, civil society, and research organizations ran on January 31. Post-election review meetings followed in late February and early March. This work was not budgeted at the start of the year and consumed staff capacity that would otherwise have been available for the core enforcement caseload.

What the complaints actually covered

The 2,033 DSA complaints that arrived via the portal represent only part of the total contact volume. The portal received 3,321 submissions in total - meaning 1,288 were addenda to existing complaints or non-DSA submissions. Of those, 332 had no connection to the DSA, covering data protection disputes, telecom provider complaints, and similar issues. An additional 2,688 contacts came via fax, email, or letter on digital topics.

According to the report, complaints primarily concerned insufficient justifications when platforms restricted or removed accounts or content, the non-removal of content users had flagged as illegal, and the poor usability of platform reporting channels. Most complaints targeted VLOPs with headquarters or legal representatives in Ireland - which explains the 92.9% Ireland share of outbound referrals. The DSC itself received ten incoming complaints from other member states' coordinators via the AGORA exchange system, from Denmark, Finland, Lithuania, Luxembourg, Austria, and Sweden.

The KidD and BfDI picture

The KidD examined 31 services for compliance with youth protection precautions under Article 28 DSA and assessed 39 services for child-friendly terms and conditions under Article 14(3). It commissioned 11 expert opinions, initiated eight provider dialogues, and requested assessments from multiple bodies. No formal orders were issued. According to the report, formal enforcement requires completed provider dialogues as a prerequisite - a process that had not concluded for any provider by year-end.

The BfDI found no DSA violations at all in 2025 and deployed approximately 0.6 full-time equivalents for DSA-related work. The Landesmedienanstalten found no violations among platforms with a German registered presence, though they identified ongoing violations at ten foreign-based platforms relating to age verification - cases already partly in judicial proceedings.

What did produce results: Articles 9 and 10

The area with the clearest enforcement outcomes in 2025 was not the DSC's administrative proceedings, but the Article 9 and 10 order mechanisms - where courts and administrative bodies can directly order platforms to remove illegal content or provide user information.

The DSC received 19 Article 9 orders and 23 Article 10 orders via its dedicated portal. The 16 Article 9 orders issued within 2025 itself broke down as: seven cases of incitement to hatred (Volksverhetzung), four cases of Holocaust denial, three cases involving symbols of unconstitutional organizations, and two involving violence or violations of human dignity. Of those 16 orders, nine pieces of content were confirmed deleted - a 56.3% confirmed deletion rate. The legal basis in each case was the German Interstate Treaty on Youth Media Protection. Article 10 information orders came primarily from police, with 15 confirmed executed.
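The order figures above can be tallied in a few lines of Python. This is a minimal sketch using only the numbers quoted in this article; the dictionary keys and variable names are made up for illustration.

```python
from decimal import Decimal, ROUND_HALF_UP

# Article 9 orders issued within 2025, by category (figures from the article).
article_9_orders_2025 = {
    "incitement to hatred (Volksverhetzung)": 7,
    "Holocaust denial": 4,
    "symbols of unconstitutional organizations": 3,
    "violence or violations of human dignity": 2,
}
confirmed_deleted = 9

issued = sum(article_9_orders_2025.values())

# Decimal with half-up rounding: 9/16 is exactly 56.25%, and Python's
# built-in round() would resolve that tie to 56.2 rather than 56.3.
deletion_rate = (Decimal(100 * confirmed_deleted) / Decimal(issued)).quantize(
    Decimal("0.1"), rounding=ROUND_HALF_UP
)

# Orders received via the portal under Articles 9 and 10.
orders_received = 19 + 23

print(issued)           # 16
print(deletion_rate)    # 56.3
print(orders_received)  # 42
```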

Trusted Flaggers: 12.9% approval rate

The DSC certified three new Trusted Flaggers in 2025 - organizations whose illegal-content reports platforms must prioritize under Article 22 DSA: Bundesverband Onlinehandel e.V. (BVOH), HateAid gGmbH, and Verbraucherzentrale Bundesverband e.V. (vzbv). Together with Meldestelle REspect!, certified in 2024, Germany had four at year-end.

The approval rate was 12.9%: four approved from 31 total applications. The DSC applies strict standards deliberately - platforms must legally prioritize Trusted Flagger reports, so a weak or non-independent organization holding the designation would distort that system. High rejection rates are, by the agency's own account, a design feature rather than a processing bottleneck.

One new out-of-court dispute resolution body was certified in 2025. Platform Control (KLN information service UG), certified on November 4, 2025, handles disputes covering Google Maps, YouTube, Reddit, Tinder, Hinge, and OkCupid. User Rights GmbH, certified in 2024, received an expanded scope on June 25, 2025, adding Facebook and Pinterest.

Researcher data access: 75% of applications withdrawn

The implementing regulation enabling researcher access to VLOP and VLOSE data under Article 40 DSA entered into force on October 29, 2025. The first application reached the German DSC within 24 hours. By December 31, eight applications had been submitted. Six - 75% - were subsequently withdrawn. Applicants found that the lower-barrier Article 40(12) access route was sufficient for their needs and switched to it. One was resubmitted in amended form; the DSC completed an initial assessment and forwarded it to the relevant platform's home-country coordinator on December 29, 2025. The report expects application volumes to grow continuously into 2026.

European enforcement and what it shows by comparison

The German domestic picture sits alongside more active EU-level enforcement. The Commission fined X 120 million euros on December 5, 2025. X terminated the Commission's advertising account two days later. Also on December 5, TikTok made binding commitments on its advertising database following the Commission's preliminary findings. Those preliminary findings against TikTok and Meta were reported in October 2025. AliExpress made binding commitments in June 2025. The Commission opened proceedings against four major pornography platforms in May. The German DSC contributed information to all of these cases - but the fines and binding commitments came from Brussels, not Bonn.

Political advertising regulation adds scope without staff

The EU regulation on the transparency and targeting of political advertising (TTPA, abbreviated TTPW in German) entered full force on October 10, 2025. Meta blocked EU political ads as it took effect. Google had already restricted EU political advertising to official communications only. The German government provisionally named the DSC as national contact point pending a national implementing law that had not passed by year-end. Six months after the regulation took effect, Germany's BVDW association published the first practical guidance for running political ads under the TTPA rules - illustrating how fragmented implementation guidance remained well into 2026. The DSC's TTPA coordination role is arriving on top of an existing enforcement caseload, before the staffing gap from the DSA mandate has been resolved.

Why this matters for platforms and advertisers

For the digital advertising industry, the 1.3% conversion rate and zero-fine outcome have a practical meaning. Platforms operating in Germany can observe that, in 2025, the national enforcement authority converted a small fraction of user complaints into proceedings and escalated none of them to financial penalties. The structural reasons - jurisdictional routing to Ireland, staffing at 37% of projected need, budget uncertainty for most of the year - are documented in the report. They are also visible to the platforms being regulated.

The 26 proceedings do target commercially significant provisions. Article 16 compliance - which German businesses have been documented abusing to suppress negative reviews at scale - directly affects how advertisers and sellers interact with platform moderation systems. Article 30 trader traceability touches every brand selling via an online marketplace with a German legal presence. The January 2025 court ruling confirming DSA platform liability added commercial pressure to over-remove content, which in turn creates the conditions for the review-removal abuse the DSC is also investigating.

The report is candid about its constraints without offering a timeline for resolving them. Whether Germany's legislature allocates the remaining positions - and when - will determine how quickly those 20 open proceedings move toward conclusions that platforms actually feel.

Timeline

- November 2022 - Digital Services Act enters into force; DSA and DMA overview on PPC Land

- February 17, 2024 - DSA becomes fully applicable for all platforms

- January 7 - February 23, 2025 - DSC holds emergency election-integrity meetings with X, Google, TikTok, Meta, and Microsoft

- January 15, 2025 - Düsseldorf court rules platforms can be held liable as disruptive parties under DSA; PPC Land coverage

- March 27, 2025 - Data Protection Conference establishes liaison group with the DSC

- April 2025 - DSC launches @DSC bimonthly stakeholder dialogue series

- May 2025 - Commission opens proceedings against Pornhub, Stripchat, XNXX, and XVideos over minor protection risks

- June 18, 2025 - AliExpress provides binding commitments on advertising transparency and researcher data access

- June 25, 2025 - User Rights GmbH receives expanded dispute resolution scope covering Facebook and Pinterest

- July 2025 - Commission issues Article 28 DSA guidelines on minor protection

- July 25, 2025 - U.S. House Judiciary Committee releases report on DSA and American political speech; PPC Land coverage

- August 2025 - German businesses documented abusing DSA Article 16 to suppress online reviews; PPC Land coverage

- October 6, 2025 - Meta blocks political ads in EU; PPC Land coverage

- October 10, 2025 - TTPA political advertising transparency regulation (TTPW in German) enters full force

- October 24, 2025 - Commission makes preliminary findings of violations by TikTok and Meta; PPC Land coverage

- October 29, 2025 - Researcher data access portal launches; first German DSC application received within 24 hours

- November 4, 2025 - Platform Control certified as out-of-court dispute resolution body; PPC Land coverage

- November 2025 - Irish DSC opens proceedings against X over Article 20 DSA

- December 5, 2025 - Commission fines X 120 million euros; TikTok provides binding commitments on advertising database; PPC Land coverage on X fine

- December 7, 2025 - X terminates Commission advertising account; PPC Land coverage

- December 2025 - Irish DSC opens proceedings against TikTok and LinkedIn over Article 16 DSA

- April 30, 2026 - Federal Network Agency publishes DSC 2025 activity report

Summary

Who: The Digital Services Coordinator (DSC) within Germany's Federal Network Agency (Bundesnetzagentur), supported by the Landesmedienanstalten, the KidD, and the BfDI.

What: The DSC's 2025 activity report documents 2,033 formal DSA complaints received, 26 administrative proceedings opened, 10 closed through voluntary platform compliance, zero fines issued by any German DSA authority, 42 orders received under Articles 9 and 10, three new Trusted Flaggers certified (four in total), one new out-of-court dispute resolution body certified (two in total), and eight researcher data access applications received - six of which were withdrawn.

When: Published April 30, 2026, covering 2025 - the first full year of DSA enforcement in Germany.

Where: Germany, operating within the EU-wide DSA framework. Most complaints involving the largest platforms are routed to Ireland, where those companies hold their European legal registration, requiring cross-border cooperation through the AGORA exchange system.

Why: The DSC is required under Article 55 DSA and Section 17 of Germany's Digital Services Law (DDG) to publish an annual activity report accountable to the national legislature and the European Commission. The 2025 report reveals the structural constraints - jurisdictional routing, staffing at 37% of estimated need, nine months of provisional budget management, and an unplanned election-integrity workload - that shaped an enforcement year characterized by process-building rather than penalties.