Google today made Canvas in AI Mode available to every user in the United States without requiring a Search Labs opt-in, removing the experimental barrier that had previously limited the feature to a smaller pool of early testers. The announcement, dated March 4, 2026, also introduces new capabilities: Canvas now supports creative writing and coding tasks, expanding well beyond its original focus on planning and organising information.

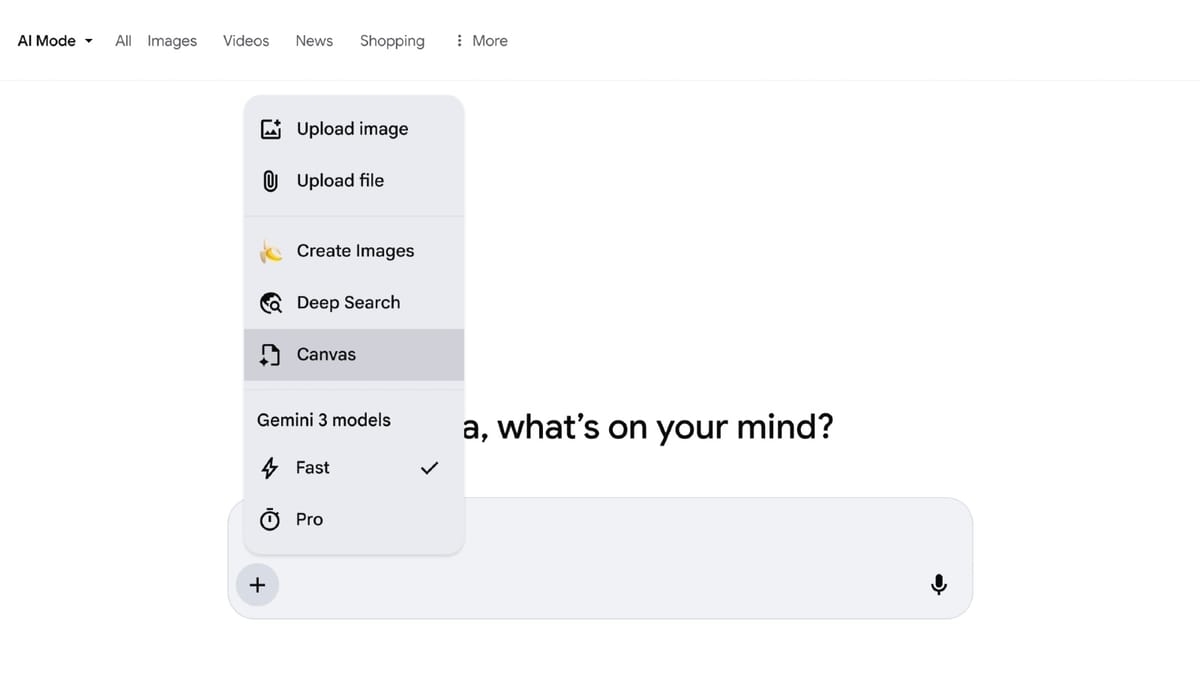

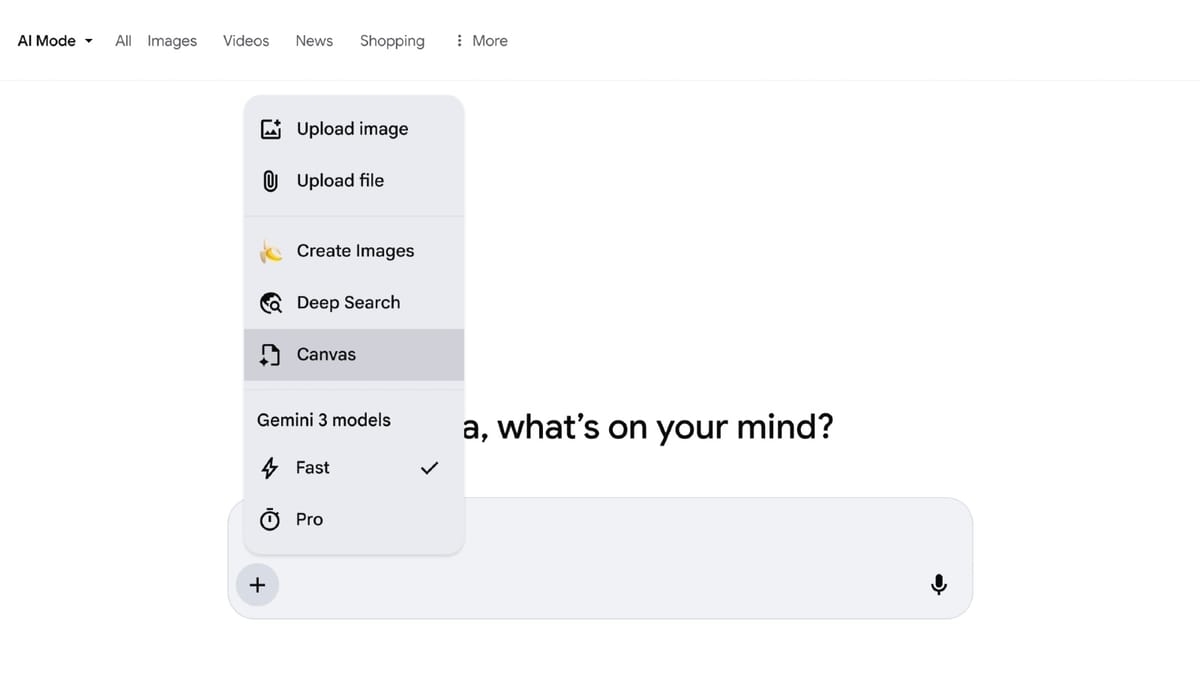

The rollout marks a notable shift in how Google is positioning the feature. Until today, Canvas had operated within the Labs experiment framework, meaning users had to actively enrol in Search Labs to access it. General availability changes that dynamic entirely. Any U.S. user searching in English can now open Canvas directly from the tool menu - the plus (+) icon - within AI Mode, without any additional account settings or subscription.

What Canvas does and how it works

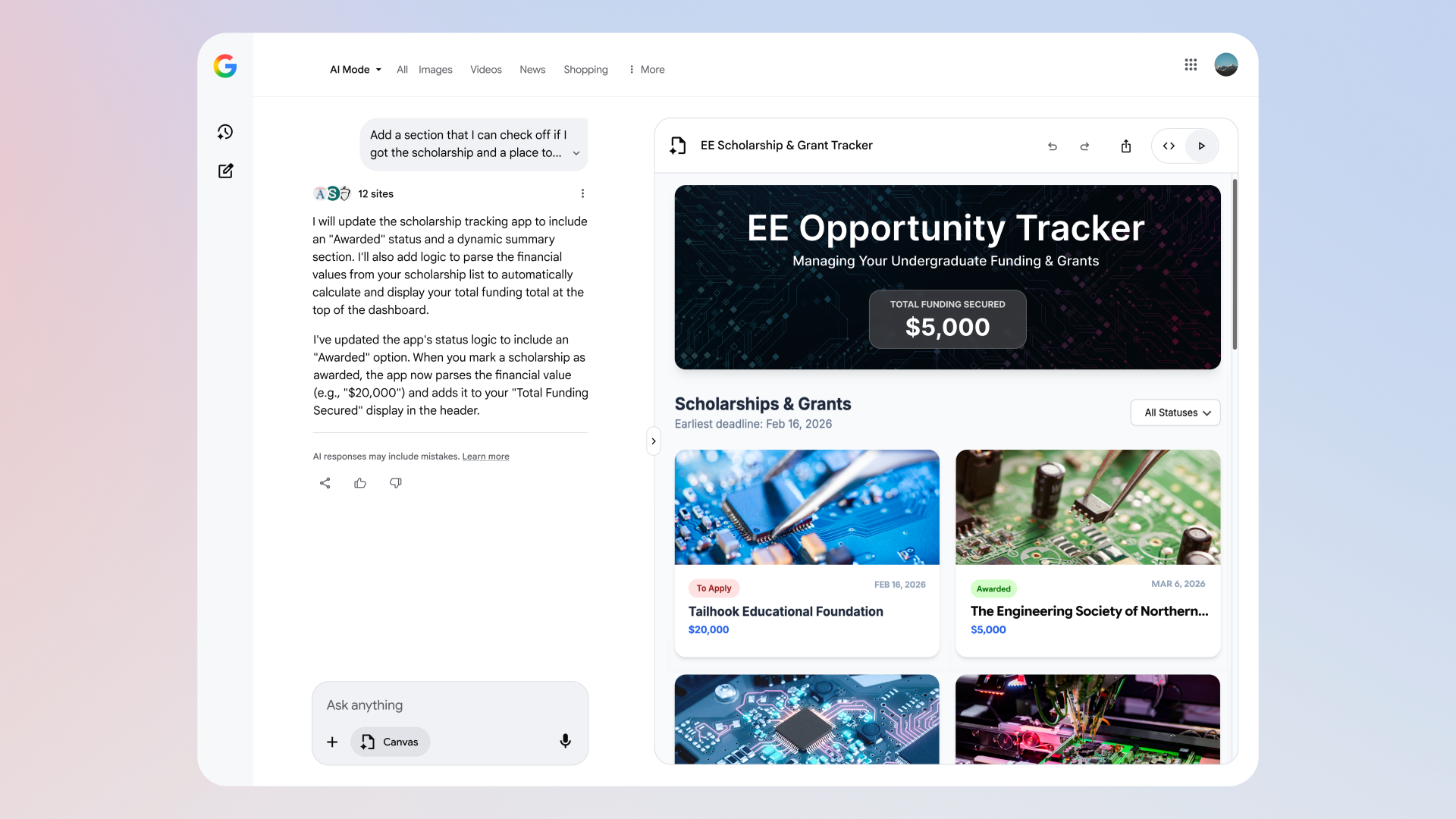

Canvas is a persistent side panel that sits alongside the main AI Mode conversation in Google Search. According to Google's announcement, it offers "a dedicated, dynamic space to organize your plans and projects over time." The practical mechanics are straightforward. A user selects the Canvas option from the tool menu, describes what they want to create, and receives a working prototype in the side panel. That prototype draws from two sources simultaneously: real-time information from the web and data from Google's Knowledge Graph.

From that starting point, the workflow is iterative. Users can test the functionality of whatever was built, toggle to view the underlying code, and refine through conversational follow-ups. The feature is explicitly designed for multi-session work - projects can be picked up and continued through the AI Mode history panel rather than rebuilt from scratch each time.

The addition of creative writing and coding support is the substantive capability expansion accompanying the general availability launch. According to Google, users can now "draft documents or create custom, interactive tools right within Search." The coding capability in particular means Canvas can produce functional, interactive outputs - not just text summaries or bullet-pointed plans.

Google cited a concrete example from early testers: a dashboard built to visualise and track information about academic scholarships, including requirements, deadlines, and dollar amounts. The example illustrates how Canvas bridges the gap between a search query and a usable artefact - a prototype tool built through natural language rather than through a separate development environment.
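To make the scholarship example more tangible, here is a minimal Python sketch of the kind of data model such a tracker might sit on. It is not code produced by Canvas or published by Google. The `Scholarship` class, the `upcoming` and `total_available` helpers, and all sample entries are hypothetical, chosen only to mirror the three fields Google mentioned: requirements, deadlines, and dollar amounts.

```python
from dataclasses import dataclass, field
from datetime import date


@dataclass
class Scholarship:
    """One row in a hypothetical scholarship tracker."""
    name: str
    amount_usd: int
    deadline: date
    requirements: list[str] = field(default_factory=list)


def upcoming(scholarships: list[Scholarship], today: date) -> list[Scholarship]:
    """Return scholarships whose deadline has not passed, soonest first."""
    open_items = [s for s in scholarships if s.deadline >= today]
    return sorted(open_items, key=lambda s: s.deadline)


def total_available(scholarships: list[Scholarship]) -> int:
    """Sum the dollar amounts of the given scholarships."""
    return sum(s.amount_usd for s in scholarships)


# Made-up sample data, for illustration only.
SAMPLE = [
    Scholarship("Example STEM Award", 5000, date(2026, 5, 1), ["3.5 GPA", "Essay"]),
    Scholarship("Example Arts Grant", 2000, date(2026, 3, 1), ["Portfolio"]),
    Scholarship("Example Community Fund", 1500, date(2026, 4, 15), ["Volunteer hours"]),
]

if __name__ == "__main__":
    # List the scholarships still open on the announcement date.
    for s in upcoming(SAMPLE, today=date(2026, 3, 4)):
        print(f"{s.deadline.isoformat()}  ${s.amount_usd:>6,}  {s.name}")
```

A real Canvas output would presumably wrap something like this in an interactive interface; the sketch covers only the filtering and sorting logic a deadline tracker needs.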

A feature that has been building since July 2025

Canvas did not appear from nowhere today. The feature's history stretches back to July 29, 2025, when Google first introduced Canvas functionality alongside Search Live with video input and enhanced Chrome desktop integration. At that point, Canvas was positioned around study planning - allowing students and researchers to build structured plans in a dynamic side panel that updated as they went. The July 2025 version was limited to desktop browsers in the U.S., and only for users enrolled in the AI Mode Labs experiment.

The travel planning dimension came next. On November 17, 2025, Google expanded Canvas to cover itinerary building, integrating real-time flight and hotel data from Google Search alongside location details, reviews, and photos from Google Maps. That version of Canvas generated hotel comparisons based on pricing and amenities, suggested restaurants and activities optimised by travel time, and allowed users to refine plans through follow-up questions. Travel planning with Canvas remained desktop-only at that stage and required Labs enrolment.

By January 14, 2026, Google had detailed six distinct ways Canvas could be used for trip planning, including accepting image uploads from users who had aesthetic preferences but no specific destination in mind. That piece of functionality - submitting a photo to prompt destination matching - showed how Canvas was moving toward genuinely multimodal interaction.

Today's announcement consolidates all of that earlier work into a single, broadly accessible product. The Labs experiment phase is over for U.S. English users. Creative writing and coding support represent the next layer of functionality built on top of the planning and travel features already in place.

Technical architecture: Knowledge Graph, web data, and code execution

The technical underpinning of Canvas involves several components working together. According to Google, the side panel "pulls together the freshest information from the web and Google's Knowledge Graph" when generating a prototype. This dual data sourcing matters because it means Canvas responses are not purely based on pre-trained model knowledge - they draw from current web information while also benefiting from the structured entity data in the Knowledge Graph, which includes verified facts about organisations, places, products, and more.

The coding capability adds another dimension. When Canvas generates an interactive tool or dashboard, it produces executable code rather than a static representation. Users can toggle between the rendered output - what the tool looks like and how it behaves - and the underlying code. This means a user who wants to understand or modify what was built has direct access to the source, not just the surface. Conversational refinement then works on both levels: a natural language follow-up can change the tool's behaviour or its visual design.

The Knowledge Graph integration is particularly relevant for tasks that require structured, factual data - scholarship requirements, event schedules, product specifications - rather than open-ended prose generation. Combining live web data with Knowledge Graph facts gives Canvas access to both the breadth of indexed web content and the precision of verified structured data.
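Google has not published how Canvas reconciles its two data sources. Purely to illustrate why pairing them can be useful, the Python sketch below assumes a simple rule: structured values win on conflict, and web-derived values fill whatever the structured record lacks. The `merge_record` function, the field names, and the sample values are all hypothetical.

```python
def merge_record(structured: dict, web: dict) -> dict:
    """Combine two field maps, letting structured values win on conflict.

    Fields that are missing (or None) in the structured record are
    filled from the web-derived record. This precedence rule is an
    assumption made for illustration, not a documented Google behaviour.
    """
    merged = {k: v for k, v in web.items() if v is not None}
    merged.update({k: v for k, v in structured.items() if v is not None})
    return merged


if __name__ == "__main__":
    # Made-up records describing the same scholarship.
    structured = {
        "name": "Example Scholarship",
        "sponsor": "Example Foundation",
        "deadline": None,  # not present in the structured source
    }
    web = {
        "name": "example scholarship 2026",
        "deadline": "2026-05-01",
        "amount_usd": 5000,
    }
    print(merge_record(structured, web))
```

In this toy case the canonical name and sponsor come from the structured record, while the deadline and amount come from the web record, which is the breadth-plus-precision trade-off described above.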

Context: AI Mode's path from Labs to mainstream

Canvas's general availability comes within a broader AI Mode expansion that has unfolded steadily over the past year. Google opened AI Mode to all U.S. users without a waitlist in May 2025, extended it to Workspace accounts in July 2025, and expanded it to over 40 more countries and 35 languages in October 2025, bringing total availability to more than 200 countries and territories. By the third quarter of 2025, AI Mode had reached 75 million daily active users, according to figures disclosed in Alphabet's Q3 earnings call.

The feature's technical core - a custom version of Gemini 2.5 with query fan-out processing that simultaneously searches across multiple subtopics - has remained consistent even as the interface and capabilities have grown. AI Mode queries tend to run two to three times longer than standard search inputs, reflecting the complex, exploratory use cases the feature is designed to serve.
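The general idea behind query fan-out can be sketched in a few lines of Python. This is a generic concurrency pattern, not Google's implementation: `search_subtopic` is a hypothetical stand-in that returns fake results, and the subtopics are invented. The point is only that one request per subtopic runs concurrently and the results are gathered back together.

```python
import asyncio


async def search_subtopic(subtopic: str) -> list[str]:
    """Hypothetical stand-in for a real search call; returns fake titles."""
    await asyncio.sleep(0)  # yield control, as a network call would
    return [f"{subtopic}: result {i}" for i in (1, 2)]


async def fan_out(subtopics: list[str]) -> dict[str, list[str]]:
    """Run one search per subtopic concurrently and collect the results."""
    # asyncio.gather returns results in the same order as its inputs.
    results = await asyncio.gather(*(search_subtopic(t) for t in subtopics))
    return dict(zip(subtopics, results))


if __name__ == "__main__":
    topics = ["scholarship deadlines", "eligibility rules", "award amounts"]
    for topic, hits in asyncio.run(fan_out(topics)).items():
        print(topic, "->", hits)
```

How a production system decomposes a query into subtopics and merges the answers is the hard part, and the sketch leaves it out entirely.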

Canvas sits within that framework as a tool for users who need to move beyond a single AI response toward something persistent and actionable. The original AI Mode value proposition was multi-turn conversation within a session; Canvas extends that to multi-session persistence and now to code execution and document drafting.

In December 2025, Google began testing seamless transitions from AI Overviews directly into AI Mode on mobile devices globally, positioning AI Mode as a natural escalation path from a standard search result rather than a separate destination. That test added an "Ask Anything" button at the bottom of expanded AI Overview results. Canvas, now available to all U.S. users, represents the endpoint of a user journey that can begin with a simple search query and end with a functional, customised tool.

What this means for the marketing and advertising community

For marketers and advertising professionals, Canvas's general availability raises several practical questions. The feature's ability to generate interactive dashboards, custom tracking tools, and structured plans from natural language inputs could change how some professionals prototype and test ideas before committing to more developed tools. A paid search manager building a tracking framework for a campaign, or an analyst trying to visualise performance data across different dimensions, could use Canvas to get a working prototype without writing code from scratch.

More structurally, the general availability of Canvas - and the removal of the Labs requirement - is another step in Google's ongoing effort to make AI Mode the default search experience rather than an optional experiment. A Google product manager signalled in September 2025 that AI Mode was heading toward becoming the default search interface, and the data from Alphabet's Q3 earnings confirmed that AI Mode drives incremental commercial query growth.

The shift matters for publishers and content creators, too. Canvas does not simply surface links - it synthesises information into structured outputs. When a user builds a scholarship dashboard or a travel itinerary through Canvas, the underlying web sources contribute data that is reorganised into a new artefact. This continues a pattern that has been a recurring concern in the search industry: AI-powered search features can reduce click-through rates to original sources while still drawing on their content. Whether Canvas's interactive tool outputs are viewed differently from standard AI Overviews in terms of traffic implications is not yet clear.

The coding functionality deserves specific attention from the marketing technology community. Canvas can produce functional web-based tools - prototypes that could be embedded, shared, or iterated upon. This positions Google Search not just as a research interface but as a lightweight development environment for users who need custom tools quickly. The implications for how marketers build quick-and-dirty tracking dashboards, calculators, or comparison tools are worth watching.

Availability details

The general availability is limited to the United States and to searches conducted in English. There is no subscription requirement and no Labs opt-in needed. Access comes through the plus (+) icon in the AI Mode tool menu. Previous iterations of Canvas that remain available in other contexts - including travel planning via Canvas for Labs users, and the earlier study planning functionality - build on the same underlying infrastructure.

Google has not specified a timeline for expanding Canvas general availability to other countries or additional languages, though AI Mode itself now operates across more than 200 countries and territories following the October 2025 expansion.

Timeline

- July 29, 2025 - Google introduces Canvas in AI Mode for the first time, alongside Search Live with video input and enhanced Chrome desktop integration. Available on desktop for U.S. Labs users only, focused on study planning.

- October 7, 2025 - Google expands AI Mode to over 40 countries and 35 languages, bringing total availability to more than 200 countries and territories.

- November 5, 2025 - A prediction circulates that Google will merge AI Mode, AI Overviews, and Web Guide into a unified interface.

- November 17, 2025 - Google extends Canvas to travel planning, integrating real-time flight and hotel data, Google Maps details, and reviews into itinerary-building for U.S. Labs users on desktop.

- December 1, 2025 - Google begins testing direct AI Mode access from AI Overviews on mobile, globally, adding an "Ask Anything" button at the bottom of expanded summaries.

- January 14, 2026 - Google details six Canvas travel planning use cases, including image-upload-based destination matching.

- March 4, 2026 - Google makes Canvas in AI Mode available to all U.S. users in English with no Labs opt-in required, adding creative writing and coding support.

Summary

Who: Google, announced via The Keyword blog on March 4, 2026. The communication was also distributed by Robert Scala of Milltown Partners to industry contacts including PPC Land.

What: Canvas in AI Mode is now available to all users in the United States searching in English, with no Search Labs opt-in required. The feature gains support for creative writing and coding tasks, allowing users to draft documents and build interactive, functional tools directly within Google Search. Canvas draws from real-time web data and Google's Knowledge Graph, and generates executable code that users can view, test, and refine through conversational follow-ups.

When: The announcement was published on March 4, 2026. The feature became available the same day.

Where: Available in the United States, in English, across Google Search via the AI Mode interface. No specific device restriction is mentioned for the general availability release; earlier iterations were desktop-only for Labs users.

Why: The move takes Canvas out of its experimental phase and positions it as a standard part of the AI Mode experience for U.S. users. It reflects Google's broader strategy of making AI Mode the primary search interface, building on a year of incremental capability additions and geographical expansions that began with AI Mode's initial launch in March 2025.