Nick Fox, Google's senior vice president for knowledge and information products, today laid out in unusually granular detail how the company is restructuring search, maps, and advertising around reasoning models and agentic AI - including specific technical choices, deployment timelines, and product examples that rarely surface in public statements. The interview, published on April 23, 2026, on Bernard Marr's Future of Business and Technology podcast on YouTube, covered AI Mode's architecture, the agentic restaurant booking feature, personal intelligence, and the internal tooling shift that Fox says has made Google operate faster than at any point in its history.

Fox has led the knowledge and information division since October 2024, when Google reorganized its AI and search teams and moved Prabhakar Raghavan to a chief technologist role. The division, which includes search, maps, commerce, and ads, is one of the most consequential product portfolios in consumer technology. Fox has been at Google for more than 20 years.

The query fan-out: hundreds of searches behind a single answer

The mechanism Fox described as the "biggest leap forward" in AI Mode is what Google calls query fan-out. When a user submits a query to AI Mode, the model does not simply retrieve results for that literal query. Instead, according to Fox, the system breaks the original request into "tens, maybe hundreds, maybe even thousands" of sub-queries, issues those against the web simultaneously, and synthesizes the results into a single response with links.
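Fox's description of fan-out maps onto a familiar concurrency pattern: split one request into many, run them in parallel, then merge the results. The Python sketch below illustrates only that pattern. `decompose`, `search`, and the example.com URLs are hypothetical stand-ins, the model-driven synthesis step is omitted, and nothing here should be read as how AI Mode is actually built.

```python
import asyncio

def decompose(query: str) -> list[str]:
    """Hypothetical planner: a production system would ask a model to write
    the sub-queries; three fixed templates keep this sketch self-contained."""
    return [f"{query} {facet}" for facet in ("opentable", "resy", "reviews")]

async def search(sub_query: str) -> list[tuple[str, str]]:
    """Hypothetical search backend returning (url, snippet) pairs."""
    await asyncio.sleep(0.01)  # stand-in for network latency
    slug = sub_query.replace(" ", "-")
    return [(f"https://example.com/{slug}", f"snippet for {sub_query!r}")]

async def fan_out(query: str) -> dict[str, str]:
    sub_queries = decompose(query)
    # Issue all sub-queries at once: total latency tracks the slowest call,
    # not the sum of all calls.
    batches = await asyncio.gather(*(search(q) for q in sub_queries))
    # Merge the batches, keeping the first snippet seen for each URL so every
    # source appears once in the linked answer.
    merged: dict[str, str] = {}
    for batch in batches:
        for url, snippet in batch:
            merged.setdefault(url, snippet)
    return merged

if __name__ == "__main__":
    for url in asyncio.run(fan_out("sushi for two friday")):
        print(url)
```

Scaling from three sub-queries to hundreds changes only the length of the list passed to `asyncio.gather`. In practice a real system would also need rate limiting and timeouts.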

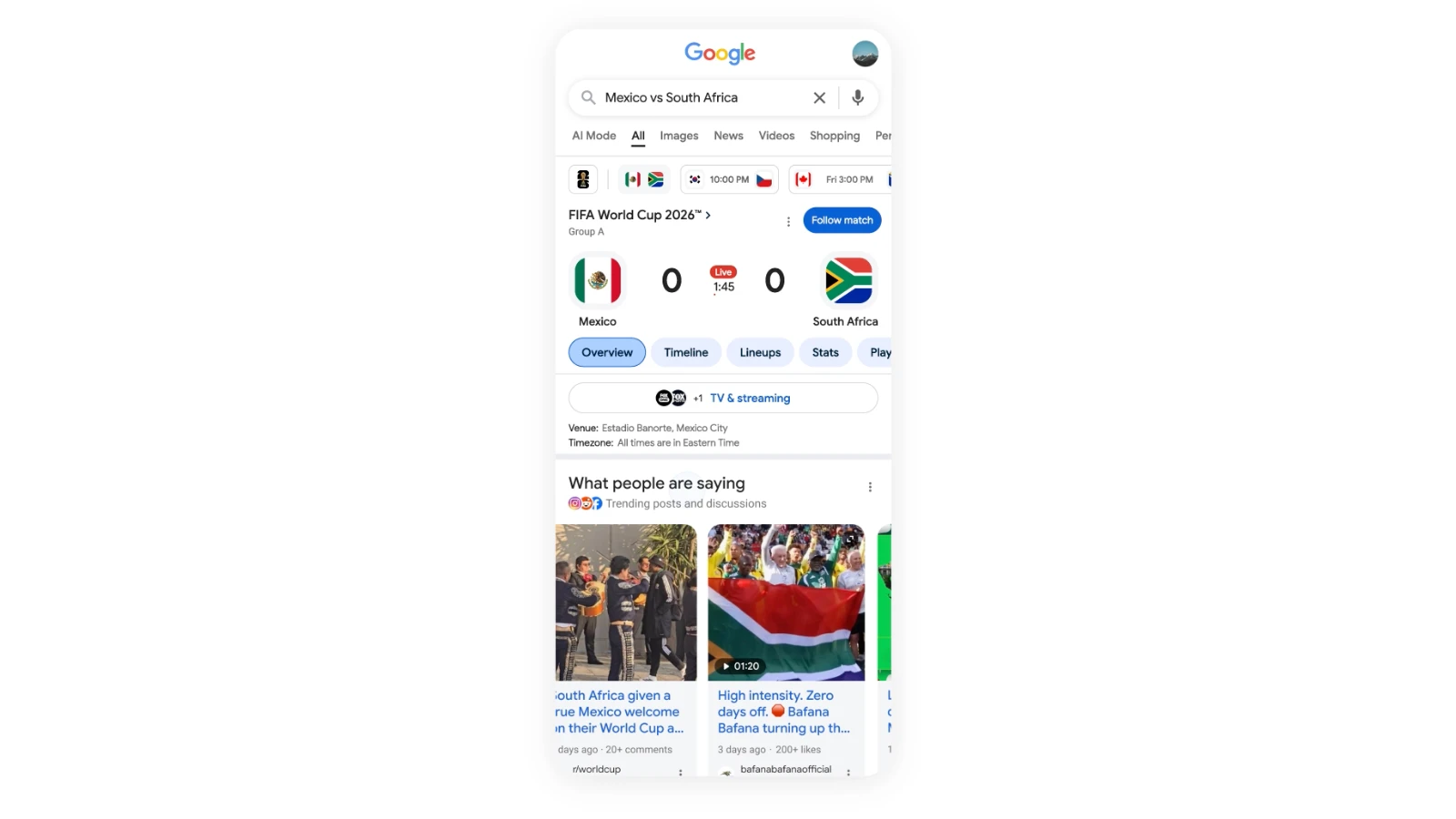

Fox gave a concrete example from his own use: planning a last-minute dinner reservation in San Francisco for two people on a Friday night. Normally, he said, a user would need to check multiple booking platforms - OpenTable, Yelp, Resy, and others - because restaurants are distributed across different systems. AI Mode fanned out to all of those platforms simultaneously, cross-referenced availability against personal preferences and prior visits, and returned a ranked list. He said it reduced what would have been roughly 20 minutes of manual checking to 30 seconds.

The architecture behind AI Mode has attracted scrutiny from technical researchers. As PPC Land reported in April 2026, controlled experiments by SEO agency DEJAN found that AI Mode appears to draw from a proprietary content store called FastSearch rather than the live web index used by classic Search. FastSearch is based on RankEmbed signals and retrieves fewer documents than full-ranked Search to reduce latency, according to court documents filed in Google's antitrust case. Fox did not address that distinction in the interview, describing AI Mode's process simply as issuing multiple queries across the web.

The fan-out approach is also central to what Fox described as the reasoning shift. Older search systems ranked pages. AI Mode, by contrast, reasons across the results of many parallel queries to construct an answer. Fox used the example of looking for a sunset spot while on vacation: the system needed to know his current location, the exact time of sunset, how long it would take to drive to various beaches, parking conditions, and how to build in a margin so the family arrived before the sun dropped. "Those are the questions that we all have in our heads," he said. "These are the questions that we actually care about."
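At its core, the sunset question is a backwards time calculation. The minimal Python sketch below uses hypothetical drive, parking, and margin times to show the arithmetic the answer depends on. It says nothing about how AI Mode actually gathers those inputs or reasons over them.

```python
from datetime import datetime, timedelta

def latest_departure(sunset: datetime, drive: timedelta,
                     parking: timedelta, margin: timedelta) -> datetime:
    """Work backwards from sunset: subtract the drive, the time to park
    and walk to the sand, and a safety margin."""
    return sunset - drive - parking - margin

def reachable_beaches(now: datetime, sunset: datetime,
                      beaches: dict[str, tuple[timedelta, timedelta]],
                      margin: timedelta) -> list[str]:
    """Return the beaches (name -> (drive, parking)) still reachable in time."""
    return sorted(name for name, (drive, parking) in beaches.items()
                  if latest_departure(sunset, drive, parking, margin) >= now)

# Hypothetical inputs: sunset at 20:31, current time 19:45.
sunset = datetime(2026, 7, 4, 20, 31)
beaches = {
    "North Beach": (timedelta(minutes=25), timedelta(minutes=10)),  # leave by 19:41
    "South Beach": (timedelta(minutes=10), timedelta(minutes=5)),   # leave by 20:01
}
print(reachable_beaches(datetime(2026, 7, 4, 19, 45), sunset, beaches,
                        timedelta(minutes=15)))  # ['South Beach']
```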

Agentic booking expands, but the last click stays with the user

The restaurant booking feature Fox described launched in the United States roughly six months before the interview and, according to Fox, has recently expanded to other countries. Google extended agentic restaurant booking to the United Kingdom on April 10, 2026, with eight booking platforms including TheFork and OpenTable integrated into the interface. In November 2025, Google had announced agentic booking as part of a broader travel update that also brought Flight Deals to more than 200 countries and Canvas travel planning to AI Mode on desktop.

Fox was careful about the boundaries of the feature. He noted that even in his own use case (a sushi restaurant for two on a Friday night), AI Mode surfaced the options and found an available table at a place he would not otherwise have tried, but he completed the booking himself with a single tap. "When people are spending money, they like to have some agency over that last step," he said.

That framing matters for how marketers read the commercial stakes. PPC Land has reported that agentic features shift the moment of conversion inside Google's interface, potentially bypassing a restaurant's own website, its direct booking system, and any conversion tracking implemented there. When John Mueller described agentic reservation features in November 2025, he framed them as AI navigating websites on behalf of users - not as a Google-hosted transaction layer. Fox's description is consistent with that: the booking finalises through the partner platform, not inside Google.

What Google has built is effectively a meta-aggregator. It queries OpenTable, Resy, Tock, and their equivalents simultaneously, reasons across availability and personal preferences, and delivers a shortlist. The structural parallel to how Google intermediates between users and websites in organic search is plain, and Fox acknowledged the tension around the web ecosystem explicitly.
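Reduced to its data flow, a meta-aggregator pools availability from several feeds, filters it against the request, collapses duplicate listings, and ranks what remains. The minimal Python sketch below assumes that flow. The restaurants, slots, and preference scores are invented, and the `Slot` type and `shortlist` function are hypothetical illustrations, not any platform's real API.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Slot:
    restaurant: str
    platform: str
    time: str        # "HH:MM" on a 24-hour clock, so strings sort by time
    party_size: int

# Hypothetical availability feeds, one list per booking platform.
FEEDS: dict[str, list[Slot]] = {
    "OpenTable": [Slot("Kappa", "OpenTable", "19:30", 2),
                  Slot("Nami", "OpenTable", "21:45", 2)],
    "Resy": [Slot("Kappa", "Resy", "20:00", 2),
             Slot("Umi", "Resy", "19:00", 4)],
    "Tock": [Slot("Sora", "Tock", "20:15", 2)],
}

def shortlist(party_size: int, earliest: str, latest: str,
              preference: dict[str, float], limit: int = 3) -> list[Slot]:
    """Merge every platform's slots, keep those that fit the request, keep one
    (the earliest) slot per restaurant, then rank by a preference score."""
    best: dict[str, Slot] = {}
    for slots in FEEDS.values():
        for s in slots:
            if s.party_size != party_size or not earliest <= s.time <= latest:
                continue
            current = best.get(s.restaurant)
            if current is None or s.time < current.time:
                best[s.restaurant] = s
    # Higher preference first; an earlier time breaks ties.
    ranked = sorted(best.values(),
                    key=lambda s: (-preference.get(s.restaurant, 0.0), s.time))
    return ranked[:limit]
```

The function stops at a shortlist. This matches Fox's description, in which completing the reservation is left to the user.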

Personal intelligence: opt-in context from Gmail and Photos

Fox spent considerable time on personal intelligence, the feature that connects Google's user data - Gmail, Google Photos, calendar, search history - to search and AI Mode responses. He framed its origins as user demand: people who already store data across Google's services found it jarring that AI Mode did not use any of it. "You didn't know any of that," he quoted users as saying. "And they're like, that's weird."

The feature is opt-in. Fox said not all users choose to enable it, and he described that as the correct outcome - evidence that the choice mechanism is working as intended. Personal intelligence launched for Gemini app subscribers on January 14, 2026. By late January, Google had added source icons to AI Mode showing which apps - Gmail, Photos, YouTube, or Search - contributed to a given response, with icons functioning as tappable citations.

Fox gave a detailed personal example. He was skiing in poor visibility and asked AI Mode which ski goggle lenses to use for low light. The system found an email his wife had sent him years earlier - a gift receipt for ski goggles containing details of two lens sets - and cross-referenced that with current weather conditions at his location to recommend the appropriate lens for the following day. He called it "subtly magical." The example is notable not because the capability is unprecedented but because it illustrates what the system requires to function well: a long history of stored data across multiple Google products, a specific query with implicit context, and a model capable of drawing connections across those sources.

The transparency mechanism Fox described rests on three elements: explicit opt-in, data that is already stored within Google rather than pulled from outside it, and visible attribution for which data sources informed the response. What the feature does not do, according to Google's own documentation noted in PPC Land's coverage, is automatically delete that data when a user disconnects an app. Users must separately manage their Gemini Apps activity if they want to prevent continued use.
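The three elements Fox described can be expressed as gating logic: use nothing personal without the opt-in, draw only on connected apps, and surface every app that contributed. The Python sketch below is a hypothetical illustration; `Settings`, `ContextItem`, and `assemble_context` are invented names. It governs only what a response may use. It does not model data retention, which, per the documentation cited above, is managed separately.

```python
from dataclasses import dataclass, field

@dataclass
class Settings:
    personal_intelligence: bool = False      # off until the user opts in
    connected_apps: set[str] = field(default_factory=set)

@dataclass(frozen=True)
class ContextItem:
    app: str    # e.g. "Gmail", "Photos", "YouTube", "Search"
    text: str

def assemble_context(settings: Settings,
                     candidates: list[ContextItem]) -> tuple[list[ContextItem], list[str]]:
    """Return the personal items a response may use plus the apps to cite.

    Nothing personal is used without the opt-in, only connected apps
    contribute, and every contributing app is surfaced for attribution."""
    if not settings.personal_intelligence:
        return [], []
    used = [c for c in candidates if c.app in settings.connected_apps]
    cited_apps = sorted({c.app for c in used})
    return used, cited_apps
```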

How ads fit into conversational search

One of the more commercially significant portions of the interview covered advertising. Fox's position was that the principles governing ads in traditional search - clear labeling, clear separation from organic results, relevance as the core value driver - will carry forward unchanged into AI-powered experiences.

He gave two examples to make this concrete. In the hotel booking scenario, the AI response might identify the top three hotels matching a user's specific criteria - proximity to a tube station, a pool for children, nearness to an office. Below that organic AI response, ad units could surface booking options: the hotel's own site, Booking.com, or other platforms where the reservation can be completed. The AI provides the recommendation; the ads provide a convenient transactional path.

In the second example, a user asks for home remedies to clean cat mess from a carpet. The organic AI response might describe baking soda and vinegar approaches. An adjacent ad unit could surface cleaning products available for purchase. According to Fox, the two layers are intentionally separate: the organic response addresses the question, and the ad provides an additional dimension or next step.

This structure is under active observation in the marketing industry. PPC Land documented in March 2026 that sponsored store units had been spotted inside AI Mode's product sidebar panels - a placement observed by search analyst Glenn Gabe during a shopping query for Gap men's chinos. The sponsored entry appeared above the organic "All stores" list within the same sidebar, following a structure similar to sponsored placements in standard Shopping results but relocated inside a conversational interface. Google opened AI Mode to all US users in May 2025 without a waitlist, and advertising integration within the interface has expanded incrementally since.

Fox also noted that Gemini models have produced a meaningful improvement in Google's ad prediction stack - the system that estimates, before an ad is shown, whether a user will click on it or convert. He described this as one of the first areas he worked on when joining Google, and said the improvement from applying Gemini models to that prediction problem is "a massive step up." With billions of queries and millions of advertisers, prediction at that scale requires AI to handle the matching problem.
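Fox gave no details of the prediction stack itself. The textbook reason prediction quality matters is that a predicted click probability multiplies the bid when candidate ads are compared, so a better model changes which ad is shown. The Python sketch below illustrates that general idea only. It is not a description of Google's auction, and the feature, weights, and ad names are hypothetical.

```python
import math

def predicted_ctr(relevance: float) -> float:
    """Toy click model: a logistic curve over one hypothetical relevance
    feature in [0, 1]. A learned model would use far richer signals."""
    return 1.0 / (1.0 + math.exp(-(3.0 * relevance - 4.0)))

def rank_ads(ads: list[dict]) -> list[dict]:
    """Order candidate ads by expected value per impression:
    bid multiplied by the predicted probability of a click."""
    return sorted(ads, key=lambda ad: ad["bid"] * predicted_ctr(ad["relevance"]),
                  reverse=True)

ads = [
    {"name": "generic-cleaner", "bid": 2.00, "relevance": 0.2},
    {"name": "pet-stain-remover", "bid": 1.00, "relevance": 0.9},
]
print([ad["name"] for ad in rank_ads(ads)])  # ['pet-stain-remover', 'generic-cleaner']
```

In this toy setup the more relevant ad wins despite the lower bid. That is the sense in which relevance acts as the core value driver Fox described.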

Ask Maps: specific questions for a spatial product

Fox discussed Google Maps as a product undergoing a parallel shift to the one in Search. He described two distinct user populations: those who use Maps for turn-by-turn navigation, and those who use it to understand the world around them and discover places. The second group is where AI changes the experience most substantially.

Ask Maps, which Fox said launched in the weeks before the interview alongside immersive navigation, allows users to pose specific, conditional questions. His example: instead of asking "what is a great Mexican restaurant nearby," a user can ask for a place with good shrimp tacos that is suitable for children, stays open into the late afternoon, and is close to home. The distinction matters, he argued, because the generic "best Mexican restaurant" answer and the genuinely specific answer are often different places.

Ask Maps draws on context from a user's lists within Maps, places previously visited, and current location. Results appear on the native map interface, making it easy to navigate directly to a recommendation. Fox described it as enabling "personal itineraries" within Maps.
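One way to see why a conditional question can yield a different answer than a generic one is to treat each clause as a hard filter and then rank what survives using personal context. The Python sketch below makes that assumption. The `Place` fields, the saved-list flag, and every place and coordinate are hypothetical, and the sketch is not how Ask Maps is implemented.

```python
import math
from dataclasses import dataclass

@dataclass
class Place:
    name: str
    dishes: set[str]
    kid_friendly: bool
    closes_at: int              # closing hour on a 24-hour clock
    lat: float
    lon: float
    on_saved_list: bool = False

def km_between(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Great-circle (haversine) distance in kilometres."""
    p1, p2 = math.radians(lat1), math.radians(lat2)
    dp, dl = p2 - p1, math.radians(lon2 - lon1)
    a = math.sin(dp / 2) ** 2 + math.cos(p1) * math.cos(p2) * math.sin(dl / 2) ** 2
    return 2 * 6371.0 * math.asin(math.sqrt(a))

def ask(places: list[Place], home: tuple[float, float], dish: str,
        with_kids: bool, open_until: int, max_km: float) -> list[Place]:
    """Treat every clause of the question as a hard filter, then rank places
    on the user's saved lists first and nearer places next."""
    def dist(p: Place) -> float:
        return km_between(home[0], home[1], p.lat, p.lon)
    matches = [p for p in places
               if dish in p.dishes
               and (p.kid_friendly or not with_kids)
               and p.closes_at >= open_until
               and dist(p) <= max_km]
    return sorted(matches, key=lambda p: (not p.on_saved_list, dist(p)))
```

A place that would top a generic "best Mexican" ranking drops out entirely here if it has no shrimp tacos.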

Google Maps has been expanding AI-powered discovery since at least early 2024, when it launched generative AI place discovery for Local Guides in the US. That feature used the same database of 250 million places and 300 million contributor insights that underpins current Maps functionality.

How Google tests new AI features before full rollout

Fox described a staged deployment process that the company has applied to each major AI feature in Search: internal testing, then a small group of external early adopters through what Google calls Labs, then live experiments at fractions of a percent of users - 0.1%, 1%, 5% - before reaching 100% deployment.

He applied this description to both AI Overviews and AI Mode, framing the latter as a larger step than Overviews but one that followed the same playbook. According to Fox, the approach lets the company observe whether users who find a feature jarring in its first few days come to like it after a week and love it after a month - a behavioral pattern that informs whether the rollout continues.
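Fox did not say how users are assigned to the 0.1%, 1%, and 5% stages. A common technique is deterministic hash bucketing, sketched below in Python under that assumption. The function names and the `"ai_mode"` feature key are hypothetical, and the internal and Labs stages, which work as allowlists rather than percentages, are not modeled.

```python
import hashlib

# The percentage stages Fox listed.
ROLLOUT_STAGES = [0.1, 1.0, 5.0, 100.0]

def bucket(user_id: str, feature: str) -> float:
    """Map a user to a stable value in [0, 100) for a given feature.

    Hashing feature and user together keeps assignment stable across
    sessions while giving each feature an independent split."""
    digest = hashlib.sha256(f"{feature}:{user_id}".encode()).digest()
    return int.from_bytes(digest[:8], "big") % 1_000_000 / 10_000

def is_enabled(user_id: str, feature: str, percent: float) -> bool:
    """True if the user falls inside the current rollout percentage.
    Raising the percentage only adds users; nobody already exposed drops out."""
    return bucket(user_id, feature) < percent
```

Because exposure is stable, the same users can be followed from day one to week one to month one. That is what observing whether "jarring" turns into "love" would require.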

AI Mode launched in Search Labs in March 2025 for Google One AI Premium subscribers, expanded to all US users in May 2025, and reached Google Workspace accounts in July 2025. It launched in India in June 2025 and in the United Kingdom in July 2025. Testing of an AI Mode button in the Google homepage search bar began on June 11, 2025.

Internal development: product managers building prototypes

Fox's comments on internal AI tooling were striking. He said Google engineers are now managing "coding agents" rather than writing code themselves, with code review agents and other automated systems in the workflow. He described this as "a massive sea change in how development works."

The shift extends beyond engineering. Product managers, according to Fox, can now build end-to-end working prototypes directly instead of writing product requirement documents. "Here is a prototype. Here is my idea. Here is actually how it is working." UI designers have access to the same capability. Fox described this as a democratisation of prototyping across the product development stack.

He gave a specific example from the Gemini 3 rollout in Search. The team was running evaluations across multiple slices - country, language, user age group, device type - trying to identify where the new model underperformed the previous one. Someone set an AI system running on the evaluation data at around 10 or 11 at night. By morning, it had produced a summary report: where the new model was working, where it was not, and what the potential root causes were for each gap. That overnight analysis informed model adjustments before the launch. Fox said this kind of acceleration applies across the entire product lifecycle, not just engineering.
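Fox said an AI system produced the overnight report, but he did not describe its method. The Python sketch below shows only the deterministic first step such an analysis might start from: comparing old and new model scores for each slice and flagging regressions. Root-cause analysis is not modeled, and the row fields and `min_drop` threshold are hypothetical.

```python
from collections import defaultdict

def slice_regressions(rows: list[dict], dims: list[str],
                      min_drop: float = 0.02) -> list[tuple[str, str, float, int]]:
    """Find slices where the new model underperforms the old one.

    Each row is one graded query: slice labels (e.g. country, language,
    device) plus 'old' and 'new' quality scores in [0, 1]. For every value
    of every dimension, report (dimension, value, mean delta, count) when
    the new model trails by more than min_drop."""
    totals = defaultdict(lambda: [0, 0.0, 0.0])   # count, old sum, new sum
    for row in rows:
        for dim in dims:
            t = totals[(dim, row[dim])]
            t[0] += 1
            t[1] += row["old"]
            t[2] += row["new"]
    report = []
    for (dim, value), (n, old_sum, new_sum) in sorted(totals.items()):
        delta = (new_sum - old_sum) / n
        if delta < -min_drop:
            report.append((dim, value, round(delta, 3), n))
    return report
```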

"I've never seen Google operating at the speed that it's operating at right now," he said.

The web ecosystem: a stated commitment with unresolved tensions

Fox explicitly identified himself as a "web optimist" and repeated Google's position that the company has a strong business incentive to ensure the web thrives. He said Google puts "far more effort" than any other AI product into making links work well within Search. As a recent example, he cited a new link experience in Chrome: clicking a link in AI Mode opens the destination in a side panel next to the AI response. The design is meant to reduce friction and, according to Fox, increases the rate at which users click through to the web.

The context for that claim is a web publishing industry under significant pressure. Between 2023 and 2025, Web Search's share of the Google traffic reaching news publishers fell from 51% to 27%, while Discover's share climbed. Fox addressed publishers directly on December 15, 2025, telling them on the AI Inside podcast that optimising for AI search requires the same approach as traditional SEO: build great sites, publish great content. That position drew sharp reactions from outlets experiencing steep traffic declines.

His argument for why content creators remain essential is structural: the models will only work well if great content continues to be produced. "It has to be the case," he said. Whether that structural dependence translates into material traffic or revenue for publishers is a question the interview did not resolve.

The ceiling on agents: user preference, not technology

Fox was asked where the ceiling is on what AI agents in search will eventually be able to do. He declined to identify a technological ceiling, but offered a different kind of constraint: user preference. He said he personally enjoys planning vacations - choosing hotels, deciding on seats, building anticipation by thinking through the trip in advance. An agent that booked everything automatically would remove something he values. Other people, he noted, feel differently. Clothes shopping is another case: some people find it an enjoyable activity they would not want to automate; others would happily delegate it.

His framing was that the product will give users a choice. Those who want more automation can have it; those who want to maintain personal agency at certain decision points can do so as well. Buying toilet paper, he offered as an example, is a task where most users would probably accept automation without feeling they had given something up.

For marketers, this framing has direct implications. How far agentic features compress or eliminate the user's path to a purchase decision, and how much of that path runs through Google's interface rather than a brand's own channels, will vary by category and by individual user preference. Tracking where that line sits across different verticals is a practical challenge that current tools and attribution models have not yet solved.

Timeline

- February 2024 - Google Maps launches generative AI-powered place discovery for Local Guides in the US, drawing on 250 million places and 300 million contributor insights

- August 2024 - Google expands AI Overviews to the UK, India, Japan, Indonesia, Mexico, and Brazil

- October 17, 2024 - Google reorganizes AI and search teams; Nick Fox takes over the Knowledge and Information division

- March 5, 2025 - Google reveals AI Mode in Search Labs for Google One AI Premium subscribers

- April 7, 2025 - Google adds multimodal image search capabilities to AI Mode

- April 11, 2025 - Google adds internal links within AI Overviews pointing to other Google Search pages

- May 1, 2025 - Google removes the waitlist for AI Mode, opening it to all US users over 18

- June 11, 2025 - Google begins testing an AI Mode button directly in the Google.com homepage search bar

- June 24, 2025 - AI Mode launches in India

- July 2, 2025 - Google extends AI Mode to Workspace accounts in the US

- July 9, 2025 - Google integrates AI Mode into Circle to Search on more than 300 million Android devices

- July 16, 2025 - Google launches automated business calling in Search for US users, covering most states

- July 28, 2025 - AI Mode launches in the United Kingdom

- July 30, 2025 - Google reports a 65% surge in visual searches as AI Mode drives multimodal adoption

- November 13, 2025 - Google launches agentic shopping features including autonomous checkout and Duplex-powered inventory calling

- November 17, 2025 - Google announces agentic booking for restaurants in AI Mode without Labs opt-in, plus Flight Deals expansion to 200+ countries

- December 1, 2025 - Google begins testing a path from AI Overviews directly into AI Mode on mobile, globally

- December 15, 2025 - Nick Fox tells publishers on the AI Inside podcast that AI search optimization requires the same approach as traditional SEO

- January 14, 2026 - Google launches personal intelligence for Gemini app subscribers, connecting Gmail, Google Photos, and other services to AI responses

- January 28, 2026 - Google adds source icons to AI Mode showing which apps contributed to a response

- March 25, 2026 - Sponsored store units spotted inside AI Mode product sidebar panels

- April 10, 2026 - Google launches agentic restaurant booking in AI Mode in the UK with eight booking partners

- April 21, 2026 - Researchers and antitrust filings reveal AI Mode draws from FastSearch rather than the live web index

- April 23, 2026 - Nick Fox interviewed by Bernard Marr on the Future of Business and Technology podcast; interview published on YouTube

Summary

Who: Nick Fox, senior vice president of knowledge and information products at Google, overseeing search, maps, commerce, and ads. The interview was conducted by Bernard Marr for his Future of Business and Technology podcast, published on April 23, 2026.

What: Fox described the technical architecture and product philosophy behind AI Mode, including the query fan-out mechanism that issues tens, hundreds, or even thousands of sub-queries behind a single user search, the agentic restaurant booking feature that aggregates availability across multiple platforms, the personal intelligence feature connecting Gmail and Google Photos to search responses, and the internal shift toward AI-assisted software development. He also said advertising will remain clearly labeled and separate from organic AI responses.

When: The interview was published on April 23, 2026, on YouTube. The features and deployments Fox discussed span a timeline from early 2025 through the present, with AI Mode first appearing in Search Labs in March 2025 and agentic booking reaching the UK as recently as April 10, 2026.

Where: The conversation covers Google's global search and maps products, with specific references to US deployments, UK expansions, and internal development practices at Google.

Why: The interview provides a rare detailed account from a senior Google executive of how AI reasoning models are being applied across the company's highest-traffic consumer products, with direct implications for advertisers, publishers, local businesses, and the booking platforms that are becoming infrastructure within Google's agentic ecosystem. For the marketing community, the key questions are how ad placements evolve inside conversational interfaces, how agentic transactions affect attribution and web traffic, and what personal intelligence means for targeting precision and user trust.
