Meta has announced a set of AI-powered age assurance measures aimed at placing teenagers in age-appropriate experiences on Instagram and Facebook, including a new visual analysis system that scans photos and videos for physical cues - such as height and bone structure - to estimate whether an account belongs to someone under 13.

The announcement, published May 5, 2026, on Meta's newsroom, details three overlapping initiatives: stronger enforcement of the under-13 ban using AI that reads contextual and visual signals across profiles; a geographic expansion of the company's age prediction technology to cover Instagram in the European Union and Brazil, as well as Facebook in the United States; and a parent notification program set to roll out in the US this month.

The timing is not coincidental. Just days before Meta published this update, the European Commission issued preliminary findings that Instagram and Facebook were in breach of the Digital Services Act for failing to adequately prevent children under 13 from accessing their platforms. Those findings, announced in Brussels on April 29, 2026, threaten fines of up to 6% of Meta's total worldwide annual turnover if they are ultimately confirmed.
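
For a sense of scale, the 6% ceiling is simple arithmetic. The short sketch below works it through; the turnover figure is a round placeholder chosen for illustration, not Meta's reported revenue, and the function name is hypothetical.

```python
def dsa_fine_ceiling(worldwide_annual_turnover: int, cap_percent: int = 6) -> int:
    """Return the maximum fine as a percentage of worldwide annual turnover."""
    return worldwide_annual_turnover * cap_percent // 100


# Placeholder turnover for illustration only; NOT a reported figure.
HYPOTHETICAL_TURNOVER_USD = 100_000_000_000

print(dsa_fine_ceiling(HYPOTHETICAL_TURNOVER_USD))  # prints 6000000000
```

At that placeholder turnover, the ceiling would be 6 billion dollars. Any actual fine would depend on the Commission's final decision and could be lower.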

What the visual analysis system actually does

The most technically specific part of today's announcement concerns visual analysis, which Meta is now adding to its suite of age detection tools. According to Meta's newsroom, the system allows AI to scan photos and videos for visual clues about a person's age that text alone might miss.

Meta was explicit that the technology does not constitute facial recognition. "Our AI looks at general themes and visual cues, for example height or bone structure, to estimate someone's general age; it does not identify the specific person in the image," according to the announcement. The distinction matters from a regulatory standpoint, particularly in the EU, where biometric identification systems face strict constraints under both the GDPR and the AI Act.

The visual analysis is combined with text-based detection, which has been running for some time. That layer analyzes entire profiles for contextual signals - birthday celebration posts, mentions of school grades, references to age in bios, captions, and comments. According to the announcement, Meta is continuing to expand this contextual analysis to additional parts of its apps, including Instagram Reels, Instagram Live, and Facebook Groups. When either system flags an account as likely underage, the account is deactivated. The account holder must then provide proof of age to prevent deletion.
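
The enforcement flow described above can be sketched as a small state machine. This is an illustrative sketch only: Meta has not published how its models report results, so the boolean signals, the state names, and the assumption that accepted proof of age restores the account are all hypothetical.

```python
from dataclasses import dataclass
from enum import Enum


class AccountState(Enum):
    ACTIVE = "active"
    DEACTIVATED = "deactivated"  # awaiting proof of age
    DELETED = "deleted"


@dataclass
class AgeSignals:
    # Hypothetical flags; the announcement does not describe model outputs.
    text_flags_under_13: bool
    visual_flags_under_13: bool


def enforce_under_13(signals: AgeSignals) -> AccountState:
    """Deactivate the account when either detection layer flags it."""
    if signals.text_flags_under_13 or signals.visual_flags_under_13:
        return AccountState.DEACTIVATED
    return AccountState.ACTIVE


def resolve_deactivation(state: AccountState, proof_accepted: bool) -> AccountState:
    """Assumption: accepted proof of age restores the account; otherwise it is deleted."""
    if state is not AccountState.DEACTIVATED:
        return state
    return AccountState.ACTIVE if proof_accepted else AccountState.DELETED
```

The key point the sketch captures is the "either" condition: a flag from the text layer or the visual layer alone is enough to trigger deactivation.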

Not all features are available everywhere. According to Meta's announcement, certain advanced capabilities - specifically the visual analysis - are currently available only in select countries as the company works toward a broader rollout.

Expanding the age prediction rollout

The second strand of today's announcement concerns a separate technology: age prediction, which attempts to identify accounts that list an adult birthday but are likely operated by a teenager. This is distinct from the under-13 enforcement work. It targets the 13-17 age range, where a teen might have entered a false birthdate to avoid Teen Account restrictions.
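
The difference between the two systems is easiest to see as a routing rule. The sketch below is a simplification under stated assumptions: the function name, the labels, and the single boolean standing in for the age prediction model's output are hypothetical, not Meta's implementation.

```python
def assign_experience(stated_age: int, predicted_teen: bool) -> str:
    """Route an account based on its stated age and a hypothetical model prediction."""
    if stated_age < 13:
        # Under-13 accounts fall under the separate enforcement track.
        return "not_permitted"
    if stated_age < 18:
        # Teens who gave their real age are already in Teen Accounts.
        return "teen_account"
    if predicted_teen:
        # Adult birthdate on file, but the model suspects a teenager.
        return "teen_account"
    return "adult_experience"
```

Only the third branch is new ground for age prediction: it overrides a stated adult birthdate when the model suspects the user is a teenager.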

Meta first deployed this technology in the United States, Australia, Canada, and the United Kingdom, where it placed millions of accounts into Teen Account settings. Today's announcement extends the technology to all 27 EU member states and Brazil on Instagram, and to Facebook in the US for the first time.

The company said UK and EU Facebook users will follow in June. Global expansion on Instagram is planned for the remainder of 2026.

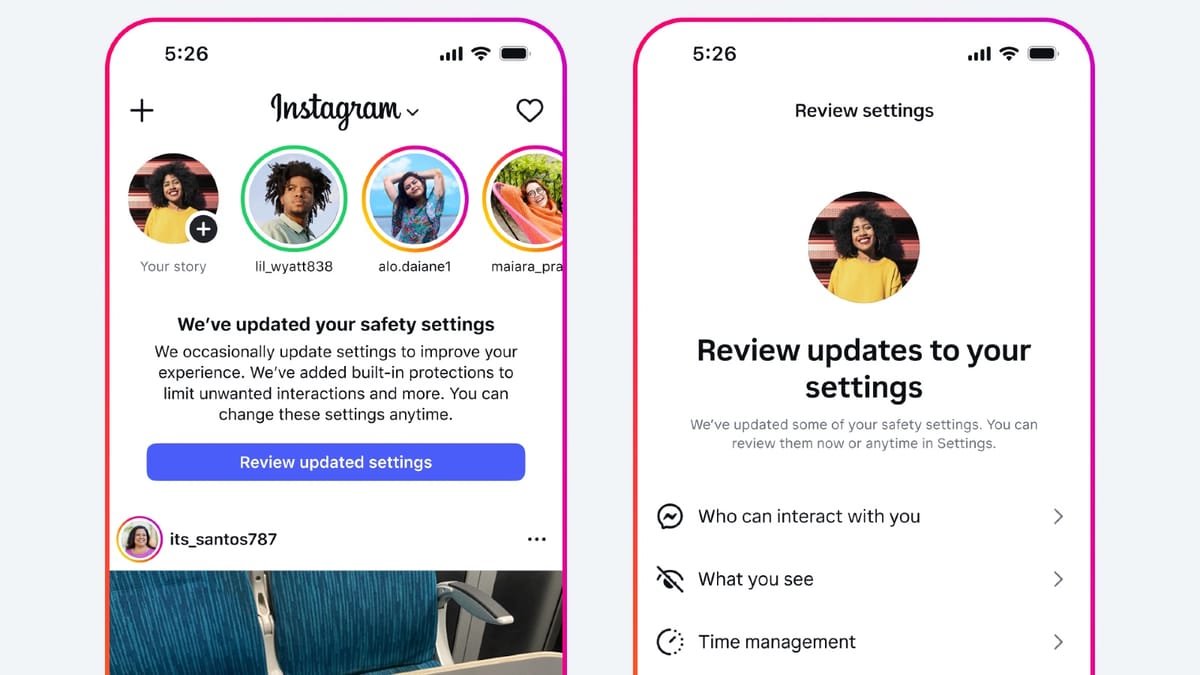

Teen Accounts - the destination for any account identified by this system - apply a defined set of restrictions. According to Meta's newsroom, these include limits on who can contact teens, content filtering calibrated to age-appropriate standards, and default settings that require parental permission to loosen. Instagram launched Teen Accounts in September 2024, initially in the US, UK, Canada, and Australia. The system has grown to at least 54 million active Teen Accounts globally, according to figures Meta published in April 2025. In October 2025, Instagram aligned those accounts' content filters with PG-13 movie rating standards, rolling the change out first in the same four initial markets.

Earlier expansions brought the Teen Accounts framework to India. Meta launched Instagram Teen Accounts in India on Safer Internet Day in February 2025. In April 2026, Instagram further extended the updated 13+ content rating and a new Limited Content parental control option to Indian users.

Reporting flows and AI-assisted moderation

Today's announcement also described changes to how user reports of underage accounts are handled. Meta said it is simplifying the reporting process, both within the app and on its Help Center, to make it easier for community members to flag accounts they believe belong to minors.

On the back end, Meta said it is supplementing human review teams with AI models that apply consistent evaluation criteria to every report. According to the announcement, "in our testing, this AI-driven review delivers higher accuracy and faster resolutions than human review alone." The company framed this as improving both speed and reliability for resolving underage account reports. In practice, it likely means that a significant share of these reports will be resolved without a human reviewer examining the individual case.

The company is also working to strengthen what it calls "circumvention measures" - systems that detect when a user Meta has previously identified as likely underage tries to create a new account to bypass the restrictions.

Parental notifications

The third component of today's announcement focuses on parents. According to Meta, this month the company will begin sending notifications to parents in the US on both Facebook and Instagram. The notifications will include guidance on how to check and confirm their teens' ages, along with tips for conversations about honesty when registering for apps.

Parents outside the US can already access similar tools through Meta's Family Center. Today's announcement does not specify a timeline for extending the notification program to other markets.

Joel Kaplan, Meta's Chief Global Affairs Officer, described the challenge in a LinkedIn post accompanying the announcement: "knowing someone's age on the internet remains one of the hardest challenges in our industry." He noted that teens in the US use around 40 apps per week, making per-app enforcement difficult for parents to track.

Kaplan also reiterated Meta's position on what it believes would be a more effective structural solution. According to the LinkedIn post, Meta believes it would be better if app stores themselves sought parental approval before a child downloads an app, a change that would consolidate oversight in one place rather than requiring each platform to enforce its own age rules independently. According to Meta, 88% of US parents support that approach.

The app store argument and regulatory friction

Meta's call for app store-level age verification is not new. It has been central to the company's public policy arguments in multiple jurisdictions. But the position has attracted criticism, including from within the technology industry.

In June 2025, Google's Global Director of Privacy, Safety and Security Policy, Kate Charlet, published a detailed critique of Meta's proposed app store verification model, arguing that it would require sharing granular age data with millions of developers who do not need it. Charlet also noted that the approach would be ineffective for desktop users and for pre-installed apps, which do not go through app store download flows.

That disagreement has played out against a backdrop of expanding platform-level age checks elsewhere. Google began rolling out machine learning-based age detection for ad protections in the US in July 2025, applying restrictions on personalized advertising for users identified as likely minors. Google Search started rolling out age verification notifications to users in August 2025. X introduced its own age verification system around the same period, using third-party processors including Au10tix, Persona, and Stripe to handle ID checks.

The regulatory environment keeps shifting under all of these platforms. The UK Online Safety Act's child safety duties came into force on July 25, 2025. The EU's DSA guidelines on the protection of minors added a compliance benchmark in 2025. And in the US, Google and Meta filed separate lawsuits in November 2025 challenging California Senate Bill 976, which would restrict personalized feeds for minors.

The DSA preliminary findings against Meta, announced on April 29, 2026, are a direct escalation of that regulatory pressure. The Commission's position, as PPC Land reported, is that Meta's terms set the threshold at 13, but the company's systems do not meaningfully enforce that threshold. Today's announcement addresses exactly that gap - though whether it satisfies the Commission's requirements will not be determined until Meta responds to the preliminary findings and the process works through to a final decision.

What this means for the advertising ecosystem

For marketing professionals, the expansion of Teen Account coverage to the EU and Brazil on Instagram and to US Facebook users carries practical implications. Advertisers have faced growing limits on reaching teen audiences since Meta tightened Teen Account restrictions across its platforms in April 2025. As more accounts are automatically moved into Teen Account settings through the age prediction system, the portion of each platform's inventory carrying teen-specific restrictions grows.

That shift is relevant to targeting and brand safety decisions. The expansion of age-gated inventory in the EU and Brazil is particularly significant given the size of those markets and the regulatory scrutiny surrounding them. Advertisers running campaigns on Meta platforms in these regions will need to account for audiences that now sit behind automatic protections that constrain content delivery and targeting parameters.

PPC Land's coverage shows a consistent trajectory across platforms: AI-driven age detection is becoming the primary mechanism for sorting users into age-appropriate inventory segments, with human oversight increasingly limited to edge cases and appeals. Meta's announcement today is a step in that direction, and given the regulatory pressure the company faces in the EU, it is unlikely to be the last.

Timeline

- September 2024 - Instagram launches Teen Accounts with built-in protections in the US, UK, Canada, and Australia

- February 11, 2025 - Instagram expands Teen Accounts to India on Safer Internet Day with sleep mode and time-tracking features

- April 8, 2025 - Meta tightens Teen Account restrictions and announces expansion to Facebook and Messenger; at least 54 million Teen Accounts active globally

- June 13, 2025 - Google's privacy chief publishes critique of Meta's proposed app store age verification system, citing data-sharing risks for children

- July 30, 2025 - Google begins machine learning age detection for ad protections in the US

- August 3, 2025 - X implements age verification system using third-party processors Au10tix, Persona, and Stripe

- August 12, 2025 - German court confirms Meta AI training includes children's data despite protective measures

- August 15, 2025 - Google Search begins age verification notifications for users, following earlier YouTube implementation

- October 14, 2025 - Instagram aligns Teen Account content filtering with PG-13 rating standards in the US, UK, Australia, and Canada

- October 24, 2025 - EU finds TikTok and Meta in breach of DSA transparency rules

- November 13, 2025 - Google and Meta sue California over social media age restrictions law

- April 9, 2026 - Instagram brings 13+ content ratings and Limited Content setting to India

- April 29, 2026 - European Commission issues preliminary DSA breach findings against Meta for failing to keep children under 13 off Instagram and Facebook

- May 5, 2026 - Meta announces AI-powered visual analysis for age detection, expands age prediction technology to the EU, Brazil, and US Facebook, and introduces parent notification program

Summary

Who - Meta, the parent company of Instagram and Facebook, announced the measures. Joel Kaplan, Meta's Chief Global Affairs Officer, described the rationale in an accompanying LinkedIn post. The changes affect teen users on Instagram across 27 EU member states and Brazil, and on Facebook in the United States.

What - Meta introduced three initiatives: an AI visual analysis system that scans photos and videos for physical cues like height and bone structure to detect likely underage accounts; an expansion of its age prediction technology - which identifies teens who registered with adult birthdates - to new geographic markets; and a parent notification program providing US parents with guidance on confirming their teens' ages.

When - The announcement was made on May 5, 2026. The age prediction technology expansion began today. The parent notification program will begin rolling out in the US this month. UK and EU Facebook expansion is scheduled for June 2026. Global Instagram expansion is planned for the remainder of 2026.

Where - The age prediction technology now covers Instagram in all 27 EU member states and Brazil, and Facebook in the United States. The parent notification program applies to US Facebook and Instagram users. Visual analysis features are currently limited to select countries.

Why - Meta faces compounding regulatory pressure, including preliminary DSA breach findings from the European Commission announced on April 29, 2026, and ongoing legislative scrutiny in multiple markets. The company has enrolled hundreds of millions of teens in Teen Account protections since September 2024, but the Commission's preliminary findings say its existing measures have not been sufficient to prevent under-13 access. The expansion of AI-driven age detection is Meta's primary response to that enforcement gap.