DoubleVerify's Fraud Lab this month published a detailed investigation into AutoBait, a coordinated network of more than 200 Made for Advertising domains that uses artificial intelligence to generate clickbait content at industrial scale - while leaving its own operational code fully visible inside the sites' JavaScript. The report, published on March 4, 2026, by DV Fraud Lab researchers Arik Nagornov, Merav Geles, and Lia Bader, offers what the company describes as a rare look inside the technical infrastructure of an AI content operation built not to inform readers, but to extract advertising revenue from unprotected brands.

The findings arrive at a moment when the marketing industry is paying close attention to the quality of programmatic inventory. MFA sites and AI-generated content have been among the most discussed threats in digital advertising for the past two years, with platforms, verification companies, and publishers all staking out positions on how to address the problem. AutoBait, according to DoubleVerify, represents a qualitative step forward in the sophistication - and the audacity - of these operations.

What AutoBait is

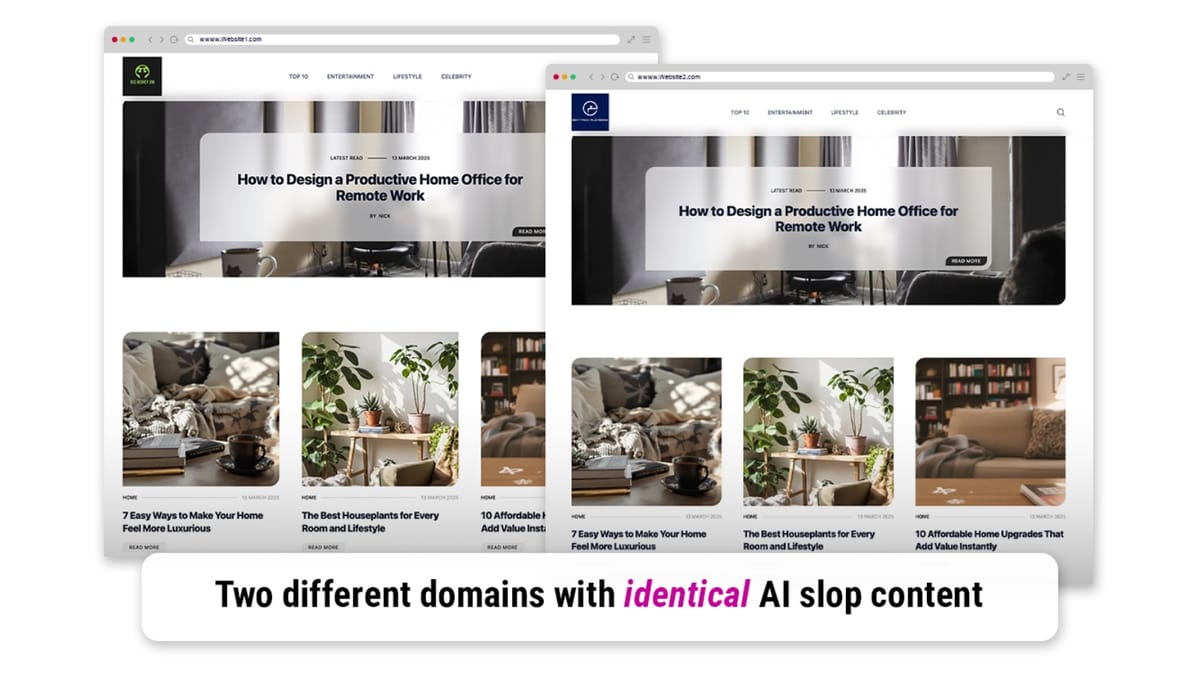

Viewed individually, each domain in the AutoBait network resembles an independent lifestyle blog. The sites carry AI-generated articles and images and share a consistent design optimized for ad delivery rather than for readers. According to DoubleVerify, the network has already generated tens of millions of impressions that advertisers paid for without knowing the nature of the inventory.

What makes AutoBait unusual is not simply its scale. It is that the operators, in their rush to expand across hundreds of domains simultaneously, left the system's content-generation code exposed in the sites' JavaScript. That code - including the large language model prompts used to produce articles and images - was visible to anyone who inspected the page source. DoubleVerify's researchers were able to read it in full, providing a technical window into how these operations function that is rarely available to outside observers.

The content generation system

The AutoBait system operates around a templated framework with three configurable variables: [TITLE], [SUMMARY_LENGTH], and [SLIDES_COUNT]. The text prompt instructs the model to write listicle-style articles in a format calibrated to maximize emotional engagement and extend time on page, thereby multiplying the number of ads served per visit.

According to the exposed code, articles must begin with an attention-grabbing summary in a "friendly yet provocative tone," followed by a fixed number of slides, each revealing one "distinct, concrete sign, warning, or truth." The prompt instructs the model to prioritize slides that are "the most sensational or shocking points - anything that stops someone mid-scroll." Headlines are required to be "ultra-literal," running between four and seven words, and must describe conditions using plain, immediately visual language. Examples provided in the prompt itself include phrases like "A mole with a funny shape" and "A sore that won't heal."

Emotional manipulation is built directly into the specification. Body text, according to the exposed instructions, must "inject real emotion (fear, anger, shock, relief) into every paragraph," must "ask a direct question, hint at danger or relief, and convey urgency," and must be "highly specific: name colors, objects, or settings exactly." Each paragraph, the prompt states, "should feel like one sentence that lands like a gut punch, plus one supporting detail." There is no mention anywhere in the prompt of factual accuracy, sourcing, or editorial review. None is required. The system is designed entirely around psychological effect, not informational value.
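To make the three-variable template described at the start of this section concrete, here is a minimal Python sketch of placeholder substitution. Only the placeholder names [TITLE], [SUMMARY_LENGTH], and [SLIDES_COUNT] come from the report. The template wording, the function name, and the example values are hypothetical, and nothing here is presented as the operators' actual code.

```python
import re

# Hypothetical template text; only the three placeholder names come from the report.
TEMPLATE = (
    "Write a listicle titled '[TITLE]'. "
    "Open with a summary of about [SUMMARY_LENGTH] words, "
    "then produce [SLIDES_COUNT] slides."
)

# Matches bracketed, upper-case placeholder names such as [TITLE].
PLACEHOLDER = re.compile(r"\[([A-Z_]+)\]")


def fill_template(template: str, values: dict) -> str:
    """Replace each [NAME] placeholder with its configured value."""

    def substitute(match: re.Match) -> str:
        name = match.group(1)
        if name not in values:
            raise KeyError(f"no value supplied for placeholder [{name}]")
        return str(values[name])

    return PLACEHOLDER.sub(substitute, template)


# Example values are invented for illustration.
prompt = fill_template(
    TEMPLATE,
    {"TITLE": "Example headline", "SUMMARY_LENGTH": 60, "SLIDES_COUNT": 12},
)
print(prompt)
```

The point of the sketch is how little configuration such a framework needs: three values per article are enough to turn one fixed prompt into an unlimited number of distinct requests.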

Image generation and intentional deception

The image generation component is equally revealing. AutoBait uses the Flux 1.1 Pro text-to-image model, identified in the exposed code as black-forest-labs/flux-1.1-pro. According to DoubleVerify, this is a top-tier commercial image model. The prompts instruct it to generate "ultra-realistic, landscape-oriented" photos that look like they were "casually taken on a smartphone by a real person - unfiltered, unstaged, and emotionally authentic."

The instructions explicitly state that images should "NOT look artificial, stylized, or generated by AI." That instruction, embedded in the operational code of an AI-generated content farm, is a direct acknowledgment that the entire system is built on deliberate misrepresentation. The goal is not to illustrate content. It is to simulate authenticity in a way that prevents readers - and programmatic buyers - from recognizing the content as machine-made.

Image prompts are derived from the article headlines and body text, and are designed to reinforce the emotional framing of the written content. The system specifies that images depicting medical symptoms must show "tight, dramatic close-ups" that fill at least 60 percent of the horizontal frame. Where a person is depicted, the system defaults to women over 30, shown in "candid, unstaged emotions" including "fatigue, frustration, sadness," with "messy hair and unfiltered skin texture." No smiles, no eye contact, no posed expressions. The specificity is total. Everything is designed to simulate the appearance of real, spontaneous documentation.
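Because the configuration sat in client-side JavaScript, the strings quoted in the report are the kind of markers a reviewer could search for in a page's script text. The following Python sketch illustrates that idea under stated assumptions: the marker list uses only strings quoted above, while the function name and the sample snippet are hypothetical. It is not a description of DoubleVerify's detection method.

```python
import re

# Marker strings quoted in the report; the labels are illustrative.
MARKERS = {
    "placeholder": re.compile(r"\[(?:TITLE|SUMMARY_LENGTH|SLIDES_COUNT)\]"),
    "image_model": re.compile(r"black-forest-labs/flux-1\.1-pro"),
}


def find_markers(js_source: str) -> dict:
    """Return the distinct marker strings found in a block of JavaScript text."""
    hits = {}
    for label, pattern in MARKERS.items():
        found = sorted(set(pattern.findall(js_source)))
        if found:
            hits[label] = found
    return hits


# Hypothetical snippet standing in for a page's inline script.
sample = (
    'const cfg = {model: "black-forest-labs/flux-1.1-pro", '
    'prompt: "Title: [TITLE], slides: [SLIDES_COUNT]"};'
)
print(find_markers(sample))
```

A string match like this only flags pages for human review. Legitimate sites can reference the same image model, so a hit on its own would not establish that a domain belongs to the network.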

The economics of automated ad fraud

The cost structure of AutoBait is what makes it a serious financial threat to advertisers. According to DoubleVerify's analysis of the exposed code, the operators pay approximately 4 cents per slide to generate content. Some articles in the network run to 56 slides. Pages are engineered for maximum ad density - each slide can carry up to eight ad banners, and those banners refresh every few seconds, creating multiple distinct ad-serving opportunities within a single page view.

Working through the arithmetic: at roughly 4 cents per slide, a 56-slide article costs about $2.24 to produce, or at most $2.25 in round figures. That same article can generate hundreds of individual ad impressions. Once a fraction of those impressions are filled at even modest CPMs, the production cost is recovered. Everything beyond that is margin. A network of 200 domains, each publishing dozens of articles per day, can generate more than a hundred thousand pages per month and millions of distinct ad-serving opportunities. The financial return on investment, at these production costs, is substantial - provided enough unprotected ad spend reaches the network.
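The arithmetic can be sketched in a few lines of Python. The per-slide cost, the slide count, and the banner count are the figures cited above. The CPM and the number of ad loads per banner are assumptions chosen purely for illustration, since the report does not state them. Amounts are kept in whole cents to avoid floating-point rounding.

```python
# Figures cited in the report.
COST_PER_SLIDE_CENTS = 4      # approximately 4 cents per slide
MAX_SLIDES = 56               # longest article length cited
MAX_BANNERS_PER_SLIDE = 8     # upper bound on banners per slide

# Assumptions for illustration only; neither value appears in the report.
ASSUMED_CPM_CENTS = 100       # $1.00 per 1,000 filled impressions
ASSUMED_LOADS_PER_BANNER = 1  # counts each banner once, ignoring refreshes


def production_cost_cents(slides: int) -> int:
    """Cost of generating one article, in cents."""
    return slides * COST_PER_SLIDE_CENTS


def breakeven_impressions(cost_cents: int, cpm_cents: int) -> int:
    """Filled impressions needed to recover the cost (integer ceiling division)."""
    return -((-cost_cents * 1000) // cpm_cents)


def max_opportunities(slides: int, banners: int, loads_per_banner: int) -> int:
    """Upper bound on ad-serving opportunities in one complete read-through."""
    return slides * banners * loads_per_banner


cost = production_cost_cents(MAX_SLIDES)
print(f"production cost: ${cost / 100:.2f}")
print("break-even impressions:", breakeven_impressions(cost, ASSUMED_CPM_CENTS))
print(
    "opportunities per full read:",
    max_opportunities(MAX_SLIDES, MAX_BANNERS_PER_SLIDE, ASSUMED_LOADS_PER_BANNER),
)
```

Under these assumed values, a 56-slide article costs $2.24, needs 2,240 filled impressions to break even, and offers up to 448 ad opportunities per complete read before any refresh is counted. The break-even point is therefore a handful of full visits, and banner refreshes or a higher CPM would lower it further.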

DoubleVerify's 2024 Global Insights Report had already documented a 19 percent year-over-year increase in MFA impression volume, based on analysis of more than one trillion impressions from over 2,000 brands globally. AutoBait represents the logical continuation of that trajectory: lower production costs, higher volume, less human involvement, and better tools for simulating legitimacy.

The trivia and personality quiz extensions

The exposed code goes beyond lifestyle clickbait. The AutoBait system also includes templates for trivia quizzes and personality quizzes, both using the same structural approach. The trivia template instructs the model to generate 100 questions, each with three multiple-choice answers, a short heading, and an explanation. The personality template generates 100 questions with binary answer options and two outcome "personality types." Both formats include image generation instructions following the same ultra-realistic, faux-candid aesthetic as the article content.

These formats serve a specific engagement function. Quizzes require users to interact with each slide individually, extending session length and increasing the number of ad impressions per visit beyond what a standard article can achieve. The model is instructed to keep language "casual, fun, and conversational" to maximize completion rates. The result, according to DoubleVerify, is a content system capable of producing multiple content formats at scale, each optimized for a different engagement pattern - and each capable of sustaining a long sequence of ad impressions.
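The trivia format described above maps naturally onto a small data structure. The Python sketch below models it and checks the stated constraints of 100 questions with three answers each. The class name, the field names, and the assumption that each question has exactly one correct answer are hypothetical and are not taken from the exposed code.

```python
from dataclasses import dataclass


@dataclass
class TriviaQuestion:
    heading: str        # short heading, per the report
    question: str
    answers: list       # three multiple-choice options, per the report
    correct_index: int  # assumption: one correct answer per question
    explanation: str


def validate_trivia(questions: list, expected_count: int = 100) -> list:
    """Return a list of human-readable problems; an empty list means valid."""
    problems = []
    if len(questions) != expected_count:
        problems.append(f"expected {expected_count} questions, got {len(questions)}")
    for i, q in enumerate(questions):
        if len(q.answers) != 3:
            problems.append(f"question {i}: expected 3 answers, got {len(q.answers)}")
        elif not 0 <= q.correct_index < 3:
            problems.append(f"question {i}: correct_index out of range")
    return problems


# Placeholder content, invented for illustration.
quiz = [
    TriviaQuestion(f"Heading {n}", f"Question {n}?", ["A", "B", "C"], 0, "Because.")
    for n in range(100)
]
print(validate_trivia(quiz))
```

The personality format would differ only in using two answer options per question and two outcome types instead of a correct answer.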

The broader context for advertisers

The AutoBait investigation lands inside a well-documented pattern. DoubleVerify launched tiered MFA classification categories in early 2024 in response to exactly this type of inventory proliferation, categorizing sites by ad density, traffic sources, and content creation practices across High, Medium, and Low tiers. That same year, the company released Global Insights data showing MFA attention deficits of 7 percent for display and 28 percent for video compared to quality inventory.

Integral Ad Science, in a July 2025 analysis, identified AI-generated slop sites as a critical threat to programmatic effectiveness, noting that quality inventory delivers 91 percent higher conversion rates than ad clutter environments. A December 2025 IAS survey of UK media professionals found that 56 percent cited AI-generated content adjacency as a top challenge for 2026, with ad fraud and MFA sites ranking second at 50 percent among advertiser and agency concerns.

The scale of undetected AI slop is significant. According to DoubleVerify, in the first few weeks of 2026 alone, the DV Fraud Lab identified thousands of AI slop websites across multiple languages. EMarketer has projected that as much as 90 percent of web content could be AI-generated by 2026, creating categorization and detection challenges that existing systems were not designed to handle at this volume.

DoubleVerify has previously exposed similar network-scale operations, including Synthetic Echo - a network of over 200 AI-generated websites monetizing across multiple supply-side platforms using deceptive domain names, such as espn24.co.uk and nbcsportz.com, designed to mimic legitimate news organizations. AutoBait follows a similar structural logic but differs in one critical respect: it is the first such operation where the AI generation code itself has been publicly exposed, allowing verification researchers to document the system from the inside.

What DV says its product does

DoubleVerify states that its existing clients are already protected from AutoBait and similar networks through AI SlopStopper, a genAI website avoidance and detection solution. According to the company, AI SlopStopper is already operating across web environments and is scheduled to expand to social platforms during 2026. The product is positioned within DoubleVerify's broader DV Media AdVantage Platform alongside fraud detection, viewability, brand suitability, and attention measurement tools.

The timing of the AutoBait report aligns with a period of significant pressure on DoubleVerify as a business. The company faced a class action lawsuit filed in May 2025 alleging securities violations related to its representations about bot detection effectiveness, following a 36 percent single-day stock price decline after disappointing fourth quarter results. A separate shareholder derivative lawsuit followed in December 2025. The AutoBait investigation - and the accompanying promotion of the AI SlopStopper product - is a significant part of DoubleVerify's ongoing effort to demonstrate the continued relevance and effectiveness of third-party verification in a market where advertiser confidence has been shaken.

The publisher dimension

AutoBait's financial model has a direct consequence for legitimate publishers. According to DoubleVerify, every ad dollar that flows to an AutoBait domain is a dollar diverted from a publisher that invests in human-produced content and editorial infrastructure. Given the cost economics of automated content generation - where a $2.25 article can generate hundreds of ad impressions - quality publishers whose articles cost many times that amount cannot compete purely on price in an open programmatic auction. The problem is structural. Industry analysis has put the share of programmatic budgets reaching valid, viewable, measurable impressions at just 36 percent, suggesting that the majority of programmatic spend is already subject to the quality questions that AutoBait exploits.

The Association of National Advertisers has found that approximately 15 percent of programmatic ad spend reaches MFA sites, a figure that predates the acceleration in AI content generation tools visible throughout 2025 and early 2026. That figure could be higher now, given the documented drop in production costs and the ease of operating a large network with a small team.

Timeline

- March 2024: DoubleVerify launches enhanced tiered MFA classification system covering High, Medium, and Low categories.

- June 14, 2024: DoubleVerify releases Global Insights Report showing 19% year-over-year increase in MFA impression volume based on one trillion impressions.

- October 2, 2024: Taboola announces partnership with Jounce Media to exclude MFA publishers from its Taboola Select network.

- January 2025: DoubleVerify publicizes initial findings about the Synthetic Echo network of over 200 AI-generated websites.

- May 22, 2025: DoubleVerify issues comprehensive alert documenting over 100 cases of ads.txt manipulation and exposing the Synthetic Echo operation.

- May 22, 2025: Class action lawsuit filed against DoubleVerify alleging securities violations related to bot detection representations.

- July 17, 2025: Integral Ad Science identifies AI-generated slop sites as a critical programmatic threat, with quality inventory delivering 91% higher conversion rates.

- July 30, 2025: DoubleVerify publishes inaugural DEEP DIVES report examining AI-generated recipe sites targeting advertiser budgets.

- September 25, 2025: DoubleVerify discloses surge in AI-powered fraudulent mobile applications executing ad fraud schemes.

- December 8, 2025: IAS survey of 290 U.S. media professionals finds 53% cite AI content adjacency as a top 2026 challenge.

- December 8, 2025: IAS survey of 220 UK media professionals finds 56% flag AI-generated content as a top challenge; MFA sites rank second at 50%.

- December 9, 2025: Shareholder derivative lawsuit filed against DoubleVerify executives.

- January 3, 2026: PPC Land publishes comprehensive explainer on AI slop tracking industry milestones from 2019 to present.

- March 4, 2026: DoubleVerify Fraud Lab publishes AutoBait investigation, exposing the AI content generation code of a 200+ domain MFA network.

Summary

Who: DoubleVerify's Fraud Lab, specifically researchers Arik Nagornov, Merav Geles, and Lia Bader, uncovered and reported on the AutoBait network. The network's operators - unnamed in the report - run a coordinated scheme targeting programmatic advertisers. Unprotected advertisers across the open web are the affected parties, as is any legitimate publisher competing for the same ad budgets.

What: AutoBait is a network of more than 200 Made for Advertising domains that uses large language models and an AI image generator to produce high-volume, emotionally manipulative clickbait content at approximately $2.25 or less per article. Each article can contain up to 56 slides, each carrying up to eight ad banners that refresh every few seconds. The network's AI prompt system was inadvertently left exposed in its own JavaScript code, allowing DoubleVerify researchers to document the full content generation pipeline.

When: The investigation was published on March 4, 2026. The AutoBait network has been generating tens of millions of impressions, with DoubleVerify noting that thousands of similar AI slop sites were identified in just the first few weeks of 2026.

Where: The AutoBait domains operate across the open programmatic web, appearing to programmatic buyers as independent lifestyle blogs. The underlying content generation infrastructure uses third-party LLM and image generation APIs, with the operator's configuration code stored in client-side JavaScript on the live sites.

Why: The economics of AI content generation make MFA operations highly profitable at minimal cost. Production costs of under $2.25 per article, combined with hundreds of ad-serving opportunities per page, allow a small team to operate hundreds of sites simultaneously and generate significant revenue from even a small share of filled impressions. The financial model depends entirely on advertisers lacking tools to distinguish automated low-quality content from human-produced editorial content in real time.