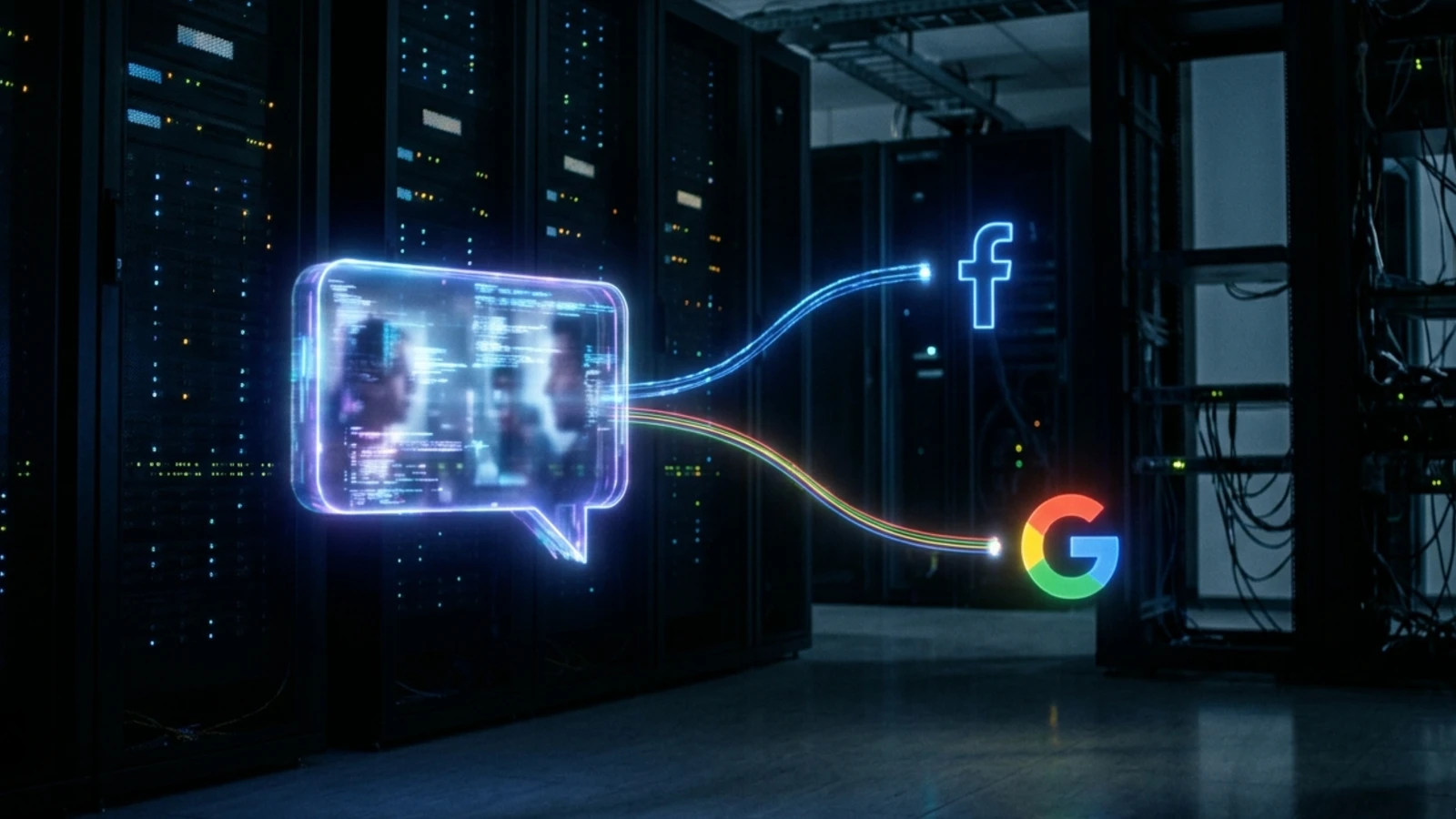

A class action complaint filed yesterday in the United States District Court for the Southern District of California alleges that OpenAI Global, LLC embedded third-party tracking code inside ChatGPT that transmitted users' private queries and personal identifiers to Meta Platforms, Inc. and Google, LLC in real time - without users' knowledge or consent. The case, Couture v. OpenAI Global, LLC, Case No. 3:26-cv-03000-H-GC, was filed on May 13, 2026, by law firm Bursor & Fisher, P.A. on behalf of plaintiff Amargo Couture, a San Diego, California resident, and a proposed class of all United States residents who have accessed ChatGPT.com.

The complaint runs 36 pages and asserts four counts: violation of the Electronic Communications Privacy Act (ECPA), violation of the California Invasion of Privacy Act (CIPA) under section 631, violation of CIPA under section 632, and invasion of privacy under the California Constitution and common law. Under the ECPA, the complaint seeks statutory damages of the greater of $10,000 or $100 per day of violation. Under CIPA, the plaintiff seeks the greater of $5,000 per violation or three times the amount of actual damages.
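As a rough illustration of how those two damages formulas behave, the Python sketch below applies a "greater of" rule to hypothetical inputs. The function names and the sample figures are assumptions made for illustration only; how a court would count violations or days is a legal question the sketch does not answer.

```python
def ecpa_statutory(days_of_violation: int) -> int:
    """Greater of $10,000 or $100 for each day of violation."""
    return max(10_000, 100 * days_of_violation)


def cipa_statutory(violations: int, actual_damages: float) -> float:
    """Greater of $5,000 per violation or three times actual damages."""
    return max(5_000 * violations, 3 * actual_damages)


# Hypothetical inputs, not figures from the complaint.
print(ecpa_statutory(30))          # the $10,000 floor applies
print(ecpa_statutory(365))         # the per-day amount exceeds the floor
print(cipa_statutory(1, 500.0))    # the $5,000 figure applies
print(cipa_statutory(1, 2_000.0))  # treble actual damages applies
```

The per-day amount only overtakes the $10,000 floor after more than 100 days of violation, which is why the floor is the figure usually quoted.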

What the complaint alleges

According to the complaint, ChatGPT.com hosted two categories of third-party tracking technology during the period covered by the filing: Meta's Facebook Pixel and Google Analytics. Both tools, the filing argues, operated as real-time interception mechanisms, capturing the content of users' queries and forwarding them alongside personally identifiable information to Meta and Google servers - all while users believed they were communicating privately with OpenAI's chatbot.

The plaintiff used the website throughout 2025 and 2026 and entered queries relating to sensitive personal topics including health, finances, and other private matters, according to the filing. She had an active Facebook account and a Google account, and used the same browser for both social media activity and ChatGPT sessions. Because she remained logged into Facebook and Google while using ChatGPT, those sessions generated cookies that the tracking tools could use to link her queries to her existing profiles on both platforms.

The mechanics described in the complaint are technical and specific. When a user types a query into ChatGPT - say, "who won the Superbowl in 2005?" - that query becomes the title of the browser tab. According to the filing, that title value is captured by the Facebook Pixel and transmitted immediately to Meta's servers in a GET request to www.facebook.com/tr/. The complaint reproduces a network capture showing that the transmission, timestamped Tuesday April 28 at 18:18:03 EDT 2026, included the c_user cookie identifying the logged-in Facebook account, the fr cookie containing an encrypted Facebook ID and browser identifier, and a field labelled pmd[title] carrying the value "Super Bowl 2005 Winner" - the generated title of the ChatGPT conversation.
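To make the alleged capture concrete, the Python sketch below parses a request of the shape described in the filing. Only the endpoint www.facebook.com/tr/, the pmd[title] field, its quoted value, and the c_user and fr cookie names come from the complaint as reported here. The cookie values and the extract_disclosed_fields helper are hypothetical placeholders, not data from the network capture.

```python
from http.cookies import SimpleCookie
from urllib.parse import parse_qs, urlencode, urlsplit

# Hypothetical request modelled on the complaint's description.
# The title is the value quoted in the filing; everything else is a placeholder.
query = urlencode({"pmd[title]": "Super Bowl 2005 Winner"})
request_url = f"https://www.facebook.com/tr/?{query}"
cookie_header = "c_user=100000000000001; fr=PLACEHOLDER_ENCRYPTED_VALUE"


def extract_disclosed_fields(url: str, cookies: str) -> dict:
    """Return the page title and identifying cookies carried by one request."""
    parts = urlsplit(url)
    params = parse_qs(parts.query)
    jar = SimpleCookie()
    jar.load(cookies)
    return {
        "host": parts.netloc,
        "title": params.get("pmd[title]", [None])[0],
        "c_user": jar["c_user"].value if "c_user" in jar else None,
        "fr": jar["fr"].value if "fr" in jar else None,
    }


fields = extract_disclosed_fields(request_url, cookie_header)
print(fields)
```

The point of the sketch is that the title and the account identifier arrive in the same request, so the recipient does not need any further step to associate one with the other.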

How the Facebook Pixel transmission works

According to the complaint, Meta's Facebook Pixel is a JavaScript snippet that OpenAI chose to embed in ChatGPT.com. When any action is taken on the website, the user's browser sends a standard GET request to OpenAI's server. Meta's embedded code then issues a separate, simultaneous instruction back to the browser - without alerting the user - directing it to duplicate the communication and send it to Meta's servers, along with additional data identifying both the activity and the individual.

The three cookies at the centre of the Meta interception allegations are technically distinct. The c_user cookie, which expires 90 days after the user checks "keep me logged in," contains an unencrypted Facebook ID (FID). The fr cookie, which also expires after 90 days unless the browser logs back into Facebook - at which point the timer resets - contains an encrypted Facebook ID along with a browser identifier. The _fbp cookie, which resets its 90-day window every time the user's browser revisits the same website, was transmitted as a first-party cookie set on ChatGPT.com's domain.
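The practical effect of a timer that resets can be sketched with simple date arithmetic. The Python example below compares a fixed 90-day lifetime with a rolling one that restarts on each qualifying event, as the filing describes for the fr and _fbp cookies. The visit dates and the rolling_expiry helper are hypothetical and chosen only for illustration.

```python
from datetime import datetime, timedelta

WINDOW = timedelta(days=90)  # lifetime reported in the complaint


def rolling_expiry(events: list[datetime]) -> datetime:
    """Expiry of a cookie whose 90-day timer restarts on every qualifying event."""
    if not events:
        raise ValueError("at least one event is needed to set the cookie")
    return max(events) + WINDOW


# Hypothetical visit dates, not taken from the filing.
visits = [datetime(2026, 1, 10), datetime(2026, 3, 1), datetime(2026, 4, 28)]

fixed = visits[0] + WINDOW        # timer never resets after the first visit
rolling = rolling_expiry(visits)  # timer restarts on each visit

print("fixed expiry:  ", fixed.date())
print("rolling expiry:", rolling.date())
```

As long as the gap between qualifying events stays under 90 days, a rolling cookie of this kind never lapses.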

A Facebook ID is not an opaque internal code. According to the filing, any ordinary person in possession of an FID can look up the corresponding Facebook profile and name. That property means the FID functions as a de facto identity link between a person's public Facebook presence and whatever query they typed into ChatGPT.

The complaint notes that Meta's own documentation describes the Facebook Pixel as allowing business customers to "make sure your ads are shown to the right people" and to "find people who have visited a specific page or taken a desired action on your website." According to the filing, Meta processes incoming Pixel data immediately, adds it to targeting datasets, and uses it to serve advertising both to the user who generated the query and, potentially, to other Facebook advertisers whose audiences overlap with the query topic.

Google Analytics and the hashed email problem

The Google Analytics interception alleged in the complaint follows a parallel structure but involves different identifiers. According to the filing, when a user creates a ChatGPT account or logs in using their email address, Google Analytics intercepts a hashed version of that email address, marked by the identifier "em" in the transmitted data. The network capture reproduced in the complaint shows a Google Ads conversion call to www.google.com/pagead/1p-conversion/16679965591/, timestamped Tuesday April 28 at 18:17:19 EDT 2026, carrying a Secure-3PSID cookie that contains the user's Google profile ID alongside multiple other Google Signal cookies.

The hashing argument is contested territory. The complaint invokes Federal Trade Commission guidance stating that "hashing is vastly overrated as an 'anonymization' technique" and that "the casual assumption that hashing is sufficient to anonymize data is risky at best, and usually wrong." According to the filing, Google, as the recipient of hashed email data and as the entity that generates the hash function, can decrypt incoming hashed addresses and match them to its existing advertising profiles.
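The FTC's point can be illustrated without any decryption at all. A cryptographic hash is not reversed in the usual sense, but a recipient that already holds a list of email addresses can hash each one and compare the results. The Python sketch below assumes SHA-256 over a trimmed, lower-cased address; the article does not state which algorithm or normalisation is involved, and the addresses are hypothetical.

```python
import hashlib


def hash_email(email: str) -> str:
    """SHA-256 of a trimmed, lower-cased address (algorithm and normalisation are assumptions)."""
    return hashlib.sha256(email.strip().lower().encode("utf-8")).hexdigest()


# Hypothetical accounts already known to the recipient.
known_accounts = ["alice@example.com", "bob@example.com"]
lookup = {hash_email(addr): addr for addr in known_accounts}

# A hashed value arriving from a website, as the "em" field is alleged to.
incoming = hash_email("  Alice@Example.com ")

# No decryption happens: the recipient simply looks the digest up.
match = lookup.get(incoming)
print(match)
```

Because the same input always produces the same digest, a hashed email works as a stable identifier for any party that can enumerate likely inputs.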

Google Analytics also collects device IDs derived from the _ga cookie's client ID property, User-IDs assigned during login (capable of storing up to 256 characters), and data sourced from Google Signals - a feature that associates analytics data with signed-in Google accounts for the purpose of cross-device remarketing. According to the filing, Google Signals enables Google Analytics to collect additional demographic and interest information from signed-in users and to export key events to Google Ads for use in automated bidding campaigns.

The complaint describes the result: when a user enters a query into ChatGPT, the page title reflecting that query - the same "dt: Super Bowl 2005 Winner" value visible in the network capture - is transmitted to Google via the Analytics code alongside the user's session and profile data.

Why this matters for advertising and marketing professionals

The case arrives at a moment of acute tension between ChatGPT's expanding role as a platform and its stated privacy commitments. OpenAI formally launched a self-serve Ads Manager for US businesses on May 5, 2026, just eight days before this complaint was filed. The company's updated US privacy policy, dated April 30, 2026, for the first time disclosed that OpenAI receives purchase data from advertisers and their partners, shares user information with marketing partners for third-party targeting, and uses personal data to promote its own products.

The complaint does not address that privacy policy update directly - the conduct alleged largely concerns events predating May 2026. But the timing places the lawsuit inside a broader debate about what data flows underpin ChatGPT's advertising infrastructure. StackAdapt joined the ChatGPT ad pilot on May 5, alongside Criteo, Kargo, Adobe, and Pacvue. OpenAI has described its ad model as contextual rather than behavioral, with stated privacy principles prohibiting the sale of user conversation data to advertisers. The complaint, if its factual allegations are accurate, describes a different set of data flows operating in the background - ones involving Meta and Google rather than OpenAI's own ad partners.

This lawsuit is not the first to raise these concerns. A structurally similar case, Doe v. Perplexity AI, Inc., Meta Platforms, Inc., and Google, LLC, was filed on March 31, 2026, in the Northern District of California. That complaint, which runs 140 pages, alleged that Perplexity embedded the Meta Pixel, the Conversions API, Google Ads, Google DoubleClick, Google Firebase, and Google Analytics inside its AI search engine, and used those tools to forward users' private conversations to Google and Meta without consent. The legal theories are nearly identical: CIPA sections 631 and 632, the ECPA, and California constitutional privacy violations.

The pattern extends further back. In August 2025, a federal jury found Meta violated CIPA by collecting sensitive health data from users of the Flo period-tracking app without consent, in a case that also centred on Meta's SDK embedded inside a third-party application. The San Francisco Superior Court entered a final $50 million judgment against Meta in March 2026 over Facebook user data shared with third-party developers. The Ace Hardware case, reported by PPC Land in April 2026, raised parallel Federal Wiretap Act and CIPA claims, alleging that Google Analytics and a third-party pixel kept firing even after users explicitly rejected all non-essential cookies.

The legal framework

CIPA, codified at California Penal Code section 630 et seq., was originally enacted to address telephone wiretapping. Courts have since extended its application to internet communications, email, and other digital channels. In Matera v. Google Inc. (2016), the Northern District of California held that CIPA "applies to new technologies and must be construed broadly to effectuate its remedial purpose of protecting privacy." The Ninth Circuit, in In re Facebook, Inc. Internet Tracking Litigation (2020), reversed dismissal of CIPA and common law privacy claims based on Facebook's collection of consumers' browsing history.

Section 631(a) of CIPA reaches anyone who, "by means of any machine, instrument, contrivance, or in any other manner," intentionally taps or makes an unauthorized connection with a wire, line, or cable, or willfully and without the consent of all parties reads or attempts to learn the contents of a message while it is in transit. Critically, it also covers anyone who "aids, agrees with, employs, or conspires with any person or persons to unlawfully do" any of those things. The complaint targets OpenAI under the aiding provision, characterising its installation of the Facebook Pixel and Google Analytics as assistance to Meta and Google's interception activities.

The ECPA count alleges violations of 18 U.S.C. section 2511, which prohibits intentional interception of the contents of any electronic communication. The complaint argues that the "party exception" in section 2511(2)(d) - which would normally shield a party to a communication from liability - does not apply here because OpenAI allegedly intercepted communications for the purpose of committing a tortious or criminal act, specifically violations of CIPA and invasion of privacy. The filing cites Brown v. Google, LLC (N.D. Cal. 2021) and R.C. v. Walgreen Co. (C.D. Cal. 2024) as precedents supporting that theory.

The class definition in the complaint covers all United States residents who accessed ChatGPT.com and had their personally identifiable information and communications disclosed to third-party entities. A California Subclass covers residents who used the site while in California. The proposed class is estimated to number in the millions, according to the filing.

A technical note on ChatGPT topic generation

The complaint's theory depends on the proposition that ChatGPT's conversation titles transmit the substance of users' queries. Cobun Zweifel-Keegan, Managing Director at IAPP in Washington, D.C., commented on LinkedIn on May 14, 2026, noting that "topics are essentially the same type of data captured when a web page title is transmitted through a pixel" and that "if this includes sensitive data, it could violate privacy expectations."

However, Zweifel-Keegan also highlighted a distinction that complicates the analysis. According to his post, "one major difference between a website title tag and a ChatGPT 'topic' is the former is static and the latter is generated during the interaction." ChatGPT likely uses what he described as "a secondary summarization-style LLM call, operating mainly on the opening user prompt (and possibly early assistant context), constrained by formatting and moderation instructions to generate short, human-readable conversation titles." He added: "Does the summary call include moderation instructions so titles don't reflect, say, explicit content, PII, health information, or other potentially sensitive revelations? I don't know but would be intrigued to find out."

That uncertainty is material. If ChatGPT's title-generation logic applies moderation filters that strip out sensitive information before the title is produced, the data transmitted through the Pixel could be less revealing than the original query. The complaint, for its part, presents a fairly benign example - "Super Bowl 2005 Winner" - rather than a sensitive medical or financial query. Whether the same transmission mechanism operates identically for queries involving health conditions, legal matters, or financial situations remains a factual question that would require discovery to resolve.

Broader context for the ad tech industry

For advertising and marketing professionals, the core issue in this case is not new: third-party tracking pixels embedded on websites capture and transmit user data. What makes the ChatGPT context distinctive is the nature of the data. Web page titles on most sites reflect relatively generic content - product categories, article headlines, navigation paths. ChatGPT conversation titles are generated summaries of user intent, often reflecting the specific question or problem a user brought to the platform. The complaint contends that transmitting those titles to Meta and Google effectively gives both companies a window into query-level behaviour that is qualitatively different from standard browsing signals.

In a February 2026 case, the DOJ argued that conversations with Claude AI are not legally privileged because users voluntarily shared their queries with a third-party platform subject to data collection. That position cuts against the plaintiff's theory: it treats AI chatbot queries as not confidential by default. The Couture complaint argues the opposite - that users had a reasonable expectation of privacy in those queries, and that OpenAI breached that expectation by facilitating interception.

OpenAI has not publicly responded to the complaint as of the time of writing. The complaint describes the company as a Delaware limited liability company with its principal place of business at 1455 3rd Street, San Francisco, CA 94158. Bursor & Fisher, P.A. represents the plaintiff, with Philip L. Fraietta, Max S. Roberts, and Joshua R. Wilner listed as attorneys of record.

Timeline

- August 2025 - Federal jury finds Meta violated CIPA by collecting sensitive health data from Flo app users without consent

- November 13, 2025 - OpenAI must turn over 20 million anonymized ChatGPT conversations to the New York Times after federal judge denies stay request

- February 14, 2026 - DOJ argues conversations with Claude AI are not legally privileged, citing third-party platform data collection policies

- March 3, 2026 - San Francisco Superior Court enters $50 million final judgment against Meta over Facebook user data shared with third-party developers

- March 12, 2026 - Ace Hardware sued in Northern District of California for alleged tracking via Google Analytics and Bazaarvoice Pixel even after cookie opt-out

- March 31, 2026 - Doe v. Perplexity AI filed in Northern District of California, alleging embedded Meta Pixel and Google Analytics transmitted AI conversation data to Meta and Google without user consent

- April 28, 2026 - Network captures included in the ChatGPT complaint, timestamped this date, show GET requests to Facebook and Google servers transmitting ChatGPT query titles alongside user cookie data

- April 30, 2026 - OpenAI updates its US privacy policy, for the first time disclosing that purchase data from advertisers is received, user information is shared with marketing partners, and personal data is used to promote OpenAI products

- May 5, 2026 - OpenAI opens self-serve Ads Manager to all US businesses; StackAdapt joins ChatGPT ad pilot alongside Criteo, Kargo, Adobe, and Pacvue

- May 13, 2026 - Couture v. OpenAI Global, LLC, Case No. 3:26-cv-03000-H-GC, filed in the Southern District of California by Bursor & Fisher, P.A., alleging Facebook Pixel and Google Analytics on ChatGPT.com intercepted user queries and PII without consent

Summary

Who: Plaintiff Amargo Couture, a California resident, filed on behalf of a proposed class of all US residents who accessed ChatGPT.com and had their data disclosed to third parties. The defendant is OpenAI Global, LLC, a Delaware LLC headquartered in San Francisco. Meta Platforms, Inc. and Google, LLC are named as the third-party recipients of the allegedly intercepted data, though they are not defendants in this action.

What: A federal class action alleging that OpenAI embedded the Facebook Pixel and Google Analytics inside ChatGPT.com, and that those tools transmitted users' conversation topic titles and personal identifiers - including Facebook IDs via the c_user and fr cookies, and hashed email addresses via Google Analytics' "em" identifier - to Meta and Google in real time without user knowledge or consent. The complaint asserts four counts: violation of the ECPA (18 U.S.C. section 2511), violation of CIPA section 631, violation of CIPA section 632, and invasion of privacy under the California Constitution and common law.

When: The complaint was filed on May 13, 2026. The underlying conduct is alleged to have occurred throughout 2025 and 2026. Network captures included in the complaint carry timestamps of April 28, 2026.

Where: Filed in the United States District Court for the Southern District of California. The conduct giving rise to the claims is alleged to have occurred on ChatGPT.com, accessible from anywhere but specifically affecting California residents under the California Subclass definition.

Why: The complaint argues that ChatGPT users, who routinely enter sensitive personal, medical, financial, and legal queries into the platform, had a reasonable expectation of privacy in those communications. By incorporating third-party tracking code that forwarded query content and identity-linked cookies to Meta and Google - both of which allegedly used the data to build advertising profiles and serve targeted ads - OpenAI allegedly violated that expectation and breached its legal duties under federal and California wiretapping law. The complaint seeks statutory damages of $10,000 per ECPA violation and $5,000 per CIPA violation, with class membership estimated in the millions.
