Researchers at DoubleVerify published a detailed investigation on March 4, 2026, into a coordinated network of more than 200 Made for Advertising (MFA) domains that uses large language models and an AI image generator to produce emotionally manipulative clickbait at industrial scale - while leaving its own operational code fully visible inside the sites' JavaScript. The network, named AutoBait by the DV Fraud Lab, has generated tens of millions of advertising impressions, with unprotected advertisers unknowingly paying for placements on what appear to be independent lifestyle blogs.

The report was authored by DV Fraud Lab researchers Arik Nagornov, Merav Geles, and Lia Bader. It is one of the most technically detailed public accounts of how an AI-powered MFA operation actually functions, because the network's operators made an operational security error: as they rushed to scale across hundreds of domains, they left the content-generation system - code, prompts, and all - exposed within the sites' client-side JavaScript.

That exposure gives the industry a rare, unobstructed view of how AI slop is manufactured and monetized at scale.

A $2.25 article generating hundreds of ad impressions

The economics of AutoBait are stark. According to the DV Fraud Lab report, the network uses a top-tier text-to-image AI model - specifically black-forest-labs/flux-1.1-pro - to generate images at a cost of 4 cents per slide. Articles are structured as slideshows, with some reaching 56 slides in length. Each slide can carry eight ad banners, and those ads refresh every few seconds.

The arithmetic is simple and deliberate. A single article may cost less than $2.25 to produce while creating hundreds of distinct ad-serving opportunities. Once ad revenue covers that production cost, every additional filled impression is close to pure margin for the operator. In one month, according to DoubleVerify, an AutoBait-style network can produce tens of thousands of pages with millions of ad-serving opportunities. Even a modest fill rate generates significant revenue.
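The figures above can be worked through in a few lines. This is a back-of-the-envelope sketch, not DoubleVerify's model: the per-slide image cost, slide count, and banner count come from the report as described here, while `refreshes_per_slide` is a hypothetical parameter, since the report says only that ads refresh every few seconds. Text-generation costs are not broken out in the article and are left out.

```python
# Back-of-the-envelope model of AutoBait article economics.
# Constants come from the figures cited in the article; the refresh
# count is an assumption used only for illustration.

IMAGE_COST_PER_SLIDE = 0.04   # article: 4 cents per generated image
MAX_SLIDES = 56               # article: some slideshows reach 56 slides
BANNERS_PER_SLIDE = 8         # article: up to eight ad banners per slide


def article_image_cost(slides: int = MAX_SLIDES) -> float:
    """Image-generation cost for one slideshow article, in dollars."""
    return round(slides * IMAGE_COST_PER_SLIDE, 2)


def ad_opportunities(slides: int = MAX_SLIDES, refreshes_per_slide: int = 0) -> int:
    """Ad slots served if a reader views every slide.

    refreshes_per_slide is hypothetical: each refresh re-serves all banners.
    """
    return slides * BANNERS_PER_SLIDE * (1 + refreshes_per_slide)


print(article_image_cost())                     # 2.24
print(ad_opportunities())                       # 448
print(ad_opportunities(refreshes_per_slide=2))  # 1344
```

Even with no refreshes at all, a fully viewed 56-slide article exposes 448 ad slots against roughly $2.24 in image costs.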

This cost structure places legitimate publishers at a structural disadvantage in open programmatic auctions. A human journalist writing a single article at anything approaching professional rates cannot compete on price-per-impression against a system that creates content for under $2.25. The problem is not just fraud in the traditional sense - it is a fundamental disruption of the economic model that funds quality journalism.

The code left in plain sight

What makes the AutoBait investigation unusual is the granularity of the evidence. Because the operators exposed their JavaScript, researchers could read the exact prompts used to instruct the large language model.

The text prompt instructs the model to generate listicle-style articles using a templated framework with three configurable variables: [TITLE], [SUMMARY_LENGTH], and [SLIDES_COUNT]. From there, the instructions become highly specific about manipulation. According to the exposed code, the LLM is told to "start with a brief, attention-snatching summary" and to "promise shocking or little-known insights so readers feel compelled to dive in immediately."
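Because the template variables sat in client-side code, even a simple text scan could surface them. The sketch below is illustrative only and is not DoubleVerify's detection method: the marker list is drawn from details in this article, and the function name and sample script are hypothetical.

```python
# Scan a script's source text for prompt-template markers.
# The markers are taken from the article; treating them as detection
# signals is an assumption made for this sketch.

MARKERS = ("[TITLE]", "[SUMMARY_LENGTH]", "[SLIDES_COUNT]", "flux-1.1-pro")


def find_prompt_markers(js_source: str) -> dict:
    """Return a count for each marker that appears at least once."""
    counts = {}
    for marker in MARKERS:
        hits = js_source.count(marker)
        if hits:
            counts[marker] = hits
    return counts


# Hypothetical script content, written for this example.
sample = (
    'const prompt = "Write a listicle titled [TITLE] with [SLIDES_COUNT] slides";'
    'const model = "black-forest-labs/flux-1.1-pro";'
)
print(find_prompt_markers(sample))
# {'[TITLE]': 1, '[SLIDES_COUNT]': 1, 'flux-1.1-pro': 1}
```

A scan like this only works while operators leave the strings in the clear; minified or server-side prompts would not be caught.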

Each slide must contain a headline of four to seven words - described in the prompt as "ultra-literal," with examples like "A mole with a funny shape" or "A sore that won't heal." The body copy, 50 to 70 words per slide, is required to "inject real emotion (fear, anger, shock, relief) into every paragraph." The ordering instruction is equally deliberate: the first five to ten slides must be "the most sensational or shocking points - anything that stops someone mid-scroll."
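The length rules in the prompt leave a structural fingerprint that can be checked mechanically. The following is a minimal sketch of such a check, assuming slides have already been extracted as (headline, body) pairs; the function names are hypothetical, and plenty of legitimate content would also fall in these ranges, so this is at best a weak signal on its own.

```python
# Check how closely a slideshow follows the length rules quoted from
# the exposed prompt: 4-7 word headlines and 50-70 word bodies.

def word_count(text: str) -> int:
    """Count whitespace-separated words."""
    return len(text.split())


def matches_slide_template(headline: str, body: str) -> bool:
    """True if one slide fits both length ranges from the prompt."""
    return 4 <= word_count(headline) <= 7 and 50 <= word_count(body) <= 70


def template_match_ratio(slides: list) -> float:
    """Share of (headline, body) pairs that fit the template."""
    if not slides:
        return 0.0
    hits = sum(matches_slide_template(h, b) for h, b in slides)
    return hits / len(slides)


# Headline is one of the examples quoted in the prompt; bodies are filler.
headline = "A sore that won't heal"
fits = " ".join(["word"] * 60)
too_short = " ".join(["word"] * 10)
print(template_match_ratio([(headline, fits), (headline, too_short)]))  # 0.5
```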

The image prompts are no less calculated. The AI is instructed to produce "ultra-realistic" photos that look like they were "casually taken on a smartphone by a real person - unfiltered, unstaged, and emotionally authentic." The code explicitly states that images should "NOT look artificial, stylized, or generated by AI." That phrase - written by the operators themselves - is a direct acknowledgment that the entire operation is built on deception.

For images involving people, the prompt specifies that subjects should be women aged 30 or older, shown with "raw, candid emotion matching the text - fatigue, frustration, shock, or relief," wearing "plain or mismatched" clothing with "messy hair" and "unfiltered skin texture." No eye contact. No posed looks. No smiles.

Psychological engineering at scale

The AutoBait prompts are not simply instructions to produce content. They are a documented methodology for exploiting psychological vulnerabilities. The exposed code instructs the model to "ask a direct question, hint at danger or relief, and convey urgency" in each paragraph. It calls for content that makes "the reader feel something visceral." It demands specificity designed to trigger the imagination: "name colors, objects, or settings exactly. Paint a scene someone could immediately picture and photograph."

This is clickbait engineered with the precision of a conversion rate optimization exercise, applied to emotionally charged topics - health symptoms, financial fears, physical warnings - and deployed across hundreds of domains simultaneously. The DV Fraud Lab describes the content as exhibiting "repetitive phrasing, statistical word choices and occasional hallucinations" that render it often nonsensical on close reading. The articles are not designed to be read carefully. They are designed to be clicked.

The Trivia and Personality content types in the AutoBait system follow the same automated structure. According to the exposed code, the trivia system generates exactly 100 questions per quiz, each with a heading, question, three answer choices, a correct answer index, and a post-question explanation. The personality quiz variant generates exactly 100 questions as well, but with only two answer options each, and produces two final personality archetypes. Both content types use the same flux-1.1-pro image generation pipeline with identical instructions to make the images look like unedited smartphone photography.
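The quiz formats described above map naturally onto a small schema. The sketch below models them with Python dataclasses; the class and field names are hypothetical, chosen to mirror the article's description rather than the operators' actual code, and the article does not say whether personality questions carry any fields beyond the question and its two options.

```python
from dataclasses import dataclass
from typing import List

QUESTIONS_PER_QUIZ = 100  # article: exactly 100 questions for both quiz types


@dataclass
class TriviaQuestion:
    heading: str
    question: str
    choices: List[str]    # article: three answer choices
    correct_index: int    # position of the correct answer in choices
    explanation: str      # shown after the question is answered

    def is_valid(self) -> bool:
        return len(self.choices) == 3 and 0 <= self.correct_index < 3


@dataclass
class PersonalityQuestion:
    question: str
    options: List[str]    # article: two answer options

    def is_valid(self) -> bool:
        return len(self.options) == 2


@dataclass
class PersonalityQuiz:
    questions: List[PersonalityQuestion]
    archetypes: List[str]  # article: two final personality archetypes

    def is_valid(self) -> bool:
        return (
            len(self.questions) == QUESTIONS_PER_QUIZ
            and len(self.archetypes) == 2
            and all(q.is_valid() for q in self.questions)
        )
```

A rigid shape like this, repeated across unrelated-looking domains, is the kind of regularity that automated generation tends to leave behind.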

Scale and detection challenges

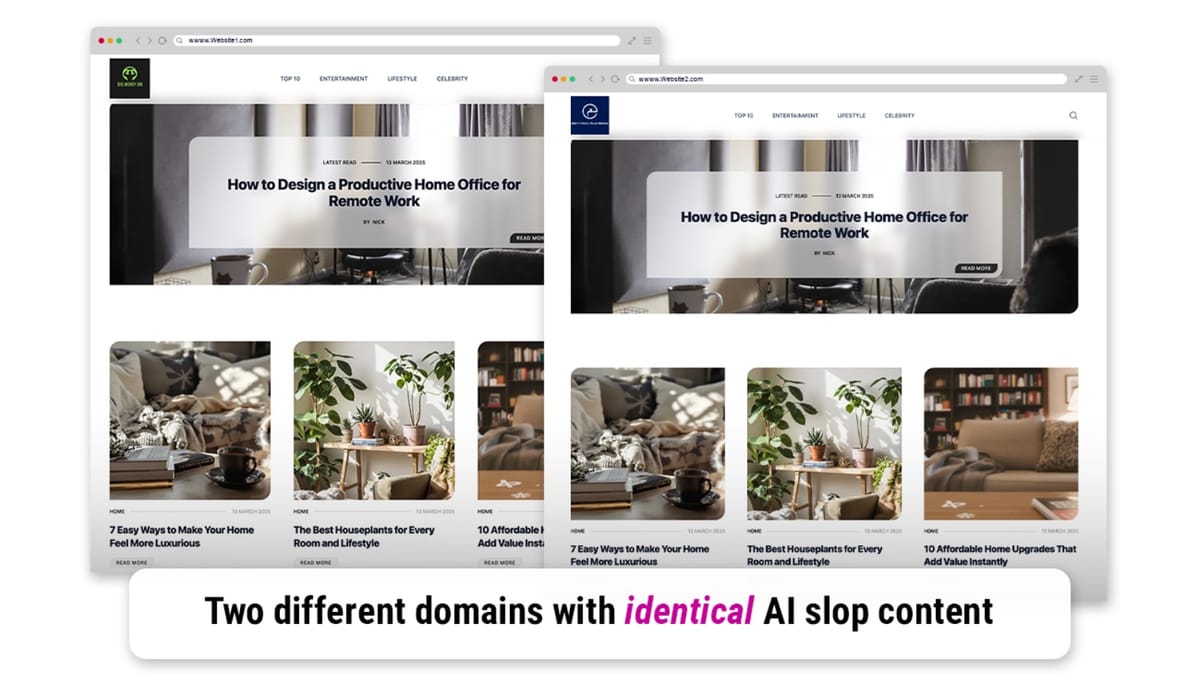

The proliferation of AI-generated content for advertising purposes is not a new concern, but AutoBait represents a step change in operational scale and automation. In just the first few weeks of 2026, according to DoubleVerify, the DV Fraud Lab identified thousands of AI slop websites across multiple languages. Each domain in the AutoBait network appears, in isolation, to be an independent lifestyle blog. It is only when viewed as a coordinated network that the pattern becomes visible.
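One way to picture the move from isolated domains to a visible network is to group sites by shared code. The sketch below is a simplified illustration, not the DV Fraud Lab's method: it assumes each domain's script source has already been collected, uses reserved example domains, and relies on exact hashing, which is brittle against even small code changes.

```python
import hashlib
from collections import defaultdict


def script_fingerprint(js_source: str) -> str:
    """Hash a script after removing all whitespace, so that formatting
    differences alone do not change the fingerprint."""
    normalized = "".join(js_source.split())
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()


def group_domains_by_script(scripts: dict) -> list:
    """Return sorted lists of domains that share an identical fingerprint.

    scripts maps a domain name to its script source text.
    Domains with a unique script are omitted.
    """
    groups = defaultdict(list)
    for domain, source in scripts.items():
        groups[script_fingerprint(source)].append(domain)
    return [sorted(domains) for domains in groups.values() if len(domains) > 1]


# Hypothetical inputs for illustration.
scripts = {
    "a.example": "var x = 1;",
    "b.example": "var x=1;",
    "c.example": "var y = 2;",
}
print(group_domains_by_script(scripts))  # [['a.example', 'b.example']]
```

A production system would likely need fuzzy matching and additional signals, since operators can trivially vary their scripts between domains.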

The Association of National Advertisers has estimated that approximately 15 percent of current ad spend reaches MFA sites - a figure that predates the acceleration in AI content generation tools visible throughout 2025 and early 2026. That percentage could be higher now, given the documented drop in production costs and the ease with which a small team can operate a large network of sites using automated content systems.

DoubleVerify's own Global Insights Report from June 2024 found a 19 percent year-over-year increase in MFA impression volume in 2023, based on analysis of over one trillion impressions from more than 2,000 brands globally. In the same report, 57 percent of the advertisers DV surveyed worldwide viewed AI-generated content as a challenge for the digital advertising ecosystem. The AutoBait report arrives as that challenge has grown significantly more acute.

Integral Ad Science, in a July 2025 analysis, identified AI-generated slop sites as a critical threat to programmatic effectiveness. Quality inventory, according to IAS data, delivers 91 percent higher conversion rates than ad clutter environments. A December 2025 IAS survey of 220 UK media professionals found that 56 percent cited AI-generated content adjacency as a top challenge for 2026, with MFA sites ranking second at 50 percent among advertiser and agency concerns.

AutoBait in the context of a broader pattern

AutoBait is not the first network of this type that DoubleVerify has uncovered. In January 2025, the company publicized initial findings about a network it named Synthetic Echo - also comprising more than 200 AI-generated websites - which used deceptive domain names such as espn24.co.uk and cbsnewz.com to impersonate established news organizations. The Synthetic Echo investigation revealed systematic content plagiarism, with fraudulent domains duplicating and modifying articles from legitimate publishers.

The DV Fraud Lab has also been tracking ads.txt manipulation, documenting more than 100 cases since the standard launched in 2017. A comprehensive alert issued in May 2025 warned that fraudsters were exploiting the ads.txt system to divert advertising revenue from legitimate publishers through increasingly complex manipulation techniques.

The broader fraud picture is substantial. The World Federation of Advertisers has estimated that ad fraud will exceed $50 billion globally in 2025. DoubleVerify's report on ShadowBot from June 2025 documented a single mobile and CTV fraud scheme that generated over 35 million spoofed mobile devices during the first quarter of 2025 alone, at a cost to unprotected advertisers of an estimated $2.5 million.

What distinguishes AutoBait is the transparency of its mechanics. Most fraud investigations rely on behavioral signals and traffic pattern analysis. The exposed JavaScript in the AutoBait network provides something rarer: documentary evidence of intent. The operators wrote down exactly what they were trying to do - manipulate users emotionally, deceive them visually, and maximize the number of ads served per session - and left those instructions on the public internet.

A product response and its context

DoubleVerify states that existing clients are already protected from AutoBait and similar networks through AI SlopStopper, described as a genAI website avoidance and detection solution. According to the company, the product is already operating across web environments and is scheduled to expand to social platforms during 2026. DoubleVerify positions AI SlopStopper within its broader DV Media AdVantage platform alongside fraud detection, viewability, brand suitability, and attention measurement tools.

The AutoBait report arrives during a period of significant scrutiny for DoubleVerify. A class action lawsuit filed in May 2025 alleged securities violations related to the company's representations about bot detection effectiveness. The suit followed a 36 percent single-day decline in the stock price, which came after the company disclosed disappointing fourth-quarter results. A shareholder derivative lawsuit filed in December 2025 targeted CEO Mark Zagorski, CFO Nicola Allais, and eight board members, alleging the company systematically misled investors about challenges to its core business model.

Neither lawsuit makes claims about the AutoBait investigation itself. The investigations by the DV Fraud Lab into MFA and AI slop networks are separate from the financial and legal issues the company faces. What the juxtaposition highlights is an industry under pressure from multiple directions simultaneously - fraudulent inventory expanding, detection credibility challenged, and the open programmatic web losing share to closed platforms.

What the industry is watching

For the marketing community, the AutoBait investigation illustrates why the detection of AI slop at scale remains a technically difficult problem. DoubleVerify's ability to read the exposed code was, in this case, a gift of the operators' own security lapse. Without that exposure, detecting the network would have required identifying behavioral and linguistic patterns across hundreds of seemingly independent domains - a much harder task, and one that becomes harder still as the language models powering these systems improve.

The broader context for AI slop in digital advertising has been building for years. EMarketer has projected that as much as 90 percent of web content could be AI-generated by 2026. Merriam-Webster named "slop" its 2025 Word of the Year in December 2025. The industry has responded with a range of tools - DoubleVerify's tiered MFA categories launched in early 2024, IAS classification systems, and supply-side integrations through platforms like OpenX - but the economics continue to favor the operators of these networks.

Every ad dollar that reaches an AutoBait domain is a dollar that did not reach a publisher investing in human-produced content. At AutoBait's cost structure, that trade-off happens quietly, automatically, and at scale - unless detection systems specifically identify and block it.

Timeline

- February 2024: DoubleVerify launches enhanced tiered MFA classification covering High, Medium, and Low categories, using a combination of human expertise and AI-powered analysis.

- June 14, 2024: DoubleVerify releases its Global Insights Report, documenting a 19 percent year-over-year increase in MFA impression volume in 2023, based on more than one trillion impressions from over 2,000 brands globally.

- January 2025: DoubleVerify publicizes initial findings about Synthetic Echo, a network of more than 200 AI-generated websites using deceptive domain names to impersonate established news publishers.

- May 22, 2025: DoubleVerify issues a comprehensive alert documenting more than 100 cases of ads.txt manipulation since 2017, warning that fraudsters are diverting revenue from legitimate publishers.

- May 22, 2025: A class action lawsuit is filed against DoubleVerify alleging securities violations related to its bot detection representations, following a 36 percent single-day stock price decline.

- June 25, 2025: DoubleVerify uncovers ShadowBot, a scheme generating over 35 million spoofed mobile devices during Q1 2025 and costing unprotected advertisers an estimated $2.5 million.

- July 17, 2025: Integral Ad Science identifies AI-generated slop sites as a critical programmatic threat, noting that quality inventory delivers 91 percent higher conversion rates than ad clutter environments.

- September 25, 2025: DoubleVerify discloses a surge in AI-powered fraudulent mobile applications executing ad fraud schemes across mobile platforms.

- December 8, 2025: IAS surveys of 290 U.S. and 220 UK media professionals find that 53 percent of U.S. respondents and 56 percent of UK respondents cite AI-generated content adjacency as a top challenge for 2026.

- December 9, 2025: A shareholder derivative lawsuit is filed against DoubleVerify executives, alleging systematic misleading of investors about bot detection capabilities and business challenges.

- January 3, 2026: PPC Land publishes a comprehensive explainer on AI slop, tracking industry milestones from 2019 to the present.

- March 4, 2026: DoubleVerify's Fraud Lab publishes the AutoBait investigation, authored by Arik Nagornov, Merav Geles, and Lia Bader, exposing the AI content generation code and operational structure of a 200-plus domain MFA network generating tens of millions of impressions.

Summary

Who: DoubleVerify's Fraud Lab - specifically researchers Arik Nagornov, Merav Geles, and Lia Bader - conducted the investigation into AutoBait. The network's operators, unnamed in the report, run a coordinated MFA scheme targeting programmatic advertisers. The affected parties are unprotected advertisers across the open web and legitimate publishers competing for the same ad spend.

What: AutoBait is a network of more than 200 Made for Advertising domains using large language models and the black-forest-labs/flux-1.1-pro image generation model to produce high-volume, emotionally manipulative slideshow articles. Each article costs less than $2.25 to generate and can create hundreds of ad-serving opportunities across as many as 56 slides, each carrying up to eight ad banners that refresh every few seconds. The operators left their AI content-generation code - including detailed text and image prompts - exposed inside the sites' public JavaScript.

When: The investigation was published on March 4, 2026. The AutoBait network had been operating before that date, generating tens of millions of impressions. DoubleVerify noted that thousands of similar AI slop websites were identified in just the first few weeks of 2026.

Where: The AutoBait domains operate across the open programmatic web, presenting to buyers as independent lifestyle blogs. The underlying content generation infrastructure uses third-party LLM and image generation APIs, with configuration code stored in client-side JavaScript on the live domains.

Why: The economics of AI content generation make MFA operations highly profitable at minimal cost. At under $2.25 per article and hundreds of ad-serving opportunities per page, a small team can operate hundreds of sites simultaneously and generate significant revenue from even a modest share of filled impressions. The financial model depends entirely on advertisers lacking tools to distinguish automated low-quality content from human-produced editorial content in programmatic auctions.