Google expanded the capabilities of its Gemini app on April 9, 2026, enabling the assistant to generate functional, interactive simulations and models directly inside a conversation. The change marks a significant departure from the format that has characterized AI chat interfaces since their emergence - responses that combined text with static images or fixed diagrams. Now, at least for users on the Pro model, a single prompt can produce a live, controllable simulation.

According to the announcement published on Google's The Keyword blog, the feature is rolling out globally to all Gemini app users. Access requires selecting the Pro model in the prompt bar at gemini.google.com before submitting a request. The simplest way to trigger the capability, according to the announcement, is to begin a prompt with "show me" or "help me visualize", followed by the concept in question.

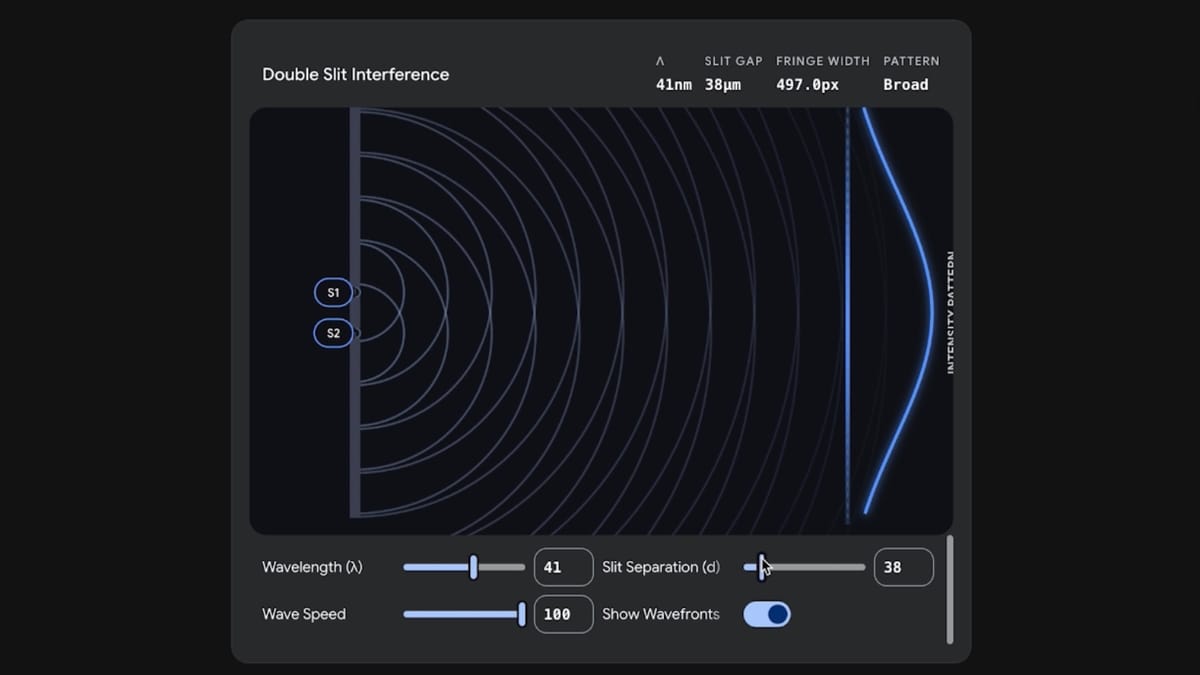

The scope of what qualifies as a complex concept is deliberately broad. Rotating a molecule, running a physics engine, or exploring how gravitational forces create a stable orbital path all fall within the stated use cases. The orbital mechanics example is worth examining in detail, because it illustrates what separates this output from a static diagram.

From fixed images to adjustable physics

According to the announcement, a user exploring how the moon orbits the Earth is no longer limited to a fixed illustration. The new output type includes sliders and input fields for variables such as initial velocity and gravitational strength. Adjusting either parameter produces an immediate visual response - the simulated orbit changes in real time to reflect the new values. That interactivity is the core distinction. A static diagram communicates a single state; a simulation allows exploration of the parameter space.
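The following sketch makes the slider behavior concrete. It shows the kind of calculation an orbit simulation could rerun each time a slider moves. It assumes a single body orbiting a fixed central mass in two dimensions, in arbitrary units. The names `initial_velocity` and `gm` (a gravitational parameter standing in for "gravitational strength") are hypothetical, and Google has not published the code Gemini generates. The sketch uses velocity Verlet integration, a common choice for orbits because its energy error stays bounded over many revolutions.

```python
import math


def simulate_orbit(initial_velocity: float, gm: float = 1.0, r0: float = 1.0,
                   dt: float = 0.001, steps: int = 20000) -> list[tuple[float, float]]:
    """Integrate a 2D orbit around a fixed mass at the origin (arbitrary units).

    Hypothetical parameters: the body starts at (r0, 0) moving in +y at
    `initial_velocity`; `gm` plays the role of a "gravitational strength" slider.
    """
    x, y = r0, 0.0
    vx, vy = 0.0, initial_velocity

    def accel(px: float, py: float) -> tuple[float, float]:
        # Inverse-square attraction toward the origin: a = -gm * r_vec / |r|^3
        r3 = (px * px + py * py) ** 1.5
        return -gm * px / r3, -gm * py / r3

    ax, ay = accel(x, y)
    path = [(x, y)]
    for _ in range(steps):
        # Velocity Verlet: half-step velocity, full-step position, half-step velocity
        vx += 0.5 * dt * ax
        vy += 0.5 * dt * ay
        x += dt * vx
        y += dt * vy
        ax, ay = accel(x, y)
        vx += 0.5 * dt * ax
        vy += 0.5 * dt * ay
        path.append((x, y))
    return path


def classify_orbit(initial_velocity: float, gm: float = 1.0, r0: float = 1.0) -> str:
    """Specific orbital energy decides the outcome: negative is bound, otherwise it escapes."""
    energy = 0.5 * initial_velocity ** 2 - gm / r0
    return "bound" if energy < 0 else "escape"
```

With `gm=1` and `r0=1`, an initial velocity of 1.0 traces a circle. Other values below about 1.414 (the escape speed, the square root of 2 in these units) stretch the path into an ellipse, and anything faster escapes. Dragging a velocity slider would show the same progression.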

This capability continues a line of development that Google has pursued with its Gemini 3 model since November 2025. When Google launched Gemini 3 on November 18, 2025, the announcement introduced generative UI - a technical approach in which the model constructs interfaces dynamically in response to a query rather than selecting from a library of pre-built templates. The model writes code for visualizations, games, widgets, and simulations and executes that code as part of the response. The April 9 announcement extends that same capability into the standalone Gemini chat application, making it accessible to a much wider user base than AI Mode in Google Search, where generative UI first appeared.

Google deployed Gemini 3 in Search with model-designed interfaces on December 18, 2025, following the November 18 model launch. Robby Stein, Vice President of Product for Google Search, described a concrete example of the simulation capability during a December 18 podcast episode of Google AI Release Notes. "I was teaching my daughter about lift and I asked it to create a simulation or a visualization for it and it made this crazy little window with vectors, like arrows running over a wing," Stein stated. "Through these sliders, it would adjust the wing and then show how much lift was occurring, like, where the arrows would start under the wing and pushing the plane up." That exchange happened within Search's AI Mode. The April 9 update brings comparable output to the dedicated Gemini application.
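A wing simulation like the one Stein describes would most plausibly be driven by the standard lift equation, L = ½ρv²SC_L. The sketch below makes two simplifying assumptions, both labeled in the code. The lift coefficient uses the thin-airfoil approximation C_L ≈ 2πα, valid for small angles of attack α in radians. The air density is the standard sea-level value of 1.225 kg/m³. The function name and parameters are hypothetical, and nothing here reflects what Gemini actually generated for that query.

```python
import math

# Assumption: standard-atmosphere air density at sea level, in kg/m^3
SEA_LEVEL_AIR_DENSITY = 1.225


def lift_newtons(airspeed_ms: float, wing_area_m2: float, angle_of_attack_deg: float,
                 rho: float = SEA_LEVEL_AIR_DENSITY) -> float:
    """Lift L = 0.5 * rho * v^2 * S * C_L, with a thin-airfoil C_L = 2 * pi * alpha.

    Assumption: valid only for small angles of attack. Real wings stall at larger
    angles (often somewhere around 15 degrees), which this sketch does not model.
    """
    alpha = math.radians(angle_of_attack_deg)
    c_l = 2 * math.pi * alpha  # thin-airfoil lift coefficient
    return 0.5 * rho * airspeed_ms ** 2 * wing_area_m2 * c_l
```

Each argument maps naturally onto a slider. Doubling the airspeed quadruples the lift, and raising the angle of attack increases it linearly until the approximation breaks down.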

The technical mechanism behind the output

The underlying process involves the Gemini model generating code - most likely HTML and JavaScript or similar web technologies - that is then executed within the chat interface to produce the interactive result. This is different from retrieving a pre-built simulation from an external source. The model constructs the visualization from scratch in response to the specific query, which is why arbitrary or niche topics can produce usable output. A prompt about fractal geometry, for instance, can yield a render of fractal patterns alongside adjustable parameters.
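The fractal case shows what "adjustable parameters" can mean in practice. The sketch below is a minimal escape-time Mandelbrot renderer. The iteration limit `max_iter` and the viewport bounds are the values a generated interface might expose as sliders. It is a hypothetical illustration in Python, not Gemini's output: Google has not published the generated code, and the article only infers that it is web code.

```python
def escape_time(c: complex, max_iter: int) -> int:
    """Iterations before z -> z*z + c leaves the radius-2 disk (max_iter if it never does)."""
    z = 0j
    for n in range(max_iter):
        if abs(z) > 2.0:
            return n
        z = z * z + c
    return max_iter


def render_ascii(width: int = 60, height: int = 24, max_iter: int = 50,
                 x_min: float = -2.0, x_max: float = 1.0,
                 y_min: float = -1.2, y_max: float = 1.2) -> str:
    """Render the Mandelbrot set as text; each keyword argument is a candidate 'slider'."""
    palette = " .:-=+*#%@"
    rows = []
    for j in range(height):
        # Map the row index to an imaginary coordinate (top row = y_max)
        y = y_max - (y_max - y_min) * j / (height - 1)
        row = []
        for i in range(width):
            # Map the column index to a real coordinate
            x = x_min + (x_max - x_min) * i / (width - 1)
            n = escape_time(complex(x, y), max_iter)
            # Points that never escape (inside the set) get the darkest character
            row.append(palette[min(n * len(palette) // max_iter, len(palette) - 1)])
        rows.append("".join(row))
    return "\n".join(rows)


if __name__ == "__main__":
    print(render_ascii(max_iter=30))
```

Raising `max_iter` sharpens the boundary of the set, and narrowing the `x_min`/`x_max`/`y_min`/`y_max` window zooms in. These are the two controls a fractal explorer most commonly offers.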

When Gemini 3 launched in November 2025, Google researchers including Yaniv Leviathan (Google Fellow), Dani Valevski (Senior Staff Software Engineer), and Yossi Matias (Vice President and Head of Google Research) described the generative UI approach as differing fundamentally from static, predefined interfaces. The prompts feeding the system can range from a single word to detailed multi-paragraph specifications, with the model determining the appropriate interface type and component layout from context. That flexibility is precisely what allows a casual conversational prompt - "help me visualize how a stable orbit works" - to produce a functional physics sandbox rather than a generic illustration.

The January 27, 2026 upgrade to AI Overviews, which made Gemini 3 the default model for AI Overviews globally, had already extended the simulation capability to billions of search results pages. The April 9 Gemini app update is a parallel distribution move, pushing the same class of output into a different product surface where users engage in longer, more exploratory conversations. The two surfaces now share a common capability, though the conversational context of the Gemini app may produce more tailored simulations given the extended back-and-forth that chat naturally enables.

Context: a user base of 750 million monthly active users

The scale of the rollout matters. According to Alphabet's fourth quarter 2025 earnings announcement released February 4, 2026, the Gemini app had reached 750 million monthly active users. That figure represented a substantial climb from the 650 million monthly active users reported in October 2025, and from 450 million in July 2025. The platform's growth over that eight-month period - from 450 million to 750 million - coincided with a sequence of feature additions including the Nano Banana image generation tool released in August 2025 and the subsequent Gemini 3 integration.

Similarweb data published January 22, 2026, showed Gemini capturing 22% of global AI website traffic, up from 19.5% in mid-December 2025 and from just 5.3% twelve months prior. That relative increase in share of roughly 315% over twelve months reflects sustained platform growth rather than a single product moment. Each feature addition has contributed to cumulative engagement. The interactive simulation capability announced April 9 represents another functional differentiator in an increasingly competitive AI assistant market.
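The growth figures in the preceding paragraphs are relative changes, and they can be checked directly from the reported numbers. The 315% figure describes growth in Gemini's share of traffic, equivalent to 16.7 percentage points. It does not mean a 315-point jump. The helper name `pct_change` is illustrative.

```python
def pct_change(old: float, new: float) -> float:
    """Relative change from old to new, in percent."""
    return (new - old) / old * 100


# Similarweb share of global AI website traffic (percent), as reported above
share_year_ago, share_jan_2026 = 5.3, 22.0
# Gemini app monthly active users (millions), as reported above
mau_jul_2025, mau_q4_2025 = 450, 750

print(f"traffic share: {pct_change(share_year_ago, share_jan_2026):.0f}% increase")  # 315% increase
print(f"MAU: {pct_change(mau_jul_2025, mau_q4_2025):.0f}% increase")  # 67% increase
```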

What Google is doing with the Gemini app is progressively narrowing the gap between asking a question and understanding the answer. Text responses require the reader to construct a mental model from description. A static diagram provides one pre-selected view. A live simulation lets a user probe the underlying system - adjust a variable, observe the effect, and develop intuition about causal relationships that text alone struggles to convey.

The Pro model requirement and global availability

The feature is not universally active across all Gemini tiers. According to the announcement, generating interactive simulations requires the Pro model. Users must select it explicitly from the model selector in the prompt bar at gemini.google.com before submitting the request. Selecting the Pro model has been the gateway to other advanced capabilities in the Gemini app, and the pattern here is consistent with previous launches.

The global rollout distinguishes this from earlier capability launches that initially restricted access to specific markets or subscription tiers. When generative UI first appeared in Search's AI Mode in November 2025, access required a Google AI Pro or Ultra subscription in the United States. The April 9 announcement does not carry those geographic or subscription restrictions for the base interactive simulation feature - it is rolling out globally to Gemini app users who select Pro from the model dropdown.

This matters for international users who have been on the receiving end of staged launches. The Gemini mobile app expanded to Europe in June 2024, following the initial February 2024 launch in select markets. AI Overviews, separately, expanded to nine European countries including Germany, France, Italy, and Spain in March 2025. The April 9 simulation update applies globally from the start.

Implications for how AI assistants handle technical queries

The shift from text-plus-static-image to live simulation has particular relevance for queries involving physics, mathematics, chemistry, engineering, and any field where understanding emerges from interaction with a system rather than from reading about it. A molecular biology student asking about protein folding receives something fundamentally different when the response includes a rotatable 3D model versus a labeled 2D illustration. A physics student exploring orbital mechanics gets more from adjustable sliders than from a written description of the equations.
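A rotatable 3D model can be reduced to a simple core operation: the same rotation matrix is applied to every atom's coordinates whenever the user drags or moves a slider. The sketch below uses a rotation about the z-axis. The molecule is water, with an approximate textbook geometry (O-H bond length of about 0.957 Å) chosen purely for illustration. The function names are hypothetical, and this does not describe how Gemini's generated viewers work.

```python
import math

Vec3 = tuple[float, float, float]


def rotate_z(point: Vec3, angle_deg: float) -> Vec3:
    """Rotate a point counterclockwise about the z-axis by angle_deg."""
    t = math.radians(angle_deg)
    x, y, z = point
    return (x * math.cos(t) - y * math.sin(t),
            x * math.sin(t) + y * math.cos(t),
            z)


# Approximate water geometry in angstroms (O-H about 0.957 Å); illustrative only
water: dict[str, Vec3] = {
    "O": (0.0, 0.0, 0.0),
    "H1": (0.757, 0.586, 0.0),
    "H2": (-0.757, 0.586, 0.0),
}


def rotate_molecule(atoms: dict[str, Vec3], angle_deg: float) -> dict[str, Vec3]:
    """Apply one rotation to every atom, so bond lengths and angles are preserved."""
    return {name: rotate_z(p, angle_deg) for name, p in atoms.items()}
```

Because a rotation is rigid, the molecule's shape survives any sequence of drags. A viewer only has to recompute coordinates and redraw.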

Whether the generated simulations are consistently accurate at the parameter boundaries - extreme values, unusual configurations - is a separate question. Google has acknowledged in related product contexts that generation times for complex interactive outputs can exceed one minute, and that occasional output inaccuracies are areas of ongoing research. Those limitations applied specifically to generative UI in Search when Gemini 3 launched in November 2025, and the same technical constraints likely apply here.

For the marketing technology community, the April 9 announcement is less directly about advertising tools and more about what the Gemini platform is becoming as a product. Google's NewFront 2026 presentation on March 23 at Pier 57 in New York City made clear that the underlying Gemini model's advances propagate across every Google product simultaneously. A Gemini app capable of generating live simulations is also a Gemini model with expanded reasoning and code generation capability - and that same model powers the AI features embedded in Google Ads, Display & Video 360, and Search Ads 360.

Google denied plans to introduce advertising within the Gemini app in December 2025, with Vice President of Global Ads Dan Taylor stating on December 8 that there were no current plans to add ads to the Gemini app. Whether that position holds as the app accumulates users and feature depth remains an open question for the advertising industry. A Gemini app that generates interactive, multi-minute engagement experiences around complex topics presents a qualitatively different advertising surface than a text-response chatbot - though Google has not signaled any plans to use it as such.

The generative UI approach also connects directly to how Google has been rethinking search interfaces. When AI Overviews were upgraded to Gemini 3 globally in January 2026, Google described scenarios where users could transition seamlessly from a search result into an extended AI conversation. A simulation embedded in a search result carries the same logic: rather than sending a user to an external website to find an interactive physics demo, Google provides it inline. That dynamic has implications for content publishers and advertisers alike, as engagement that previously required navigating to a third-party site increasingly resolves within Google's own interface.

Summary

Who: Google, through its Gemini app team, announced the feature on April 9, 2026.

What: The Gemini app gained the ability to generate interactive simulations and models directly inside chat. Users can adjust sliders, input numerical values, and manipulate variables in real time within the generated output. The feature builds on the generative UI technology underlying Gemini 3, which writes and executes code to construct custom visual interfaces in response to individual prompts.

When: The announcement was published on April 9, 2026. The rollout began on that date.

Where: Available globally at gemini.google.com. The Pro model must be selected from the model selector in the prompt bar to access the feature.

Why: The stated purpose is to help users better understand complex topics through direct interaction with functional models rather than static diagrams. The capability extends a line of development that began with Gemini 3's generative UI launch in November 2025, which first introduced dynamic interface generation in Search's AI Mode before expanding to the standalone Gemini application.

Timeline

- December 6, 2023: Google announces Gemini AI, its multimodal model capable of processing text, code, audio, images, and video.

- February 2024: Gemini app launches in select markets, replacing Google Bard.

- June 5, 2024: Gemini mobile app expands to Europe, following initial launch in select markets.

- July 2025: Gemini app reaches 450 million monthly active users.

- July 7, 2025: Google reveals Gemini 2.5 multimodal advances, including tokenization efficiency reducing frame representation from 256 to 64 tokens.

- October 2025: Gemini app reaches 650 million monthly active users.

- November 18, 2025: Google launches Gemini 3 with generative UI, introducing dynamic interface generation and interactive simulations in AI Mode for Google AI Pro and Ultra subscribers in the United States.

- December 8, 2025: Google denies plans to add ads to the Gemini app, after Adweek reported briefings with advertisers. VP Dan Taylor states: "There are no ads in the Gemini app and there are no current plans to change that."

- December 18, 2025: Google deploys Gemini 3 in Search with model-designed interfaces, enabling real-time simulation generation for complex queries.

- January 22, 2026: Gemini captures 22% of global AI website traffic according to Similarweb, up from 5.3% twelve months prior.

- January 27, 2026: Google makes Gemini 3 the default model for AI Overviews globally and introduces seamless transitions from AI Overviews to AI Mode.

- February 4, 2026: Alphabet reports Gemini app has reached 750 million monthly active users in Q4 2025 earnings announcement.

- March 23, 2026: Google presents Gemini advantage at NewFront 2026, integrating Gemini into Google Marketing Platform with live sports bidding, Confidential Publisher Match, and Ads Advisor.

- April 9, 2026: Google announces the Gemini app can now generate interactive simulations and 3D models globally for all users on the Pro model.