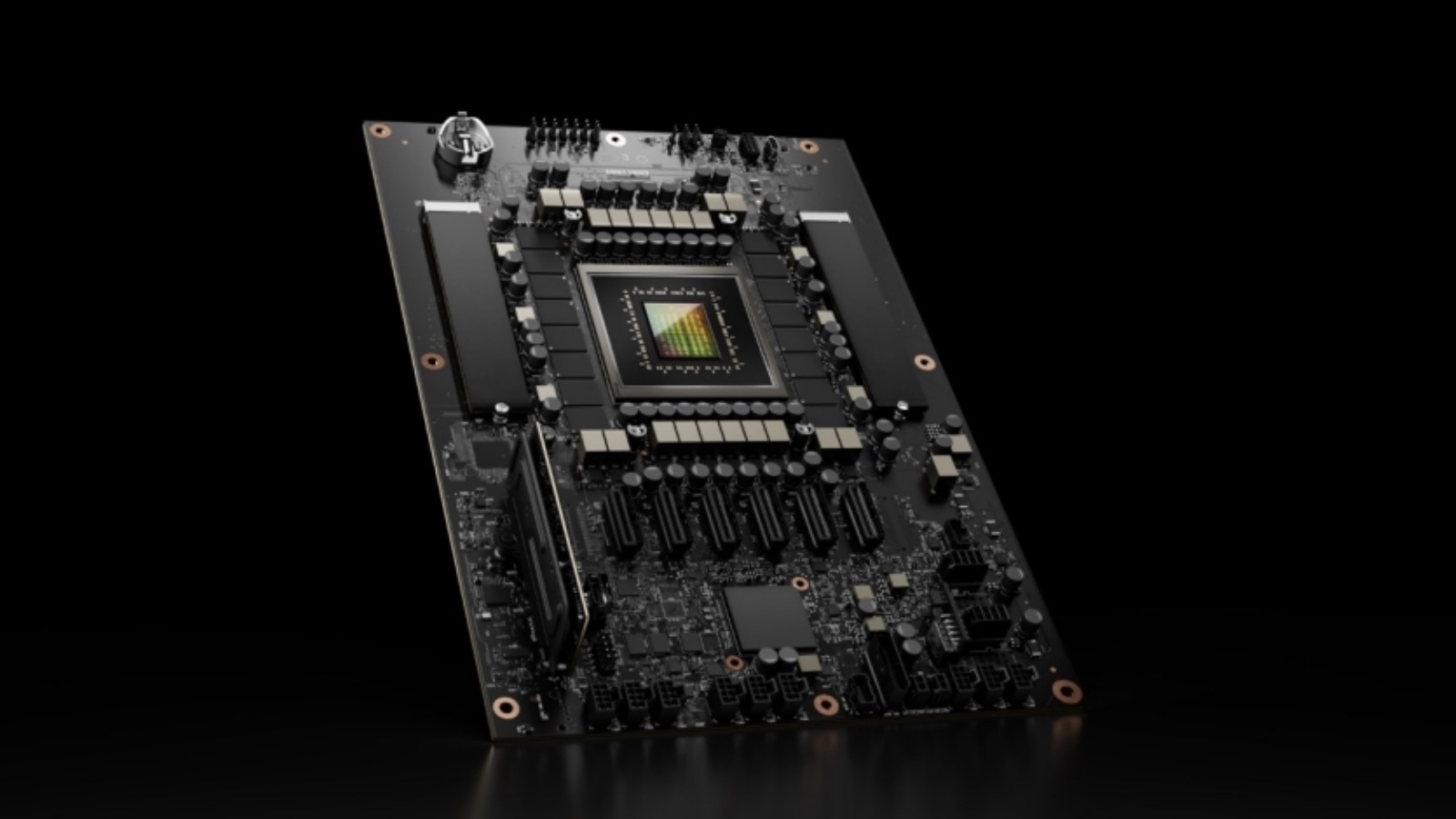

LiveRamp today announced native support for NVIDIA AI infrastructure inside its clean rooms, upgrading the underlying compute architecture to handle the most demanding AI model training and inference workloads at up to 15 times the speed of CPU-based environments. The move addresses a structural limitation that has constrained how AI companies work with clean room data - and it repositions LiveRamp as something closer to a compute platform than a pure data collaboration layer.

The San Francisco-based company, listed on the New York Stock Exchange under the ticker RAMP, serves a network of more than 900 brands, publishers, and platforms. Until today, its clean room environments ran on CPU-based compute. That created a quiet but persistent problem: AI partners wanting to bring their models into LiveRamp's secure environments were forced to rearchitect those models from GPU-native formats to run on CPU infrastructure - a process that can absorb weeks of engineering work, introduce performance regressions, and add validation overhead before a single byte of brand data is touched.

What the GPU upgrade changes

The technical shift is direct. LiveRamp has replaced the CPU-based compute layer inside its clean rooms with GPU-optimized infrastructure provided by NVIDIA. AI partners can now bring existing code into the clean room environment without modification. According to the announcement, the plug-and-play model means no complex or time-consuming recoding is required to connect heavy-duty models to the platform.

Preliminary tests show model performance up to 15 times faster than the prior CPU-based environments. LiveRamp did not publish benchmark conditions or say which model architectures were tested, so the figure is best read as an upper bound rather than a typical result. The company framed it in terms of shorter performance optimization timelines for marketers. The 15x number applies to both training - the process of building and refining a model on data - and inference, the process of running a trained model to generate predictions. Both are computationally intensive operations that GPUs handle with substantially greater throughput than general-purpose processors.
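To make the headline multiplier concrete, here is a minimal sketch of the arithmetic. The 30-hour baseline is a hypothetical workload chosen for illustration, not a figure from the announcement, and the helper name `accelerated_hours` is likewise an assumption. It treats the speedup as uniform across the whole job, which real workloads rarely achieve.

```python
def accelerated_hours(cpu_hours: float, speedup: float) -> float:
    """Wall-clock hours after applying a uniform speedup factor."""
    if cpu_hours < 0:
        raise ValueError("cpu_hours must be non-negative")
    if speedup <= 0:
        raise ValueError("speedup must be positive")
    return cpu_hours / speedup


# Hypothetical baseline: a training run that takes 30 hours on CPU.
CPU_HOURS = 30.0

# "Up to 15x" is a ceiling, so lower factors are shown alongside it.
for factor in (1, 5, 15):
    hours = accelerated_hours(CPU_HOURS, factor)
    print(f"{factor:>2}x speedup: {hours:.1f} hours")
```

At the full 15x, the hypothetical 30-hour run finishes in 2 hours. At a more modest 5x it still takes 6, which is why the missing benchmark conditions matter.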

Matt Karasick, Chief Product Officer at LiveRamp, put the upgrade in terms of what it unlocks for the platform's network. "Whether a data scientist is training a predictive model on billions of rows of transactions, or a marketer needs a new model to improve measurement," he said, "LiveRamp empowers clients to train and run their most advanced models at scale, without ever compromising data security or proprietary IP." The scale reference matters: billions of rows of transaction data is not a workload CPU environments handle gracefully. GPU parallelism is what makes that kind of computation viable inside a governed clean room rather than an open cloud environment.

NVIDIA framed the same development from the compute side. Jamie Allan, Director of AdTech & Digital Marketing Industries at NVIDIA, described GPUs as the foundational layer for the next generation of marketing technology. "GPUs are the engine of the next generation AI marketing tech stack, purpose-built for the most demanding training and inference workloads," he said. "Extending the power of NVIDIA's accelerated computing through LiveRamp gives marketers a frictionless foundation to scale their marketing and transform the speed of innovation." The framing positions this not as a feature add but as an architectural shift - the kind of compute that previously required a separate cloud environment is now embedded inside the clean room itself.

Clean rooms, model weights, and data security

What makes the GPU integration structurally significant is precisely where it sits: inside LiveRamp's clean room architecture, not adjacent to it. Clean rooms are secure data collaboration environments where multiple parties can analyze combined datasets without any single party accessing the raw data of another. LiveRamp's infrastructure uses its RampID pseudonymized identifier system to match data across advertisers, publishers, and data partners while preventing individual-level exposure.

Adding GPU compute inside that perimeter means computationally intensive models can train on brand first-party data without the model weights or the underlying data leaving the secure environment. This is a meaningful protection for AI companies whose models represent substantial proprietary investment. A company that has trained a sophisticated outcome prediction model on years of campaign data cannot hand that model's weights to a brand without exposing its intellectual property. Clean room compute solves that: the model runs inside the secure environment, the brand's data never leaves, and neither do the model weights.

The data movement question is equally important from the brand's perspective. Previously, a brand wanting to train a model on its first-party data using GPU infrastructure would typically need to export permissioned or aggregated datasets to an external cloud GPU environment. Each data movement step introduces latency, governance complexity, and surface area for potential exposure. Running the same workload inside LiveRamp's clean room eliminates that movement entirely.

Proprietary models can be run inside the clean room to provide campaign optimization, audience selection, and performance prediction services to brands, according to the announcement - without raw data ever leaving the secure environment. That capability serves a specific market segment: AI companies that want to offer model-as-a-service to brands but cannot afford to expose their code or weights in the process.

The inPowered AI perspective

inPowered AI, a sell-side AI company building outcome-driven models for programmatic advertising, participated as an early partner in the integration. Pirouz Nilforoush, President and Co-Founder at inPowered AI, explained the problem the GPU infrastructure solves for companies in that position. "Training and scaling outcome-driven models on the sell-side requires both massive compute and a secure data foundation," he said. The combination of those two requirements - serious compute and secure data access - has historically forced a tradeoff: companies either accepted the performance limitations of CPU-based clean rooms or moved data outside secure environments to access GPU infrastructure.

The GPU-enabled clean rooms resolve that tradeoff directly. "LiveRamp's GPU-enabled clean rooms give us the ability to move faster and operate at greater scale," Nilforoush said, "unlocking more precise decisioning across the open web for brands." For a sell-side AI company, more precise decisioning translates into better campaign performance outcomes, which in turn justifies the commercial model of offering AI optimization as a service. The clean room removes the data exposure risk that would otherwise prevent brands from allowing a third-party AI model to train on their first-party customer records.

inPowered AI's involvement as a launch partner also signals the intended customer profile. The GPU integration is not aimed only at large brands with internal data science teams. It targets the ecosystem of AI companies that want to distribute their models through LiveRamp's network of 900+ partners - accessing brand data at scale without the legal and technical friction of bilateral data-sharing agreements.

Availability and go-to-market

The integration is in limited release as of today. General availability is expected later in 2026. LiveRamp is directing interested parties to info@liveramp.com for early access.

That staged rollout is consistent with how LiveRamp has introduced other significant infrastructure changes over the past eighteen months. The company launched agentic AI orchestration in October 2025, enabling autonomous AI agents to access identity resolution, segmentation, activation, measurement, and clean room capabilities - positioning itself as the first data collaboration platform to give AI agents governed access to its full marketing toolset. It then expanded its Data Marketplace in January 2026 to include licensing data for AI training, accessing pre-built AI models, and deploying AI-powered applications through governed infrastructure. In March 2026, live AI agents from Newton Research and SemantIQ were deployed for cross-media measurement and healthcare audience building.

The GPU upgrade does not stand alone in that sequence. It provides the raw compute capacity that makes the agents, models, and marketplace infrastructure useful at production scale.

Context: the gap between clean rooms and GPU compute

Clean rooms were designed for analytics workloads: SQL queries across matched datasets, attribution calculations, audience overlap measurements. They were not built for the iterative, matrix-heavy operations that neural network training demands. As a result, companies wanting to use clean room data for AI model training typically faced a two-environment problem - run privacy-preserving data preparation inside the clean room, then export to a separate GPU cluster for training, then reimport results. Each boundary crossing between environments is a governance event and a potential exposure point.
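The two-environment problem can be sketched as a simple count of environment changes between consecutive pipeline steps. This is an illustrative model only: the step names and the environment labels `clean_room` and `external_gpu` are assumptions made for the example, not LiveRamp terminology or any real API.

```python
from typing import List, Tuple

# Each step is (step name, environment it runs in).
Step = Tuple[str, str]


def boundary_crossings(pipeline: List[Step]) -> int:
    """Count consecutive step pairs that run in different environments."""
    return sum(
        1
        for (_, env_a), (_, env_b) in zip(pipeline, pipeline[1:])
        if env_a != env_b
    )


# Previous pattern: prepare in the clean room, train elsewhere, reimport.
two_environment: List[Step] = [
    ("prepare data", "clean_room"),
    ("train model", "external_gpu"),
    ("reimport results", "clean_room"),
]

# GPU compute inside the clean room: every step stays in one environment.
single_environment: List[Step] = [
    ("prepare data", "clean_room"),
    ("train model", "clean_room"),
    ("use results", "clean_room"),
]

print(boundary_crossings(two_environment))     # 2
print(boundary_crossings(single_environment))  # 0
```

In this model, each crossing is one governance event of the kind described above. Moving training inside the clean room takes the count from two to zero.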

LiveRamp's clean room technology has expanded substantially since the January 2024 acquisition of Habu, a $200 million deal that brought clean room capabilities spanning clouds, warehouses, and walled gardens. In October 2025, LiveRamp enabled retail media networks to measure Meta campaign performance through its Clean Room, demonstrating measurement use cases beyond traditional audience activation. In December 2025, Uber launched its Intelligence insights platform through LiveRamp's infrastructure - a significant validation of the platform as a neutral data collaboration layer for companies with large consumer data holdings. And this month, DIRECTV Advertising became the first multichannel video programming distributor to integrate with LiveRamp's CAPI Hub, enabling real-time conversion signals across premium CTV inventory ahead of the 2026-27 Upfront season.

Each of those milestones expanded what LiveRamp's clean room can do with data. The GPU integration expands what it can do with compute. The combination - governed data access plus GPU-class processing power - is what makes training sophisticated AI models inside a clean room viable.

What it means for AI partners and marketers

For AI companies building advertising models, the GPU integration opens a route to market that did not previously exist at this scale. Running proprietary models inside LiveRamp's secure environment means accessing brand first-party data without the legal scaffolding of a full data-sharing agreement, without exposing model weights, and without managing a separate GPU cluster. The clean room handles governance. NVIDIA's infrastructure handles compute. LiveRamp's identity layer - RampID, connecting over 900 partners - provides the data breadth that gives those models meaningful signal density.

The signal density question is worth dwelling on. A predictive model trained exclusively on a single brand's first-party customer records is bounded by that brand's data. A model trained inside a clean room, where that first-party data is matched and enriched against broader signals from LiveRamp's partner network, has access to a substantially larger and more diverse training corpus. GPU compute is what makes training on that larger dataset feasible within the same secure environment, rather than requiring a separate infrastructure arrangement.

For marketers without internal data science teams, the upgrade has a different implication. Rather than building models from scratch, they can collaborate with AI model providers directly through the Marketplace or clean room environment, accessing advanced optimization capabilities without surrendering control over their first-party data. The announcement describes this as overcoming data science resource limitations through seamless access to advanced AI models - a positioning that targets the majority of marketing organizations that cannot staff and maintain GPU infrastructure themselves. Karasick's point about making "the highest-performance computing and collaboration easy and accessible" to the network's 900+ members is where the commercial logic lands: the GPU upgrade is not just a technical improvement for data scientists; it is a capability shift for practitioners who have never written a line of model training code.

Industry backdrop

The GPU integration arrives as the advertising technology industry is actively building AI-native infrastructure at every layer. As ppc.land has tracked across its coverage of LiveRamp's recent product cadence, the company has moved deliberately from data matching toward a full-stack AI platform. The partnership with Akkio announced in April 2026 integrates conversational AI into measurement reports, allowing non-technical users to interrogate data through natural language. The IAB Tech Lab's AAMP initiative, formally named in February 2026, is building standards infrastructure for the agentic advertising layer that sits above all of this - the autonomous systems that will eventually consume model outputs to make real-time decisions at scale.

Those agentic systems require compute. The faster and more capable the underlying models, the more precisely those agents can optimize. GPU compute inside clean rooms is, in that context, infrastructure for the agentic advertising stack - not a standalone product feature but a foundational layer that makes everything running on top of it more capable. Allan's characterization of GPUs as "purpose-built for the most demanding training and inference workloads" points at why the clean room compute upgrade matters beyond its immediate use cases. The next generation of agentic advertising depends on models that are fast, frequently retrained, and operating on rich first-party data. LiveRamp is building the infrastructure that makes all three conditions achievable inside a single governed environment.

Timeline

- January 2024 - LiveRamp acquires Habu, a data clean room software provider, in a cash and stock transaction valued at approximately $200 million, gaining cross-cloud clean room capabilities

- August 6, 2025 - LiveRamp reports Q1 fiscal 2026 revenue of $194.8 million, representing 10.7% year-over-year growth, with Data Marketplace generating $35 million

- October 1, 2025 - LiveRamp launches agentic AI orchestration, AI-powered segmentation, and AI-powered Marketplace search, positioning itself as the first data collaboration platform to give AI agents governed access to its full marketing toolset

- October 23, 2025 - LiveRamp enables retail media networks to measure Meta campaign performance through its Clean Room platform

- November 3, 2025 - LiveRamp donates the User Context Protocol to IAB Tech Lab to establish an open standard for AI agent signal exchange in advertising

- December 8, 2025 - Uber launches Intelligence insights platform powered by LiveRamp's clean room infrastructure

- January 6, 2026 - LiveRamp expands its Data Marketplace to include data and models for AI training, as well as governed access to partner AI-powered applications and agents

- February 26, 2026 - IAB Tech Lab formally names its agentic advertising initiative AAMP, consolidating seven protocol components including the User Context Protocol donated by LiveRamp

- March 3, 2026 - LiveRamp deploys live AI agents from Newton Research and SemantIQ for cross-media measurement and healthcare provider audience building

- April 7, 2026 - LiveRamp and Akkio announce a strategic partnership integrating Akkio's conversational AI engine into LiveRamp's measurement reports

- April 16, 2026 - DIRECTV Advertising becomes the first MVPD to integrate with LiveRamp's CAPI Hub, enabling real-time conversion signals for CTV inventory

- April 27, 2026 - LiveRamp announces native support for NVIDIA AI infrastructure inside its clean rooms, with preliminary tests showing up to 15x faster model performance compared to CPU-based environments; integration currently in limited release with GA expected later in 2026

Summary

Who: LiveRamp (NYSE: RAMP), headquartered in San Francisco, California, in partnership with NVIDIA. Key voices in the announcement include Matt Karasick, Chief Product Officer at LiveRamp; Jamie Allan, Director of AdTech & Digital Marketing Industries at NVIDIA; and Pirouz Nilforoush, President and Co-Founder at inPowered AI.

What: LiveRamp announced native support for NVIDIA AI infrastructure, upgrading the compute layer inside its clean room architecture from CPU to GPU-optimized infrastructure. The upgrade allows AI partners to run existing models inside LiveRamp clean rooms without rearchitecting them, and enables brands to train and deploy sophisticated AI models on first-party data at up to 15 times the speed of prior CPU-based environments, without exposing data or model weights.

When: Announced April 27, 2026. The integration is currently in limited release, with general availability expected later in 2026.

Where: LiveRamp is headquartered in San Francisco, California, with offices worldwide. The integration is available through LiveRamp's clean rooms and via the LiveRamp Marketplace.

Why: CPU-based clean room infrastructure created a technical barrier for AI partners, who were forced to rearchitect GPU-native models before bringing them into secure environments. The GPU upgrade removes that barrier, enabling plug-and-play model deployment inside clean rooms. For brands, it means faster AI model training and inference on first-party data without compromising data governance or intellectual property. The integration extends LiveRamp's strategy of building a full AI infrastructure layer on top of its data collaboration network, adding compute capacity to complement its existing identity resolution, agentic AI, and marketplace capabilities.
