Meta this week announced a sweeping set of anti-scam measures across Facebook, Messenger, and WhatsApp, combining new artificial intelligence detection tools, expanded advertiser verification requirements, and a series of law enforcement partnerships that resulted in arrests and the disabling of hundreds of thousands of accounts. The announcement, published March 11, 2026, on Meta's official newsroom, arrives as the company faces heightened scrutiny over fraud on its platforms.

The scale of the challenge is significant. According to the announcement, Meta removed over 159 million scam ads in 2025 for violating its policies - up from the 134 million scam ads the company reported removing as of its December 3, 2025 Global Anti-Scam Summit disclosure. Of those 159 million, according to Meta, 92% were taken down before anyone reported them to the company. That figure reflects a significant improvement in proactive detection. At the same time, Meta says it took down 10.9 million accounts on Facebook and Instagram associated with criminal scam centers.
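The reported figures can be broken down with some quick arithmetic. The sketch below uses only the numbers Meta disclosed; the variable names are illustrative, and because Meta says "over 159 million", the results are best read as lower bounds. The 134 million figure was a running total as of December 3, so the difference is what was added afterward, not a year-over-year growth rate.

```python
# Figures as reported by Meta; variable names are illustrative.
scam_ads_removed_2025 = 159_000_000   # "over 159 million" for full-year 2025
scam_ads_as_of_dec3 = 134_000_000     # running total disclosed December 3, 2025
proactive_share = 0.92                # share removed before any user report

# round() guards against floating-point noise in the multiplication.
proactive_removals = round(scam_ads_removed_2025 * proactive_share)
removed_after_report = scam_ads_removed_2025 - proactive_removals
added_after_dec3 = scam_ads_removed_2025 - scam_ads_as_of_dec3

print(f"Removed before any report: {proactive_removals:,}")   # 146,280,000
print(f"Removed after a report:    {removed_after_report:,}") # 12,720,000
print(f"Added after December 3:    {added_after_dec3:,}")     # 25,000,000
```

Put differently, even at a 92% proactive rate, roughly 12.7 million scam ads were still live when someone reported them.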

The announcement follows months of sustained scrutiny. Internal company documents reviewed by Reuters in November 2025 indicated that Meta's platforms were exposing users to an estimated 15 billion higher-risk scam advertisements daily, and that Meta internally projected earning approximately 10% of its 2024 annual revenue - roughly $16 billion - from advertisements promoting scams and banned goods. The company has disputed elements of that characterization.

New AI detection capabilities

The technical core of today's announcement is a set of AI systems designed to catch deception that traditional rule-based detection misses. According to Meta, the company's engineers built systems capable of analyzing multiple signals simultaneously - text, images, and surrounding context - to identify "a broader range of more sophisticated scam patterns faster and at scale."

Two specific AI-powered capabilities stand out. The first targets celebrity and brand impersonation, a category that has become one of the most lucrative vectors for fraud on social platforms. According to Meta, AI now helps analyze fake fan sentiment, misleading biographical information, and apparent associations with public figures or brands. The system can, according to the company, process "far more contextual information about public figures, enhancing our ability to catch deceptive impersonations." This builds on the facial recognition approach Meta described at the Global Anti-Scam Summit in December 2025, which more than doubled the volume of fraudulent ads detected during testing phases.

The second capability addresses deceptive links and domain impersonation. Meta states it uses advanced AI to proactively detect content that redirects users to webpages designed to mimic legitimate ones - a tactic common in phishing and investment fraud. The technology, according to the announcement, identifies "a broader range of deceptive behaviors with higher precision to protect thousands of brands against impersonation."
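Meta has not published how its domain-impersonation detection works. As a rough illustration of the general idea only, the sketch below flags lookalike domains with a simple string-similarity check from Python's standard library. The protected-domain list, the character-swap table, the threshold, and the function names are all assumptions made for this example, and a production system would rely on far richer signals than string distance.

```python
from difflib import SequenceMatcher
from typing import Optional

# Hypothetical list of brand domains to protect (illustrative only).
PROTECTED_DOMAINS = ["paypal.com", "facebook.com", "whatsapp.com"]

# A few digit-for-letter swaps seen in lookalike domains (assumed, not exhaustive).
HOMOGLYPHS = str.maketrans({"0": "o", "1": "l", "3": "e", "5": "s"})


def normalize(domain: str) -> str:
    """Lowercase the domain and undo simple digit-for-letter swaps."""
    return domain.lower().strip(".").translate(HOMOGLYPHS)


def lookalike_of(domain: str, threshold: float = 0.85) -> Optional[str]:
    """Return the protected domain this one appears to imitate, or None."""
    if domain.lower() in PROTECTED_DOMAINS:
        return None  # the genuine domain is not an impersonation
    candidate = normalize(domain)
    for brand in PROTECTED_DOMAINS:
        # ratio() is 1.0 for identical strings and falls toward 0.0 as they diverge.
        if SequenceMatcher(None, candidate, brand).ratio() >= threshold:
            return brand
    return None


print(lookalike_of("paypa1.com"))    # paypal.com
print(lookalike_of("faceb00k.com"))  # facebook.com
print(lookalike_of("example.org"))   # None
```

String similarity alone catches only the crudest spoofing, which is presumably why Meta describes combining it with broader behavioral and contextual analysis.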

User-facing warnings: Facebook, WhatsApp, and Messenger

Beyond back-end detection, Meta is introducing or expanding three user-facing tools. The first is a Facebook feature that generates alerts when users send or receive a friend request from an account showing certain signs of suspicious activity - for instance, when there are few mutual friends or when the account indicates a different country location in its profile. The feature is described as currently in testing.
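The two example signals Meta cites, few mutual friends and a mismatched country, can be expressed as a simple rule check. The sketch below is hypothetical: the `FriendRequest` fields, the `warning_reasons` function, and the mutual-friend threshold are invented for illustration, and Meta has not disclosed which signals or thresholds its feature actually uses.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class FriendRequest:
    """Hypothetical view of the signals mentioned in the announcement."""
    mutual_friends: int
    sender_profile_country: str
    recipient_country: str


def warning_reasons(req: FriendRequest, min_mutual_friends: int = 2) -> List[str]:
    """Return the reasons a request looks suspicious; empty means no warning."""
    reasons = []
    if req.mutual_friends < min_mutual_friends:  # threshold is an assumption
        reasons.append("few mutual friends")
    if req.sender_profile_country != req.recipient_country:
        reasons.append("different country in profile")
    return reasons


print(warning_reasons(FriendRequest(0, "NG", "GB")))
# ['few mutual friends', 'different country in profile']
print(warning_reasons(FriendRequest(12, "GB", "GB")))
# []
```

Returning the list of reasons rather than a single yes/no flag mirrors the announcement's description of an alert that tells the user why an account looks suspicious.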

The second targets a specific WhatsApp attack vector. According to the announcement, scammers have been tricking users into linking their WhatsApp accounts to attacker-controlled devices, sometimes by posing as talent competitions asking people to enter a phone number and a linking code, or by presenting fraudulent QR codes. WhatsApp will now generate alerts when behavioral signals suggest a device-linking request might be suspicious. The warning shows where the request originates and flags it as a potential scam before the link is made.

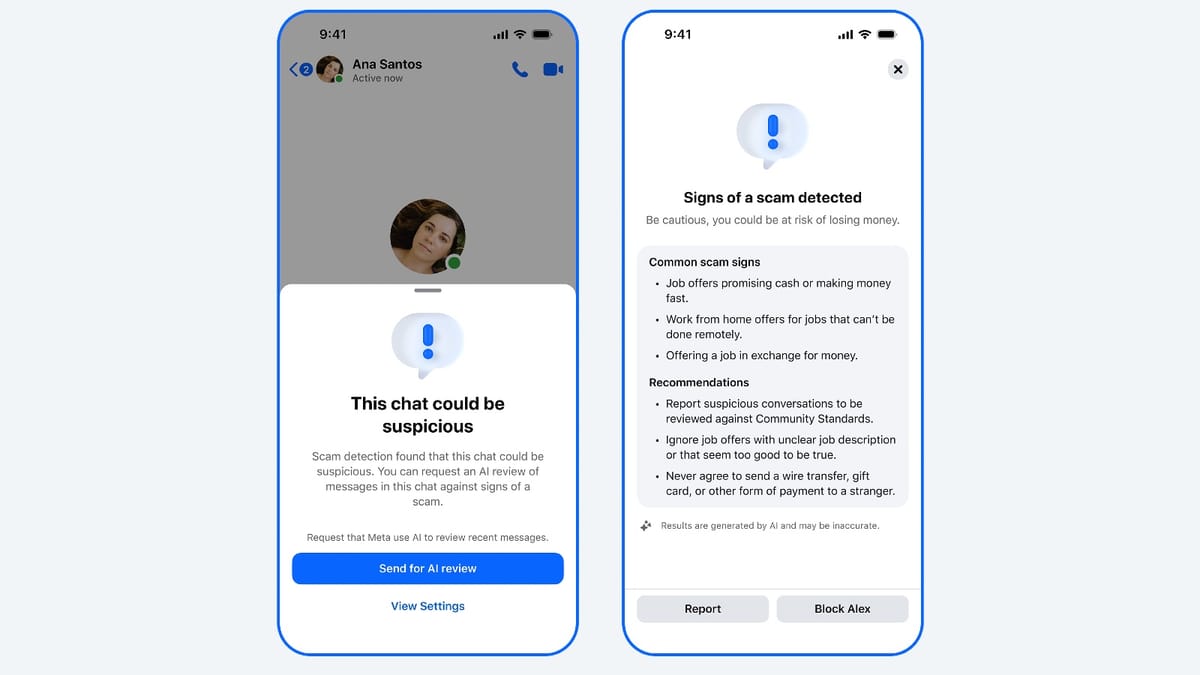

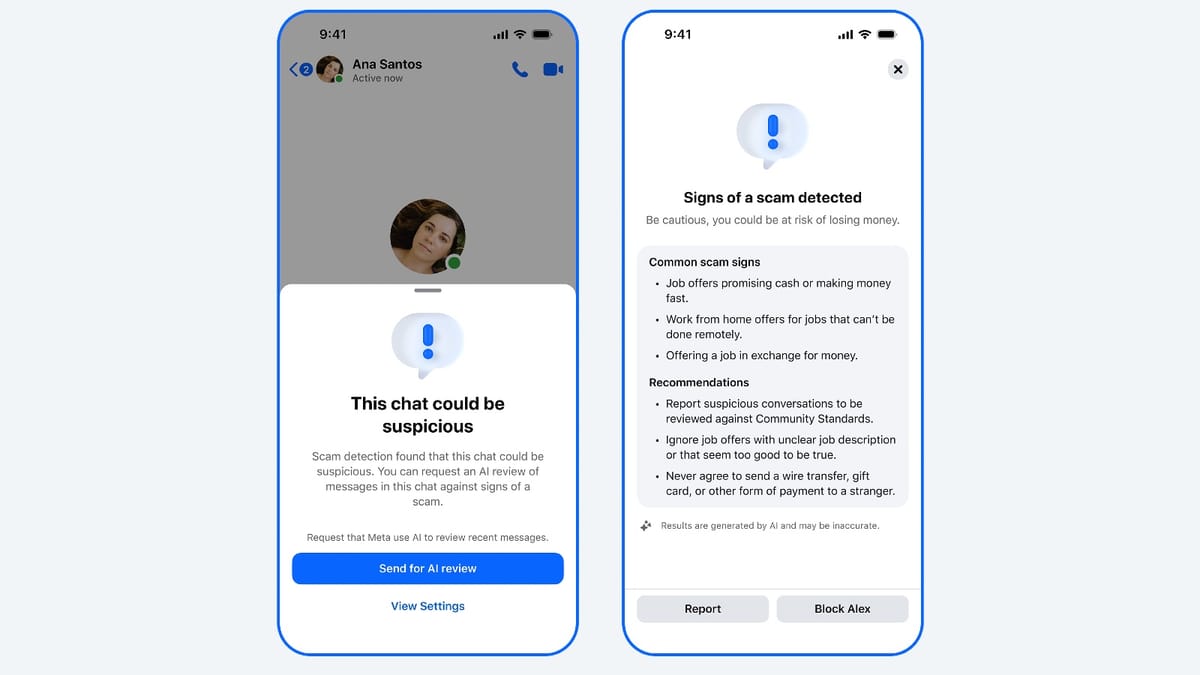

The third is an expansion of advanced scam detection on Messenger. Meta states it is rolling out this system to more countries during March 2026. When a new contact's chat contains patterns consistent with common scams - such as suspicious job offers - users are warned and invited to share recent chat messages for an AI scam review. If a potential scam is detected, the platform provides information on common scam types and suggests actions including blocking or reporting the account.

Advertiser verification: from 70% to 90%

For advertisers, the most consequential change is likely the expansion of advertiser verification. According to today's announcement, Meta is working to ensure that verified advertisers drive 90% of its ads revenue by the end of 2026, up from the current 70%. The remaining 10% would come from what Meta describes as low-risk businesses.

The expansion covers the highest-risk advertising categories. According to the announcement, "The verification process helps promote greater transparency, limiting attempts to misrepresent advertiser identity." Meta frames this as one layer in a multi-layered approach to platform safety, not a standalone solution.

For context, Meta has been expanding verification requirements selectively across markets for some time. The company announced verification requirements for advertisers targeting Thailand in October 2025, requiring affected advertisers to verify both the beneficiary and payer of their campaigns, with results displayed in the Ad Library. That policy gave advertisers a seven-day window to comply or lose the ability to deliver ads to Thai users.

The 90% target represents a meaningful shift. Today, roughly 30% of Meta's ad revenue flows through unverified advertisers - a pool large enough to encompass significant fraud exposure. The company has not disclosed the specific verification methodology it will apply to reach the new threshold, nor has it specified which advertising categories beyond existing high-risk areas will be brought into scope.
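The size of that shift is easier to see in relative terms. The short calculation below uses only the 70% and 90% figures from the announcement; the variable names are illustrative.

```python
from fractions import Fraction

verified_now = Fraction(70, 100)     # current share of ad revenue from verified advertisers
verified_target = Fraction(90, 100)  # Meta's stated end-of-2026 target

unverified_now = 1 - verified_now        # 3/10
unverified_target = 1 - verified_target  # 1/10

# Relative shrinkage of the unverified revenue share if the target is met.
relative_reduction = (unverified_now - unverified_target) / unverified_now

print(f"Unverified share today:     {float(unverified_now):.0%}")     # 30%
print(f"Unverified share at target: {float(unverified_target):.0%}")  # 10%
print(f"Relative reduction:         {float(relative_reduction):.0%}") # 67%
```

A 20-point rise in the verified share therefore cuts the unverified share by two-thirds, assuming the target is met.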

Law enforcement actions: 150,000 accounts, 21 arrests

Today's announcement also details enforcement activity carried out alongside global law enforcement agencies. According to Meta, a Joint Disruption Week involving the FBI, the Department of Justice Scam Center Strike Force, the Royal Thai Police Anti-Cyber Scam Center, and other global law enforcement agencies resulted in Meta investigators disabling over 150,000 accounts associated with scam center networks. The same operation contributed to 21 arrests made by the Royal Thai Police.

This represents the second Joint Disruption Week with the Royal Thai Police since December 2025. According to Meta, the first such operation, in December, led to the removal of over 59,000 Facebook pages and accounts linked to money laundering operations and illegal recruitment schemes.

A separate enforcement action used Meta's Fraud Intelligence and Reciprocal Exchange program, known as FIRE. Through that mechanism, Meta removed, disabled, and unpublished more than 15,000 assets on Facebook and Instagram. Those assets, according to the announcement, used deceptive personas claiming to be Japanese women seeking relationships with millennials and older men, with some accounts also promoting gambling-related content.

A third operation involved collaboration between Meta, the Nigeria Police Force, and the UK National Crime Agency. According to the announcement, the joint effort led to the arrest of seven suspects allegedly involved in a scam center in Agbor, Delta state, Nigeria. The syndicate targeted British and American citizens based in the UK, using fake social media accounts impersonating cryptocurrency traders and Facebook groups.

These enforcement efforts follow directly from Meta's February 26, 2026, lawsuits against deceptive advertisers in Brazil, China, and Vietnam, which targeted celeb-bait and cloaking operators and included cease-and-desist letters to eight former Meta Business Partners accused of selling enforcement evasion services.

Awareness campaigns

The announcement covers four public awareness campaigns running in parallel with the technical and enforcement measures.

The #TrappedinScamCrime campaign, developed in partnership with the United Nations Office on Drugs and Crime, the International Justice Mission, and the US Department of State, has launched across Vietnam, Thailand, Laos, Cambodia, Myanmar, Indonesia, Malaysia, and the Philippines. The campaign addresses online recruitment and human trafficking for forced criminality - a category tied to the scam compound operations Meta has been disrupting.

The Scam Se Bacho campaign, a year-long initiative running in India with the Indian Cyber Crime Coordination Centre and the Securities and Exchange Board of India, features Indian actor Neena Gupta. Educational campaigns in Brazil and Mexico, developed in partnership with the Brazilian Federation of Banks and Mexico's consumer protection agency Profeco, focused on scam awareness during Cybersecurity Awareness Month and the holiday season.

Context for marketing professionals

The advertising industry is watching Meta's anti-scam measures closely, partly because of what they imply for legitimate advertisers and partly because of the context in which they are being announced. IAB Sweden expelled Meta from its membership on March 12, 2026, ruling that the platform's work against deceptive advertising was insufficient - a rare trade body sanction that followed the November 2025 Reuters disclosures about Meta's internal scam ad revenue projections.

For advertisers, the verification expansion carries practical implications. The advertiser support infrastructure at Meta has drawn criticism from practitioners who find themselves unable to resolve account issues through automated systems. Expanding the scope of verification requirements raises questions about what happens when legitimate advertisers fail to complete verification correctly, and whether adequate support will exist to resolve resulting disputes.

The expert reaction to the Reuters documents in November 2025 highlighted the tension between platform scale and enforcement precision. With an estimated 15 billion higher-risk scam ads shown daily across Meta's properties, according to those documents, distinguishing fraudulent from legitimate advertising at a 95% certainty threshold means a meaningful fraction of fraud passes through. The new tools and the raised verification target represent Meta's stated answer to that gap. Whether the operational execution matches the announced ambition remains to be seen.

Meta's 90% verification target also provides a measurable benchmark the industry can track. The company has set an explicit end-of-2026 deadline. That specificity, absent from many platform anti-fraud commitments, creates a defined accountability point.

Timeline

- February 12, 2025 - Meta announces comprehensive romance scam prevention measures, reporting 408,000 accounts removed in 2024

- August 20, 2025 - Former Meta product manager files whistleblower complaint alleging artificial ROAS inflation for Shops ads

- October 27, 2025 - Meta announces expanded advertiser verification requirements for Thailand campaigns

- November 6, 2025 - Reuters publishes internal Meta documents; PPC Land reports Meta earned billions from scam ads through a penalty bidding system

- November 30, 2025 - Industry expert commentary on the Reuters scam ad findings published

- December 3, 2025 - Meta reports removal of 134 million scam ads at the Global Anti-Scam Summit in Washington, DC

- December 2025 - First Joint Disruption Week with the Royal Thai Police; 59,000 Facebook pages and accounts removed

- January 15, 2026 - Meta advertiser support failures documented, raising concerns about verification dispute resolution

- January 22, 2026 - Instagram product scam case study illustrates continued gaps in Meta's ad verification

- February 26, 2026 - Meta files lawsuits against scam advertisers in Brazil, China, and Vietnam for celeb-bait and cloaking

- March 11, 2026 - Meta announces new AI scam detection tools, WhatsApp device-linking warnings, expanded advertiser verification to 90%, and second Joint Disruption Week resulting in 150,000 accounts disabled and 21 arrests by the Royal Thai Police

- March 12, 2026 - IAB Sweden expels Meta from membership over insufficient action against deceptive ads

Summary

Who: Meta Platforms, operating Facebook, Messenger, and WhatsApp, working alongside the FBI, the US Department of Justice Scam Center Strike Force, the Royal Thai Police Anti-Cyber Scam Center, the Nigeria Police Force, the UK National Crime Agency, and awareness campaign partners including the UNODC, IJM, the US Department of State, India's I4C and SEBI, Febraban in Brazil, and Profeco in Mexico.

What: Meta launched new AI-powered scam detection tools targeting celebrity impersonation and domain spoofing, introduced user-facing warnings on Facebook for suspicious friend requests and on WhatsApp for suspicious device-linking attempts, expanded Messenger scam detection to more countries, and announced a plan to raise the share of ad revenue from verified advertisers from 70% to 90% by end of 2026. Concurrent law enforcement operations disabled over 150,000 accounts associated with scam center networks and contributed to 21 arrests.

When: The announcement was published March 11, 2026. The second Joint Disruption Week with the Royal Thai Police took place in early 2026, building on a first operation in December 2025. The 90% advertiser verification target is set for the end of 2026.

Where: The tools and features apply across Meta's global platforms - Facebook, Messenger, and WhatsApp. Law enforcement operations focused on scam center networks, with arrests made in Thailand and disruption of a scam center in Agbor, Delta state, Nigeria. Awareness campaigns are running across eight countries in Southeast Asia and in India, Brazil, and Mexico.

Why: Meta states that criminal scam networks are growing in sophistication, industrializing fraud at scale across platforms and borders. The company faces intensifying regulatory scrutiny and trade body pressure following internal document disclosures in late 2025 that revealed the scale of fraudulent advertising on its platforms. The new measures represent Meta's stated effort to demonstrate active enforcement, expand transparency through advertiser verification, and work with law enforcement to address fraud that transcends any single platform or jurisdiction.