Meta this week announced Incognito Chat with Meta AI on WhatsApp and the Meta AI app, a feature the company describes as a completely private way to interact with artificial intelligence. Built on WhatsApp's Private Processing infrastructure, the launch marks one of the more technically elaborate privacy claims any major platform has made for an AI product - and comes at a moment when Meta faces legal and regulatory pressure over data practices across multiple jurisdictions.

The announcement, dated May 13, 2026, positions the feature as distinct from other incognito-style modes. According to Meta, when a user starts an Incognito Chat with Meta AI on WhatsApp, messages are processed in a secure environment that Meta itself cannot access. Conversations are not saved and disappear by default. "Other apps have introduced incognito-style modes, but they can still see the questions coming in and the answers going out," Meta stated in its announcement. "Incognito Chat with Meta AI is truly private, meaning no one - not even Meta - can read your conversations."

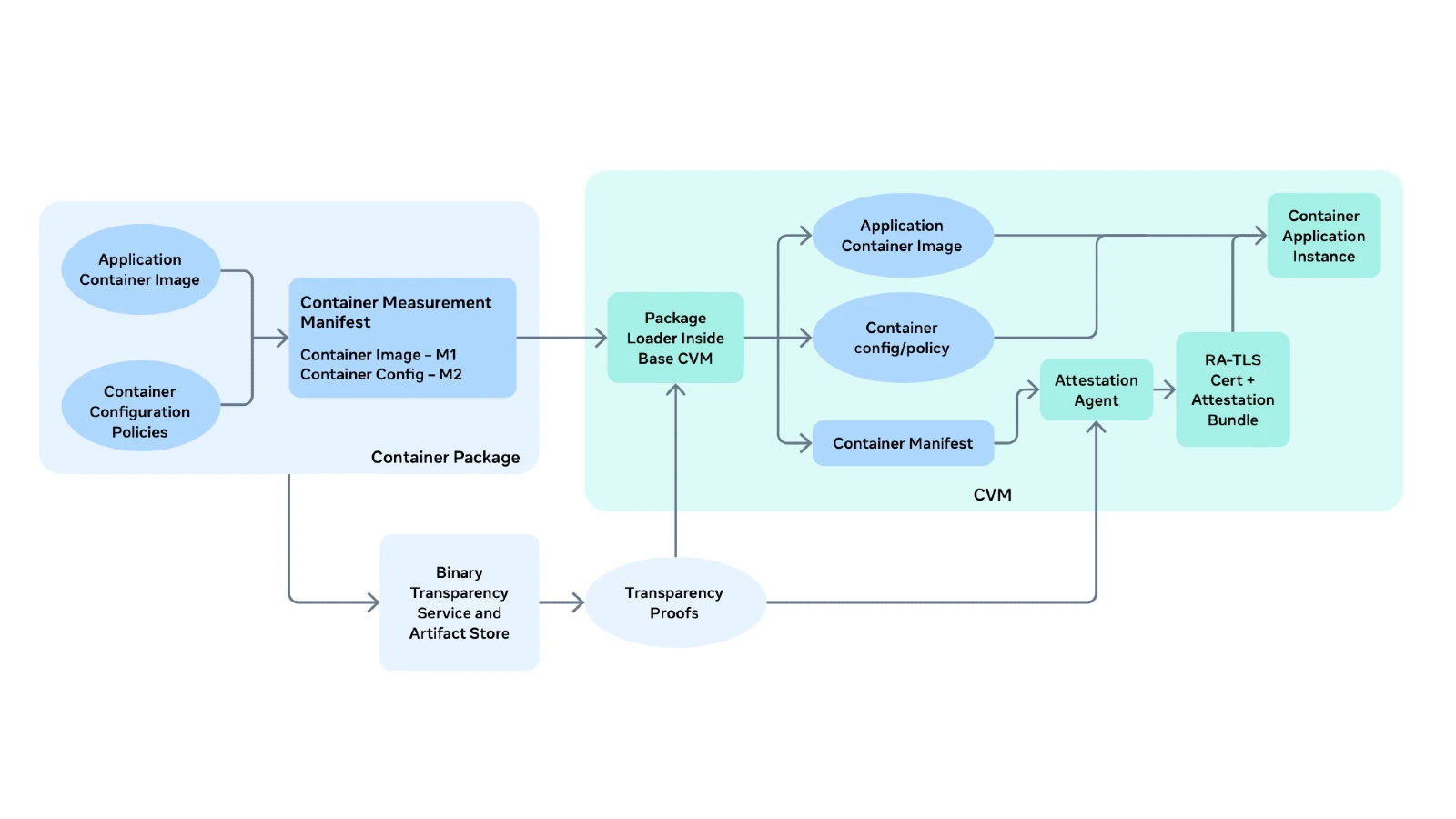

What Private Processing actually is

The privacy claim rests on a specific piece of infrastructure called Private Processing, a server-based system Meta built on Trusted Execution Environments (TEEs). A TEE is a hardware-isolated region of a processor that enforces three core properties: data confidentiality (unauthorized parties cannot view data in use), data integrity (unauthorized parties cannot alter data in use), and code integrity (unauthorized parties cannot alter the code running inside the environment). According to Meta's technical white paper, first published June 10, 2025, and updated March 16, 2026, the goal is to ensure that "sharing messages with Private Processing does not make them available to Meta, WhatsApp, or anyone else."

The system relies on two hardware components. For CPU processing, Meta uses AMD EPYC processors with AMD Secure Encrypted Virtualization - Secure Nested Paging (SEV-SNP), a technology that encrypts virtual machine memory and enforces page table protections that move the hypervisor outside the trust boundary of the guest VM. According to Meta, main memory is encrypted with AES in a mode that incorporates the physical address, which prevents unauthorized relocation of encrypted blocks. The AMD Secure Processor generates and manages distinct AES keys per VM and host, and communicates them over a secure channel to the on-die memory controllers without ever exposing them to the host or hypervisor in clear text.
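The effect of binding ciphertext to a physical address can be illustrated with a toy model. The sketch below is not AES and not AMD's actual mode: it derives a keystream from HMAC-SHA256 over the address purely to show the property. The function names are hypothetical.

```python
import hashlib
import hmac


def _keystream(key: bytes, phys_addr: int, length: int) -> bytes:
    """Derive a keystream that depends on both the key and the address."""
    out = b""
    counter = 0
    while len(out) < length:
        msg = phys_addr.to_bytes(8, "big") + counter.to_bytes(4, "big")
        out += hmac.new(key, msg, hashlib.sha256).digest()
        counter += 1
    return out[:length]


def xor_block(key: bytes, phys_addr: int, data: bytes) -> bytes:
    """Toy address-tweaked cipher: the same call encrypts and decrypts."""
    stream = _keystream(key, phys_addr, len(data))
    return bytes(a ^ b for a, b in zip(data, stream))
```

In this model, a block encrypted for one address decrypts to garbage when read back at another, which is the relocation protection the white paper describes in prose.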

For GPU processing - necessary because large language models require the kind of parallel computation that only specialized accelerators can provide at scale - Meta uses NVIDIA Hopper H100 Tensor Core GPUs with NVIDIA Confidential Computing. The GPU authenticates its boot flow, and all firmware and microcode are signed by NVIDIA and verified by an on-die hardware root of trust before execution. A secure tunnel based on the Security Protocol and Data Model (SPDM) specification, relying on AES in GCM mode, connects the guest VM and the GPU over PCIe. The white paper acknowledges one hardware limitation on the GPU side: NVIDIA's High Bandwidth Memory (HBM) is not encrypted, creating a theoretical surface for cold boot attacks. Meta notes this is mitigated by SoC packaging and the GPU's ability to lock memory access during card resets. A second acknowledged gap is that NVLink - the interconnect used when multiple GPUs collaborate for inference - is not encrypted on the Hopper platform. Meta states it is evaluating NVLink encryption as hardware like the Blackwell platform becomes available.

How a request travels through the system

The data path from a WhatsApp user to the TEE involves several distinct steps. First, Private Processing obtains anonymous credentials through Meta's open-source Anonymous Credential Service (ACS), which authenticates requests without identifying the user making them. The credentials take the form of ACS tokens usable a finite number of times. Second, the system fetches HPKE (hybrid public key encryption) public keys from a third-party CDN - currently Fastly - to support Oblivious HTTP (OHTTP), a protocol that routes requests through a relay that strips IP information before passing them to Meta's gateway. According to the white paper, this design ensures that an attacker cannot target a particular user without attempting to compromise the entire Private Processing system.
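The split of knowledge between relay and gateway, and the limited-use nature of the credentials, can be sketched as a toy data model. Every class and function name here is hypothetical, and the encrypted payload is treated as opaque bytes rather than real HPKE output.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ClientRequest:
    client_ip: str        # seen by the relay only
    encapsulated: bytes   # encrypted payload, opaque to the relay


@dataclass(frozen=True)
class ForwardedRequest:
    encapsulated: bytes   # the only field that reaches the gateway


def relay_forward(req: ClientRequest) -> ForwardedRequest:
    """Hypothetical relay step: drop the client IP, pass the ciphertext on."""
    return ForwardedRequest(encapsulated=req.encapsulated)


class TokenWallet:
    """Hypothetical stand-in for a batch of limited-use anonymous tokens."""

    def __init__(self, uses: int) -> None:
        self.remaining = uses

    def spend(self) -> bool:
        """Consume one use; return False once the batch is exhausted."""
        if self.remaining <= 0:
            return False
        self.remaining -= 1
        return True
```

The relay can see who is asking but not what is asked, while the gateway can see the encrypted request but not who sent it. Neither party alone can link a user to a prompt.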

Once those preliminaries are done, the client establishes a Remote Attestation + Transport Layer Security (RA-TLS) session with a specific TEE chosen by a load balancer. The load balancer itself has no knowledge of the user making the request. Attestation verification cross-checks measurements against a third-party log - currently Cloudflare - to confirm the client is connecting only to code that satisfies verifiable transparency guarantees. The request itself is encrypted with an ephemeral key that neither Meta nor WhatsApp can access. Only the user's device and the selected TEE can decrypt it.
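On the client side, the core of that check reduces to a membership test: proceed only if the measurement the TEE attests to also appears in the public log. The sketch below assumes SHA-256 digests and uses hypothetical function names. Real attestation also verifies hardware signatures and certificate chains, which are omitted here.

```python
import hashlib


def measurement_of(image: bytes) -> str:
    """Digest standing in for the measurement of a deployed image."""
    return hashlib.sha256(image).hexdigest()


def should_connect(attested_measurement: str,
                   logged_measurements: frozenset[str]) -> bool:
    """Connect only to code whose measurement was published in the log."""
    return attested_measurement in logged_measurements
```

A server running a build that was never logged would produce a measurement outside the set, and the client would refuse the session.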

After processing, the response travels back encrypted with the same ephemeral key, either via the OHTTP channel directly or via WhatsApp's chat servers. According to the white paper, Private Processing does not retain access to messages after a session completes. The Orchestrator service - which coordinates multiple AI models to produce a response - is stateless by design, with no database access and no persistent storage. The LLM inference component similarly turns off persistent KV cache so that computation state has the same lifecycle as the request itself.

Artifact transparency and Cloudflare's role

Meta has partnered with Cloudflare to maintain a third-party, append-only log of security-critical artifacts, including binaries, revocation lists, and authorized host identities. This log records digests of code before it runs in production. The white paper describes five artifact types: binaries (auditable), revocation lists (auditable), models (private), system prompts (private), and authorized host identities (auditable). Artifact namespaces published in the white paper include entries such as prod.pc.orchestrator.cvm for the base confidential VM image of the orchestrator, prod.pc.predictor.llm for the LLM model, and prod.pc.orchestrator.trending_topics for the list of trending topics fed to the system.
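What "append-only" buys an auditor can be shown with a minimal hash chain. This is an illustrative sketch, not the format of the Cloudflare-hosted log: production transparency logs typically use Merkle trees, and the class below is hypothetical. Only the namespace strings come from the white paper.

```python
import hashlib
from dataclasses import dataclass, field

GENESIS = hashlib.sha256(b"").hexdigest()


@dataclass
class AppendOnlyLog:
    entries: list = field(default_factory=list)          # (namespace, digest)
    heads: list = field(default_factory=lambda: [GENESIS])

    def append(self, namespace: str, artifact: bytes) -> str:
        """Record an artifact digest and chain it to the previous head."""
        digest = hashlib.sha256(artifact).hexdigest()
        record = f"{self.heads[-1]}|{namespace}|{digest}".encode()
        self.entries.append((namespace, digest))
        self.heads.append(hashlib.sha256(record).hexdigest())
        return self.heads[-1]

    def verify(self) -> bool:
        """Recompute the chain; any rewritten entry breaks a later head."""
        if len(self.heads) != len(self.entries) + 1 or self.heads[0] != GENESIS:
            return False
        head = GENESIS
        for i, (namespace, digest) in enumerate(self.entries):
            record = f"{head}|{namespace}|{digest}".encode()
            head = hashlib.sha256(record).hexdigest()
            if self.heads[i + 1] != head:
                return False
        return True
```

Because each head commits to everything before it, silently swapping an earlier binary digest would change every subsequent head, and anyone holding an old head would notice.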

The revocation list is published every 3 hours, and each signature expires after 24 hours. The CVM base image is updated on a weekly cadence to incorporate kernel updates, while machine learning models update on an ad-hoc cadence with expiration measured in months. When patches arrive under coordinated disclosure, Meta states it typically applies them within a 90-day timeframe - a window standard across the industry for embargoed security fixes.
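The two revocation-list intervals translate into a simple freshness rule. The sketch below encodes the 3-hour and 24-hour figures from the white paper. The function names, and the idea of counting elapsed publication intervals, are assumptions for illustration.

```python
from datetime import datetime, timedelta, timezone

PUBLISH_INTERVAL = timedelta(hours=3)     # revocation list cadence
SIGNATURE_LIFETIME = timedelta(hours=24)  # validity of each signature


def signature_is_fresh(signed_at: datetime, now: datetime) -> bool:
    """A signature is usable from signing time until 24 hours later."""
    return timedelta(0) <= now - signed_at < SIGNATURE_LIFETIME


def intervals_elapsed(signed_at: datetime, now: datetime) -> int:
    """How many 3-hour publication windows have passed since signing."""
    return max(0, (now - signed_at) // PUBLISH_INTERVAL)
```

With these numbers, a client holding a list could fall up to seven publications behind before its signature expires and it is forced to fetch a new one.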

What the boot process verifies

The attestation chain begins with the AMD Secure Processor, which calculates a launch digest covering the initial RAM disk (initrd), kernel, firmware, and kernel command line. The rootfs, which includes a package loader and attestation agent, is then verified against a hash included in the launch digest. If a GPU is present, the Confidential Virtual Machine attests the GPU and compares measured values against golden reference measurements from NVIDIA's Reference Integrity Manifest Service API. If any check fails, the VM shuts down. Container images are then loaded, measured, and verified against transparency proofs before the attestation agent generates an RA-TLS certificate binding the session's public key to the measured state of the entire stack.

The guest kernel running in each CVM is a Linux 6.12 or later kernel from the stable tree, configured in accordance with recommendations from the Linux Kernel Self-Protection Project. The guest OS is based on CentOS 10. All non-essential services are removed, including network interfaces. Network traffic enters and leaves through a VSOCK interface. The application itself runs in an LXC container, using the vLLM inference library with the safetensors weight format to avoid the deserialization attack surface of Python pickle files. The front-end orchestration layer relies on LlamaStack.
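The pickle concern is easy to reproduce with the Python standard library alone. In the deliberately harmless sketch below, the `Payload` class is hypothetical and the callable is a trivial arithmetic `eval`. An attacker controlling a model file could substitute any importable function.

```python
import pickle


class Payload:
    def __reduce__(self):
        # Tells pickle to rebuild this object by calling eval("6 * 7").
        # The callable and its arguments are chosen by whoever wrote the file.
        return (eval, ("6 * 7",))


blob = pickle.dumps(Payload())

# Loading runs the embedded call; the result is not even a Payload.
result = pickle.loads(blob)
print(type(result).__name__, result)
```

A weights format that stores only a metadata header and raw tensor bytes has no equivalent hook, so loading it cannot trigger a function call chosen by the file's author.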

Web search and its limits

One technical detail the white paper covers explicitly is web search. LLMs carry training cutoffs and cannot answer questions about recent events without an external retrieval mechanism. According to Meta, when the model in the TEE determines a user's prompt requires real-time information, it generates a search query and sends it to Meta infrastructure outside the TEE, which routes it to selected web search engine providers. Each query is limited to 100 characters to minimize the data leaving the Private Processing system, and at most 5 search queries are permitted per user prompt. Search queries are not associated with the user making a prompt, and the user can view the exact search terms used in the UI and in the in-app transparency report. The web search capability is optional and can be disabled at any time through an in-app setting.
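Those two limits amount to an egress filter on what may leave the TEE. The sketch below uses the 100-character and 5-query figures from the white paper. Whether over-length queries are dropped or truncated is not stated, so dropping them is an assumption here, as is the function name.

```python
MAX_QUERY_CHARS = 100
MAX_QUERIES_PER_PROMPT = 5


def outbound_queries(candidates: list[str]) -> list[str]:
    """Hypothetical egress filter for search queries leaving the TEE.

    Assumption: over-length queries are dropped rather than truncated.
    """
    allowed = [q for q in candidates if len(q) <= MAX_QUERY_CHARS]
    return allowed[:MAX_QUERIES_PER_PROMPT]
```

The caps bound the worst case: no more than 500 characters of model-generated text can leave the Private Processing boundary for any single prompt.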

In-app transparency reports

WhatsApp also allows users to record and export their own interactions with the Private Processing system through in-app transparency reports, which are off by default. Once enabled, reports are generated on-device and can be saved locally. They include the type of request processed (for example, summarization or writing suggestions), the request timestamp, the messages sent to the Private Processing system, attestation information for the TEEs that processed the data, a Cloudflare signature on verified data, and an epoch value - an ever-increasing counter incremented by 1 for each Cloudflare signature. Phone number country code is included in the report, but not the phone number itself.
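The report contents listed above map naturally onto a small record type. The field names below are hypothetical, not WhatsApp's export format. The consistency check assumes only that epochs never move backwards between successive entries, since the counter is described as ever-increasing.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ReportEntry:
    request_type: str        # e.g. "summarization"
    timestamp: str           # request time
    messages: tuple          # messages sent to Private Processing
    attestation: str         # attestation info for the serving TEE
    log_signature: str       # Cloudflare signature on verified data
    epoch: int               # counter bumped by 1 per Cloudflare signature
    country_code: str        # phone number country code only


def epochs_never_regress(entries: list) -> bool:
    """True if no entry carries a lower epoch than the one before it."""
    return all(b.epoch >= a.epoch for a, b in zip(entries, entries[1:]))
```

A report in which a later request showed an older epoch than an earlier one would suggest the device was served stale signed data, which is the kind of anomaly an exported report lets a researcher look for.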

What comes next

Meta indicated in its May 13 announcement that Incognito Chat with Meta AI is rolling out over the coming months to WhatsApp and the Meta AI app. A second feature, called Side Chat with Meta AI, is described as arriving in subsequent months. Side Chat, also protected by Private Processing, would allow users to get private assistance in the context of an existing WhatsApp conversation without interrupting it.

Why this matters for the marketing and ad tech community

The Private Processing architecture and Incognito Chat launch sit against a complicated backdrop for Meta and data privacy. WhatsApp had already introduced Status advertising using cross-platform data from Instagram and Facebook in June 2025. Meta confirmed in October 2025 that AI chat interactions would be used to personalize ads starting December 2025. Meanwhile, the European Commission has been escalating an antitrust case since April 2026 over Meta's approach to third-party AI assistants on WhatsApp, arguing that the pricing framework Meta introduced in March 2026 produces the same effect as the outright ban on competing AI services that had preceded it.

For marketers and ad tech professionals, the tension is real. Private Processing is designed specifically to prevent the kind of data access that fuels behavioral advertising. If WhatsApp AI interactions processed through the TEE genuinely cannot be accessed by Meta - and the white paper describes the engineering effort to make that case technically credible - then Incognito Chat conversations cannot feed into Meta's ad targeting systems. A lawsuit filed March 31, 2026, and subsequently dismissed May 1, 2026, had alleged that a competing AI platform forwarded private conversations to Meta via embedded advertising trackers. The fact that such claims are being litigated signals how closely the advertising ecosystem is watching the intersection of AI chat and data flows.

The timing of Private Processing's deployment also intersects with Instagram's decision to end support for end-to-end encrypted chats after May 8, 2026, a divergence in Meta's privacy posture across different products that regulators and privacy researchers have noted. Meta's Private Processing white paper explicitly welcomes feedback from the security and research community, listing a bug bounty contact at bugbounty@meta.com. Whether independent researchers can verify the end-to-end claims - particularly around the unencrypted HBM and NVLink gaps the white paper itself flags - will determine how much weight the technical architecture ultimately carries with skeptics.

Timeline

- June 10, 2025 - Meta publishes V1 of the Private Processing for WhatsApp technical white paper

- June 16, 2025 - WhatsApp introduces Status advertising using cross-platform data from Instagram and Facebook accounts

- October 25, 2025 - Meta confirms AI chat interactions will be used for ad personalization from December 2025

- November 15, 2025 - WhatsApp enables third-party messaging in Europe under DMA interoperability rules

- January 15, 2026 - Meta bans third-party AI chatbots from WhatsApp Business API, keeping Meta AI as sole assistant

- February 9, 2026 - European Commission sends Statement of Objections to Meta over WhatsApp AI lockout

- March 3, 2026 - San Francisco court enters $50 million privacy judgment against Meta over Facebook user data

- March 10, 2026 - Meta's 2026 DMA compliance report confirms WhatsApp advertising rollout in the EU and updated consumer profiling rules

- March 15, 2026 - Instagram confirms it will end support for end-to-end encrypted direct messages after May 8, 2026

- March 16, 2026 - Meta publishes V2 update to the Private Processing white paper

- April 15, 2026 - European Commission issues Supplementary Statement of Objections escalating WhatsApp AI antitrust case AT.41034

- May 1, 2026 - Class action alleging Perplexity forwarded AI chat data to Meta is voluntarily dismissed

- May 13, 2026 - Meta announces Incognito Chat with Meta AI on WhatsApp and the Meta AI app, built on Private Processing

Summary

Who: Meta Platforms, through its WhatsApp and Meta AI app products, announced the Incognito Chat with Meta AI feature. The underlying infrastructure - Private Processing - was designed by Meta's engineering teams. Third parties involved include AMD (hardware), NVIDIA (GPU hardware), Cloudflare (artifact transparency log), and Fastly (OHTTP relay and CDN for key distribution).

What: Incognito Chat with Meta AI is a private AI conversation mode built on Private Processing, a server-side system using Trusted Execution Environments. When active, messages are processed inside a hardware-isolated environment that Meta states it cannot access. Conversations are not retained after a session ends and disappear by default. A technical white paper published June 10, 2025, and updated March 16, 2026, describes the full architecture, including AMD SEV-SNP CPUs, NVIDIA H100 GPUs, Oblivious HTTP routing through Fastly, anonymous credentials, remote attestation checked against a Cloudflare-hosted transparency log, and stateless request processing.

When: The Incognito Chat feature was announced May 13, 2026. The underlying Private Processing white paper was first published June 10, 2025, with a second version released March 16, 2026. Rollout of the feature to WhatsApp and the Meta AI app is described as occurring over the coming months.

Where: The feature is rolling out globally through WhatsApp and the Meta AI app, phased over the coming months. The Private Processing infrastructure runs on Meta's server hardware using AMD and NVIDIA confidential computing technologies, with third-party relays and transparency log services provided by Fastly and Cloudflare respectively.

Why: Meta framed the launch as a response to user demand for private AI interactions on questions that may be sensitive - such as health, financial, or personal matters. The technical motivation is structural: LLMs require server-side GPU processing that conventional end-to-end encryption cannot protect, because the server must decrypt data to run inference. Private Processing uses TEEs to perform that inference in an environment where neither the server operator nor any intermediary can read the data. The launch also arrives as Meta faces sustained regulatory scrutiny over data practices in Europe and the United States, giving the architecture a credibility dimension beyond its technical merits.
