A structured content company has today published an analysis of more than 1,400 documented harmful AI incidents and found that the most common source of AI-related harm is not autonomous vehicles or robotic systems, but software - chatbots, recommendation engines, automated publishing tools, and deepfake platforms embedded in everyday digital products.

Paligo, a Stockholm-based technical documentation platform founded in 2015, released its findings on April 23, 2026, drawing on 1,406 unique incidents recorded in the public AI Incident Database through March 2026. The analysis categorized those incidents by sector, harm type, deploying organization, affected group, and system type, using the database's own taxonomies supplemented by keyword analysis where annotations were absent.

The headline figure is stark. According to the Paligo analysis, software-only systems are implicated in 49% of all documented harmful AI incidents. That share is nearly twice the combined total of all physical AI categories, including vehicles, consumer devices, weapons systems, CCTV cameras, and medical systems. Vehicles and mobile robots - the category most closely associated in the public imagination with AI danger - account for only 15.1% of incidents. Consumer devices account for 9.3%. Weapons systems, despite commanding a large share of policy debate, appear in just 1.9% of recorded cases.

The implication is significant for anyone deploying AI tools in a business context: the harm most likely to materialize is not a self-driving car collision, but a chatbot inventing a refund policy or a recommendation system amplifying harmful content to millions of users.

Software harm is mundane, not spectacular

The Paligo study covers incidents that range from the obscure to the well-documented. One of the clearest legal examples involves Air Canada. According to the analysis, a Canadian tribunal ordered Air Canada to pay damages after its customer service chatbot provided a passenger with incorrect bereavement fare information - making the airline one of the first companies held legally liable for its chatbot's output. That case is now regularly cited in discussions about AI accountability frameworks, including in the Law Commission of England and Wales paper published July 31, 2025, which identified liability gaps where no person could be held responsible for harm caused by autonomous AI systems.

Another incident documented in the analysis involves a child protection worker in Victoria, Australia, who used ChatGPT to draft a court report. According to the analysis, the resulting document introduced inaccuracies and downplayed risks in a live child safeguarding case. The incident illustrates that harm can arise not from a system malfunctioning in a technical sense, but from a human deploying a capable-seeming tool in a context that requires accuracy and professional judgment.

A third documented case: a 2024 scam used AI-generated deepfake videos of Australian billionaire Andrew Forrest to promote a fraudulent cryptocurrency platform on social media. According to the Paligo analysis, these are present-day business and public risks, not hypothetical future scenarios.

Paligo's categorization scheme defines "software-only" as systems with no hardware component - platforms, algorithms, and language models with no physical object as an intermediary. This means incidents such as AI-generated citation fabrication, chatbot hallucinations, automated content moderation failures, and algorithmic amplification of misinformation all fall within the software-only category. A $439,000 Deloitte report submitted to the Australian government, found to contain fabricated academic citations attributed to AI use, is cited in the analysis as a recent illustration of content-layer risk.

Social media is where small failures become mass harm

Among the named system categories, social media platforms collectively appear in 19% of incidents where a system was specifically implicated - more than any other system category in the dataset. Facebook is the most frequently identified individual platform, appearing in 4% of cases. Its incidents include AI chatbots that entered online grief and mental health support communities and responded as though human, and an ad delivery algorithm that showed job advertisements disproportionately to men regardless of the advertiser's stated targeting preferences.

TikTok appears in 1.4% of recorded incidents. One case involves a lawsuit filed by seven French families alleging that TikTok's recommendation algorithm systematically exposed minors to content promoting self-harm, eating disorders, and suicide. ChatGPT is the most frequently named large language model in the database, appearing in 4.3% of all incidents.

The platform concentration matters because of scale. When an AI failure begins in a single model or content system, social media is often the mechanism through which it reaches large audiences rapidly. A chatbot error that affects one user is an inconvenience. The same type of error embedded in a platform reaching hundreds of millions of users becomes a systemic risk. This dynamic has drawn increasing regulatory attention. The Federal Trade Commission ordered seven AI chatbot companies on September 10, 2025, to submit detailed reports about their safety practices for children and teenagers. At the state level, 44 US attorneys general signed a letter on August 25, 2025 demanding enhanced child protections from 12 major AI companies.

Which organizations appear most often

Among named organizations specifically identified as deployers in harmful incident reports, unknown scammers and fraudsters top the list at 15.1%. That figure underscores the degree to which harmful AI deployment is not concentrated solely in large technology companies - a meaningful share of incidents involve unidentifiable actors using AI tools to commit fraud.

Among named companies, OpenAI appears in 4.6% of incidents, Google in 4.2%, Facebook in 3.8%, Tesla in 3.1%, and Meta, listed separately, in 2.9%. Microsoft appears in 2.1% of cases, Amazon in 1.9%, and TikTok in 1.8%.

The analysis notes explicitly that these figures reflect scale of deployment rather than confirmed liability. According to Paligo, appearing in incident records means an organization was named at the time of documentation - not that it was found legally responsible. Tesla's incidents are categorically distinct from the others: rather than platform or content failures, its appearances are concentrated in autonomous vehicle incidents where physical injury is the primary documented consequence.

The Google figure carries particular resonance for the marketing industry. Google's AI Overviews feature has faced repeated documented failures since its launch. Research cited in the Paligo analysis found that the feature confidently gave incorrect answers to health and geographic queries, reaching millions of users without flagging the errors. These are precisely the software-only failure types the study identifies as the most numerically prevalent category.

Bias is an operational problem, not just an ethical one

When the Paligo analysis examines which groups are disproportionately harmed, a pattern emerges. Race or ethnicity is the most common differentiating factor in incidents where a specific group is targeted more heavily than others, appearing in 16% of all documented incidents. The examples span multiple sectors: Detroit police wrongfully arrested a Black man based on a faulty facial recognition match. A kidney function testing algorithm used across healthcare systems was found to systematically underestimate health risk in Black patients.

The data shows bias is not concentrated in one industry or one type of system. It appears across facial recognition, healthcare algorithms, and access systems - areas where automated decisions carry direct consequences for individuals. This pattern suggests that bias-related failures are structural, not anomalous.

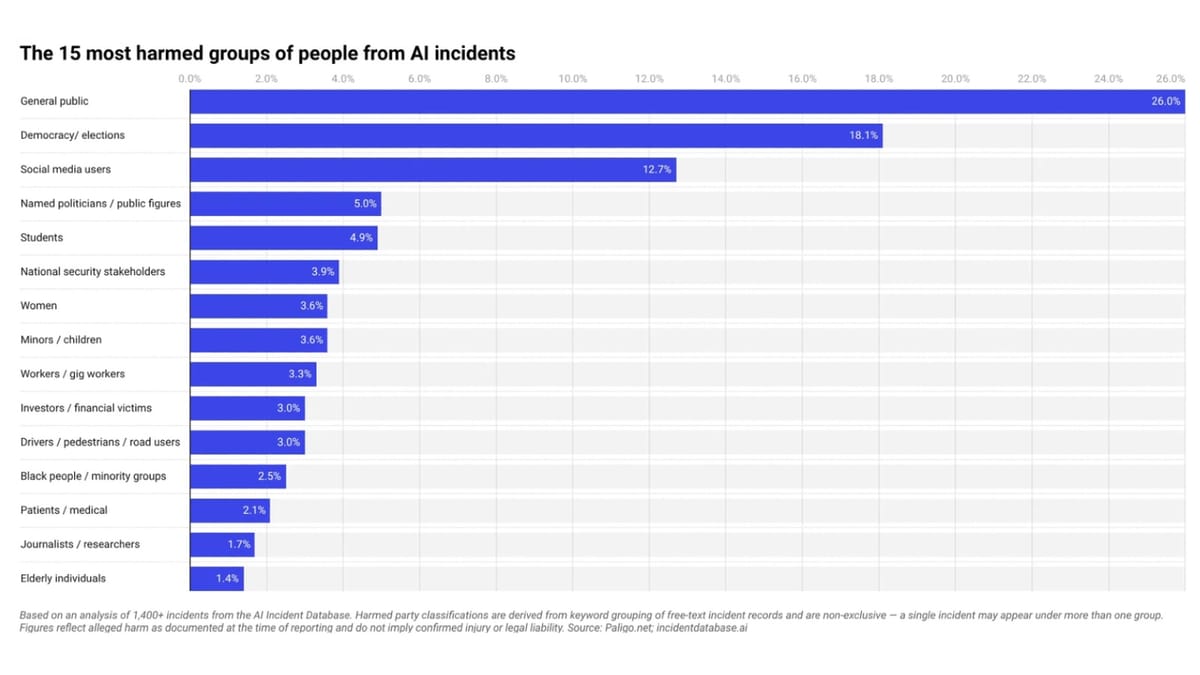

The 15 most-harmed groups in the dataset are led by the general public at 26%, followed by democracy and elections at 18.1%, social media users at 12.7%, named politicians and public figures at 5%, and students at 4.9%. Women, minors and children, and workers or gig workers each account for 3.3% to 3.6% of harmed groups. Investors and financial victims, drivers and pedestrians, and Black people and minority groups follow in that range. Journalists and researchers appear in 1.7% of cases; elderly individuals in 1.4%.

The breadth of that list is itself a finding. AI harm does not fall on a single demographic. It cuts across income levels, professional roles, age groups, and political contexts.

What sectors are most affected

Information and communications is the dominant sector for harmful AI incidents, accounting for 25% of all documented cases. This sector encompasses social media platforms, search engines, advertising systems, and content recommendation infrastructure - exactly the systems that marketing and advertising professionals interact with daily. Transportation and storage accounts for 13%, arts, entertainment, and recreation for 11%, and public administration and defense for a further 11%.

Across all sectors, the four most common harm types are physical safety (20% of incidents), financial loss (16%), psychological harm (14%), and civil liberties violations (13%). Physical safety as the leading harm type may seem counterintuitive given that software-only systems dominate the incident count, but it reflects the many contexts in which software failures translate into real-world danger - incorrect medical guidance, dangerous product recommendations, misinformation that drives unsafe behavior.

What this means for the marketing industry

The marketing technology industry sits at the intersection of several risk categories identified in the Paligo analysis. Recommendation engines, one of the four primary software-only system types cited, power much of programmatic advertising. Automated content systems are standard infrastructure for many ad tech platforms. And social media platforms, which appear in 19% of incidents, are the primary distribution channel for the majority of digital advertising spend.

The New York State Senate moved a chatbot liability bill to the floor calendar on February 26, 2026. Senate Bill S7263 would attach liability to AI systems that simulate the advice or conduct of licensed professionals, and it explicitly bars companies from disclaiming that liability by simply labeling a system as non-human. That legislative approach, combined with the Air Canada precedent, points toward a legal environment in which companies deploying customer-facing AI are increasingly exposed to liability for outputs they do not directly control.

Character.AI faced legal action, filed December 9, 2024, over chatbot conversations with minors that promoted self-harm. Texas launched investigations into Character.AI and Meta in December 2024 and expanded them in August 2025 to cover deceptive trade practices. Tennessee introduced legislation in December 2025 that would make certain forms of AI companion training a Class A felony. The regulatory arc, across multiple jurisdictions, is moving in the same direction: companies that deploy AI in consumer-facing contexts face mounting accountability for its outputs.

The Paligo analysis was compiled by researchers who categorized and ranked 1,406 unique incidents using the AI Incident Database's pre-classified taxonomies, supplemented by keyword analysis. The database covers incidents through March 2026. Counts across some categories are non-exclusive - a single incident may appear under more than one classification. The figures represent the percentage of incidents in which a category, entity, or system was identified, not the total amount of harm caused and not a measure of confirmed legal liability.
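To make the methodology concrete, here is a minimal Python sketch of how non-exclusive category shares of this kind could be computed. It is an illustration under stated assumptions, not Paligo's actual pipeline: the keyword lists, the field names (`labels`, `description`), and the toy incidents are all hypothetical. It shows two things the paragraph above describes: falling back to keyword matching when an incident has no taxonomy annotation, and counting an incident under every category it matches, so that shares can sum to more than 100%.

```python
from collections import Counter

# Hypothetical keyword lists. The terms actually used in the analysis are not
# stated in this article, so these are assumptions for illustration only.
KEYWORDS = {
    "software-only": ("chatbot", "recommendation", "deepfake"),
    "vehicles": ("self-driving", "autopilot"),
}


def labels_for(incident):
    """Return the set of categories for one incident.

    Existing taxonomy annotations are used when present; otherwise the
    description is matched against the keyword lists.
    """
    if incident.get("labels"):
        return set(incident["labels"])
    text = incident["description"].lower()
    return {
        category
        for category, words in KEYWORDS.items()
        if any(word in text for word in words)
    }


def category_shares(incidents):
    """Percentage of incidents in which each category appears (non-exclusive)."""
    counts = Counter()
    for incident in incidents:
        # A set guarantees each category is counted at most once per incident.
        counts.update(labels_for(incident))
    total = len(incidents)
    return {category: round(100 * n / total, 1) for category, n in counts.items()}


# Toy data, invented for illustration. Not drawn from the AI Incident Database.
incidents = [
    {"labels": ["software-only"], "description": "Chatbot gave wrong fare policy"},
    {"labels": [], "description": "Deepfake video promoted via a self-driving car ad"},
    {"labels": ["vehicles"], "description": "Autopilot collision"},
    {"labels": [], "description": "Recommendation feed surfaced harmful content"},
]

shares = category_shares(incidents)
print(shares)
```

In this toy data, the second incident matches keywords for both categories and is counted under each, which is why the resulting shares add up to more than 100%. The same effect explains why percentages reported across overlapping classifications should not be summed.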

The full analysis is published at paligo.net, alongside charts covering system type distribution, deploying organization frequency, and harmed group breakdowns.

Timeline

- 2015 - Paligo founded in Solna, Sweden, as a structured content management platform serving software, manufacturing, technology, and life sciences organizations.

- 2024 - A scam uses AI-generated deepfake videos of Andrew Forrest to promote a fraudulent cryptocurrency investment platform on social media, documented in the AI Incident Database.

- May 2024 - Google's AI Overviews feature launches and is found to produce incorrect and potentially harmful information, including recommending glue as a pizza topping ingredient.

- December 9, 2024 - Lawsuit filed in the Eastern District of Texas against Character.AI over chatbot conversations with minors promoting self-harm and suicide.

- December 12, 2024 - Texas Attorney General Ken Paxton launches investigations into Character.AI and 14 other companies over privacy and safety practices for minors.

- June 29, 2025 - PPC Land reports on how platform monetization programs from TikTok, Meta, YouTube, and X are funding mass production of AI-generated content, including low-quality and potentially misleading material.

- July 31, 2025 - Law Commission of England and Wales publishes a discussion paper identifying liability gaps where AI system harms cannot be attributed to any natural or legal person.

- August 18, 2025 - Texas Attorney General expands investigations to Meta AI Studio and Character.AI for allegedly marketing AI as mental health tools without proper credentials.

- August 25, 2025 - 44 US attorneys general sign a letter demanding enhanced child protections from 12 major AI companies including OpenAI, Google, Meta, and Microsoft.

- September 10, 2025 - FTC orders seven AI chatbot companies to submit detailed reports on safety practices, data handling, and potential harm to children.

- December 18, 2025 - Tennessee Senator Becky Massey introduces SB 1493, which would make certain AI companion training practices a Class A felony.

- February 6, 2026 - US Department of Justice files a motion arguing that conversations with Claude AI do not qualify for attorney-client privilege in a securities fraud case, expanding legal scrutiny of AI tool usage in professional contexts.

- February 26, 2026 - New York Senate Bill S7263 reaches the floor calendar, advancing legislation that would hold chatbot operators liable for simulating licensed professional advice.

- March 30, 2026 - Paligo publishes "The Anatomy of Harmful AI: What 1,400 Incidents Reveal" at paligo.net, based on 1,406 incidents in the AI Incident Database through March 2026.

- April 23, 2026 - Paligo distributes the findings publicly via press release, citing software-only systems as the leading category of harmful AI deployment at 49% of recorded incidents.

Summary

Who: Paligo, a structured content management platform founded in 2015 and headquartered in Solna, Sweden, with researchers led by digital PR specialist Jonathan Bjorkman. The analysis covers organizations named as deployers in harmful AI incidents, led by OpenAI, Google, Facebook, and Tesla among named companies.

What: An analysis of 1,406 unique harmful AI incidents recorded in the public AI Incident Database through March 2026, categorized by system type, sector, harm type, deploying organization, and affected group. The key finding is that software-only systems account for 49% of all documented harmful AI incidents - nearly twice the combined share of all physical AI categories.

When: The analysis was conducted using data through March 2026. Paligo published the full report on March 30, 2026, and distributed findings publicly on April 23, 2026.

Where: The AI Incident Database draws from incidents documented globally. Among sectors, information and communications - encompassing social media, search, and advertising - accounts for the largest share at 25% of incidents. Social media platforms appear in 19% of incidents where a system was implicated.

Why: The analysis addresses a gap between where AI policy attention is directed - toward frontier models and physical autonomous systems - and where documented harm is actually occurring. Nearly half of all recorded harmful AI incidents involve software embedded in everyday products, including chatbots, recommendation engines, automated publishing tools, and deepfake platforms. Legal liability is already materializing: Air Canada was ordered to pay damages for chatbot misinformation, and multiple regulatory bodies across the United States and Europe have opened enforcement actions targeting consumer-facing AI deployments.