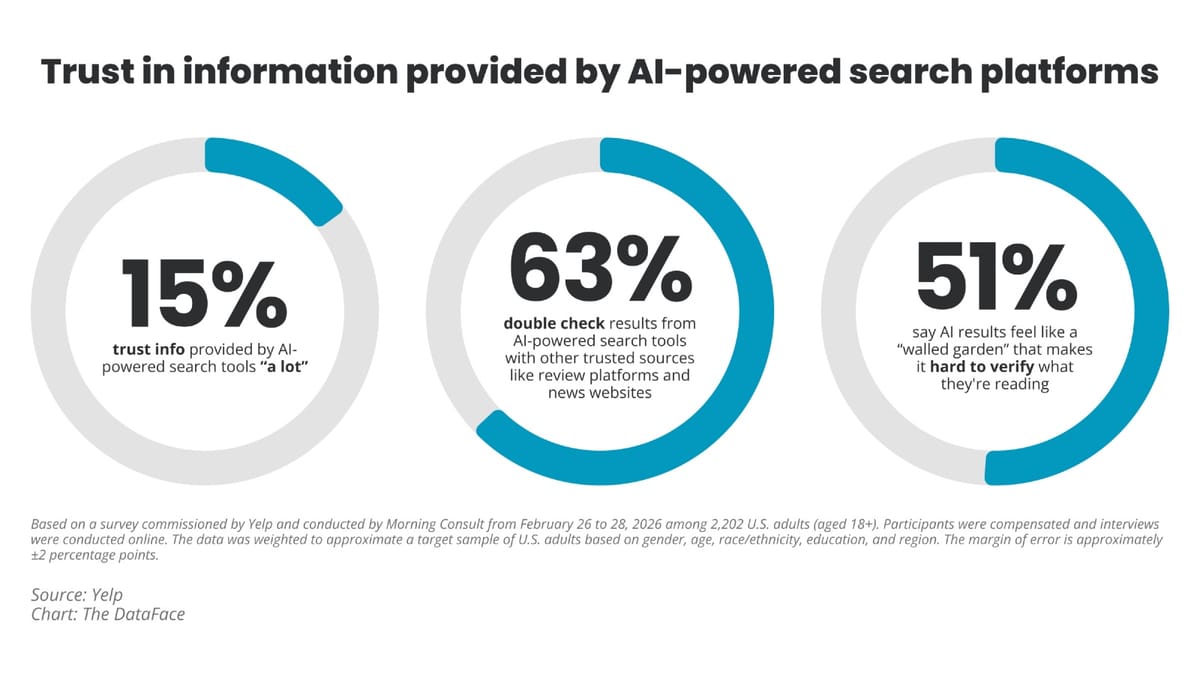

Yelp this week published research conducted in partnership with Morning Consult showing that widespread adoption of AI-powered search has not produced consumer trust in those platforms. The study, based on a survey of 2,202 U.S. adults, finds that while 65% of respondents have used an AI-powered search tool in the past six months, only 15% say they trust the information those platforms provide "a lot." The findings were shared with press under embargo and released publicly on April 14, 2026.

The research arrives as AI search tools have rapidly expanded their reach across news, local discovery, and commerce. According to the report, the gap between usage and confidence is not marginal - it is structural. The majority of users are actively working around AI outputs rather than relying on them.

The verification problem

The numbers tell a precise story. According to the study, 63% of respondents say they double-check AI search results against other trusted sources like news websites and review platforms. More than half - 51% - say AI results feel like a "walled garden" that makes it hard to verify what they are reading.

The term "walled garden" carries specific meaning in digital advertising, where it typically refers to closed platform ecosystems that restrict data portability and third-party visibility. Its application here to consumer AI search reflects a parallel frustration: platforms that present synthesised answers without surfacing the underlying evidence. The concept is a recurring point of tension across the marketing technology industry. PPC Land has previously documented how walled garden dynamics create opacity for advertisers, and the Yelp data suggests ordinary searchers now experience a similar opacity.

The Morning Consult survey was conducted from February 26 to 28, 2026, among U.S. adults aged 18 and over. Participants were compensated and interviews were conducted online. The data was weighted to approximate a target sample of U.S. adults based on gender, age, race and ethnicity, education, and region. The margin of error is approximately plus or minus 2 percentage points.
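As a rough check on the stated margin of error, the sketch below applies the textbook normal-approximation formula for a proportion at 95% confidence. It assumes a simple random sample and the worst-case proportion of 0.5; these are illustrative assumptions rather than Morning Consult's documented method, and the weighting described above would typically widen the interval somewhat. The function name `margin_of_error` is hypothetical.

```python
import math


def margin_of_error(n: int, p: float = 0.5, z: float = 1.96) -> float:
    """Half-width of a normal-approximation confidence interval for a proportion.

    Assumes simple random sampling; p=0.5 gives the widest (worst-case) interval,
    and z=1.96 corresponds to a 95% confidence level.
    """
    if n <= 0:
        raise ValueError("n must be positive")
    return z * math.sqrt(p * (1 - p) / n)


# Sample size reported for the Morning Consult survey.
survey_moe = margin_of_error(2202)
print(f"{survey_moe * 100:.1f} percentage points")
```

Under these assumptions the result comes out at roughly 2 percentage points, in line with the figure reported for the survey.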

What consumers say they need

According to the Yelp research, consumers are explicit about what would close the trust gap. The findings show that 72% of respondents say AI platforms should always show where their information comes from. 69% want the option to leave AI platforms and visit trusted sites for more information. 66% want more proof of trusted sources - specifically links to news sites or review platforms - when presented with information on an AI search platform. A further 52% say that seeing visual evidence, such as before-and-after photos of a service or dish photos, would increase their trust.

These are not abstract preferences. They describe specific design decisions: citation links, outbound navigation, photo integration, and source attribution. Taken together, they amount to a consumer demand for AI search to function more like a bridge to the broader web and less like a terminal destination.

According to Craig Saldanha, Yelp's Chief Product Officer, who authored the blog post accompanying the research: "To fix this trust problem, AI search platforms need to be designed as a bridge to trusted sources, rather than a dead end."

The framing is significant. It positions the trust deficit not as a temporary problem to be solved by improving AI accuracy alone, but as a design challenge requiring structural changes to how platforms present and link information.

Local search carries the highest stakes

The research draws particular attention to local business search, where the consequences of inaccurate or unverifiable information are more immediately felt by consumers. According to the study, more than half of respondents - 57% - use AI tools to find local businesses at least once a month. When doing so, what matters to them is specific: 76% say it is important to see where the information comes from; 76% say seeing multiple reliable sources of information is important; and 73% say ratings and reviews from real customers are important.

Yelp's framing in the report reflects the company's commercial position as a platform built on user-generated reviews. According to the research document: "Local businesses are highly dynamic - chefs leave, menus change, ownership evolves, hours shift." An AI summary without dated sourcing or customer verification carries meaningful risk of being out of date or wrong in ways that directly affect consumer decisions.

The ability to take action is also a factor. According to the study, nearly two-thirds of respondents - 65% - say being able to take action, such as making a reservation or booking a service, on trusted platforms is important when using AI tools for local discovery. This finding aligns with Yelp's own product strategy, which has focused on integrating booking and quote-request functionality directly into its AI-powered features. In January 2026, Yelp acquired Hatch for $270 million to strengthen its AI communication tools for local service businesses - a move that situates this research within a broader strategic context.

Generation Z and the paradox of high adoption, high demand

The generational data in the study complicates any simple narrative that younger users are more permissive toward AI. According to the research, 84% of Gen Z has used an AI search platform in the past six months - the highest rate of any generation. 60% are using these tools to find local businesses at least once a month.

But Gen Z is also the most demanding cohort when it comes to source transparency. 72% say AI platforms should provide more proof of trusted sources, compared with 63% of Millennials, 59% of Gen X, and 69% of Baby Boomers. 61% of Gen Z respondents say that seeing quotes or excerpts from verified user reviews would increase their trust, compared with 54% of Millennials, 47% of Gen X, and 46% of Baby Boomers.

This pattern - highest adoption, highest demand for evidence - is notable. It suggests that familiarity with AI tools has not produced complacency. On the contrary, the generation most embedded in these platforms is also the one applying the most scrutiny. A March 2026 Shift Browser survey covering 1,448 U.S. adults found a parallel dynamic: 32% of consumers use AI daily, yet only 16% trust AI answer engines "a great deal" - a figure almost identical to the 15% who trust AI search "a lot" in Yelp's data.

Design preference tests add behavioural evidence

Beyond the survey, Yelp commissioned two separate design preference tests through Lyssna on April 2, 2026, each involving 200 U.S. adult respondents aged 18 and over. One test simulated a restaurant search; the other simulated an HVAC service search. In each test, participants were shown two versions of an AI response.

Version one reflected a typical AI response: a plain text summary, no citations, and no ability to take further action. Version two included ratings, photos, relevant review snippets, citations and links to trusted sources, and either a reservation widget or a quote request widget.

The results were decisive. According to the report, 80% of restaurant search respondents preferred the version featuring authentic human content and trusted sources. In the HVAC test, 77% chose the same. 81% of HVAC respondents said seeing links to well-known platforms made them feel more confident about the AI's recommendations; in the restaurant group, 74% said the same. These are not marginal preferences - they are clear majorities across both test scenarios.
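With 200 respondents per test, sampling uncertainty is larger than in the main survey, so it is worth checking that the reported preferences still clear 50% once it is accounted for. The sketch below computes a 95% normal-approximation interval for each reported share. It assumes each test behaves like a simple random sample, which may not hold for an online research panel; the helper `proportion_interval` and the dictionary keys are hypothetical names used only for illustration.

```python
import math


def proportion_interval(p_hat: float, n: int, z: float = 1.96) -> tuple[float, float]:
    """Normal-approximation confidence interval for an observed proportion."""
    half_width = z * math.sqrt(p_hat * (1 - p_hat) / n)
    # Clamp to the valid range for a proportion.
    return max(0.0, p_hat - half_width), min(1.0, p_hat + half_width)


# Shares preferring the enriched AI response, as reported for each test (n = 200).
reported_shares = {"restaurant": 0.80, "hvac": 0.77}

intervals = {
    name: proportion_interval(share, 200)
    for name, share in reported_shares.items()
}

for name, (low, high) in intervals.items():
    print(f"{name}: {low:.1%} to {high:.1%}")
```

Under these assumptions both lower bounds stay well above 50%, which is consistent with describing the results as clear majorities rather than marginal preferences.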

The broader pattern: trust deficits across AI research

The Yelp findings land within a well-documented pattern. Research published in February 2026 found that 47.1% of marketers encounter AI inaccuracies several times each week, and that more than a third of marketing professionals admitted that hallucinated or incorrect AI-generated content has already been published publicly. A study by Raptive, published in July 2025, found that content perceived as AI-generated reduces reader trust by nearly 50% and decreases purchase consideration by 14%.

The Equativ consumer survey from October 2025 showed that while 67% of consumers use AI more than once per week, 60% still cross-check results with other sources - a figure that tracks closely with Yelp's 63% double-checking finding. These numbers have remained remarkably consistent across independent research conducted by different firms over several months.

For the marketing community, the implications are layered. Platforms that generate AI-powered discovery - whether in search, local listings, or e-commerce - face a consumer base that has adopted the technology but has not ceded judgement to it. The State of Digital Trust 2025 report documented in July 2025 that only 23% of consumers fully understand how companies use their personal data, while technology services companies achieved only 33% consumer trust in data handling - a structural gap that shapes how AI-generated recommendations are received.

The link-out argument

Yelp's research document closes with an argument that has direct implications for digital publishing and advertising economics. According to the report: "More generous, transparent linking is a rising tide that lifts all boats: consumers get the ability to do their own research and make decisions with confidence, content creators and publishers receive the traffic that sustains a healthy content ecosystem, and AI platforms themselves benefit from stronger relationships with the quality sources that make their answers worth trusting in the first place."

This framing positions outbound linking not as a concession by AI platforms, but as a mechanism that improves the quality and credibility of AI answers. The argument has a financial dimension too. Yelp has reported strong demand for its data licensing products from AI search platforms seeking access to its local business content - a dynamic documented in Yelp's Q3 2025 earnings, where other revenue, which includes data licensing, grew 17% year-over-year to $19 million. Whether AI platforms adopt more generous linking practices voluntarily or under pressure from consumer expectations and potential regulation remains an open question.

What the Yelp and Morning Consult data establishes is that the consumer preference is unambiguous. Trust follows transparency. And at present, the gap between how AI search platforms present information and what users say they need to trust it remains wide.

Timeline

- February 26-28, 2026 - Morning Consult surveys 2,202 U.S. adults on behalf of Yelp on AI-powered search usage and trust

- April 2, 2026 - Lyssna conducts two design preference tests among 200 U.S. adults each, comparing AI search results with and without citations, photos, and action widgets

- April 10, 2026 - Kylie Banks, Senior Communications Manager at Yelp, reaches out to PPC Land with embargo offer for the research

- April 14, 2026 - Yelp publishes the research publicly at 7 am ET, with an accompanying blog post by Chief Product Officer Craig Saldanha

- For prior context on Yelp's AI strategy, see Yelp expands AI features with assistant, menu vision, and call answering tools (October 2025)

- For prior context on the AI trust gap in marketing, see Nearly half of marketers encounter AI errors weekly as study exposes trust gap (February 2026)

- For prior context on Yelp's $270 million Hatch acquisition, see Yelp bets $270M on AI with Hatch acquisition to automate services leads (January 2026)

- For the Shift Browser consumer AI trust survey showing 16% deep trust, see 81% of consumers fear AI data access, but daily use keeps climbing (March 2026)

Summary

Who: Yelp, Inc. commissioned the research in partnership with Morning Consult, with findings presented by Craig Saldanha, Yelp's Chief Product Officer. The survey covered 2,202 U.S. adults aged 18 and over.

What: A study measuring consumer trust in AI-powered search platforms, finding that only 15% of users trust AI search results "a lot," that 63% double-check AI outputs against other sources, and that 51% describe AI search as a "walled garden." Separate design preference tests showed 77-80% of participants prefer AI search results that include citations, photos, and action widgets over plain text summaries.

When: The Morning Consult survey was conducted from February 26 to 28, 2026. The design preference tests were conducted on April 2, 2026. The research was published on April 14, 2026.

Where: The survey was conducted online among U.S. adults, weighted for national representativeness across gender, age, race and ethnicity, education, and region. The design preference tests were also conducted among U.S. adults through the Lyssna research platform.

Why: The research was commissioned as AI-powered search platforms have expanded rapidly while consumer trust has not kept pace. Yelp's position as a platform built on verified user reviews and local business data gives it a direct commercial interest in arguing that AI search should surface and link to authenticated human-generated content rather than synthesise it into opaque summaries.