Spain's data protection authority today imposed a total fine of €950,000 on Yoti Ltd, the British digital identity and age verification company, after finding three distinct violations of the General Data Protection Regulation in the operation of its Digital ID application. The resolution, published under file reference EXP202317887, was signed by Lorenzo Cotino Hueso, president of the Agencia Española de Protección de Datos (AEPD), and represents one of the most detailed public rulings yet on the obligations of age verification providers operating in Spain.

The three penalties break down as follows: €500,000 for the unlawful processing of biometric data under Article 9 of the GDPR; €200,000 for obtaining invalid consent for research and development processing in violation of Article 7; and €250,000 for excessive data retention contrary to the storage limitation principle in Article 5.1(e). Alongside the financial penalties, the AEPD has ordered Yoti to implement corrective measures within six months of the resolution becoming final.

Yoti Ltd, registered in the United Kingdom with tax identification number 08998951, operates a range of age verification services used by platform operators across multiple markets. According to the resolution, all of the company's age verification methods - facial age estimation, document-based verification, credit card checks, mobile number matching, and its Digital ID app - are available for use in Spain. The company's most recent published revenue figures, cited in the resolution as of March 2025, stand at €15,029,907, a figure the authority used as the reference point when calibrating proportionate and dissuasive penalties.
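The relationship between the three penalties and the revenue figure can be checked with simple arithmetic. The sketch below uses only the amounts reported above; the share-of-revenue calculation is an illustration and is not a figure stated in the resolution.

```python
# Amounts as reported in the article. The share-of-revenue figure is our own
# illustrative calculation, not a number taken from the resolution.
fines_eur = {
    "Article 9 (biometric data)": 500_000,
    "Article 7 (invalid consent)": 200_000,
    "Article 5.1(e) (retention)": 250_000,
}
revenue_eur = 15_029_907

total_fine = sum(fines_eur.values())
share_of_revenue = total_fine / revenue_eur

print(f"Total fine: EUR {total_fine:,}")                        # Total fine: EUR 950,000
print(f"Share of reported revenue: {share_of_revenue:.2%}")     # 6.32%
```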

How Yoti's technology works

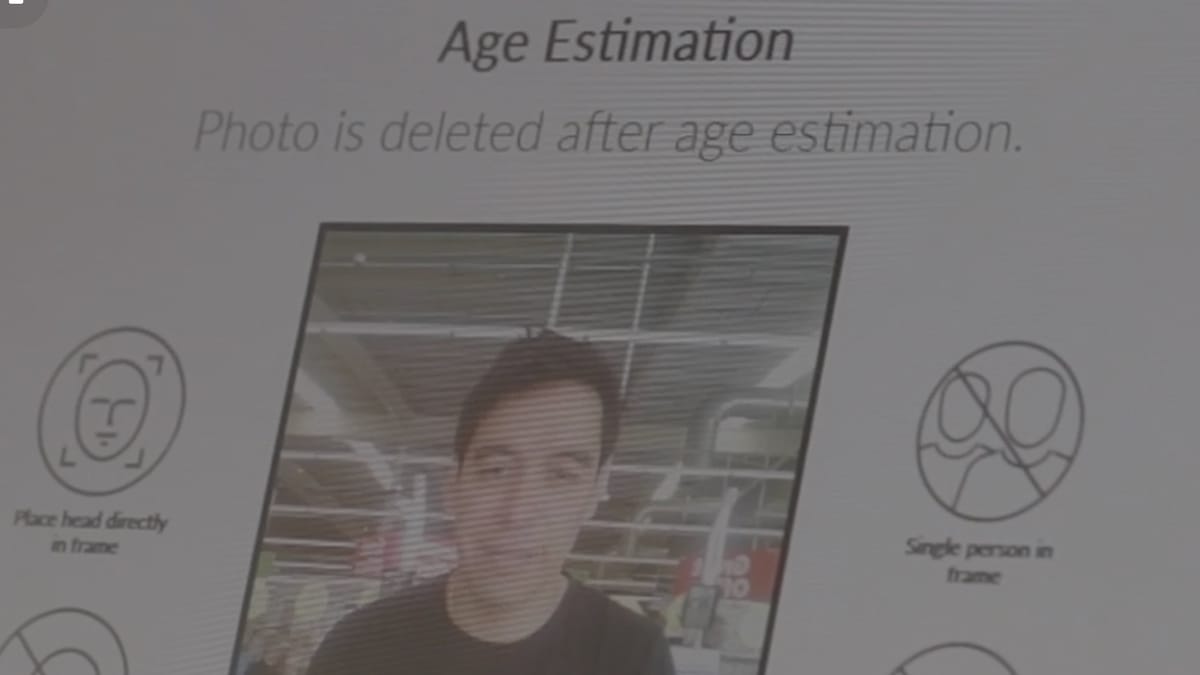

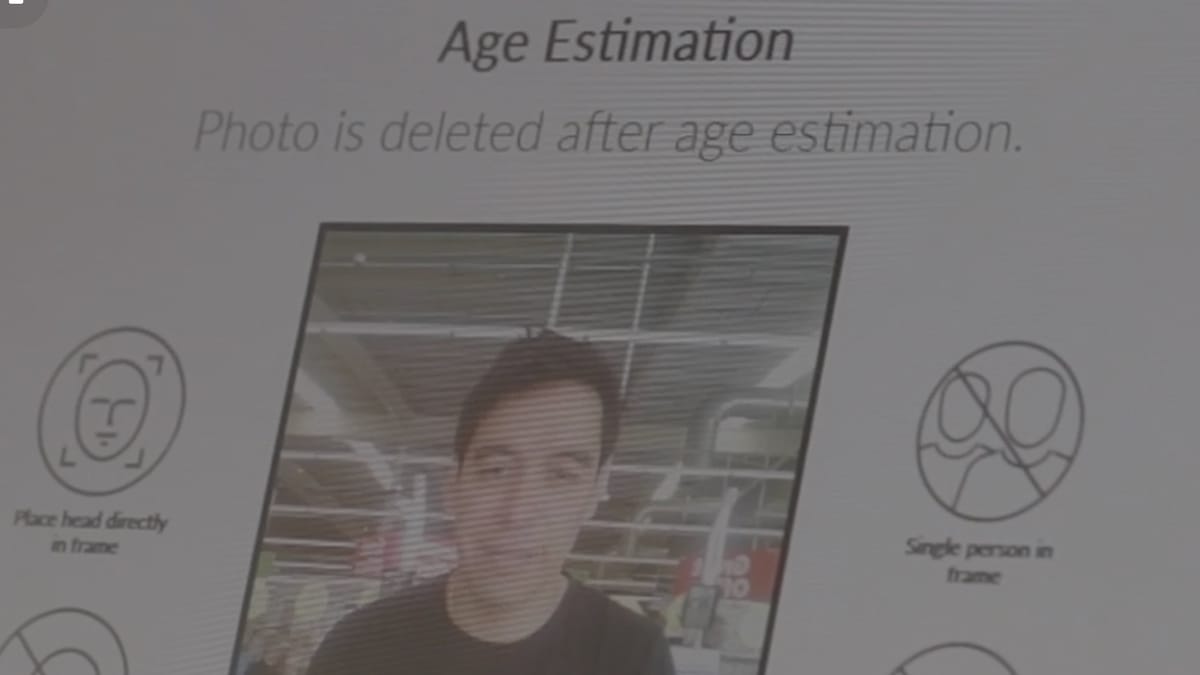

The Digital ID app is the service at the centre of the enforcement action. According to Yoti's own documentation submitted during the investigation, the app allows users to create a verified identity account by uploading a government-issued identity document and taking a selfie. The technology uses deep neural networks to process the facial image: the image is broken into pixels, each treated as a numerical value, and fed through a network of mathematical nodes arranged in layers, loosely analogous to the structure of the human brain. A typical run through the network produces an estimated age in approximately 1 to 1.5 seconds.
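The "pixels in, number out" description can be made concrete with a toy example. This is a minimal sketch of a generic feed-forward pass, not Yoti's model: the 8x8 image size, the layer widths, and the random weights are all assumptions chosen for illustration, and the untrained output carries no meaning as an age.

```python
import random

def relu(values):
    # Nodes pass positive signals through and clamp negative ones to zero.
    return [max(0.0, v) for v in values]

def dense(inputs, weights, biases):
    # One layer: every node computes a weighted sum of all inputs plus a bias.
    return [sum(w * x for w, x in zip(row, inputs)) + b
            for row, b in zip(weights, biases)]

def random_layer(rng, n_in, n_out):
    # Hypothetical untrained weights; a real model learns these from data.
    weights = [[rng.uniform(-0.1, 0.1) for _ in range(n_in)] for _ in range(n_out)]
    return weights, [0.0] * n_out

rng = random.Random(0)

# Hypothetical 8x8 greyscale image: each pixel becomes a number between 0 and 1.
pixels = [rng.randint(0, 255) / 255 for _ in range(8 * 8)]

hidden_w, hidden_b = random_layer(rng, 64, 16)
out_w, out_b = random_layer(rng, 16, 1)

hidden = relu(dense(pixels, hidden_w, hidden_b))
estimate = dense(hidden, out_w, out_b)[0]
print(f"Untrained network output: {estimate:.3f}")
```

A production system would differ in scale and architecture, but the basic flow of numbers through successive layers is the same idea the documentation describes.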

Yoti describes the age verification services it offers to business clients as comprising eight distinct methods. According to the company's own data protection impact assessment (DPIA), these include facial age estimation, verification via Digital ID app, document identification, credit card verification, mobile number verification, database checking, electronic identity (eID) services used in Switzerland, Denmark and Finland, and a US mobile driving licence option. When these services are provided to clients on a software-as-a-service basis, those clients act as data controllers and Yoti acts as processor. Within the Digital ID app specifically, however, Yoti is the controller.

The facial age estimation product operates on a machine learning model trained using 12 age range categories (0-1, 2-3, 4-6, 7-9, 10-12, 13-15, 16-17, 18-24, 25-29, 30-39, 40-49, 50-60), four gender groupings, and three skin tone groups based on the Fitzpatrick scale - giving a total of 144 demographic combinations. According to Yoti's white paper on the technology, referenced in the resolution and updated to September 2, 2024, the model was accurate within 1.28 years and performed effectively across gender and skin tone. To build this model, particularly for children aged 6 to 12, the company collected training images from an online portal requiring adult consent, and separately through a South African family welfare organisation, Be In Touch, via its relationships with schools. The UK Information Commissioner's Office, which participated in Yoti's regulatory sandbox, advised against the South African collection method due to data protection implications.
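The 144 figure follows directly from multiplying the three groupings. The short sketch below enumerates them; the age ranges are those listed above, while the gender and skin tone labels are placeholders, since the article gives only the number of groups.

```python
from itertools import product

# Age ranges as listed in the article.
age_ranges = ["0-1", "2-3", "4-6", "7-9", "10-12", "13-15",
              "16-17", "18-24", "25-29", "30-39", "40-49", "50-60"]

# Placeholder labels: the article states only how many groups exist.
gender_groups = [f"gender_group_{i}" for i in range(1, 5)]
skin_tone_groups = [f"skin_tone_group_{i}" for i in range(1, 4)]

combinations = list(product(age_ranges, gender_groups, skin_tone_groups))
print(len(combinations))  # 144
```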

The app imposes age-based controls by jurisdiction. According to Yoti, "the Digital ID app cannot be used by persons under the digital age of consent, i.e. 13 years in the United Kingdom and 14 years in Spain." On the second screen of the account creation process, the app detects the user's location. In Spain, it presents two options: "I am 14 or over" or "I am 13 or under," and only allows the process to continue if the first option is selected. No mechanism exists to verify whether the user's self-declaration is accurate.
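The gate described here amounts to a lookup plus a self-declaration. The following is a minimal sketch of that logic, assuming ISO country codes and hypothetical function names; the wording of the UK options is inferred by analogy with the Spanish screen and is not quoted from the resolution.

```python
# Digital age of consent per jurisdiction, as stated by Yoti in the article.
DIGITAL_AGE_OF_CONSENT = {"GB": 13, "ES": 14}

def declaration_options(country_code):
    """Return the two self-declaration options shown for a jurisdiction."""
    minimum = DIGITAL_AGE_OF_CONSENT[country_code]
    return (f"I am {minimum} or over", f"I am {minimum - 1} or under")

def may_continue(country_code, selected_option):
    """Allow account creation only if the 'or over' option was selected.

    Nothing here checks whether the declaration is true, which is the gap
    the article describes.
    """
    over_option, _ = declaration_options(country_code)
    return selected_option == over_option

print(declaration_options("ES"))                 # ('I am 14 or over', 'I am 13 or under')
print(may_continue("ES", "I am 14 or over"))     # True
print(may_continue("ES", "I am 13 or under"))    # False
```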

For age token reuse, Yoti created a cookie-based system. Age tokens are browser cookies with a lifespan of 30 days. Users who verify their age once can reuse that result across participating platforms within the cookie's validity period. A separate "age account" feature allows users to store tokens in a username-password account accessible across devices.
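A 30-day token lifespan reduces to a simple validity window. The sketch below is an assumption about how such a check might look, not Yoti's implementation: the function name and the choice to treat the token as expired exactly at the 30-day mark are ours.

```python
from datetime import datetime, timedelta, timezone

TOKEN_LIFESPAN = timedelta(days=30)  # lifespan stated in the article

def token_is_valid(issued_at, now):
    """Return True while `now` falls inside the token's 30-day window."""
    return issued_at <= now < issued_at + TOKEN_LIFESPAN

issued = datetime(2026, 1, 1, tzinfo=timezone.utc)
print(token_is_valid(issued, issued + timedelta(days=29)))  # True
print(token_is_valid(issued, issued + timedelta(days=30)))  # False
```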

First violation: biometric special category data

The AEPD's primary finding concerns the processing of biometric data without a valid legal basis under Article 9 of the GDPR. Article 9 prohibits the processing of special category data - which includes biometric data used for unique identification - unless one of the specific exemptions in Article 9.2 applies.

Yoti's position throughout the investigation was that the facial scan it performs does not constitute biometric data of a special category because, in its view, the purpose is not to uniquely identify users but to authenticate them. The authority rejected this argument. According to the resolution, data constitutes biometric special category data under Article 4.14 of the GDPR when: it relates to the physical, physiological, or behavioural characteristics of a natural person; it is intended to confirm unique identification; and it has been subjected to specific technical processing to generate, store, exploit, and destroy biometric templates derived from raw samples.

The AEPD found all three criteria satisfied. The facial scan produces a biometric template stored while the account is active. When users modify their PIN or recover their account, the app captures a new facial scan and compares it against the stored template - a 1:1 matching operation. According to the authority, "despite repeatedly asserting - both during account creation and in the privacy policy - that the purpose of processing the biometric facial pattern is to guarantee user identification, Yoti does not consider itself to be processing special category personal data," a position the authority characterised as reflecting "particular negligence."
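A 1:1 match compares one fresh sample against one stored template for the same account, rather than searching a database of many people. The resolution does not describe Yoti's matching algorithm, so the sketch below substitutes a common generic technique, cosine similarity between feature vectors with a threshold; the vectors, the 0.9 threshold, and the function names are all hypothetical.

```python
import math

def cosine_similarity(a, b):
    """Similarity of two feature vectors, from -1 (opposite) to 1 (identical direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm

def verify_one_to_one(stored_template, new_scan, threshold=0.9):
    """1:1 verification: does the new scan match this account's stored template?"""
    return cosine_similarity(stored_template, new_scan) >= threshold

stored = [0.20, 0.80, 0.10]      # hypothetical template kept while the account is active
same_user = [0.21, 0.79, 0.12]   # hypothetical new scan during a PIN change
other_user = [0.90, 0.10, 0.30]  # hypothetical scan from someone else

print(verify_one_to_one(stored, same_user))   # True
print(verify_one_to_one(stored, other_user))  # False
```

Whatever the actual method, the operation requires a persistent template to compare against, which is the feature the AEPD treated as decisive.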

The fine for this infringement was set at €500,000. Aggravating factors included the involvement of minors - the authority noted that an age verification application would, by its nature, be used extensively by users under 18 - and the fact that data is processed on servers outside the European Union.

For transfers from the United Kingdom to India, where Yoti operates a Security Centre providing manual verification support, the company relies on EU standard contractual clauses with a UK addendum. According to the DPIA, this centre can access document images and selfies remotely on UK servers through what the company describes as "thin terminals," with no Yoti staff outside the Security Centre able to view this data. The AEPD noted that this international dimension further constrains users' practical control over their own data.

Second violation: pre-ticked consent boxes for R&D

The second violation concerns the mechanism Yoti uses to obtain consent for using users' biometric data in internal research and development. According to the AEPD's investigation, the app's settings screen displayed a pre-ticked checkbox through which users' biometric data would be used to train and improve Yoti's facial age estimation algorithms - unless users actively unchecked the box.

Yoti's own documentation confirms the arrangement. According to the company, "In the Digital ID app, the default value is that data can be used for R&D. Yoti has taken steps to make this clear to users. Users can opt out, preventing their data being used for R&D, by using the app settings."

This is precisely what the GDPR prohibits when dealing with special category data. Article 4.11 defines valid consent as "any freely given, specific, informed and unambiguous indication of the data subject's wishes by which he or she, by a statement or by a clear affirmative action, signifies agreement." Pre-ticked checkboxes do not constitute a clear affirmative action. They represent the inverse: processing that proceeds unless the user actively intervenes to stop it.

The European Data Protection Board's Guidelines 05/2020 on consent, issued on May 4, 2020, are explicit on this point. The AEPD cited these guidelines in its analysis, noting that the ability to revoke consent obtained by default does not remedy the underlying failure: "Yoti consciously notes that consent granted by default can be revoked, without taking into account that there should not be a subsequent revocation at the data subject's request, but rather that consent should be obtained in accordance with the safeguards and guarantees established by the GDPR."
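The difference between an opt-out default and an affirmative opt-in can be expressed in a few lines. This is a minimal sketch under our own assumptions: the `ConsentRecord` class, its field names, and the validity rule are hypothetical and are not drawn from the resolution or from any Yoti code.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass
class ConsentRecord:
    purpose: str
    granted: bool = False                     # default is no consent, never pre-ticked
    affirmative_action: Optional[str] = None  # what the user actually did, if anything

    def grant(self, action):
        # Consent is recorded only alongside the user action that produced it.
        self.granted = True
        self.affirmative_action = action

    def withdraw(self):
        self.granted = False
        self.affirmative_action = None

    def is_valid(self):
        # A ticked box with no recorded user action does not count.
        return self.granted and self.affirmative_action is not None

pre_ticked = ConsentRecord(purpose="r_and_d", granted=True)  # the opt-out pattern
opt_in = ConsentRecord(purpose="r_and_d")
opt_in.grant("user ticked the R&D checkbox")

print(pre_ticked.is_valid())  # False
print(opt_in.is_valid())      # True
```

The design choice worth noting is that withdrawal remains available in both cases; what changes is that processing never starts on the strength of a default.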

The types of data flowing through the R&D pipeline are substantial. According to the DPIA, the processing for internal research purposes includes facial images with a timestamp, month and year of birth from identity documents, gender information, document type information, country code, video recordings, audio recordings, device information, user behaviour data, health-related information, and - for the purpose of bias labelling - race and ethnic origin data estimated using the Fitzpatrick skin tone scale. Data from children aged 13 to 18 who are Digital ID app users is explicitly included.

The fine for this infringement was set at €200,000. The AEPD noted that the processing serves Yoti's commercial interests as a company seeking to improve its product, that the data is processed outside user control on servers outside the EU, and that the involvement of minors constitutes an aggravating factor.

Third violation: retention periods beyond stated purposes

The third infringement involves the retention of personal data - including special category biometric data - for periods that exceed what is necessary for the purposes for which it was collected.

According to Yoti's own DPIA, age tokens and Digital ID app data are retained for as long as the user maintains an active account, or for three years following the last activity. The biometric facial pattern used to verify the user is a real person is kept throughout this entire period. The AEPD found this disproportionate.

The authority's reasoning proceeds in two steps. First, the liveness check - confirming that the person in front of the camera is real - is completed at account creation. Once that check is done, the purpose is fulfilled. Retaining the biometric pattern indefinitely after that point cannot be justified by reference to a completed purpose. Second, the subsequent operations for which Yoti retains the biometric template (PIN modification, account recovery) may never occur during the account's lifetime. Storing special category data for a potential future use that might never materialise does not satisfy the storage limitation principle in Article 5.1(e).

A related problem was identified with geolocation data. According to Yoti, it collects users' country code, city, and state derived from their IP address, and retains this data for five years. The stated purpose for this collection is determining which jurisdiction's age restrictions apply to the user. The AEPD found this retention excessive: once the applicable rules have been identified at account creation, the IP-derived geolocation data serves no continuing purpose that could justify a five-year retention period.

A further retention issue was identified with fraudulent documents. According to Yoti's submission, when identity documents are identified as fraudulent, the company may retain the document image for up to two years in order to train its fraud detection software. The AEPD held that this purpose - improving software - is separate from and not derivable from the original purpose for which the document was collected, namely verifying a user's identity at account creation.

Video recordings from the liveness detection process present an additional dimension. The app's terms and conditions state that video recorded during liveness checks "will be permanently deleted within 30 days of the date it was recorded, unless we are required to retain it for regulatory reasons." The authority noted that once liveness is confirmed, the video's purpose is exhausted, meaning retention for any period beyond that moment exceeds the legitimate need.
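The retention periods Yoti reported can be laid out as a simple schedule. The sketch below models those stated periods only; the category names and helper functions are hypothetical, and a year is approximated as 365 days for simplicity.

```python
from datetime import date, timedelta

# Retention periods as reported in the article (one year approximated as 365 days).
RETENTION_PERIOD = {
    "account_data_after_last_activity": timedelta(days=3 * 365),
    "ip_geolocation": timedelta(days=5 * 365),
    "fraudulent_document_image": timedelta(days=2 * 365),
    "liveness_video": timedelta(days=30),
}

def deletion_due(category, reference_date):
    """Date by which data in this category should be gone under the stated period."""
    return reference_date + RETENTION_PERIOD[category]

def is_overdue(category, reference_date, today):
    return today > deletion_due(category, reference_date)

recorded = date(2024, 1, 1)
print(deletion_due("liveness_video", recorded))                   # 2024-01-31
print(is_overdue("liveness_video", recorded, date(2024, 2, 1)))   # True
```

The AEPD's reasoning points away from fixed clocks of this kind: on its view, deletion should be triggered when the purpose is fulfilled, for example when the liveness check completes, rather than when a preset period runs out.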

The fine for this infringement was set at €250,000, reflecting the broad scope of affected users (all account holders, not merely those who use specific services), the involvement of special category data, and the absence of justification from Yoti for the retention periods chosen.

Corrective measures and timeline

The AEPD has ordered three corrective measures, which Yoti must implement within six months of the resolution becoming final:

- Demonstrate that the processing of biometric special category data complies with the GDPR.

- Demonstrate that consent-based processing meets GDPR requirements in full.

- Demonstrate that personal data is retained only for the period strictly necessary to fulfil the processing purpose under Article 5.1(e).

The resolution becomes final once the one-month period for filing an optional appeal before the AEPD presidency has elapsed without action, or on the date the resolution is notified if no appeal is filed. Yoti may also appeal directly to the Contentious-Administrative Chamber of the National Court within two months of notification.

Failure to comply with the corrective measures would itself constitute a separate administrative infringement under Articles 83.5 and 83.6 of the GDPR, potentially triggering a further enforcement procedure.

Why this matters to the marketing and advertising community

The Yoti case sits within a broader pattern of Spanish enforcement actions that PPC Land has tracked extensively. The AEPD fined FC Barcelona €500,000 for an inadequate DPIA covering biometric facial and voice data from approximately 143,000 members. The authority separately imposed €1.8 million on AENA for insufficient data protection impact assessments before deploying facial recognition at Spanish airports. In January 2025, Informa D&B received a €1.8 million fine for processing over 1.6 million business owners' personal data without a valid legal basis.

For advertisers and ad technology companies, the Yoti resolution contains important signals. Pre-ticked consent boxes remain a recurring enforcement failure across European jurisdictions. The distinction between consent as a genuine affirmative act and consent as a default that users must actively reverse is not a formality - it is the mechanism through which regulators assess whether users have exercised real control over their data. Any platform, publisher, or verification service that defaults to broad data usage and then provides an opt-out is operating in territory that the GDPR explicitly prohibits for special category data.

The European Data Protection Board's Statement 1/2025, adopted on February 11, 2025, which established ten principles for GDPR-compliant age assurance systems, is directly relevant here. The EDPB specified that age verification processes must use the least intrusive method available, must not enable additional tracking or profiling of users, and must implement short retention periods. Yoti's approach - collecting data for R&D using pre-ticked boxes, retaining biometric patterns throughout account lifetime, and holding geolocation data for five years - runs counter to all three of these principles.

The question of what counts as biometric data continues to generate enforcement divergence across Europe. The AEPD's February 2026 warning to Tools for Humanity over planned iris-scanning operations signalled the authority's expansive view of what constitutes biometric data requiring Article 9 protection. Age estimation technology occupies contested ground: Yoti and the UK ICO previously agreed that facial age estimation does not constitute biometric processing when used purely for categorisation without unique identification. Spain's AEPD has now taken a different position when the same underlying facial templates are retained and used for 1:1 matching during account operations. The tension between these interpretations is unresolved at European level.

Age verification providers - and the advertising platforms that use them to gate content or qualify audiences - need to understand that deploying facial technology does not insulate a service from GDPR Article 9 obligations if the system generates persistent biometric templates. The Yoti resolution makes this explicit.

The broader regulatory trajectory is tightening. German data protection authorities in November 2025 called for ten specific GDPR amendments to strengthen protection for children's data, including restrictions on consent for profiling and advertising. The UK's ICO fined Reddit £14.47 million for the absence of age assurance mechanisms, and fined MediaLab £247,590 for similar failures at Imgur. Together, these cases describe a regulatory environment where age verification is now treated as an affirmative obligation - not merely a technical option - and where the privacy architecture of verification systems is subject to detailed scrutiny.

For marketing professionals specifically, the case reinforces that third-party verification services selected to establish audience eligibility or age gates carry their own compliance risk. If a platform deploys an age verification provider whose data practices violate the GDPR, the contractual allocation of controller and processor responsibilities will be examined closely by regulators - and, as the Yoti case shows, the provider's obligations as controller within its own app are separate from, and not absorbed by, its role as processor for client platforms.

Timeline

- July 2018 - Yoti creates the initial version of its Data Protection Impact Assessment for age verification services.

- October 2021 - Yoti publishes its Facial Age Estimation White Paper, claiming a model accurate within 1.28 years for children aged 6-12.

- January-December 2022 - Period covered by Yoti's ISAE3000 SOC 2 compliance audit cited in the case file.

- December 12, 2023 - The Director of the AEPD instructs the Subdirección General de Inspección de Datos (SGID) to open preliminary investigations into Yoti under file EXP202317887.

- February 2024 - Yoti's Statement of Applicability for certification is last updated.

- March 1, 2024 - Yoti's internal DPIA for R&D processing last updated.

- May 14, 2024 - The AEPD issues a second information request to Yoti covering methods used in Spain, manual verification safeguards, geolocation data, and data retention for onboarding documents.

- September 2, 2024 - Yoti updates its Facial Age Estimation White Paper.

- February 11, 2025 - The EDPB adopts Statement 1/2025 establishing ten GDPR-compliant principles for age assurance.

- March 2025 - Yoti's most recently published revenue figure of €15,029,907 cited by the AEPD.

- November 2025 - Spanish AEPD fines AENA €1.8 million for airport facial recognition failures.

- November 21, 2025 - German data protection authorities call for enhanced GDPR protections for children.

- January 27, 2026 - Austrian Data Protection Authority rules against Microsoft for tracking school children.

- February 2026 - AEPD issues formal warning to Tools for Humanity.

- February 24, 2026 - UK ICO fines Reddit £14.47 million for children's data failures including the absence of age assurance.

- March 4, 2026 - Spain's AEPD fines FC Barcelona €500,000 for a deficient biometric data DPIA.

- March 10, 2026 - AEPD publishes resolution fining Yoti Ltd a total of €950,000 across three GDPR violations, with six months to implement corrective measures.

Summary

Who: Yoti Ltd (NIF 08998951), a British digital identity and age verification company with annual revenue of €15,029,907, sanctioned by the Agencia Española de Protección de Datos (AEPD), Spain's national data protection authority.

What: A total fine of €950,000 broken down into three separate penalties - €500,000 for unlawful processing of biometric special category data under GDPR Article 9; €200,000 for obtaining invalid consent via pre-ticked checkboxes for R&D data use under Article 7; and €250,000 for retaining personal data, including biometric templates and geolocation data, beyond what the purposes of processing require, in violation of Article 5.1(e). The AEPD also ordered three corrective compliance measures to be implemented within six months.

When: The investigation was opened on December 12, 2023. A supplementary information request was made on May 14, 2024. The resolution was signed by AEPD president Lorenzo Cotino Hueso and published on March 10, 2026.

Where: The violations relate to Yoti's Digital ID app as operated in Spain. Data processing takes place on servers in the United Kingdom, with manual verification support from Yoti's Security Centre in India. Transfers from the UK to India are covered by EU standard contractual clauses with a UK addendum.

Why: The AEPD found that Yoti obtained facial biometric templates - which enable 1:1 identity matching during PIN changes and account recovery - without acknowledging that this constitutes special category data processing requiring an explicit legal basis. The authority found that pre-ticked consent for R&D data use fails the GDPR's requirement for a clear affirmative act, and that indefinite retention of biometric templates, five-year retention of geolocation data, and two-year retention of fraudulent document images all exceed the periods strictly necessary for the purposes originally stated.